Essence

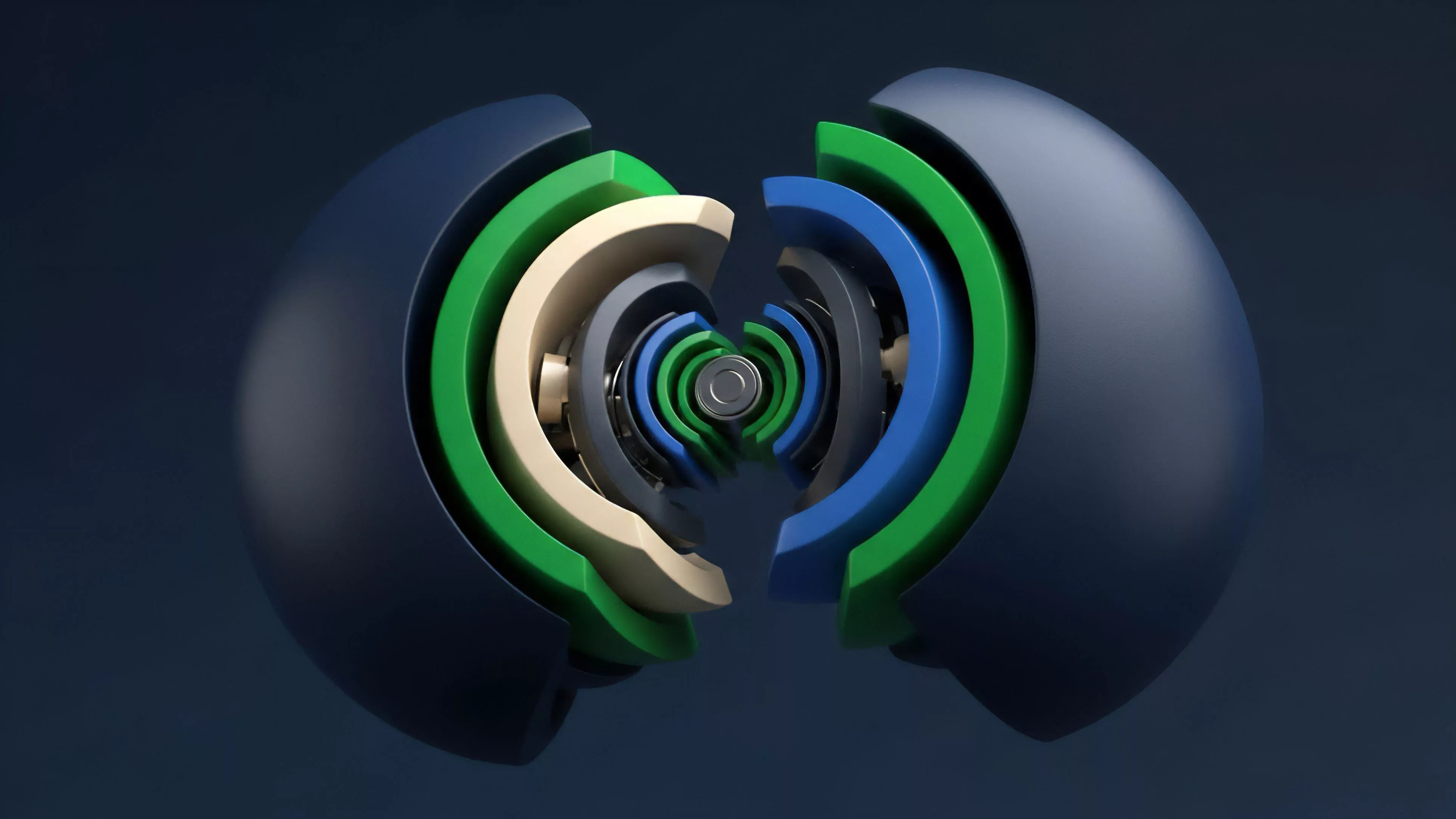

Consensus protocol updates are the mechanism for upgrading the rules that govern distributed ledger validation, security parameters, and state transition logic. These modifications dictate how a decentralized network reaches agreement on the canonical history of transactions. From a financial architecture perspective, they change the risk profile of assets residing on the protocol, which directly affects the viability and pricing of derivative instruments built upon that base layer.

Consensus protocol updates modify the core validation rules of a decentralized network, directly altering the systemic risk and operational parameters of all derivative instruments built upon that ledger.

The architectural integrity of a decentralized system relies on the ability to patch vulnerabilities or enhance throughput without compromising censorship resistance. When a protocol shifts from one consensus model to another, such as moving from proof-of-work to proof-of-stake, it redefines the capital cost of network security and the distribution of economic rewards. Market participants should read these changes as adjustments to the network's base yield (for example, the return earned for securing the chain) and to the volatility surface of its native asset.

Origin

These updates originate in the need to maintain software in environments that are designed to be immutable.

Early iterations of distributed ledgers relied on hard-coded parameters that proved insufficient for scaling or adapting to adversarial conditions. The evolution from monolithic, static designs to modular, upgradeable architectures reflects a maturation of cryptographic governance.

- Hard Fork mechanisms introduce rules that legacy nodes reject as invalid, forcing node operators to adopt the new rules or remain on a legacy chain; the chain diverges permanently if both groups keep producing blocks.

- Soft Fork implementations tighten the rule set while maintaining backward compatibility, so non-upgraded nodes continue to accept blocks produced under the new rules.

- Governance Signaling protocols provide a structured path for stakeholders to propose and ratify changes, moving away from off-chain social coordination toward on-chain programmatic execution.
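
The difference between the two fork types can be sketched as a subset check on validity rules. This is a minimal illustration, not any client's actual logic: the block representation, the size-based rules, and the function names are all hypothetical, and the check only covers a finite sample of blocks.

```python
from typing import Callable, Iterable

Block = dict  # hypothetical block representation: {"size": int}
Rule = Callable[[Block], bool]

def old_rule(block: Block) -> bool:
    # Legacy rule (assumed for illustration): blocks up to 2 units are valid.
    return block["size"] <= 2

def soft_fork_rule(block: Block) -> bool:
    # Tightened rule: every block valid here is also valid under old_rule.
    return block["size"] <= 1

def hard_fork_rule(block: Block) -> bool:
    # Loosened rule: accepts blocks that old_rule rejects.
    return block["size"] <= 4

def classify_upgrade(old: Rule, new: Rule, samples: Iterable[Block]) -> str:
    """Classify an upgrade over a finite sample of candidate blocks.

    If the new rule accepts any block the old rule rejects, legacy nodes
    would refuse that block, so the change behaves like a hard fork.
    """
    new_accepts_old_rejects = any(new(b) and not old(b) for b in samples)
    return "hard fork" if new_accepts_old_rejects else "soft fork"

samples = [{"size": s} for s in range(6)]
print(classify_upgrade(old_rule, soft_fork_rule, samples))  # soft fork
print(classify_upgrade(old_rule, hard_fork_rule, samples))  # hard fork
```

Real validity rules are far richer than a single size limit, so a sample-based check like this can only suggest, not prove, which category an upgrade falls into.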

These mechanisms were developed to manage the trade-offs among security, decentralization, and scalability. Each update cycle introduces a period of heightened uncertainty, as the market recalibrates its valuation of the protocol based on the perceived success or failure of the technical implementation.

Theory

The mechanics of these updates are best analyzed through the lens of protocol physics and game theory. Every update creates a temporary period of information asymmetry during which the probability of a chain split or security exploit is elevated.

Participants in derivative markets must price this event risk, often leading to spikes in implied volatility prior to the activation of the new protocol rules.
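
One common way to reason about this kind of event risk is to add a one-off variance term for the activation date to the ordinary diffusive variance. The sketch below assumes a constant baseline volatility and a single event inside the option's life; the function name and the input values are illustrative, not calibrated to any market.

```python
import math

def implied_vol_with_event(base_vol: float, event_move_std: float,
                           days_to_expiry: float) -> float:
    """Annualized volatility matching diffusive variance plus one event jump.

    base_vol:        annualized volatility outside the event (assumed constant)
    event_move_std:  standard deviation of the one-off log return at activation
    days_to_expiry:  calendar days to expiry (the event is assumed to fall inside)
    """
    t = days_to_expiry / 365.0
    total_variance = base_vol ** 2 * t + event_move_std ** 2
    return math.sqrt(total_variance / t)

# The same event adds more annualized volatility to shorter-dated options.
for days in (30, 7, 1):
    print(days, round(implied_vol_with_event(0.60, 0.05, days), 4))
```

Because the event variance is fixed while the diffusive variance shrinks with time to expiry, the event's share of total variance grows as activation approaches, which is consistent with implied volatility rising ahead of the update.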

Updating consensus protocols necessitates a precise calibration of economic incentives, as any change in validation logic alters the expected return on capital and the probability of systemic failure.

Consider the impact of a block time reduction. While this increases transaction throughput, it simultaneously shortens the window for propagation, potentially increasing the orphan rate and centralization pressure. This trade-off is a classic optimization problem within distributed systems.
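
The trade-off can be made concrete with a simple approximation. Assuming blocks arrive as a Poisson process and that a new block takes a fixed time to reach the rest of the network, the chance that a competing block appears during propagation is roughly 1 - exp(-delay / block_time). The delay value and block times below are illustrative assumptions, not measurements.

```python
import math

def stale_rate(propagation_delay_s: float, block_time_s: float) -> float:
    """Approximate probability that a competing block is found while a
    new block is still propagating, assuming Poisson block arrivals."""
    return 1.0 - math.exp(-propagation_delay_s / block_time_s)

# Hold the propagation delay fixed and shorten the block time.
delay = 2.0  # seconds, assumed
for block_time in (600.0, 60.0, 12.0):
    print(block_time, round(stale_rate(delay, block_time), 4))
```

Under this model, shortening the block time while propagation delay stays constant raises the stale rate, which favors well-connected block producers and is one source of the centralization pressure described above.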

| Update Mechanism | Economic Impact | Security Trade-off |
| --- | --- | --- |
| Parameter Adjustment | Minor fee variation | Negligible |
| Consensus Algorithm Change | Major capital re-allocation | High transition risk |
| State Logic Expansion | New derivative primitive support | Smart contract complexity |

The strategic interaction between validators, developers, and liquidity providers creates a complex feedback loop. If an update threatens the profitability of incumbent validators, the risk of a contested fork increases, leading to a bifurcated asset landscape. The market must anticipate these scenarios, using options to hedge against the potential for sudden liquidity fragmentation.

Approach

Current methodologies emphasize the use of shadow networks and testnets to simulate the economic consequences of protocol changes before mainnet deployment.

Market makers utilize these environments to stress-test their pricing models, ensuring that delta-neutral strategies remain robust even under conditions of high network congestion or latency. The focus has shifted toward formal verification and automated auditing of the new protocol code. This reduces the surface area for exploits, which is a significant concern for large-scale derivative positions that cannot easily exit during periods of extreme market stress.

- Time-weighted governance ensures that long-term stakeholders have greater influence over protocol changes, discouraging short-term manipulation.

- Circuit breakers at the protocol level protect against automated liquidations during periods of unexpected network instability.

- Validator slashing parameters are often adjusted in updates to maintain alignment with the new security requirements of the network.
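
As an illustration of the first item, time-weighted governance can be sketched as a voting weight that grows with how long a stake has been held. The linear schedule, the one-year cap, and the 4x maximum multiplier are hypothetical parameters chosen for the example, not values from any specific protocol.

```python
from dataclasses import dataclass

@dataclass
class Stake:
    amount: float      # tokens committed
    days_locked: int   # how long the stake has been held

def voting_weight(stake: Stake, max_days: int = 365,
                  max_multiplier: float = 4.0) -> float:
    """Weight grows linearly with holding time, capped at max_multiplier."""
    age = min(stake.days_locked, max_days) / max_days
    return stake.amount * (1.0 + (max_multiplier - 1.0) * age)

long_term = Stake(amount=100.0, days_locked=365)
short_term = Stake(amount=300.0, days_locked=0)
print(voting_weight(long_term))   # 400.0
print(voting_weight(short_term))  # 300.0
```

In this sketch a smaller long-held stake outweighs a larger stake acquired just before the vote, which is the intended deterrent against short-term manipulation. The cap keeps very old stakes from accumulating unbounded influence.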

My assessment of the current state reveals a dangerous reliance on optimistic assumptions regarding developer consensus. We often ignore the reality that technical upgrades are social events as much as they are engineering tasks, and the divergence in participant goals can lead to unintended market outcomes.

Evolution

The trajectory of these updates has moved from ad-hoc, developer-led changes to sophisticated, multi-stage governance processes. Early systems lacked the modularity to handle significant changes without disrupting the entire user base.

Modern protocols now employ modular architectures and, where logic lives in contracts, proxy patterns and upgradeable smart contracts to iterate with less friction.

The evolution of consensus governance demonstrates a shift toward programmatic accountability, where the risk of human error is mitigated by transparent, time-locked execution paths.
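
A time-locked execution path can be sketched as a queue that refuses to execute an approved change until a minimum delay has passed. The class and method names here are hypothetical, and timestamps are passed in explicitly to keep the example deterministic.

```python
from dataclasses import dataclass, field

@dataclass
class Timelock:
    delay: int                                   # minimum seconds between queue and execute
    queued: dict = field(default_factory=dict)   # proposal id -> earliest execution time

    def queue(self, proposal_id: str, now: int) -> int:
        """Record an approved proposal and return its earliest execution time."""
        eta = now + self.delay
        self.queued[proposal_id] = eta
        return eta

    def execute(self, proposal_id: str, now: int) -> bool:
        """Execute only if the proposal is queued and its delay has elapsed."""
        eta = self.queued.get(proposal_id)
        if eta is None or now < eta:
            return False
        del self.queued[proposal_id]
        return True

lock = Timelock(delay=172_800)  # two days, an assumed value
eta = lock.queue("raise-block-gas-limit", now=0)
print(lock.execute("raise-block-gas-limit", now=eta - 1))  # False
print(lock.execute("raise-block-gas-limit", now=eta))      # True
```

The delay gives market participants a publicly known window to review the change and adjust positions before it takes effect.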

I find it fascinating how the industry has moved from fearing the hard fork to embracing it as a legitimate mechanism for resolving ideological or technical disputes. The divergence of chains, once seen as a catastrophe, is now recognized as a natural outcome of competitive decentralization. This evolution has forced the derivatives market to develop sophisticated mechanisms for handling asset splits, ensuring that option holders are protected regardless of the final state of the network.
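
One possible convention for protecting option holders through a split is to redefine the deliverable as a basket containing one unit of each resulting asset. The sketch below assumes that convention for a cash-settled call; actual venues may handle splits differently, and the function name and prices are hypothetical.

```python
def call_payoff_after_split(strike: float, legacy_price: float,
                            fork_price: float) -> float:
    """Payoff of a call whose deliverable became one unit of each
    post-split asset (an assumed adjustment convention)."""
    basket = legacy_price + fork_price
    return max(basket - strike, 0.0)

# The legacy asset alone is below the strike, but the basket is not.
print(call_payoff_after_split(strike=100.0, legacy_price=90.0, fork_price=20.0))  # 10.0
```

Under this convention the holder's payoff depends on the combined value of both chains, so it does not hinge on which chain the market ultimately favors.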

The complexity of these systems often hides the fact that we are building on shifting sand, where the next update could render previous risk models obsolete.

Horizon

The next phase involves the implementation of zero-knowledge proofs to verify consensus updates without exposing the underlying state data. This will allow for highly complex upgrades that were previously impossible due to computational constraints. We are moving toward a future where protocol changes are not just efficient but also cryptographically private, fundamentally changing how markets price the risk of these transitions.

| Future Capability | Systemic Benefit | Market Impact |
| --- | --- | --- |
| ZK-Rollup Integration | Scalable validation | Lower derivative transaction costs |
| Autonomous Governance | Reduced social friction | Predictable update cycles |
| Cross-Chain Consensus | Unified liquidity | Globalized derivative pricing |

The ultimate goal is the creation of self-healing protocols that can adjust their own parameters in response to real-time market data. This represents the pinnacle of automated finance, where the protocol itself acts as the primary risk manager, reducing the need for external intervention. The challenge remains in ensuring that these autonomous systems do not develop emergent behaviors that could lead to catastrophic failure. What is the threshold at which a protocol’s autonomous adaptation ceases to be a feature and becomes an existential risk to the integrity of the underlying asset?
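
One way to frame that threshold is to bound how far an autonomous rule may move a parameter in a single step and over its lifetime. The sketch below is a hypothetical controller, not a description of any deployed protocol: the step limit, floor, and ceiling are assumed values, and `observed` stands in for whatever market signal the protocol reads.

```python
def adjust_parameter(current: float, observed: float, target: float,
                     max_step: float = 0.125, floor: float = 1.0,
                     ceiling: float = 1000.0) -> float:
    """Move a parameter in proportion to the signal's deviation from target,
    limited to max_step (fractional) per update and clamped to [floor, ceiling]."""
    deviation = (observed - target) / target
    step = max(-max_step, min(max_step, deviation * max_step))
    return max(floor, min(ceiling, current * (1.0 + step)))

print(adjust_parameter(100.0, observed=200.0, target=100.0))     # 112.5
print(adjust_parameter(100.0, observed=10_000.0, target=100.0))  # 112.5
```

The second call shows the step limit at work: an extreme reading moves the parameter no further than a moderate one. Hard bounds of this kind trade responsiveness for predictability, which is one lever for keeping adaptation from compounding into instability.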