Essence

Consensus Protocol Performance denotes the quantitative throughput, latency, and reliability metrics governing the validation of state transitions within a decentralized ledger. It acts as the primary constraint on the velocity of capital within automated financial environments. When validators reach agreement on the ordering and validity of transactions, they determine the effective settlement finality of the entire system.

The speed and integrity of consensus validation directly dictate the capital efficiency of all derivative instruments settled on-chain.

At the architectural level, Consensus Protocol Performance encompasses the interplay between block production time, network propagation delays, and the computational cost of cryptographic signature verification. These variables are not static; they shift under load, creating fluctuating conditions for arbitrageurs and liquidity providers. Systems achieving higher performance metrics minimize the duration of market exposure to stale pricing data, thereby reducing the probability of toxic flow execution against automated market makers.

Origin

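The inception of Consensus Protocol Performance analysis traces back to the fundamental trade-offs identified in early distributed systems research, specifically the constraints imposed by the CAP theorem, which states that a distributed system cannot simultaneously guarantee consistency, availability, and partition tolerance.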

Initial iterations of blockchain architecture prioritized decentralization and censorship resistance, often at the expense of transaction throughput. The evolution toward high-frequency financial applications necessitated a shift in focus toward optimizing the consensus mechanism to handle higher volumes of state changes per second.

- Proof of Work architectures established the foundational security model but introduced inherent limitations in block time and throughput scalability.

- Proof of Stake variants replaced computational work with staked capital, and some designs added delegated validation or sharding, aiming to decouple security from raw computational energy consumption.

- Byzantine fault tolerant (BFT) consensus models emerged as the standard for high-performance financial chains, focusing on deterministic finality.

This trajectory reflects a clear transition from viewing blockchains as purely immutable ledgers to treating them as high-throughput execution engines for complex financial contracts.

Theory

The mechanics of Consensus Protocol Performance rest on the rigorous balancing of latency, throughput, and safety. Quantitative models evaluating these protocols utilize stochastic processes to simulate network behavior under varying degrees of adversarial interference. The efficiency of a protocol is measured by its ability to maintain consistent block production under peak load while minimizing the variance in confirmation times.

Stochastic variance in block arrival times creates systemic risks for margin engines that rely on predictable settlement windows.
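To make the effect of arrival-time variance concrete, the sketch below simulates how long it takes to accumulate a fixed number of confirmations and how often that exceeds a settlement window. It assumes exponentially distributed block intervals (a Poisson arrival process, which is a reasonable model for Proof of Work but not for slot-based chains). The function names, the 12-second mean interval, and the 120-second window are illustrative assumptions, not values from any specific protocol.

```python
import random
import statistics


def simulate_confirmation_times(mean_block_interval_s, confirmations, trials, seed=0):
    """Sample the time needed to accumulate `confirmations` blocks.

    Assumption: block intervals are independent and exponentially
    distributed, i.e. blocks arrive as a Poisson process.
    """
    rng = random.Random(seed)
    rate = 1.0 / mean_block_interval_s
    return [
        sum(rng.expovariate(rate) for _ in range(confirmations))
        for _ in range(trials)
    ]


def summarize(samples, settlement_window_s):
    """Report the spread of confirmation times and the share that miss the window."""
    ordered = sorted(samples)
    p99 = ordered[int(0.99 * (len(ordered) - 1))]
    late = sum(1 for s in samples if s > settlement_window_s) / len(samples)
    return {
        "mean_s": statistics.fmean(samples),
        "stdev_s": statistics.stdev(samples),
        "p99_s": p99,
        "fraction_late": late,
    }


# Hypothetical parameters: 12 s mean interval, 6 confirmations, 120 s window.
samples = simulate_confirmation_times(12.0, 6, trials=20_000)
stats = summarize(samples, settlement_window_s=120.0)
for name, value in stats.items():
    print(f"{name}: {value:.3f}")
```

A margin engine designed around the mean confirmation time alone would be surprised by the tail: under these assumptions a non-trivial share of settlements lands outside the window even though the average is well inside it.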

In the context of derivative pricing, the consensus delay acts as a synthetic form of slippage. If the protocol requires multiple confirmations for finality, the delta between the off-chain oracle price and the on-chain settlement price widens. This gap necessitates higher margin requirements to buffer against the risk of rapid price movements occurring during the unconfirmed period.
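One common way to approximate the margin buffer implied by a finality delay is to assume the price follows a driftless random walk, so that the standard deviation of the price move grows with the square root of the unconfirmed period. The sketch below applies that approximation; it is not a method described by any particular protocol, and the function name, the 99% confidence level, and the volatility figure are illustrative assumptions.

```python
import math
from statistics import NormalDist

SECONDS_PER_YEAR = 365 * 24 * 3600


def margin_buffer(annual_vol, finality_s, confidence=0.99):
    """Fraction of notional needed to cover a one-sided price move
    over `finality_s` seconds at the given confidence level.

    Assumption: returns are normally distributed with no drift, so
    volatility scales with the square root of elapsed time.
    """
    z = NormalDist().inv_cdf(confidence)
    return z * annual_vol * math.sqrt(finality_s / SECONDS_PER_YEAR)


# Hypothetical asset with 80% annualized volatility.
for finality_s in (1, 12, 60, 900):
    buffer = margin_buffer(0.80, finality_s)
    print(f"finality {finality_s:>4} s -> buffer {buffer:.4%} of notional")
```

Under this model, quadrupling the time to finality doubles the required buffer, which is why slower confirmation translates directly into more locked capital.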

| Metric | Financial Impact |
| --- | --- |
| Time to Finality | Capital locking duration |
| Throughput Variance | Liquidation risk volatility |
| Validator Latency | Arbitrage efficiency |

The mathematical rigor applied here mirrors traditional market microstructure analysis, where the speed of order matching is paramount. When network congestion spikes, the protocol effectively raises the cost of capital by increasing the time required to secure a position.

Approach

Current methodologies for evaluating Consensus Protocol Performance prioritize real-time telemetry and on-chain stress testing. Analysts monitor the distribution of block production intervals and the rate of uncle or orphaned blocks, which serve as indicators of network instability.

These metrics are mapped against volatility indices to determine the protocol’s resilience during periods of extreme market stress.
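As a minimal sketch of this kind of telemetry, the code below derives block interval statistics and an orphan rate from a list of observed blocks. The `BlockObservation` record and its fields are hypothetical; a real pipeline would populate them from a node's RPC interface or an indexer, and the sample timestamps are made up for illustration.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockObservation:
    timestamp_s: float
    canonical: bool  # False for uncle or orphaned blocks


def interval_and_orphan_stats(observations):
    """Summarize canonical block intervals and the share of orphaned blocks.

    Requires at least two canonical blocks so that an interval exists.
    """
    canonical = sorted(o.timestamp_s for o in observations if o.canonical)
    intervals = [later - earlier for earlier, later in zip(canonical, canonical[1:])]
    orphaned = sum(1 for o in observations if not o.canonical)
    return {
        "mean_interval_s": statistics.fmean(intervals),
        "stdev_interval_s": statistics.pstdev(intervals),
        "max_interval_s": max(intervals),
        "orphan_rate": orphaned / len(observations),
    }


# Illustrative sample: five canonical blocks and one orphan.
sample = [
    BlockObservation(0.0, True),
    BlockObservation(12.0, True),
    BlockObservation(24.0, True),
    BlockObservation(25.0, False),
    BlockObservation(40.0, True),
    BlockObservation(52.0, True),
]
report = interval_and_orphan_stats(sample)
for name, value in report.items():
    print(f"{name}: {value:.3f}")
```

Tracking these figures over rolling windows, rather than as a single snapshot, is what allows them to be compared against a volatility index for the same period.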

- Validator Set Monitoring involves tracking the geographic and stake-weight distribution to identify potential centralization vectors.

- Mempool Congestion Analysis measures the queue depth of pending transactions, providing a predictive signal for fee-based prioritization.

- Finality Latency Modeling calculates the statistical probability of reorgs occurring within a specific window.
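For longest-chain Proof of Work systems, one classical way to estimate reorg probability is the attacker catch-up calculation from the Bitcoin whitepaper, sketched below. It does not apply to BFT protocols with deterministic finality, and the function name is an illustrative choice. The inputs are the attacker's share of block production and the number of confirmations the recipient waits for.

```python
import math


def reorg_probability(attacker_share, confirmations):
    """Probability that an attacker with `attacker_share` of block
    production eventually overtakes a chain that is `confirmations`
    blocks ahead (catch-up calculation from the Bitcoin whitepaper).
    """
    q = attacker_share
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker always catches up eventually
    lam = confirmations * (q / p)  # expected attacker progress
    total = 1.0
    for k in range(confirmations + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        total -= poisson * (1.0 - (q / p) ** (confirmations - k))
    return total


for z in range(0, 7):
    print(f"confirmations={z} reorg_probability={reorg_probability(0.10, z):.7f}")
```

With a 10% attacker, the probability drops below 0.1% after five confirmations, which illustrates why the required confirmation depth, and therefore the finality latency, depends on the assumed adversary.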

My professional stake in this domain compels a focus on the tail-risk scenarios. Models that fail to account for correlated validator failure during high volatility events overlook the primary mechanism of system-wide contagion. We must evaluate performance not during steady-state operations, but under the duress of maximum market entropy.
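The importance of correlated failure can be illustrated with a small Monte Carlo sketch. It assumes a BFT-style protocol that halts once online stake no longer exceeds two thirds of the total, and it models correlation as shared "groups" (for example a common hosting provider) that can fail together. The function name, group labels, and all probabilities are hypothetical values chosen for illustration.

```python
import random


def halt_probability(stakes, groups, p_independent, p_group_outage,
                     trials=20_000, seed=0):
    """Estimate how often offline stake reaches one third of the total.

    Assumptions: each validator fails independently with probability
    `p_independent`, and each group suffers an outage with probability
    `p_group_outage` that takes all of its members offline at once.
    """
    rng = random.Random(seed)
    total = sum(stakes)
    group_ids = sorted(set(groups))
    halts = 0
    for _ in range(trials):
        down_groups = {g for g in group_ids if rng.random() < p_group_outage}
        offline = sum(
            stake
            for stake, group in zip(stakes, groups)
            if group in down_groups or rng.random() < p_independent
        )
        if offline * 3 >= total:  # online stake no longer exceeds two thirds
            halts += 1
    return halts / trials


stakes = [10] * 10  # ten validators with equal stake

# Clustered: validators share three failure domains.
clustered = halt_probability(stakes, ["a"] * 4 + ["b"] * 3 + ["c"] * 3, 0.01, 0.02)
# Dispersed: every validator sits in its own failure domain.
dispersed = halt_probability(stakes, list(range(10)), 0.01, 0.02)

print(f"clustered halt probability: {clustered:.4f}")
print(f"dispersed halt probability: {dispersed:.4f}")
```

Each validator has roughly the same individual failure probability in both scenarios, so any gap between the two estimates comes from correlation alone. A model calibrated only on per-validator uptime would miss that gap.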

Evolution

The transition from monolithic to modular blockchain architectures has fundamentally altered the performance landscape.

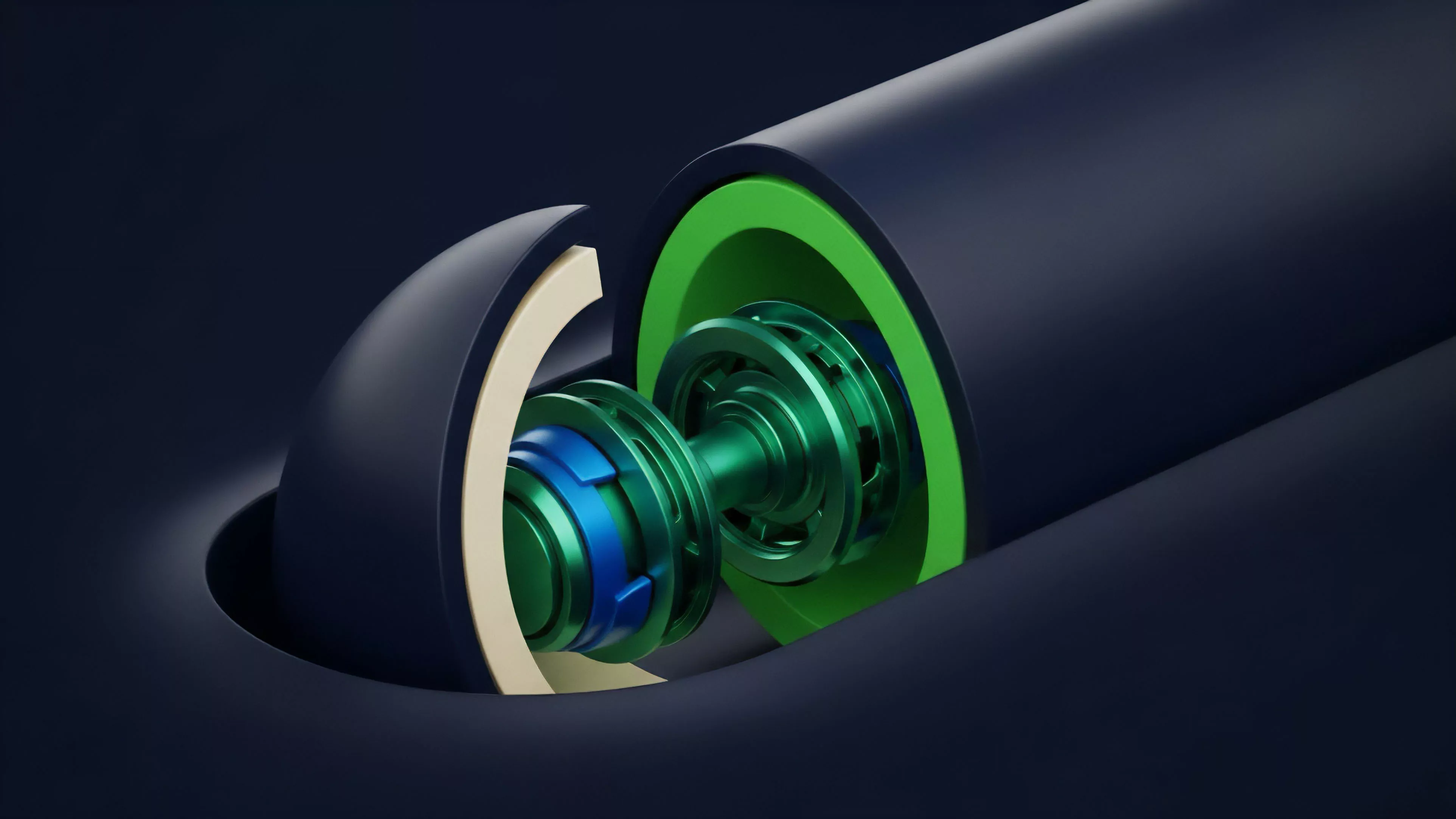

By separating the execution layer from the consensus layer, newer protocols achieve specialized efficiency. This structural decoupling allows for the optimization of Consensus Protocol Performance independently of the application-specific logic, enabling higher throughput without sacrificing security guarantees.

Modular design separates state validation from execution, allowing for specialized performance tuning at each architectural tier.

Historical market cycles demonstrate that protocols failing to adapt their consensus mechanisms to support rapid state updates eventually lose liquidity to more efficient venues. The shift toward optimistic and ZK-based rollups represents the next phase of this evolution, where consensus is deferred to a settlement layer while execution occurs in a high-speed, off-chain environment. This migration changes the nature of systems risk, moving the vulnerability from the consensus layer to the bridge and prover infrastructure.

Horizon

The future of Consensus Protocol Performance lies in the implementation of parallelized consensus and asynchronous state transition models. As decentralized finance continues to integrate with broader financial markets, the demand for sub-millisecond finality will drive the development of hardware-accelerated validator nodes. These advancements will permit the scaling of complex derivative strategies that are currently limited by network-induced latency.

The ultimate goal remains the creation of a trustless settlement engine that matches the performance characteristics of centralized clearing houses. Achieving this requires not only improvements in cryptographic efficiency but also a redesign of the economic incentives that govern validator behavior.

We are moving toward an era where the protocol itself functions as an automated risk management tool, dynamically adjusting its performance parameters in response to real-time market data. The critical question remains: can these decentralized systems maintain their core security guarantees when pushed to the throughput limits required for global institutional finance?