Essence

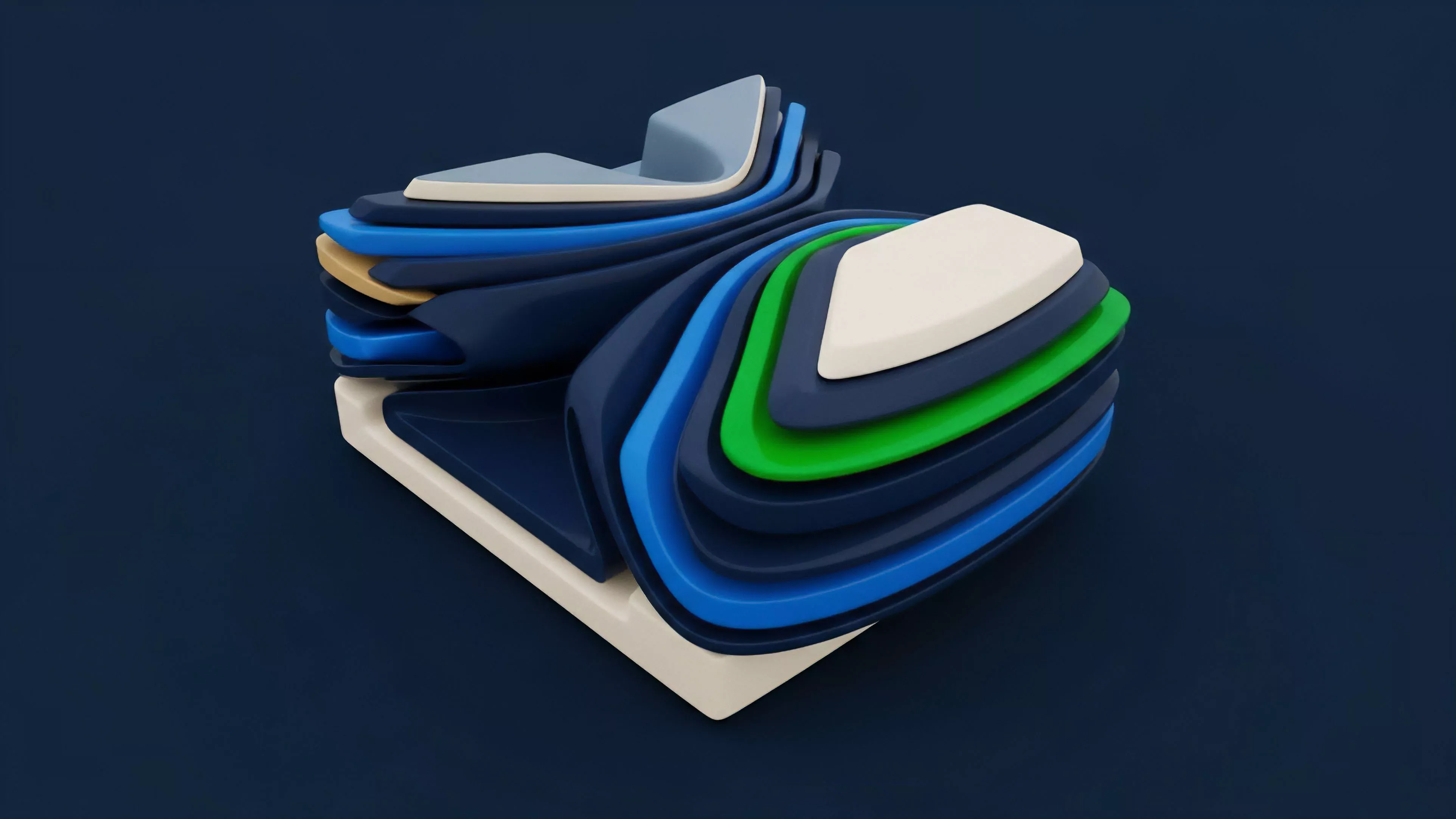

Consensus Layer Optimization denotes the systematic refinement of block production, transaction ordering, and validation finality to minimize latency and maximize capital efficiency within decentralized networks. This process addresses the friction between cryptographic security guarantees and the high-frequency requirements of modern financial derivatives. By streamlining how nodes reach agreement, protocols reduce the window of opportunity for toxic order flow and improve the predictability of settlement times for on-chain options.

Consensus Layer Optimization represents the architectural calibration of validation mechanisms to ensure financial settlement aligns with the speed requirements of derivative markets.

The primary objective involves reducing the time-to-finality, which directly influences the cost of carry and the accuracy of risk-neutral pricing models. When validation is delayed, the risk of adverse selection increases, forcing liquidity providers to widen spreads or demand higher volatility premiums. Optimizing this layer effectively tightens the link between decentralized infrastructure and the rigorous demands of institutional-grade financial engineering.

Origin

The necessity for Consensus Layer Optimization emerged from the limitations of early proof-of-work and naive proof-of-stake designs, which prioritized decentralization and security at the expense of throughput and predictable timing.

As decentralized exchanges began supporting complex instruments like options and perpetual futures, the inherent variability of block times created significant slippage and oracle latency issues. Market participants quickly realized that the consensus mechanism acted as a hidden tax on all trading activities.

- Transaction Finality: The requirement for deterministic settlement states to prevent chain reorgs that could invalidate derivative contracts.

- Validator Latency: The physical and computational constraints on node communication that introduce jitter into order matching engines.

- MEV Extraction: The exploitation of transaction ordering within the consensus layer that drains value from liquidity providers and retail traders.

Developers and researchers began exploring techniques such as sharding, parallel execution, and off-chain pre-confirmation to decouple consensus from execution. This shift mirrors the historical evolution of traditional electronic trading, where high-frequency participants moved into exchange colocation facilities and onto low-latency network topologies. The crypto sector is currently replicating this trajectory, moving from general-purpose validation toward specialized, performance-oriented consensus architectures.

Theory

The theoretical framework rests on the intersection of game theory and distributed systems, specifically concerning how consensus parameters influence the Greeks of derivative portfolios.

In a decentralized environment, the consensus layer functions as the fundamental risk engine. If the block production process is inconsistent, the Delta and Gamma exposure of a portfolio becomes difficult to hedge, leading to increased systemic risk during periods of market stress.
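To make the hedging point concrete, the following is a minimal sketch of how an unhedged Gamma position accumulates expected slippage while a hedge transaction waits for block inclusion. It assumes a second-order Taylor expansion of the option value and driftless returns with constant annualized volatility; the function name `expected_gamma_slippage` and all input values are illustrative assumptions, not part of any protocol.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumption: markets trade continuously


def expected_gamma_slippage(gamma: float, spot: float,
                            annual_vol: float, delay_seconds: float) -> float:
    """Expected P&L drift from Gamma left unhedged for `delay_seconds`.

    Second-order approximation: 0.5 * gamma * E[(dS)^2],
    with E[(dS)^2] approximated by (spot * annual_vol)^2 * dt.
    """
    dt_years = delay_seconds / SECONDS_PER_YEAR
    return 0.5 * gamma * (spot * annual_vol) ** 2 * dt_years


if __name__ == "__main__":
    # Hypothetical position: gamma of 0.01 per unit, spot 2000, 80% annual vol.
    for delay in (0.1, 2.0, 12.0):
        cost = expected_gamma_slippage(0.01, 2000.0, 0.80, delay)
        print(f"hedge delay {delay:>5.1f} s -> expected slippage {cost:.8f}")
```

Under these assumptions the slippage grows linearly with the hedge delay, so variable block times translate directly into variable hedging cost.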

| Parameter | Impact on Derivatives |
| --- | --- |
| Block Time | Influences execution latency and oracle freshness |
| Finality Latency | Determines margin call responsiveness and liquidation triggers |
| Ordering Logic | Affects susceptibility to front-running and toxic flow |

The mathematical modeling of this environment requires accounting for the probabilistic nature of consensus. If a protocol guarantees finality in one hundred milliseconds, the option pricing model can incorporate a much lower volatility premium than it could on a protocol with variable, multi-second block times, because the underlying price has less time to move between order submission and settlement. This is where the pricing model becomes truly elegant, and dangerous if ignored.
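As a rough illustration of why finality time matters for pricing, the sketch below estimates the one-standard-deviation price move that can occur while a trade waits for finality. It assumes driftless Brownian returns, so uncertainty scales with the square root of time; the function name `price_uncertainty` and the sample inputs are hypothetical and chosen only for illustration.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumption: markets trade continuously


def price_uncertainty(spot: float, annual_vol: float,
                      finality_seconds: float) -> float:
    """One-sigma price move over the finality window.

    Small-interval approximation: spot * annual_vol * sqrt(dt in years).
    """
    dt_years = finality_seconds / SECONDS_PER_YEAR
    return spot * annual_vol * math.sqrt(dt_years)


if __name__ == "__main__":
    spot, vol = 2000.0, 0.80  # hypothetical spot price and annualized volatility
    for label, seconds in (("100 ms finality", 0.1), ("12 s finality", 12.0)):
        move = price_uncertainty(spot, vol, seconds)
        print(f"{label}: one-sigma settlement risk of about {move:.4f}")
```

Because the relationship is a square root, moving from 12 seconds to 100 milliseconds cuts the finality window by a factor of 120 but the price uncertainty by a factor of roughly 11.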

When consensus mechanisms are optimized, they effectively reduce the noise in the system, allowing for tighter bid-ask spreads and more efficient capital allocation.

Efficient consensus architecture acts as a structural hedge against volatility by minimizing the time gap between order submission and settlement.

Approach

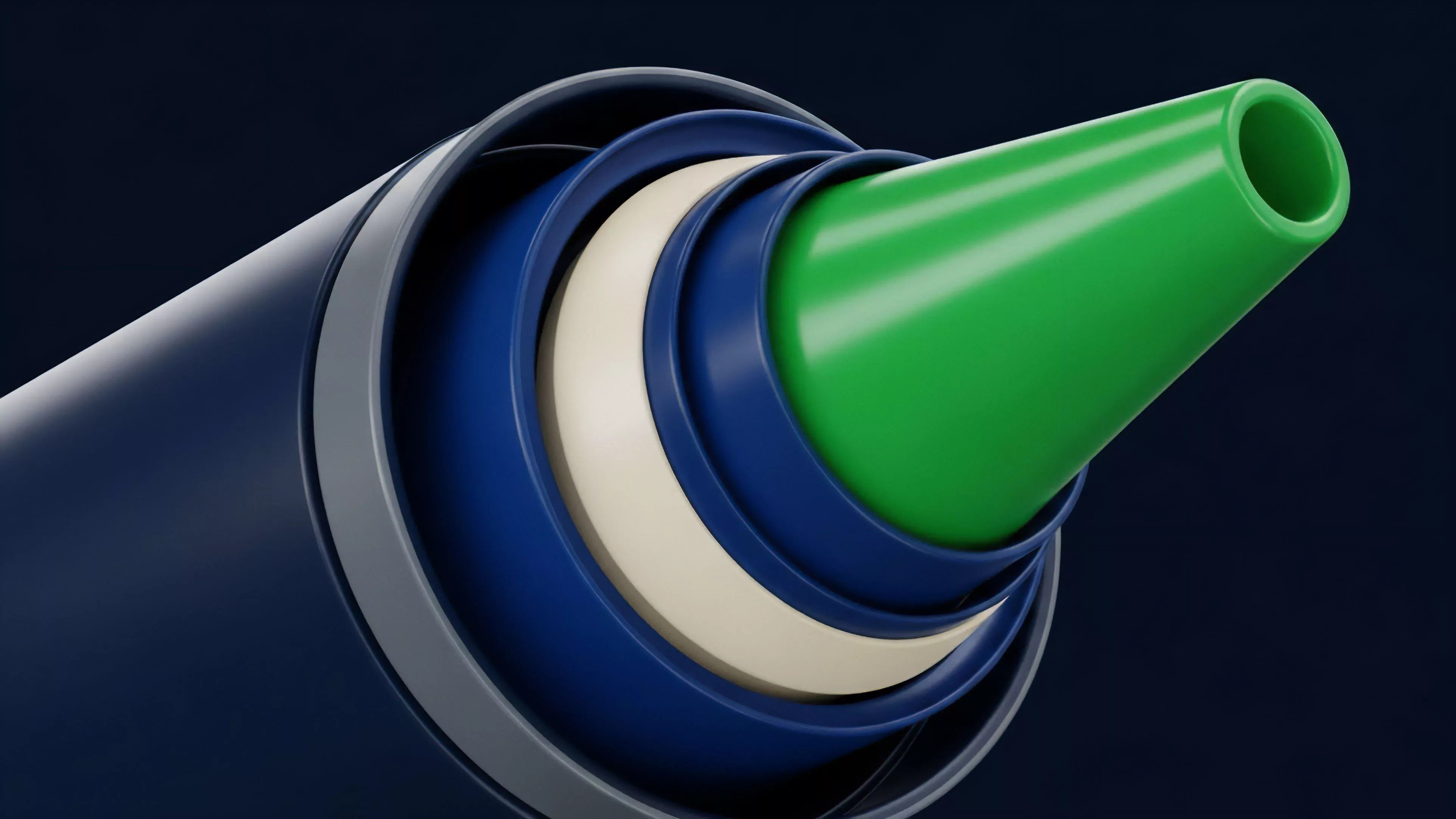

Current implementation strategies focus on modularity and the separation of concerns between ordering and execution. Protocols now frequently utilize sequencers to order transactions before they are submitted to the base consensus layer, effectively creating a two-tier system. This allows for near-instant execution while maintaining the security guarantees of the underlying blockchain.

- Pre-confirmations: Allowing validators to sign off on transactions before they are formally included in a block, reducing the perceived latency for traders.

- Shared Sequencing: Consolidating the ordering process across multiple rollups into a common sequencing layer to reduce fragmentation and improve cross-chain arbitrage efficiency.

- Proposer-Builder Separation: Decoupling the role of building blocks from the role of proposing them to mitigate censorship and optimize for throughput.
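The two-tier flow described above can be sketched as a toy sequencer that issues a pre-confirmation receipt on submission and later drains its queue into one ordered batch for the base layer. Everything here is a simplifying assumption: the `Sequencer` class is hypothetical, ordering is first-come, first-served, and a SHA-256 hash stands in for the validator signature a real pre-confirmation would carry.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Sequencer:
    """Hypothetical first-tier sequencer with first-come, first-served ordering."""
    pending: list = field(default_factory=list)
    next_index: int = 0

    def submit(self, tx: str) -> dict:
        """Assign the next sequence number and return a pre-confirmation receipt."""
        receipt = {
            "index": self.next_index,
            # Stand-in for a signature: a hash of the transaction payload.
            "tx_hash": hashlib.sha256(tx.encode()).hexdigest(),
        }
        self.pending.append((self.next_index, tx))
        self.next_index += 1
        return receipt

    def build_batch(self) -> dict:
        """Drain pending transactions into one ordered batch for the base layer."""
        ordered = [tx for _, tx in sorted(self.pending)]
        commitment = hashlib.sha256("\n".join(ordered).encode()).hexdigest()
        self.pending.clear()
        return {"txs": ordered, "commitment": commitment}


if __name__ == "__main__":
    seq = Sequencer()
    print(seq.submit("buy 1 ETH-CALL"))   # trader sees this receipt immediately
    print(seq.submit("sell 2 ETH-PUT"))
    print(seq.build_batch())              # later posted to the base consensus layer
```

The receipt gives the trader an ordering promise before base-layer inclusion, while the batch commitment is what the underlying chain would ultimately finalize.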

The current market environment demands a pragmatic assessment of these tools. While modularity increases throughput, it also introduces new failure modes and potential points of centralization. The most sophisticated protocols now prioritize cryptographic proofs over social consensus to ensure that order flow integrity remains verifiable even under adversarial conditions.

This is not about removing the human element but about making the system resistant to the inevitable pursuit of unfair advantage by automated agents.

Evolution

The path from monolithic chains to highly specialized consensus layers marks a transformation in how we define financial trust. Early systems relied on a simple broadcast-and-validate model that proved insufficient for derivatives. The industry has shifted toward asynchronous consensus and optimistic validation; the latter allows for rapid state updates while providing a challenge window for fraud detection.
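The challenge-window idea behind optimistic validation can be illustrated with a small state machine: an update is usable immediately, becomes final once the window elapses, and is reverted if challenged in time. This is a sketch under strong assumptions: the names `StateUpdate`, `status`, and `challenge` and the 600-second window are hypothetical, and any timely challenge is treated as valid rather than being checked against a fraud proof.

```python
from dataclasses import dataclass

CHALLENGE_WINDOW = 600.0  # hypothetical window length, in seconds


@dataclass
class StateUpdate:
    state_root: str
    posted_at: float          # timestamp in seconds
    challenged: bool = False


def status(update: StateUpdate, now: float,
           window: float = CHALLENGE_WINDOW) -> str:
    """Return 'reverted', 'final', or 'pending' for an optimistic update."""
    if update.challenged:
        return "reverted"
    if now - update.posted_at >= window:
        return "final"
    return "pending"


def challenge(update: StateUpdate, now: float,
              window: float = CHALLENGE_WINDOW) -> bool:
    """Accept a challenge only while the window is open; return whether it was accepted."""
    if update.challenged or now - update.posted_at >= window:
        return False
    update.challenged = True  # assumption: the fraud proof is taken as valid
    return True


if __name__ == "__main__":
    update = StateUpdate(state_root="0xabc", posted_at=0.0)
    print(status(update, now=10.0))     # still inside the window
    print(challenge(update, now=100.0))  # challenge arrives in time
    print(status(update, now=1000.0))   # update stays reverted
```

The trade-off is visible in the window parameter: a shorter window speeds up settlement for collateral but leaves less time to detect fraud.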

Sometimes, one must wonder if the drive for speed inadvertently creates new forms of fragility; by optimizing for microsecond gains, we risk overlooking the long-term systemic consequences of highly complex, interconnected validation loops. Regardless, the current trajectory is clear. We are moving toward a landscape where consensus is treated as a commodity, with specialized protocols competing on latency, throughput, and the quality of their ordering logic.

This evolution is driven by the demand for capital efficiency, as traders seek to minimize the idle time of their collateral.

The evolution of consensus mechanisms reflects a transition from securing simple state changes to facilitating high-velocity financial derivatives.

Horizon

Future developments will likely focus on MEV-aware consensus, where the protocol itself captures and redistributes the value currently lost to front-running. This will fundamentally alter the economics of market making, potentially leading to more competitive pricing for all participants. As consensus layers become more transparent and programmable, we will see the rise of native derivatives that utilize consensus proofs as a core input for pricing.

The next generation of infrastructure will integrate zero-knowledge proofs directly into the validation process, allowing for private yet verifiable transaction ordering. This addresses the critical issue of information leakage in large-scale derivative trades.

As these technologies mature, the barrier between decentralized protocols and traditional financial venues will dissolve, leaving behind a unified, global market where consensus optimization is the primary determinant of competitive advantage.