Essence

Computational Cost Analysis measures the aggregate resources required to execute, validate, and settle derivative transactions within decentralized environments. It accounts for the overhead of cryptographic proof generation, state transitions, and the ongoing maintenance of margin engines on distributed ledgers.

Computational Cost Analysis quantifies the resource intensity inherent in executing and settling decentralized derivative contracts.

Market participants frequently overlook the friction imposed by blockchain throughput limitations and gas consumption when pricing complex options. This oversight distorts the true profitability of automated strategies, as the cost to update positions or trigger liquidations can exceed expected returns during periods of heightened network congestion. Systemic overhead directly impacts the viability of high-frequency trading models, necessitating a rigorous accounting of every state update.
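The liquidation case can be made concrete with a small profitability check. This is a minimal sketch that assumes an Ethereum-style fee market, where the fee equals gas used multiplied by the price per unit of gas. The dataclass, the function names, and every number are hypothetical. The sketch also ignores slippage on seized collateral and competition between keepers.

```python
from dataclasses import dataclass

GWEI_TO_ETH = 1e-9  # 1 gwei = 10^-9 ETH


@dataclass
class LiquidationOpportunity:
    """Hypothetical description of one under-collateralized position."""
    debt_repaid_usd: float  # debt the keeper repays on the borrower's behalf
    bonus_rate: float       # liquidation incentive, e.g. 0.05 for a 5% bonus
    gas_used: int           # gas units consumed by the liquidation transaction


def execution_fee_usd(gas_used: int, gas_price_gwei: float, eth_price_usd: float) -> float:
    """Fee paid to include the transaction, converted to USD."""
    return gas_used * gas_price_gwei * GWEI_TO_ETH * eth_price_usd


def net_keeper_profit(opp: LiquidationOpportunity, gas_price_gwei: float,
                      eth_price_usd: float) -> float:
    """Liquidation bonus minus the cost of executing the liquidation."""
    bonus_usd = opp.debt_repaid_usd * opp.bonus_rate
    return bonus_usd - execution_fee_usd(opp.gas_used, gas_price_gwei, eth_price_usd)


def breakeven_gas_price_gwei(opp: LiquidationOpportunity, eth_price_usd: float) -> float:
    """Gas price above which liquidating this position loses money."""
    bonus_usd = opp.debt_repaid_usd * opp.bonus_rate
    return bonus_usd / (opp.gas_used * GWEI_TO_ETH * eth_price_usd)


if __name__ == "__main__":
    # Illustrative inputs only: $1,000 of debt, 5% bonus, 400k gas, ETH at $2,000.
    opp = LiquidationOpportunity(debt_repaid_usd=1_000.0, bonus_rate=0.05, gas_used=400_000)
    for gwei in (20, 100):
        print(gwei, round(net_keeper_profit(opp, gwei, 2_000.0), 2))
    print("break-even gwei:", breakeven_gas_price_gwei(opp, 2_000.0))
```

With these illustrative inputs, the liquidation nets about $34 at 20 gwei but loses about $30 at 100 gwei. The break-even point is 62.5 gwei. Congestion that pushes fees past this level makes the position unprofitable to liquidate, even though it is under-collateralized.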

Origin

The genesis of this discipline lies in the transition from centralized order books to on-chain execution environments where every computational step incurs a measurable fee.

Early decentralized protocols adopted simplistic pricing models, largely ignoring the underlying gas dynamics that govern ledger updates. As trading complexity increased, the limitations of these primitive architectures became apparent.

The shift toward on-chain derivatives necessitated a framework for measuring the resource expenditure required for contract lifecycle management.

Financial engineers realized that the protocol physics of blockchain networks, specifically block space constraints, acted as a hidden tax on derivative liquidity. This realization forced a move away from traditional finance assumptions toward a model that incorporates network congestion and validator incentives as core variables in option pricing and risk management.

Theory

The theoretical foundation of Computational Cost Analysis rests upon the intersection of quantitative finance and distributed systems engineering. Traditional Greeks, such as delta and gamma, provide a partial view of risk; they remain incomplete without an overlay of execution costs.

Mathematical Modeling

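Pricing models should incorporate a gas-adjusted strike price to reflect the real-world cost of exercising options. For a call, the holder exercises only when the spot price exceeds the strike plus the fee needed to submit the exercise transaction, so the fee effectively raises the strike. Because that fee is unknown in advance, the model must estimate the distribution of fees over the life of the contract, including their variance and not only their mean, and treat network congestion as a stochastic variable.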
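The gas-adjusted strike can be illustrated with a small Monte Carlo estimate. This is a minimal sketch that assumes a cash-settled European call. The holder pays a random exercise fee, in quote-currency units, and exercises only when the intrinsic value exceeds that fee. The spot and fee distributions, the strike, and the function names are all hypothetical, and discounting is omitted.

```python
import random


def expected_call_payoff(spots, strike, fees):
    """Monte Carlo estimate of a cash-settled call's payoff when exercising
    costs a fee. The holder exercises only if S - K > fee, so each path
    pays max(S - K - fee, 0). A constant fee c is therefore equivalent to
    raising the strike from K to K + c."""
    if len(spots) != len(fees) or not spots:
        raise ValueError("need equal-length, non-empty spot and fee samples")
    total = sum(max(s - strike - f, 0.0) for s, f in zip(spots, fees))
    return total / len(spots)


def simulate_paths(n=20_000, seed=7):
    """Hypothetical inputs: a lognormal terminal spot around 100 and a
    right-skewed lognormal exercise fee (median 1.0 in quote units) whose
    heavy tail stands in for congestion spikes."""
    rng = random.Random(seed)
    spots = [100.0 * rng.lognormvariate(0.0, 0.2) for _ in range(n)]
    fees = [rng.lognormvariate(0.0, 1.0) for _ in range(n)]
    return spots, fees


if __name__ == "__main__":
    spots, fees = simulate_paths()
    strike = 105.0
    no_fee = expected_call_payoff(spots, strike, [0.0] * len(spots))
    with_fee = expected_call_payoff(spots, strike, fees)
    print(f"fee-free payoff: {no_fee:.3f}, fee-adjusted payoff: {with_fee:.3f}")
```

A fixed fee is simply a strike shift. A random fee, however, requires the whole fee distribution, because the payoff is convex in the fee. As a result, two fee processes with the same mean can produce different option values.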

| Parameter | Systemic Impact |
|---|---|
| State Transition Overhead | Increases effective bid-ask spread |
| Proof Generation Cost | Limits high-frequency hedging capability |
| Liquidation Threshold Sensitivity | Determines systemic contagion risk |
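The link between state transition overhead and the effective bid-ask spread can be made concrete with a simple amortization model. This is a minimal sketch that assumes a market maker pays a fixed fee each time it refreshes quotes on-chain. It also assumes the maker recovers that fee evenly across the volume filled before the next refresh. The function name and all inputs are hypothetical, and the model ignores adverse selection and inventory risk.

```python
def effective_half_spread(quoted_half_spread: float,
                          update_fee: float,
                          fills_per_update: float,
                          avg_fill_size: float) -> float:
    """Half-spread the maker must charge to break even on quote updates.

    quoted_half_spread: half-spread before execution costs (price per unit)
    update_fee: fee paid per on-chain quote refresh (same currency as price)
    fills_per_update: average number of fills between refreshes
    avg_fill_size: average units traded per fill
    """
    if fills_per_update <= 0 or avg_fill_size <= 0:
        raise ValueError("fills_per_update and avg_fill_size must be positive")
    # Spread the refresh fee over all units traded before the next refresh.
    fee_per_unit = update_fee / (fills_per_update * avg_fill_size)
    return quoted_half_spread + fee_per_unit


if __name__ == "__main__":
    # Illustrative: 0.05 half-spread, $2 per refresh, 4 fills of 10 units each.
    print(effective_half_spread(0.05, 2.0, 4, 10))
```

Here the refresh fee adds 2 / (4 × 10) = 0.05 per unit, which doubles the half-spread. Fewer fills per refresh or a higher fee widens it further.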

Effective derivative pricing requires integrating network resource costs directly into standard quantitative models.

The adversarial nature of decentralized markets means that computational costs fluctuate based on strategic participant behavior. Actors may intentionally congest the network to prevent timely liquidations, effectively weaponizing the cost of execution against under-collateralized positions. This game-theoretic dimension elevates the analysis from a technical exercise to a strategic imperative.

Approach

Current methodologies prioritize the mapping of protocol-level bottlenecks to financial outcomes.

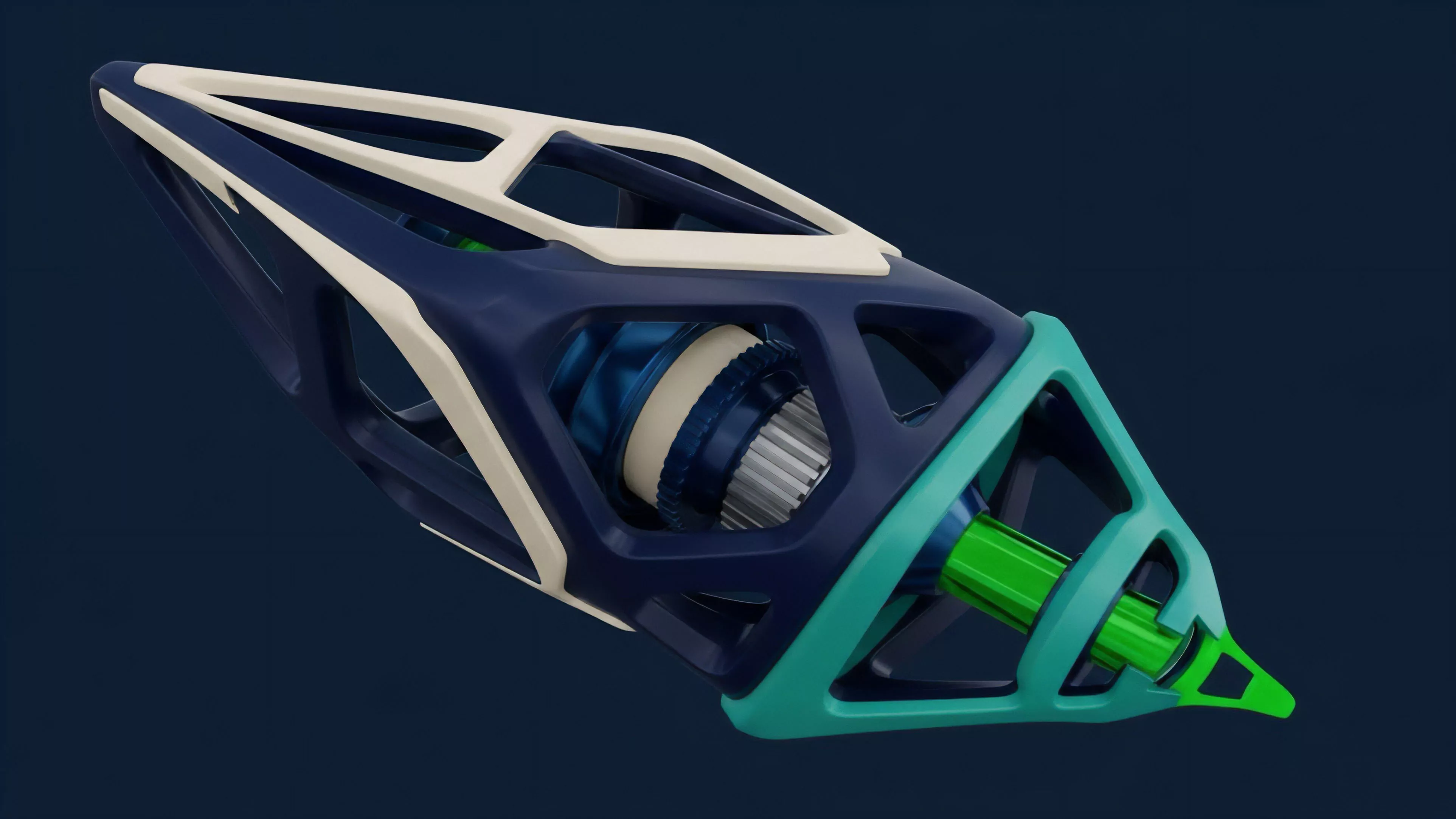

Analysts evaluate the computational footprint of specific smart contract architectures, comparing monolithic versus modular execution environments to determine their impact on derivative liquidity.

- Resource Mapping involves quantifying the operations required for each margin update and the gas each one consumes.

- Latency Benchmarking assesses the delay between price oracle updates and the finality of derivative settlement.

- Cost Projection utilizes historical network fee data to model potential slippage during volatile market conditions.
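Resource mapping and cost projection can be combined in a few lines. This is a minimal sketch that assumes fees are priced as gas units multiplied by gas price. The per-step gas figures and the fee history are made up for illustration. Nearest-rank percentiles stand in for whatever estimator an analyst would actually use. Latency benchmarking is not covered here.

```python
import math

# Hypothetical gas profile of one margin update, broken down by step.
# Real figures depend entirely on the contract and chain being analysed.
HYPOTHETICAL_GAS_PER_STEP = {
    "read_oracle_price": 30_000,
    "recompute_margin": 60_000,
    "write_position_state": 45_000,
}

GWEI_TO_ETH = 1e-9  # 1 gwei = 10^-9 ETH


def margin_update_gas(steps: dict) -> int:
    """Resource mapping: total gas for one update is the sum of its steps."""
    return sum(steps.values())


def percentile(values, p: float) -> float:
    """Nearest-rank percentile of a non-empty sample, for 0 < p <= 100."""
    if not values or not 0 < p <= 100:
        raise ValueError("need a non-empty sample and 0 < p <= 100")
    ordered = sorted(values)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[rank - 1]


def projected_fee_eth(gas_units: int, gas_price_history_gwei, p: float) -> float:
    """Cost projection: fee for gas_units if paid at the p-th percentile
    of historical gas prices."""
    return gas_units * percentile(gas_price_history_gwei, p) * GWEI_TO_ETH


if __name__ == "__main__":
    history = [12, 15, 14, 18, 22, 35, 90, 16, 13, 150]  # made-up samples (gwei)
    gas = margin_update_gas(HYPOTHETICAL_GAS_PER_STEP)
    for p in (50, 95):
        print(p, projected_fee_eth(gas, history, p))
```

With these made-up samples, the 95th-percentile gas price is roughly nine times the median (150 versus 16 gwei). A projection for volatile conditions needs to capture exactly this kind of tail.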

This systematic approach suggests that the technical architecture of a protocol is a major driver of its long-term financial stability. Protocols that minimize the computational burden of complex transactions tend to sustain deeper liquidity and greater resilience against sudden market shocks, because keepers and market makers can still afford to update positions when fees spike.

Evolution

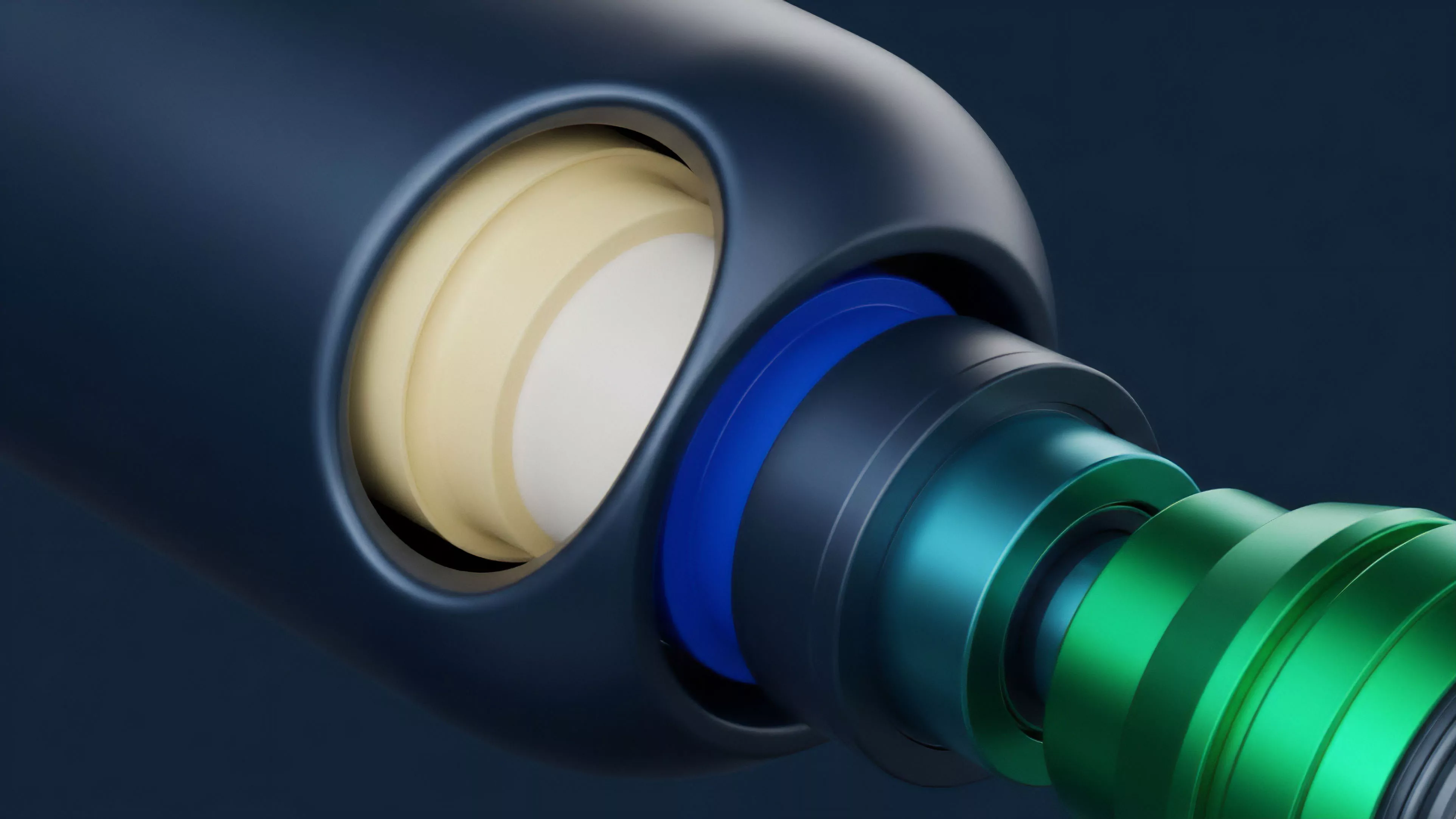

The trajectory of this field has moved from static gas estimation toward dynamic, real-time resource optimization. Early efforts focused on optimizing simple token transfers, while current developments target the reduction of recursive proof costs for complex derivative structures.

Advancements in cryptographic efficiency directly reduce the barriers to sophisticated decentralized financial participation.

The industry now uses techniques such as zero-knowledge rollups to batch transactions, amortizing the cost of computation across many users. This shift arguably mirrors the evolution of high-frequency trading platforms, where the focus moved from raw speed toward the efficient use of computing resources. Off-chain computation has further altered the landscape. It lets protocols maintain complex state while inheriting the security guarantees of the underlying blockchain, provided the off-chain results are verified, or can be challenged, on-chain.
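The amortization effect of batching can be sketched as a simple cost split. The model is assumed and is not any particular rollup's fee schedule. Each batch pays one fixed base-layer cost for posting data and verifying a proof, and that cost is shared equally among the transactions in the batch. Each transaction also pays its own marginal cost. The function name and figures are hypothetical.

```python
def amortized_cost_per_user(fixed_batch_cost: float,
                            per_tx_cost: float,
                            n_tx: int) -> float:
    """Cost borne by each of n_tx transactions in one batch."""
    if n_tx < 1:
        raise ValueError("n_tx must be at least 1")
    # The fixed settlement cost is split evenly; the marginal cost is not.
    return fixed_batch_cost / n_tx + per_tx_cost


if __name__ == "__main__":
    # Illustrative: $50 fixed cost per batch, $0.02 marginal cost per tx.
    for n in (1, 10, 100, 1_000):
        print(n, round(amortized_cost_per_user(50.0, 0.02, n), 4))
```

In this example, per-user cost falls from $50.02 for a lone transaction to $0.07 at 1,000 transactions. As batches grow, the cost approaches the marginal term, so reducing the fixed proof cost matters most when batches are small.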

Horizon

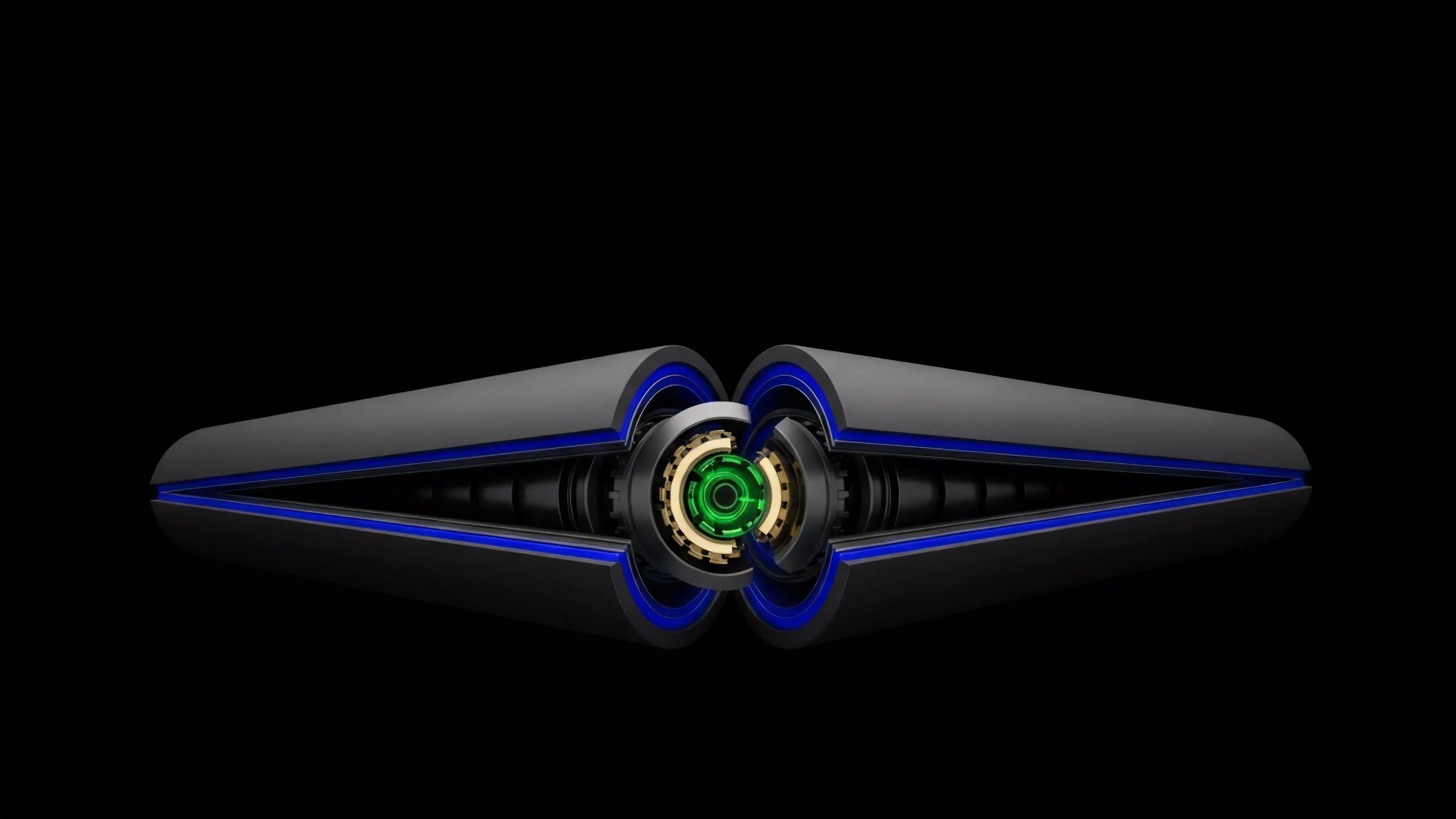

Future developments will center on the creation of standardized computational risk metrics that traders can integrate into their automated strategies.

As protocols move toward sharded architectures, the analysis must account for cross-shard communication overhead and its impact on the synchronization of derivative prices.

| Future Trend | Strategic Implication |
|---|---|
| Recursive Proof Compression | Reduced cost for complex options |
| Cross-Chain State Sync | Improved global liquidity efficiency |
| Autonomous Fee Hedging | Stable execution costs for traders |

The ultimate goal is the democratization of sophisticated derivative strategies through protocol-level efficiency. Market participants who master the interplay between cryptographic overhead and financial risk will hold a distinct advantage, as the ability to predict and minimize these costs becomes a key determinant of success in decentralized markets.