Essence

The core challenge of computational complexity in decentralized finance stems from the fundamental conflict between economic security and algorithmic efficiency. On a blockchain, every calculation consumes resources, measured as gas or transaction fees. The cost of performing complex financial calculations, such as accurately pricing options or determining risk sensitivities (Greeks), can exceed the economic value of the transaction itself, or even the block’s gas limit.

This constraint forces protocol architects to make difficult trade-offs. The decision to simplify a pricing model or offload computation to external entities directly impacts the security and trustlessness of the derivative product. The complexity of a derivative product is therefore not just a matter of financial design, but a direct function of the underlying computational constraints of the consensus mechanism.

Computational complexity in decentralized derivatives is the direct economic cost of trustless verification.

This constraint dictates the types of options that can be offered on-chain. Simple European options, which can be exercised only at expiration and therefore settle with a single payoff calculation, are relatively straightforward to implement. More complex products, like American options with early exercise features or exotic options with path-dependent payoffs, demand significantly more computational power for accurate real-time pricing and risk management.
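The gap is visible even at settlement. The minimal Python sketch below compares the work needed to settle a European call with that of an arithmetic-average Asian call, one common path-dependent payoff chosen here purely as an illustration. The European payoff reads only the final price, while the Asian payoff must read every recorded observation, so an on-chain version would pay storage and computation costs that grow with the length of the path.

```python
from typing import Sequence


def european_call_payoff(spot_at_expiry: float, strike: float) -> float:
    """European call: one comparison on the final price, max(S_T - K, 0)."""
    return max(spot_at_expiry - strike, 0.0)


def asian_call_payoff(price_path: Sequence[float], strike: float) -> float:
    """Arithmetic-average Asian call: the payoff depends on every observation.

    Settlement must store and read the whole path, so the work grows linearly
    with the number of observations.
    """
    if not price_path:
        raise ValueError("price_path must contain at least one observation")
    average = sum(price_path) / len(price_path)
    return max(average - strike, 0.0)


path = [100.0, 104.0, 98.0, 110.0]  # hypothetical recorded prices
print(european_call_payoff(path[-1], 100.0))  # 10.0
print(asian_call_payoff(path, 100.0))  # 3.0
```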

When a protocol attempts to implement these sophisticated instruments, the computational overhead can introduce systemic vulnerabilities or render the product prohibitively expensive for users, creating an efficiency-security dilemma. The architect’s challenge is to find the optimal balance point where a product remains financially sound while operating within the tight computational budget of the decentralized ledger.

Origin

The computational complexity problem for options protocols originates from the inherent limitations of deterministic virtual machines like the Ethereum Virtual Machine (EVM).

Traditional financial institutions rely on high-performance computing clusters to execute complex pricing models, such as Monte Carlo simulations for path-dependent derivatives or sophisticated numerical methods for solving partial differential equations. These calculations often run in milliseconds and are invisible to the end user. When these models are ported to a decentralized environment, they confront a system where every single operation must be verified by every node in the network.

Because every node re-executes each operation, a calculation that is trivial on a single server becomes expensive and slow, bounded by gas pricing and block times. As a result, early smart-contract designs for options often defaulted to simplified or off-chain pricing models. Facing a choice between accurate pricing and low gas costs, early protocols frequently prioritized the latter.

This resulted in a disconnect where the on-chain settlement logic was often separate from the off-chain pricing logic used by market makers. This disparity created opportunities for arbitrage and introduced systemic risks. The origin story of this complexity is one of attempting to transplant sophisticated, high-frequency financial engineering into a low-frequency, high-cost computing environment.

The initial solution involved simplifying financial models to fit within these constraints, which led to a less robust and less capital-efficient market structure.

| Aspect | Traditional Finance (Off-Chain) | Decentralized Finance (On-Chain) |
|---|---|---|
| Pricing Model | Black-Scholes, Monte Carlo Simulation, Finite Difference Method | Simplified Binomial Model, Time-weighted Average Price (TWAP) Oracles, Off-chain pricing with on-chain settlement |
| Risk Calculation (Greeks) | Real-time, continuous calculation of Delta, Gamma, Vega, Theta | Infrequent updates, often simplified or off-chain calculation, reliance on external keepers |
| Liquidation Logic | Centralized risk engines, instantaneous margin calls based on real-time data feeds | On-chain logic, relies on oracle updates and block-by-block execution, introduces latency risk |

Theory

The theoretical foundation of computational complexity in decentralized options revolves around the “gas cost of verification”: the cost of executing a smart-contract function is directly tied to the number and type of operations it performs. For options pricing, this manifests in several ways. Calculating the fair value of an option often requires iterative methods, especially for American options, where the early-exercise decision must be evaluated at every time step of the option’s life.
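A standard way to capture early exercise is a Cox-Ross-Rubinstein binomial tree. The sketch below is plain floating-point Python, not on-chain fixed-point code. It prices an American put and counts node evaluations: with `steps` time steps it performs (steps + 1)(steps + 2) / 2 evaluations, so doubling the time resolution roughly quadruples the work that would have to be paid for in gas.

```python
import math
from typing import Tuple


def american_put_crr(
    spot: float, strike: float, rate: float, vol: float, maturity: float, steps: int
) -> Tuple[float, int]:
    """Price an American put on a Cox-Ross-Rubinstein binomial tree.

    Returns (price, node_evaluations) so that the O(steps^2) cost is visible.
    """
    dt = maturity / steps
    up = math.exp(vol * math.sqrt(dt))
    down = 1.0 / up
    growth = math.exp(rate * dt)
    p_up = (growth - down) / (up - down)  # risk-neutral probability of an up move
    discount = 1.0 / growth

    # Terminal payoffs; index j counts the number of up moves.
    values = [max(strike - spot * up**j * down**(steps - j), 0.0) for j in range(steps + 1)]
    evaluations = steps + 1

    # Roll back through the tree, comparing continuation with immediate exercise at every node.
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            continuation = discount * (p_up * values[j + 1] + (1.0 - p_up) * values[j])
            exercise = strike - spot * up**j * down**(i - j)
            values[j] = max(continuation, exercise)
            evaluations += 1

    return values[0], evaluations


price, evaluations = american_put_crr(100.0, 100.0, 0.05, 0.2, 1.0, steps=100)
print(evaluations)  # 5151
print(f"price={price:.4f}")
```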

This iterative process, which is necessary for accuracy, quickly becomes prohibitively expensive on a blockchain. The most flexible pricing methods for complex derivatives, such as Monte Carlo simulation, are also among the most computationally demanding: they rely on random sampling and need many simulated paths to converge. A blockchain’s deterministic execution, however, requires every calculation to yield the same result for every validator, so any randomness would have to be derived reproducibly from shared inputs.
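Determinism does not rule out Monte Carlo in principle: validators could replay the same pseudo-random draws from a shared seed. The sketch below illustrates that idea with an explicitly seeded `random.Random`, pricing an arithmetic Asian call under geometric Brownian motion (an assumed model) and returning the estimate with its standard error. Two points carry over to the on-chain setting: the same seed reproduces the same result, and the standard error shrinks only as 1/sqrt(N) in the number of paths, so the work is paths × steps exponentials and random draws. A real contract would also need integer fixed-point math and a seed no participant can bias, neither of which this sketch addresses.

```python
import math
import random
from typing import Tuple


def mc_asian_call(
    spot: float, strike: float, rate: float, vol: float, maturity: float,
    steps: int, paths: int, seed: int,
) -> Tuple[float, float]:
    """Monte Carlo price of an arithmetic-average Asian call under GBM.

    Returns (price, standard_error). The explicit seed makes the result
    reproducible: replaying with the same seed yields the same draws.
    """
    if paths < 2:
        raise ValueError("need at least two paths to estimate a standard error")
    rng = random.Random(seed)
    dt = maturity / steps
    drift = (rate - 0.5 * vol * vol) * dt
    diffusion = vol * math.sqrt(dt)

    payoffs = []
    for _ in range(paths):
        price = spot
        running_sum = 0.0
        for _ in range(steps):
            price *= math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
            running_sum += price
        payoffs.append(max(running_sum / steps - strike, 0.0))

    mean = sum(payoffs) / paths
    variance = sum((p - mean) ** 2 for p in payoffs) / (paths - 1)
    discount = math.exp(-rate * maturity)
    # Standard error falls as 1/sqrt(paths): four times the paths for half the error.
    return discount * mean, discount * math.sqrt(variance / paths)


print(mc_asian_call(100.0, 100.0, 0.05, 0.2, 1.0, steps=12, paths=1000, seed=42))
```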

Reaching consensus on a complex calculation is therefore a major hurdle. The complexity of an options contract’s risk profile also directly shapes the complexity of its liquidation mechanism: a protocol must continually recalculate both the collateral value and the option’s value to determine whether a position is undercollateralized.

If this calculation is too complex, the protocol cannot react quickly to market changes, creating a systemic risk.
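The liquidation comparison itself is cheap; the expensive part is keeping its inputs current. In the hypothetical sketch below, `option_mark` stands for the per-contract option value that some pricing step (on-chain or off-chain) must supply, and `maintenance_bps` is an assumed maintenance margin in basis points. Integer arithmetic mirrors how EVM contracts avoid floating point.

```python
def is_liquidatable(
    collateral: int, contracts: int, option_mark: int, maintenance_bps: int = 12_000
) -> bool:
    """Return True if a short option position falls below maintenance margin.

    collateral: posted collateral, in the quote asset's smallest unit
    contracts: number of options written
    option_mark: current value of one option (the costly input to refresh)
    maintenance_bps: hypothetical required collateral as a multiple of the
        liability, in basis points (12_000 means 120%)
    """
    liability = contracts * option_mark
    # Cross-multiply so the comparison stays in integer arithmetic.
    return collateral * 10_000 < liability * maintenance_bps


print(is_liquidatable(collateral=1_200, contracts=10, option_mark=100))  # False
print(is_liquidatable(collateral=1_200, contracts=10, option_mark=101))  # True
```

If `option_mark` is stale because repricing is too expensive to run every block, this check answers the wrong question quickly, which is the systemic risk described above.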

The fundamental constraint for decentralized options is the trade-off between the mathematical precision required for accurate pricing and the high gas cost of on-chain computation.

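The challenge extends beyond simple pricing to the design of risk management itself. For example, calculating Vega (the sensitivity of an option’s price to volatility) across a pool’s positions requires repeated evaluations of logarithms, square roots and normal-distribution functions, and for products without a closed-form price it requires repricing each option under perturbed volatilities.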
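The sketch below makes this concrete in floating-point Python; an on-chain version would need fixed-point approximations of each function. It computes the closed-form Black-Scholes Vega, S·φ(d1)·√T (per unit of volatility), alongside a central-difference bump-and-reprice estimate, which needs two full repricings for a single Greek. The two should agree closely.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def d1(spot: float, strike: float, rate: float, vol: float, maturity: float) -> float:
    return (math.log(spot / strike) + (rate + 0.5 * vol * vol) * maturity) / (vol * math.sqrt(maturity))


def bs_call(spot: float, strike: float, rate: float, vol: float, maturity: float) -> float:
    """Black-Scholes price of a European call."""
    d1_val = d1(spot, strike, rate, vol, maturity)
    d2_val = d1_val - vol * math.sqrt(maturity)
    return spot * norm_cdf(d1_val) - strike * math.exp(-rate * maturity) * norm_cdf(d2_val)


def bs_vega(spot: float, strike: float, rate: float, vol: float, maturity: float) -> float:
    """Closed-form Vega: S * pdf(d1) * sqrt(T)."""
    return spot * norm_pdf(d1(spot, strike, rate, vol, maturity)) * math.sqrt(maturity)


def bump_vega(spot: float, strike: float, rate: float, vol: float, maturity: float,
              bump: float = 1e-4) -> float:
    """Central-difference Vega: two full repricings for one Greek."""
    up = bs_call(spot, strike, rate, vol + bump, maturity)
    down = bs_call(spot, strike, rate, vol - bump, maturity)
    return (up - down) / (2.0 * bump)


print(bs_vega(100.0, 100.0, 0.05, 0.2, 1.0))
print(bump_vega(100.0, 100.0, 0.05, 0.2, 1.0))
```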

Without accurate on-chain Vega calculation, protocols cannot effectively manage the risk exposure of their liquidity pools to volatility changes. This forces protocols to either over-collateralize or accept higher risks. The current theoretical solutions focus on either simplifying the financial model (e.g. using a simplified Black-Scholes approximation) or moving the calculation off-chain and verifying the result using cryptographic proofs.
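One well-known simplification of this kind, offered here as an illustration rather than as the method of any particular protocol, is the Brenner and Subrahmanyam at-the-money approximation, C ≈ S·σ·√T / √(2π) ≈ 0.4·S·σ·√T, which applies to a call struck at the forward price and needs only a single square root. The sketch compares it with the full Black-Scholes price at a zero interest rate (an assumption that makes the spot equal the forward): the two are close at the money and far apart away from it, which is the accuracy cost described above.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_zero_rate(spot: float, strike: float, vol: float, maturity: float) -> float:
    """Full Black-Scholes call price with the interest rate set to zero."""
    sd = vol * math.sqrt(maturity)
    d1 = (math.log(spot / strike) + 0.5 * sd * sd) / sd
    d2 = d1 - sd
    return spot * norm_cdf(d1) - strike * norm_cdf(d2)


def atm_approx_call(spot: float, vol: float, maturity: float) -> float:
    """At-the-money approximation; it ignores the strike, so it is only valid near the money."""
    return spot * vol * math.sqrt(maturity) / math.sqrt(2.0 * math.pi)


for strike in (100.0, 120.0):
    exact = bs_call_zero_rate(100.0, strike, 0.2, 1.0)
    approx = atm_approx_call(100.0, 0.2, 1.0)
    print(f"K={strike}: exact={exact:.3f} approx={approx:.3f}")
```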

Approach

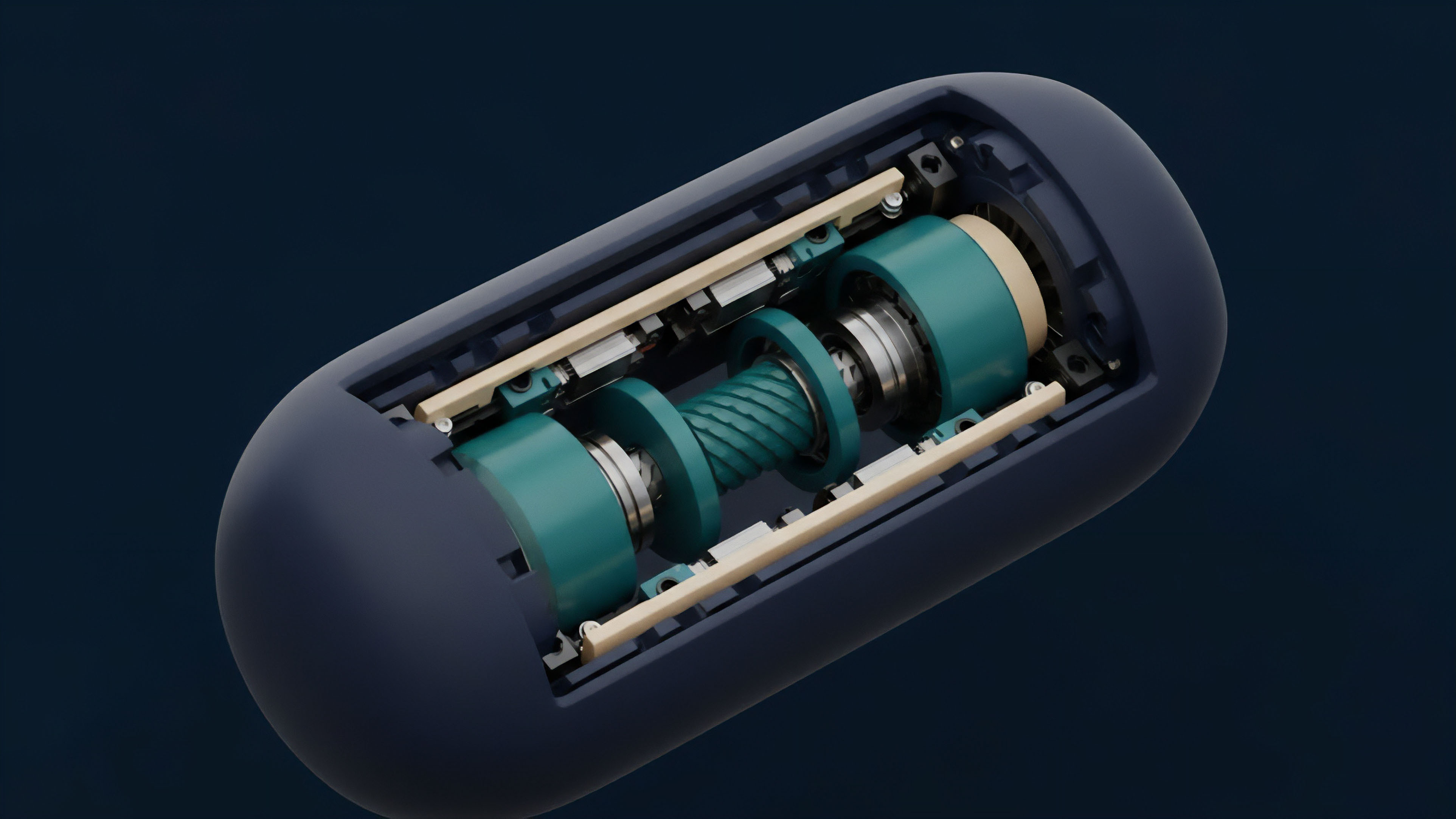

Current protocols address computational complexity through a hybrid architecture, balancing on-chain security with off-chain efficiency. The dominant approach involves executing complex calculations off-chain and using a secure mechanism to relay the results to the smart contract. This often takes the form of specialized oracles or keepers.

There are several methodologies for implementing this hybrid model:

- Off-Chain Calculation with On-Chain Settlement: The most common approach where market makers or specialized off-chain services calculate fair prices and risk metrics. The on-chain smart contract only handles the final settlement, relying on external price feeds or liquidation triggers. This significantly reduces gas costs but introduces trust assumptions on the external price provider.

- Simplified On-Chain Models: Some protocols use highly simplified pricing models, such as constant product automated market makers (AMMs) for options. While computationally cheap, these models often result in less accurate pricing and significant slippage for larger trades. The simplicity comes at the cost of capital efficiency.

- Zero-Knowledge Proofs for Verification: A more advanced approach involves performing the complex calculation off-chain and generating a zero-knowledge proof (ZK-proof) of its correctness. The smart contract then verifies this proof, which costs far less than performing the entire calculation itself. This method offers a pathway to achieve both efficiency and trustlessness.
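A ZK proof system is too large to sketch here, but the asymmetry it exploits can be shown with a much simpler analogy, which is explicitly not a zero-knowledge proof: computing an integer square root takes an iterative search, while checking a claimed result takes two multiplications and two comparisons. In the hypothetical split below, `isqrt_newton` plays the off-chain prover and `verify_isqrt` plays the cheap on-chain check.

```python
from typing import Tuple


def isqrt_newton(x: int) -> Tuple[int, int]:
    """Off-chain 'prover': floor(sqrt(x)) by integer Newton iteration.

    Returns (root, iterations) so that the iterative cost is visible.
    """
    if x < 0:
        raise ValueError("x must be non-negative")
    if x < 2:
        return x, 0
    guess = x
    iterations = 0
    while True:
        nxt = (guess + x // guess) // 2
        iterations += 1
        if nxt >= guess:
            return guess, iterations
        guess = nxt


def verify_isqrt(x: int, claimed_root: int) -> bool:
    """On-chain-style check: constant work regardless of how the root was found."""
    return claimed_root >= 0 and claimed_root * claimed_root <= x < (claimed_root + 1) * (claimed_root + 1)


value = 10**36 + 12_345
root, iterations = isqrt_newton(value)
print(root, iterations)
print(verify_isqrt(value, root), verify_isqrt(value, root + 1))  # True False
```

The verifier never repeats the search; it only confirms that the submitted answer satisfies a cheap condition, which is the same division of labor the ZK approach aims for.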

The choice of approach dictates the protocol’s risk profile. Protocols relying on off-chain calculation for pricing often face the risk of “oracle manipulation,” where external price feeds are compromised to trigger favorable liquidations. Protocols using simplified models face the risk of capital inefficiency and poor execution for users.
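The execution cost of a simplified model is easy to quantify for a constant-product pool. Assuming a fee-free pool that holds option tokens against a quote asset with x·y = k (a hypothetical setup for illustration), buying Δy option tokens costs x·Δy / (y − Δy) quote units, so the average price exceeds the pre-trade spot price x / y by a factor of y / (y − Δy). Slippage therefore grows with trade size relative to pool depth, regardless of the option’s fair value.

```python
def constant_product_cost(reserve_in: float, reserve_out: float, amount_out: float) -> float:
    """Quote tokens required to withdraw `amount_out` from a fee-free x*y=k pool."""
    if not 0 < amount_out < reserve_out:
        raise ValueError("amount_out must be positive and smaller than the reserve")
    k = reserve_in * reserve_out
    new_reserve_in = k / (reserve_out - amount_out)
    return new_reserve_in - reserve_in


def slippage(reserve_in: float, reserve_out: float, amount_out: float) -> float:
    """Average execution price relative to the pre-trade spot price, minus one."""
    spot_price = reserve_in / reserve_out
    average_price = constant_product_cost(reserve_in, reserve_out, amount_out) / amount_out
    return average_price / spot_price - 1.0


for size in (10.0, 100.0, 300.0):
    print(f"buy {size:>5} of 1000: slippage {slippage(1000.0, 1000.0, size):.2%}")
```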

The challenge for market makers is to create strategies that account for these computational limitations, often by widening spreads or reducing liquidity to compensate for the latency between off-chain calculation and on-chain execution.
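A hypothetical quoting rule shows why latency translates into wider spreads. If quotes are computed off-chain but can only be acted on after a delay t_lat, the option’s value may move by roughly |delta|·S·σ·√t_lat (one standard deviation of the underlying over the latency window, to first order), so a market maker might add a multiple `k` of that move to a base half-spread. The rule and all of its parameters are illustrative assumptions, not a documented market-maker strategy.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def quoted_half_spread(spot: float, annual_vol: float, option_delta: float,
                       latency_seconds: float, base_half_spread: float, k: float = 1.0) -> float:
    """Hypothetical half-spread: a base spread plus k one-sigma option moves over the latency."""
    if latency_seconds < 0:
        raise ValueError("latency_seconds must be non-negative")
    # One-standard-deviation move of the underlying over the latency window.
    underlying_move = spot * annual_vol * math.sqrt(latency_seconds / SECONDS_PER_YEAR)
    # First-order translation into option value via delta.
    return base_half_spread + k * abs(option_delta) * underlying_move


for latency in (0.0, 12.0, 48.0):
    print(latency, quoted_half_spread(2000.0, 0.8, 0.5, latency, base_half_spread=0.05))
```

Quadrupling the latency doubles the added spread, because the one-standard-deviation move scales with the square root of time.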

Evolution

The evolution of computational complexity in decentralized options tracks the progression of blockchain scalability itself. Early iterations of options protocols were highly constrained, limited to simple European options and relying on rudimentary pricing models.

The focus was on proving the concept of on-chain derivatives rather than achieving high capital efficiency or complex risk management. The advent of Layer 2 solutions and specialized sidechains for derivatives changed this dynamic significantly. The development of rollups, particularly those leveraging zero-knowledge technology, has opened up new possibilities.

By moving computation off-chain and using cryptographic proofs for verification, protocols can now execute complex calculations at a fraction of the cost. This allows for the implementation of more sophisticated pricing models and risk engines. This shift represents a move from “trustless but expensive” to “trustless and efficient.”
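The economics can be sketched with a simple cost model. Assume, hypothetically, that recomputing a pricing routine on-chain costs `compute_cost` per trade, while a rollup pays a fixed `verify_cost` to check one proof for a whole batch plus `data_cost` per trade for posting data. The per-trade cost of the batched path falls as the batch grows, and it beats direct recomputation once the batch is larger than verify_cost / (compute_cost − data_cost). All cost figures are placeholders in arbitrary gas units, not measurements.

```python
def batched_cost_per_trade(verify_cost: int, data_cost: int, batch_size: int) -> float:
    """Per-trade cost when one proof verification is shared across a batch."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return verify_cost / batch_size + data_cost


def break_even_batch_size(verify_cost: int, compute_cost: int, data_cost: int) -> int:
    """Smallest batch size for which batching is strictly cheaper per trade."""
    if compute_cost <= data_cost:
        raise ValueError("batching never pays off if posting data costs as much as recomputing")
    return verify_cost // (compute_cost - data_cost) + 1


n = break_even_batch_size(verify_cost=1000, compute_cost=50, data_cost=5)
print(n, batched_cost_per_trade(1000, 5, n))
```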

| Era of Options Protocol Design | Key Computational Constraints | Risk Management Approach | Financial Products Offered |
|---|---|---|---|
| Early DeFi (2019-2021) | High gas costs, low block gas limits, simple EVM operations | Off-chain or simplified on-chain pricing, high over-collateralization requirements | Simple European options, covered calls/puts, basic fixed-rate products |
| Scalability Era (2022-2024) | Layer 2 rollups reduce gas costs, but data availability remains a bottleneck | Hybrid models, off-chain keepers, introduction of on-chain Greeks calculation via specialized AMMs | American options, structured products, more capital-efficient strategies |
| ZK-EVM Era (2025+) | Low computational cost for verification via ZK-proofs, enabling complex on-chain logic | On-chain verification of off-chain pricing, advanced risk engines, real-time liquidation mechanisms | Exotic options, complex volatility products, highly customized strategies |

The evolution reflects a growing understanding that financial engineering in a decentralized context requires a new set of architectural principles. It is not sufficient to simply replicate traditional models; instead, the system must be redesigned from first principles to optimize for computational efficiency. The focus has shifted from simple tokenized derivatives to a more sophisticated approach where protocols actively manage computational complexity to offer competitive pricing and better risk management.

Horizon

Looking ahead, the horizon for computational complexity in decentralized options is defined by the full realization of zero-knowledge technology and advanced Layer 2 architectures. The goal is to create a fully on-chain risk engine where complex pricing models can be executed and verified without prohibitive cost. This will unlock a new generation of derivative products previously impossible in a decentralized environment.

The ability to perform complex calculations privately and efficiently using ZK-proofs will allow protocols to offer exotic options with path-dependent payoffs, accurately priced and managed on-chain. This will reduce reliance on centralized market makers for pricing and liquidity provision. The next generation of protocols will move beyond simply verifying off-chain calculations; they will execute sophisticated risk management algorithms directly within the Layer 2 environment, providing real-time risk calculations for liquidity providers and traders.

The future of decentralized derivatives relies on cryptographic solutions that allow for complex calculations without sacrificing trustlessness or economic viability.

The challenge of data availability remains. Even with efficient computation, the data required for real-time risk calculations must be available to the protocol. This includes real-time volatility data and price feeds. The integration of high-throughput data streams with ZK-EVMs will be essential to enable a truly robust and liquid decentralized options market. This integration will create a system where computational complexity is no longer a constraint on financial innovation, but a tool used to enhance security and efficiency. The end result is a market structure that offers the precision of traditional finance with the transparency and resilience of decentralization.
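At the contract boundary, data availability often reduces to a freshness check on each input. The hypothetical guard below refuses to use a price or volatility reading that is older than `max_age_seconds`, timestamped in the future, or published too far apart from its partner reading. The `FeedReading` type and both thresholds are placeholders for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedReading:
    value: float
    timestamp: int  # unix seconds at which the reading was published


def usable_inputs(price: FeedReading, vol: FeedReading, now: int,
                  max_age_seconds: int = 60, max_skew_seconds: int = 15) -> bool:
    """Return True only if both readings are fresh, not from the future, and mutually aligned."""
    readings = (price, vol)
    in_future = any(r.timestamp > now for r in readings)
    fresh = all(now - r.timestamp <= max_age_seconds for r in readings)
    aligned = abs(price.timestamp - vol.timestamp) <= max_skew_seconds
    return fresh and aligned and not in_future


print(usable_inputs(FeedReading(2000.0, 1_000), FeedReading(0.8, 995), now=1_030))  # True
print(usable_inputs(FeedReading(2000.0, 1_000), FeedReading(0.8, 995), now=1_100))  # False
```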
