Essence

Blockchain Throughput Limits define the maximum transactional capacity of a decentralized ledger within a specified time interval. This metric dictates the ceiling for asset settlement, derivative execution, and market activity on-chain. When a protocol reaches this saturation point, network congestion manifests as increased latency and escalating gas fees, creating a direct drag on the efficiency of financial applications.

The transactional ceiling of a network serves as the fundamental constraint on the velocity of capital and the viability of high-frequency decentralized financial strategies.

Financial systems rely on the predictability of settlement. In the context of Blockchain Throughput Limits, the uncertainty participants face is not merely a function of asset price volatility; it is also a consequence of network congestion. If a protocol cannot process order flow during periods of high market stress, participants face significant liquidation risk and slippage, effectively rendering sophisticated hedging instruments unusable when they are needed most.

Origin

The genesis of Blockchain Throughput Limits traces back to the architectural choices of early distributed ledgers, where security and decentralization were prioritized over rapid transaction processing.

Satoshi Nakamoto introduced a block size limit to Bitcoin to deter spam and keep blocks small enough to propagate quickly across the network, inadvertently setting a hard cap on system throughput.

- Genesis Block Constraints established the initial parameters for block frequency and data size.

- Security-Decentralization Tradeoffs forced developers to accept slower finality to maintain network integrity.

- Scalability Trilemma popularized the recognition that throughput, security, and decentralization exist in a state of tension.

This structural rigidity necessitated the development of secondary layers and alternative consensus mechanisms. Early crypto finance participants operated under the assumption that network capacity would expand linearly with demand, a premise that collapsed as decentralized exchange volumes surged. The subsequent realization that Blockchain Throughput Limits are a hard engineering constraint rather than a temporary hurdle shifted the focus of derivative design toward off-chain matching and state channels.

Theory

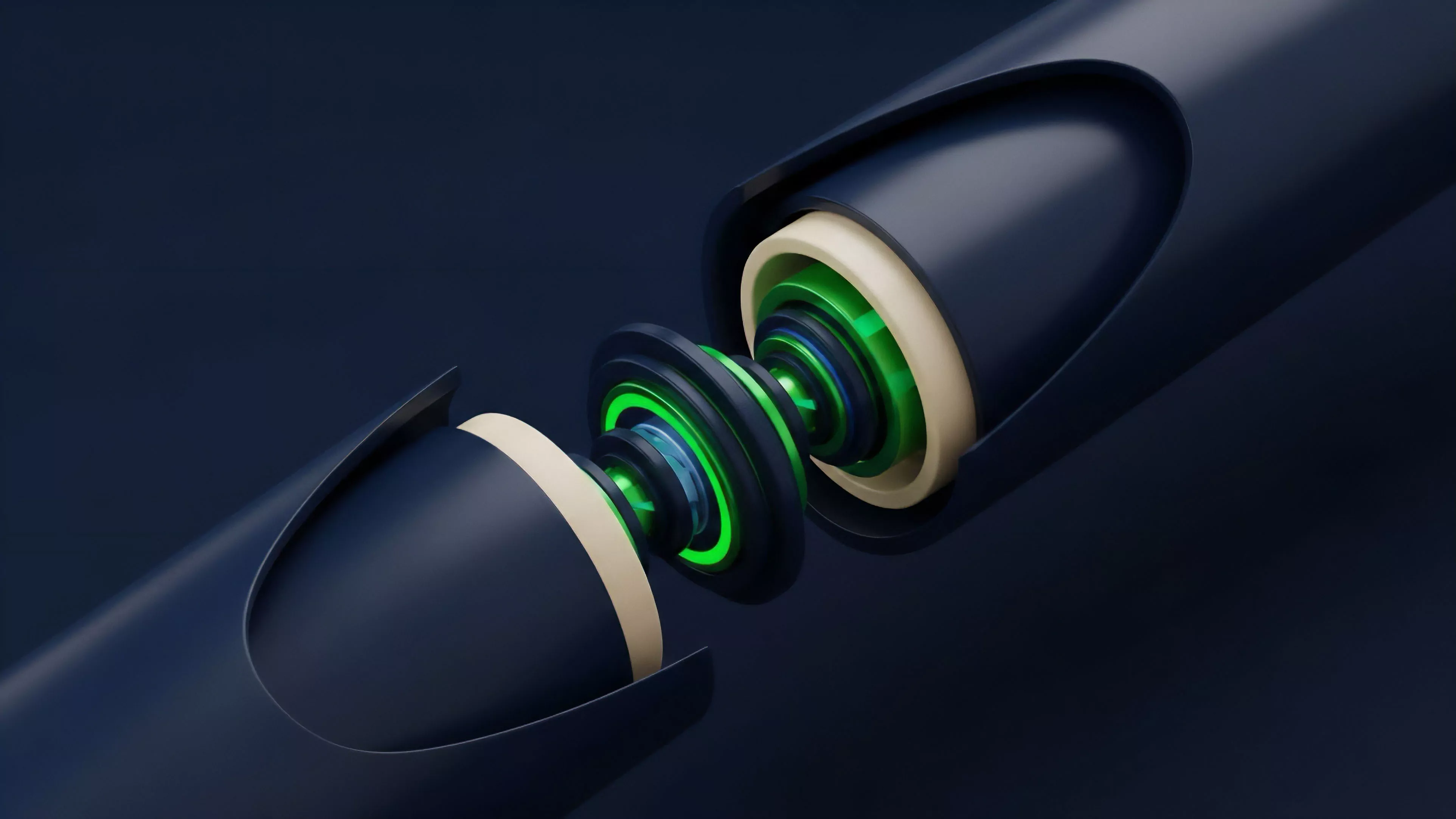

The mechanics of Blockchain Throughput Limits operate through the interplay of block time, gas limits, and state growth.

Each block is a finite container for computation and data storage. When aggregate demand for state transitions exceeds the block gas limit, the network must ration block space by price, typically through a fee auction in which users bid for inclusion.
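The rationing step can be sketched as a greedy block builder that fills the gas limit with the highest bids first. This is a minimal illustration, not any client's actual algorithm: `PendingTx`, `build_block`, and all gas and fee figures are hypothetical, and base fees, nonce ordering, and MEV are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    gas: int          # gas the transaction consumes
    fee_per_gas: int  # bid, in arbitrary fee units per unit of gas


def build_block(mempool: list[PendingTx], block_gas_limit: int) -> list[PendingTx]:
    """Greedily include the highest bids until the block gas limit is reached.

    Simplified model (hypothetical): a transaction that does not fit in the
    remaining gas is skipped and the next-highest bid is tried.
    """
    included: list[PendingTx] = []
    gas_used = 0
    # Highest fee per gas first; tx_id breaks ties deterministically.
    for tx in sorted(mempool, key=lambda t: (-t.fee_per_gas, t.tx_id)):
        if gas_used + tx.gas <= block_gas_limit:
            included.append(tx)
            gas_used += tx.gas
    return included


mempool = [
    PendingTx("liquidation", 400_000, 90),
    PendingTx("hedge", 300_000, 60),
    PendingTx("swap", 500_000, 40),
    PendingTx("transfer", 21_000, 10),
]
block = build_block(mempool, block_gas_limit=1_000_000)
print([tx.tx_id for tx in block])  # ['liquidation', 'hedge', 'transfer']
```

In this toy mempool the swap is valid but priced out: once the two highest bids consume 700,000 gas, its 500,000 gas no longer fits, while the small transfer still does.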

| Metric | Systemic Impact |
|---|---|
| Block Gas Limit | Defines maximum computational capacity per block |
| Transaction Finality | Determines time to irrevocable settlement |
| Network Latency | Impacts real-time derivative pricing updates |
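The throughput ceiling implied by these parameters is simple arithmetic: whole transactions per block divided by block time. The sketch below uses illustrative figures only: 1 MB per 600-second block is Bitcoin's original limit and target interval, the 250-byte average transaction size is an assumption, and 30 million gas per 12-second slot with 21,000 gas for a plain transfer reflects Ethereum mainnet parameters that held for several years. Real limits change over time, and the helper names are hypothetical.

```python
def max_tps(capacity_per_block: float, cost_per_tx: float, block_time_s: float) -> float:
    """Upper bound on transactions per second for a fixed per-block budget.

    capacity_per_block and cost_per_tx must use the same unit (bytes or gas).
    """
    txs_per_block = capacity_per_block // cost_per_tx  # only whole transactions fit
    return txs_per_block / block_time_s


def probabilistic_settlement_s(confirmations: int, block_time_s: float) -> float:
    """Average wait for a confirmation depth; a rule of thumb for chains without explicit finality."""
    return confirmations * block_time_s


byte_limited = max_tps(1_000_000, 250, 600)      # 1 MB blocks, ~250-byte txs, 600 s blocks
gas_limited = max_tps(30_000_000, 21_000, 12)    # 30M gas, 21,000-gas transfers, 12 s slots
settlement = probabilistic_settlement_s(6, 600)  # six confirmations at 600 s per block

print(f"{byte_limited:.2f} TPS")  # 6.67 TPS
print(f"{gas_limited:.2f} TPS")   # 119.00 TPS
print(settlement)                 # 3600
```

These are ceilings for the cheapest possible transaction; a derivative trade that consumes ten times the gas of a transfer cuts the gas-limited figure by roughly a factor of ten.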

From a quantitative perspective, the throughput limit acts as a bottleneck on the inputs to an option's Greeks. Delta, Gamma, and Vega calculations rely on accurate, low-latency price feeds. If the underlying protocol suffers from throughput saturation, feed updates become stale, leading to mispricing and arbitrage opportunities that drain liquidity from the system.
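To make the stale-feed effect concrete, the sketch below computes a call option's delta from a price the congested oracle last published and from the price the market actually trades at. Black-Scholes is used only as a familiar pricing model, not as the model any particular protocol uses; the strike, volatility, expiry, prices, and position size are all hypothetical.

```python
from math import erf, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float, t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes delta of a European call on a non-dividend-paying asset."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol**2) * t_years) / (vol * sqrt(t_years))
    return norm_cdf(d1)


strike, vol, t = 2_000.0, 0.8, 7 / 365  # hypothetical one-week option, 80% vol
stale_spot = 2_000.0  # last price the congested oracle managed to publish
live_spot = 1_850.0   # where the market actually trades

stale_delta = bs_call_delta(stale_spot, strike, vol, t)
live_delta = bs_call_delta(live_spot, strike, vol, t)
contracts = 100
# Extra units of the underlying a delta hedger would hold if it trusted the stale price.
hedge_gap = contracts * (stale_delta - live_delta)
print(f"stale delta {stale_delta:.3f}, live delta {live_delta:.3f}, gap {hedge_gap:.1f}")
```

The gap is a hedge error created purely by latency: the model is unchanged, only its input is out of date.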

The physical reality of code execution on a decentralized virtual machine remains constant: it requires compute cycles. While we theorize about infinite scaling, the actual limit is governed by the propagation speed of data across nodes. Physics reminds us that information cannot travel faster than light, and in the digital realm, the speed of consensus is bounded by the slowest validators whose votes are needed to reach agreement.
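Both bounds can be estimated directly. The sketch below computes the speed-of-light floor for a message over optical fiber (assuming signals travel at roughly two-thirds of c) and, assuming a BFT-style protocol that waits for a quorum of floor(2n/3) + 1 validators, the time until that quorum has responded. Under that assumption the binding "slowest participant" is the slowest member of the fastest quorum, not necessarily the slowest node overall. Function names and latency values are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def propagation_floor_ms(distance_km: float, medium_fraction: float = 2 / 3) -> float:
    """Lowest possible one-way delay over distance_km.

    medium_fraction approximates signal speed in optical fiber relative to c.
    """
    return distance_km / (SPEED_OF_LIGHT_KM_S * medium_fraction) * 1_000


def quorum_latency_ms(validator_latencies_ms: list[float]) -> float:
    """Time until more than two-thirds of validators have responded.

    Assumes a BFT-style protocol with quorum size floor(2n/3) + 1.
    """
    n = len(validator_latencies_ms)
    quorum = 2 * n // 3 + 1
    return sorted(validator_latencies_ms)[quorum - 1]


print(f"{propagation_floor_ms(10_000):.1f} ms")  # 50.0 ms over 10,000 km of fiber
print(quorum_latency_ms([20, 35, 50, 400]))      # 50: the 400 ms node is not needed
```

No protocol optimization can push a single round trip between distant validators below the first figure, which is why consensus rounds on globally distributed validator sets are measured in tens to hundreds of milliseconds.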

Systemic throughput bottlenecks transform predictable financial transactions into stochastic processes governed by congestion-induced latency.
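Congestion-induced latency can be illustrated with a simple queue recursion: each block clears at most a fixed number of transactions, and anything beyond that carries over to the next block. This sketch assumes first-in-first-out ordering and a fixed per-block capacity; real mempools reorder by fee, and the arrival counts are hypothetical.

```python
def backlog_per_block(arrivals: list[int], capacity: int) -> list[int]:
    """Pending transactions left after each block (Lindley-style recursion).

    backlog[t] = max(0, backlog[t-1] + arrivals[t] - capacity)
    """
    backlog, history = 0, []
    for arriving in arrivals:
        backlog = max(0, backlog + arriving - capacity)
        history.append(backlog)
    return history


# Steady demand, then a three-block burst during a market sell-off.
arrivals = [80, 90, 250, 300, 260, 90, 80, 70]
print(backlog_per_block(arrivals, capacity=100))  # [0, 0, 150, 350, 510, 500, 480, 450]
```

The burst lasts three blocks, but because demand afterwards stays only slightly below capacity, the backlog drains by just 10 to 30 transactions per block; a transaction queued behind 510 others waits about five extra blocks under FIFO ordering.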

Approach

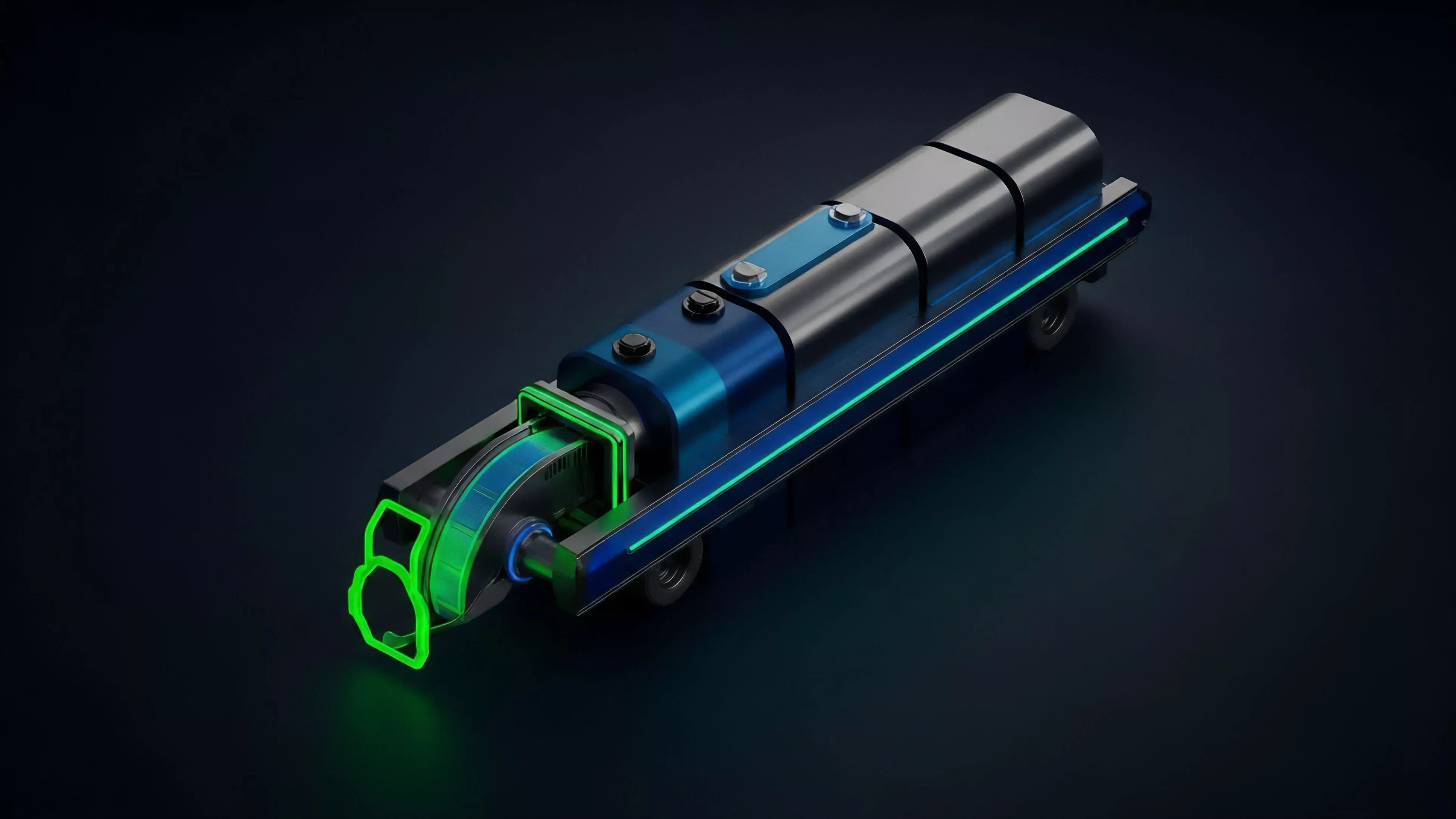

Current strategies for managing Blockchain Throughput Limits involve moving high-frequency derivative operations away from the main settlement layer. Protocols now use rollups and specialized app-chains to decouple order matching from global consensus. By aggregating thousands of transactions into a single batch that is settled on the base layer with a validity or fraud proof, rollups circumvent the capacity limits of the primary ledger while inheriting its security guarantees.
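The economics of aggregation come from amortization: the fixed cost of posting and verifying a batch is shared by every transaction in it, while each transaction still pays for its own published data. The figures below (500,000 gas of fixed overhead, 200 gas of data per transaction) are hypothetical; real costs depend on the proof system, data compression, and how the base layer prices data.

```python
def l1_gas_per_rollup_tx(batch_size: int, fixed_batch_gas: int, data_gas_per_tx: int) -> float:
    """Base-layer gas attributable to one rollup transaction (hypothetical cost model).

    fixed_batch_gas: one-off cost to post and verify a batch (e.g. a proof check).
    data_gas_per_tx: cost of publishing each transaction's compressed data.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return fixed_batch_gas / batch_size + data_gas_per_tx


for n in (1, 100, 1_000, 10_000):
    print(n, l1_gas_per_rollup_tx(n, fixed_batch_gas=500_000, data_gas_per_tx=200))
# 1 500200.0
# 100 5200.0
# 1000 700.0
# 10000 250.0
```

As batches grow, the per-transaction cost approaches the data cost floor, which is why data availability, rather than execution, becomes the binding constraint for rollups.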

- Rollup Architecture bundles transactions to reduce the load on the main chain.

- Off-Chain Matching Engines enable high-speed order book updates without immediate on-chain settlement.

- State Channel Implementation allows participants to transact repeatedly without triggering on-chain events for every move.
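The state channel pattern can be sketched as a two-party ledger where only the opening and closing transactions reach the chain, no matter how many updates happen in between. This is a deliberately simplified model: `PaymentChannel` is hypothetical, and real channels require both parties' signatures on every state and a dispute window on close, both of which are omitted here.

```python
from dataclasses import dataclass


@dataclass
class PaymentChannel:
    """Two-party channel: only open and close touch the chain (simplified)."""

    balances: dict[str, int]
    nonce: int = 0
    on_chain_ops: int = 1  # the funding (open) transaction

    def transfer(self, sender: str, receiver: str, amount: int) -> None:
        """Apply an off-chain update; no on-chain transaction is created."""
        if amount <= 0 or self.balances[sender] < amount:
            raise ValueError("invalid transfer")
        self.balances[sender] -= amount
        self.balances[receiver] += amount
        self.nonce += 1  # a higher nonce supersedes older states in a dispute

    def close(self) -> dict[str, int]:
        """Settle the latest state on-chain and return the final balances."""
        self.on_chain_ops += 1
        return dict(self.balances)


channel = PaymentChannel({"alice": 1_000, "bob": 1_000})
for _ in range(500):
    channel.transfer("alice", "bob", 1)
final = channel.close()
print(final, channel.nonce, channel.on_chain_ops)  # {'alice': 500, 'bob': 1500} 500 2
```

Five hundred updates cost two on-chain operations; the trade-off is that the channel only serves its fixed set of participants and locks their collateral while open.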

Evolution

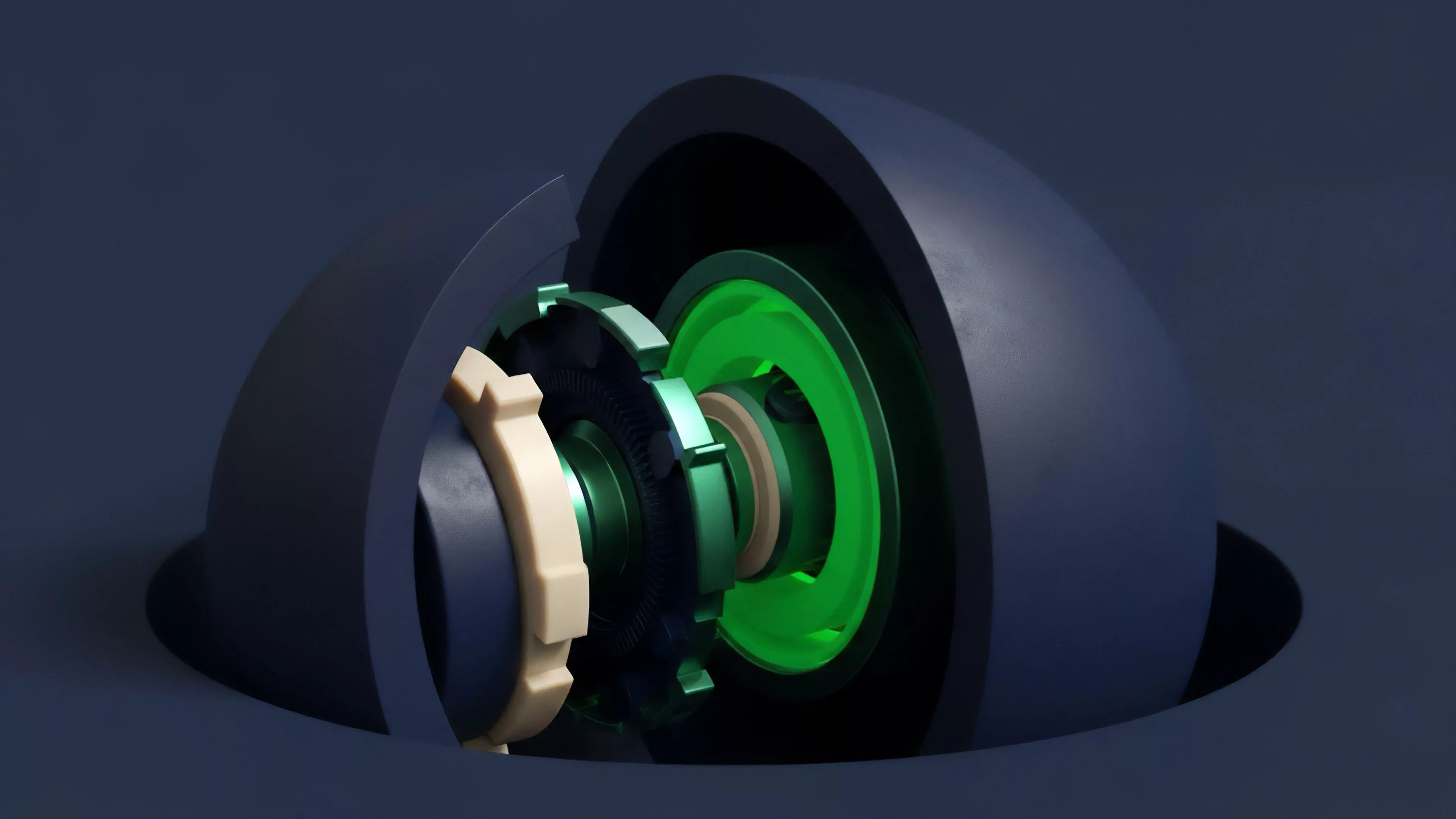

The evolution of Blockchain Throughput Limits has moved from simple block size increases to sophisticated modular designs. Early attempts at scaling focused on increasing block frequency or size, which led to centralization as only high-performance hardware could keep pace. The industry shifted toward modularity, where execution, data availability, and settlement are separated into distinct layers.
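The centralization pressure from larger or faster blocks follows from bandwidth arithmetic: every full node must download each block, and relaying nodes must also upload it to peers, within one block interval. The sketch ignores compression, compact-block relay, and protocol overhead; the first example uses Bitcoin's original 1 MB per 600 seconds, while the 100 MB per 2 seconds chain and the relay fan-out of 8 peers are hypothetical.

```python
def required_mbps(block_bytes: int, block_time_s: float, relay_peers: int = 1) -> float:
    """Sustained bandwidth (Mbit/s) to relay every block to relay_peers peers in time.

    Simplified: ignores compression, compact-block relay, and protocol overhead.
    """
    return block_bytes * 8 * relay_peers / block_time_s / 1_000_000


print(required_mbps(1_000_000, 600))                 # about 0.013 Mbit/s
print(required_mbps(100_000_000, 2, relay_peers=8))  # 3200.0 Mbit/s of upload
```

A requirement in the gigabit range excludes home connections, which is the mechanism behind the claim that scaling by block size alone pushes validation toward data centers.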

Modular design represents the transition from monolithic ledger constraints to a tiered architecture optimized for specialized financial workloads.

This shift reflects a maturation in understanding how to build resilient systems. We no longer expect a single protocol to handle all global financial activity. Instead, we architect interconnected networks where throughput is scaled through horizontal distribution.

The risk has evolved from simple congestion to complex cross-chain contagion, where a failure in one bridge or execution layer propagates across the entire derivative ecosystem. One might observe that the history of financial technology is a repeated cycle of moving from centralized clearing houses to distributed networks and back to specialized, high-performance hubs. The current movement toward modularity is merely the latest iteration of this recursive search for the optimal balance between trustless settlement and performance.

Horizon

Future developments in Blockchain Throughput Limits will center on hardware-accelerated zero-knowledge proofs and asynchronous execution models.

As protocols adopt parallel transaction processing, the focus will shift from raw throughput to the latency of state synchronization. The ultimate goal is a financial environment where the underlying ledger is invisible, providing instantaneous settlement regardless of global transaction volume.

| Innovation | Expected Outcome |
|---|---|
| Parallel Execution | Increased throughput via concurrent state updates |
| ZK Proof Acceleration | Reduced cost and time for verifiable settlement |
| Asynchronous Consensus | Elimination of global blocking during validation |
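Parallel execution depends on knowing which transactions touch disjoint state. Some designs require transactions to declare the state they access up front; others detect conflicts optimistically during execution. This sketch assumes the former and groups transactions into "waves" that can run concurrently; `Tx`, `schedule_waves`, and the account names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_id: str
    touches: frozenset[str]  # declared state keys read or written (e.g. accounts)


def schedule_waves(txs: list[Tx]) -> list[list[str]]:
    """Group transactions into waves whose members touch disjoint state.

    Transactions in one wave can execute concurrently; waves run in order.
    Each transaction goes into the wave right after the last wave it conflicts
    with, so any two conflicting transactions keep their original order.
    """
    waves: list[list[Tx]] = []
    for tx in txs:
        target = 0
        for i, wave in enumerate(waves):
            if any(tx.touches & other.touches for other in wave):
                target = i + 1
        if target == len(waves):
            waves.append([])
        waves[target].append(tx)
    return [[tx.tx_id for tx in wave] for wave in waves]


txs = [
    Tx("a", frozenset({"alice", "bob"})),
    Tx("b", frozenset({"carol", "dave"})),
    Tx("c", frozenset({"bob", "erin"})),  # conflicts with "a" on "bob"
    Tx("d", frozenset({"frank"})),
]
print(schedule_waves(txs))  # [['a', 'b', 'd'], ['c']]
```

Four transactions finish in two sequential steps instead of four; the speedup shrinks as more transactions contend for the same hot state, such as a popular order book or liquidity pool.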

The strategic imperative for market participants is to build systems that are throughput-agnostic. Derivative protocols must incorporate adaptive fee mechanisms and robust circuit breakers to handle periods of network saturation. As we move toward a future of high-frequency decentralized finance, the ability to operate effectively under strict capacity constraints will differentiate the resilient protocols from those that fail during market volatility.
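One documented adaptive fee mechanism is Ethereum's EIP-1559 base-fee rule, which raises or lowers the fee by at most one-eighth per block depending on how far gas usage deviates from a target; a derivative protocol could apply the same feedback idea to its own fees. The update below follows the EIP-1559 integer formula, while `should_pause` is a hypothetical circuit breaker with an illustrative staleness threshold, not drawn from any specific protocol.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # EIP-1559: at most 12.5% change per block


def next_base_fee(base_fee: int, gas_used: int, gas_target: int) -> int:
    """EIP-1559-style base fee update, using integer arithmetic as in the spec."""
    if gas_used == gas_target:
        return base_fee
    if gas_used > gas_target:
        delta = base_fee * (gas_used - gas_target) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
        return base_fee + max(delta, 1)
    delta = base_fee * (gas_target - gas_used) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return base_fee - delta


def should_pause(last_oracle_update_s: float, now_s: float, max_staleness_s: float) -> bool:
    """Hypothetical circuit breaker: pause liquidations when the price feed is stale."""
    return now_s - last_oracle_update_s > max_staleness_s


fee = 100_000_000_000  # 100 gwei, expressed in wei
for _ in range(3):     # three consecutive full blocks (gas used = 2 x target)
    fee = next_base_fee(fee, gas_used=30_000_000, gas_target=15_000_000)
print(fee)  # 142382812500
print(should_pause(last_oracle_update_s=1_000, now_s=1_090, max_staleness_s=60))  # True
```

The fee rule prices congestion gradually rather than letting bids spike, while the breaker addresses the complementary risk: acting on stale prices during saturation.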