Essence

Blockchain Performance Optimization constitutes the systematic refinement of decentralized infrastructure to reduce latency, increase throughput, and minimize computational overhead for derivative execution. It represents the transition from monolithic, congestion-prone networks toward high-frequency capable environments where financial settlement occurs at speeds competitive with traditional centralized exchanges. The core objective involves reducing the time-to-finality for complex option pricing updates and liquidation triggers, which directly impacts the capital efficiency of liquidity providers and market makers.

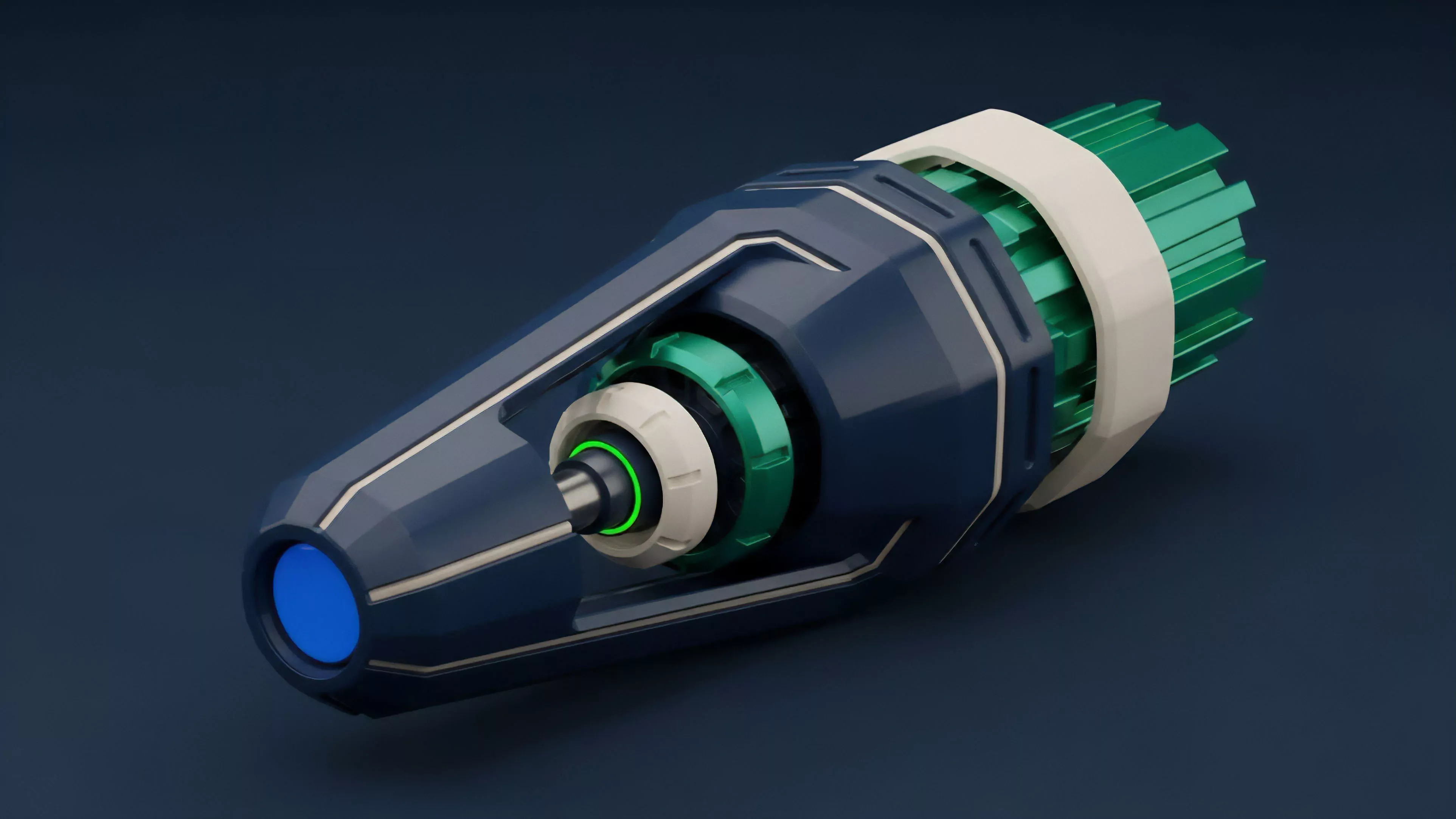

Blockchain Performance Optimization minimizes the temporal gap between market data ingestion and smart contract settlement to ensure robust derivative pricing.

At the architectural level, this optimization involves parallelizing transaction execution, implementing off-chain state channels, and utilizing specialized consensus mechanisms designed for rapid verification. By decoupling the consensus layer from the execution layer, developers create environments where the computational cost of calculating Greeks for thousands of open positions does not paralyze the underlying network. This structural separation is the primary mechanism for maintaining systemic stability during periods of extreme market volatility.

Origin

The necessity for Blockchain Performance Optimization arose from the fundamental limitations of first-generation distributed ledgers.

Early smart contract platforms operated under a single-threaded execution model in which every node processed every transaction, creating a bottleneck that made high-frequency derivative trading impractical. During periods of high network activity, gas fees rose sharply, pricing out the arbitrageurs who maintain price pegs and the liquidators who maintain the solvency of derivative protocols.

- Transaction Congestion forced the industry to move beyond basic layer-one limitations.

- Latency Inefficiency necessitated the development of asynchronous processing models.

- Capital Inefficiency demanded faster settlement cycles to reduce collateral lock-up requirements.

This evolution was driven by the realization that financial primitives require deterministic performance. As decentralized finance grew, the disparity between the speed of traditional order books and the throughput of blockchain protocols became a systemic risk. Architects began focusing on modularity, splitting the responsibilities of data availability, execution, and settlement to ease the constraints of the blockchain trilemma (the difficulty of achieving security, decentralization, and scalability at once), in particular the tension between decentralization and high throughput.

Theory

The theoretical framework for Blockchain Performance Optimization rests on the principle of minimizing the total cost of state transitions.

In the context of crypto options, this involves optimizing the mathematical operations required to recompute option values, collateral ratios, and risk sensitivities. Because smart contracts execute code in a deterministic virtual machine, the gas cost of these operations is directly tied to the algorithmic complexity of the underlying pricing models, such as Black-Scholes or binomial tree approximations.
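To illustrate how pricing-model complexity translates into metered cost, the sketch below prices the same European call two ways: the closed-form Black-Scholes formula, which costs a fixed handful of operations per quote, and a Cox-Ross-Rubinstein binomial tree, whose backward induction performs N(N+1)/2 node updates for N steps. Counting node updates is only a stand-in for gas (real gas costs depend on the VM's opcode pricing), and the function names and parameters are illustrative.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(S: float, K: float, r: float, sigma: float, T: float) -> float:
    """Closed-form Black-Scholes call: a fixed number of operations per quote, i.e. O(1)."""
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


def crr_call(S: float, K: float, r: float, sigma: float, T: float, steps: int) -> tuple:
    """Cox-Ross-Rubinstein binomial call; also returns how many node updates it performed."""
    dt = T / steps
    u = math.exp(sigma * math.sqrt(dt))
    d = 1.0 / u
    p = (math.exp(r * dt) - d) / (u - d)  # risk-neutral probability of an up-move
    disc = math.exp(-r * dt)
    # Payoffs at expiry for j up-moves out of `steps`.
    values = [max(S * u ** j * d ** (steps - j) - K, 0.0) for j in range(steps + 1)]
    node_updates = 0
    # Backward induction: step i has i + 1 nodes, so total work is steps * (steps + 1) / 2.
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            values[j] = disc * (p * values[j + 1] + (1.0 - p) * values[j])
            node_updates += 1
    return values[0], node_updates


if __name__ == "__main__":
    print("Black-Scholes:", round(bs_call(100, 100, 0.05, 0.2, 1.0), 4))
    for steps in (50, 100, 200):
        price, work = crr_call(100, 100, 0.05, 0.2, 1.0, steps)
        print(f"CRR steps={steps}: price={price:.4f}, node updates={work}")
```

Doubling `steps` roughly quadruples the work while the binomial price converges toward the closed form, which is one reason a metered VM favors closed-form or precomputed pricing over lattice methods.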

Algorithmic Efficiency

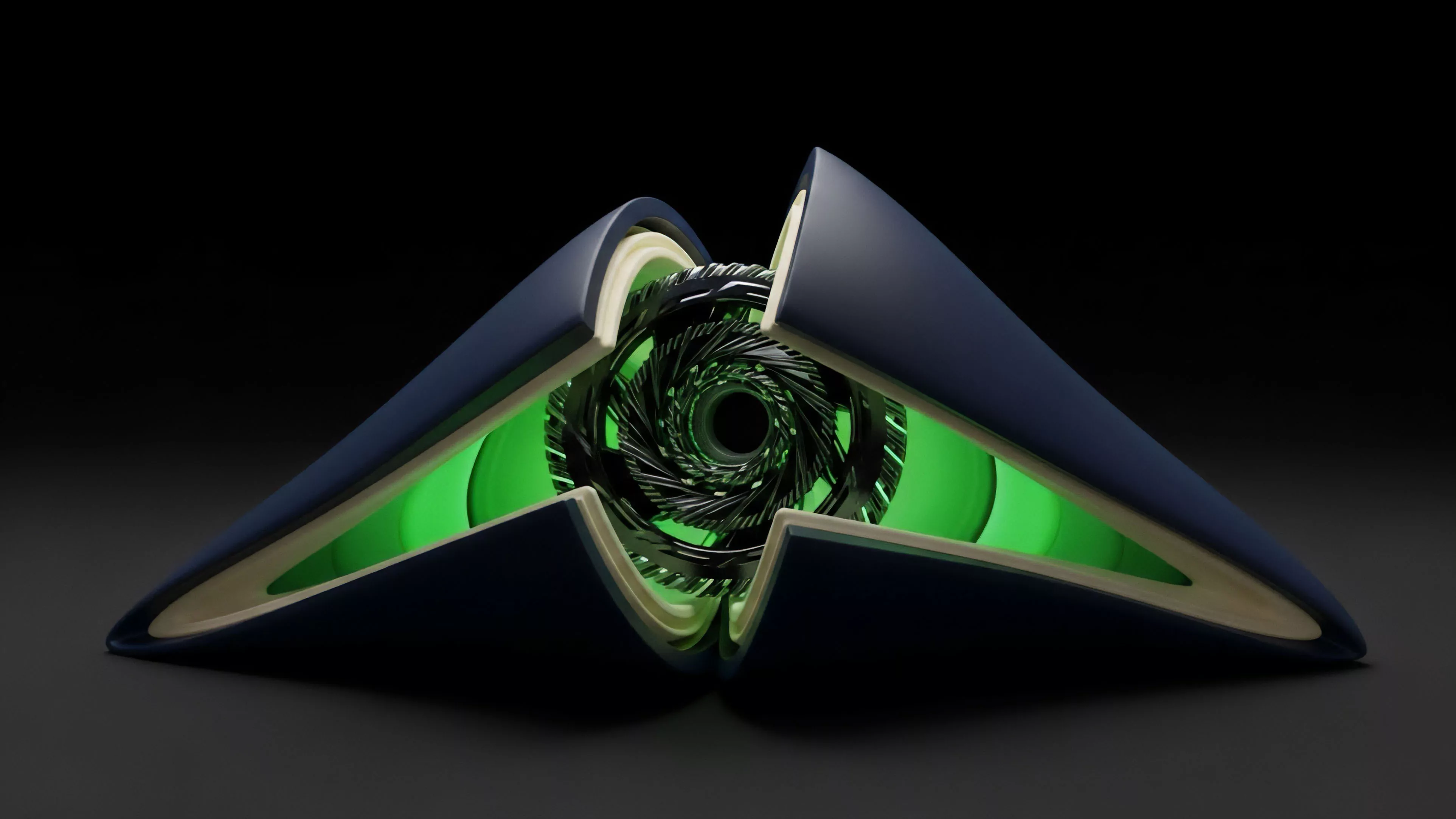

Optimizing these calculations requires moving away from heavy, on-chain computation toward pre-computed lookup tables or zero-knowledge proof verification. By shifting the intensive math off-chain and only verifying the result on-chain, protocols maintain trustlessness while achieving performance gains. The interaction between these off-chain solvers and the on-chain settlement engine is the most critical component of modern derivative design.
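The asymmetry between computing and verifying can be seen in a small, hypothetical example: an off-chain solver finds the Black-Scholes implied volatility by bisection (about 60 pricing calls), and a verifier accepts or rejects the submitted answer with a single pricing call. This is a plain "solve off-chain, re-check on-chain" pattern, not a zero-knowledge proof; ZK verification extends the same asymmetry to computations that cannot be cheaply re-run. The function names, bisection bounds, and tolerance are assumptions, and floating point is used only for readability (the EVM, for example, has no native floating point and would need fixed-point arithmetic).

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(S: float, K: float, r: float, sigma: float, T: float) -> float:
    """Closed-form Black-Scholes call price."""
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


def solve_implied_vol(price, S, K, r, T, lo=1e-4, hi=5.0, iterations=60):
    """Off-chain solver (hypothetical): bisection costing `iterations` pricing calls.

    Works because the call price increases monotonically with volatility.
    """
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if bs_call(S, K, r, mid, T) < price:
            lo = mid  # model price too low, so volatility must be higher
        else:
            hi = mid
    return 0.5 * (lo + hi)


def verify_implied_vol(claimed_sigma, price, S, K, r, T, tol=1e-6):
    """On-chain style check (hypothetical): one pricing call accepts or rejects the claim."""
    return abs(bs_call(S, K, r, claimed_sigma, T) - price) <= tol


if __name__ == "__main__":
    market_price = bs_call(100, 100, 0.05, 0.25, 1.0)
    sigma = solve_implied_vol(market_price, 100, 100, 0.05, 1.0)
    print(round(sigma, 6), verify_implied_vol(sigma, market_price, 100, 100, 0.05, 1.0))
```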

| Method | Latency Impact | Throughput Capacity |
|---|---|---|
| On-chain computation | High | Low |
| ZK-proof verification | Medium | High |
| Off-chain state channels | Ultra-low | Very high |

The optimization of derivative protocols hinges on the strategic migration of intensive mathematical workloads from the main consensus layer to verifiable off-chain execution environments.

This system functions as an adversarial arena. Participants compete to trigger liquidations or update positions first, meaning that even millisecond-level improvements in protocol latency provide a significant competitive advantage. The physics of the protocol (how quickly it can process a state update) dictates the maximum allowable leverage and the sensitivity of the risk engine to market shocks.
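The link between latency and leverage can be made concrete with a deliberately simple model, which is an assumption rather than a method from this article: if the maintenance margin must absorb a k-standard-deviation adverse move during the time it takes to liquidate a position, and price moves scale with the square root of time, then the required margin fraction is k · σ · √Δt and the maximum leverage is its reciprocal. The function names, the default k = 3, and the annualized volatility convention are illustrative.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def required_margin(sigma_annual: float, latency_s: float, k: float = 3.0) -> float:
    """Margin fraction covering a k-sigma adverse move during the liquidation delay.

    Hypothetical model: price moves scale with the square root of elapsed time
    (no jumps, no slippage, no oracle lag beyond `latency_s`).
    """
    return k * sigma_annual * math.sqrt(latency_s / SECONDS_PER_YEAR)


def max_leverage(sigma_annual: float, latency_s: float, k: float = 3.0) -> float:
    """Largest leverage whose margin still covers that move."""
    return 1.0 / required_margin(sigma_annual, latency_s, k)


if __name__ == "__main__":
    for latency in (60.0, 12.0, 1.0):
        print(f"latency {latency:>5}s -> max leverage {max_leverage(0.8, latency):.0f}x")
```

Because margin scales with √Δt in this model, cutting liquidation latency by a factor of four only doubles the supportable leverage; a production risk engine would typically add buffers for price jumps, liquidity, and oracle delay on top.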

Approach

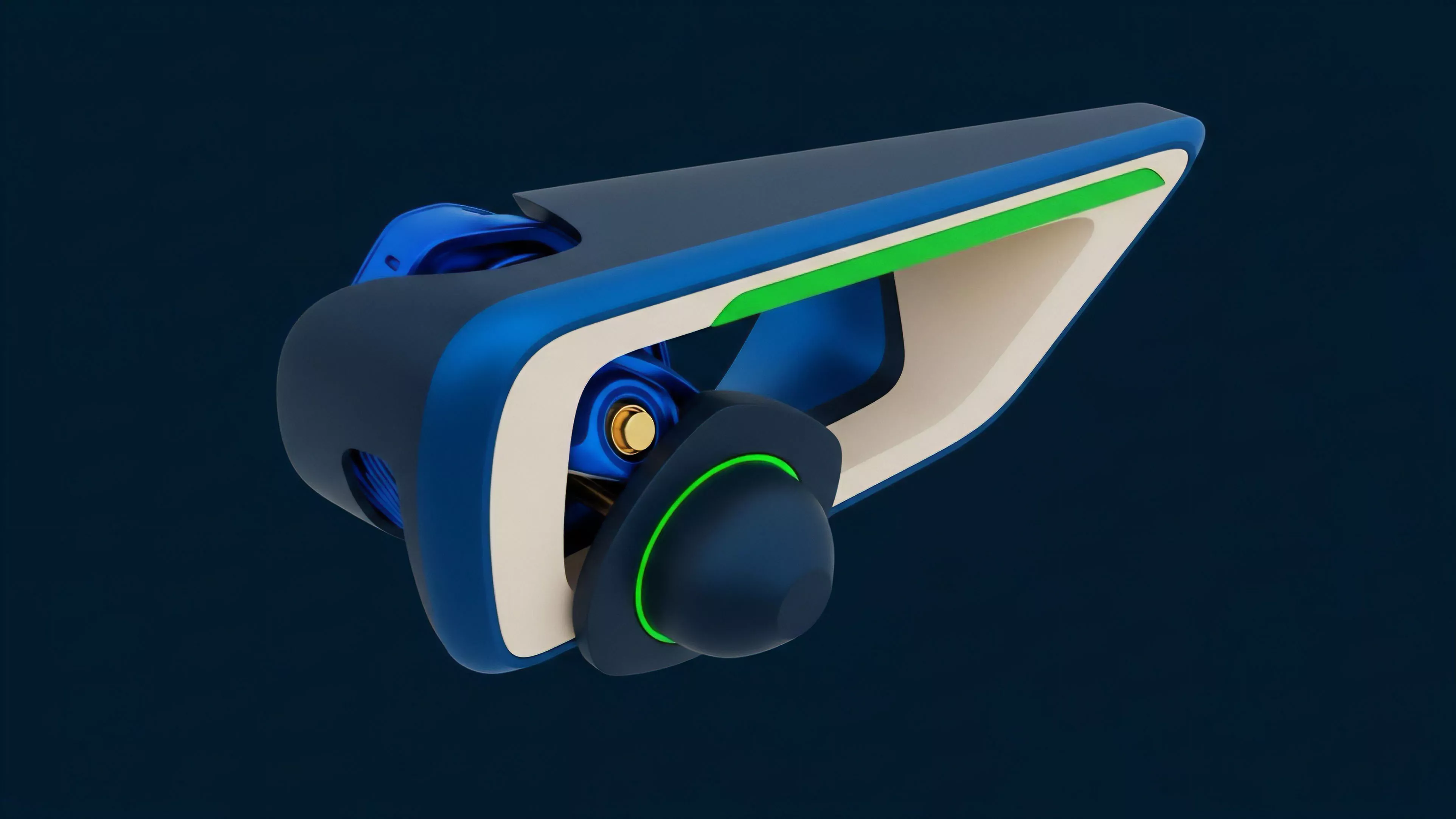

Current methodologies prioritize the construction of high-performance execution environments that function as modular extensions of the base layer.

These environments use specialized virtual machines designed to execute code faster than general-purpose platforms. Developers focus on reducing the number of state reads and writes, because these interactions with the global ledger are among the most expensive and slowest operations in any decentralized system.
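Reducing state writes often comes down to data layout. In EVM-style storage, state is organized in 32-byte slots, so packing several small option fields into one 256-bit word lets a new position be written as one slot instead of four. The sketch below uses a hypothetical bit layout: strike and size are assumed to be 18-decimal fixed-point integers and expiry a Unix timestamp; the field widths are illustrative, not a standard.

```python
# Hypothetical bit layout for one option record, lowest bits first.
LAYOUT = (
    ("is_call", 1),   # 1 = call, 0 = put
    ("expiry", 64),   # Unix timestamp
    ("size", 63),     # contract size, 18-decimal fixed point
    ("strike", 128),  # strike price, 18-decimal fixed point
)
WORD_BITS = 256
assert sum(bits for _, bits in LAYOUT) == WORD_BITS


def pack(fields: dict) -> int:
    """Pack all fields into a single 256-bit word (one storage slot instead of four)."""
    word, shift = 0, 0
    for name, bits in LAYOUT:
        value = int(fields[name])
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{name}={value} does not fit in {bits} bits")
        word |= value << shift
        shift += bits
    return word


def unpack(word: int) -> dict:
    """Recover each field by shifting and masking."""
    out, shift = {}, 0
    for name, bits in LAYOUT:
        out[name] = (word >> shift) & ((1 << bits) - 1)
        shift += bits
    return out


if __name__ == "__main__":
    option = {"is_call": 1, "expiry": 1_767_225_600, "size": 5 * 10**18, "strike": 3000 * 10**18}
    word = pack(option)
    print(hex(word))
    print(unpack(word) == option)
```

The range check in `pack` matters: silently truncating an oversized strike or size would corrupt a position, so the sketch rejects values that do not fit their allotted bits.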

Technical Implementation

The contemporary approach combines three complementary optimizations:

- Parallel Execution enables the simultaneous processing of non-conflicting transactions, significantly increasing the number of orders a protocol can handle per second.

- Data Compression reduces the storage footprint of option metadata, ensuring that nodes can propagate information rapidly across the network.

- Optimistic Settlement allows for near-instantaneous trade execution, with finality achieved after a short challenge window, providing the speed required for competitive derivative markets.
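Parallel execution depends on knowing which transactions touch which state. The sketch assumes each transaction declares its read and write sets up front (in the spirit of account-declaration designs such as Solana's, though the `Tx` type and `schedule` function here are hypothetical) and greedily groups transactions into batches whose members do not conflict, so each batch could run concurrently while preserving the result of serial execution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    """A transaction with declared state access (hypothetical format)."""
    tx_id: str
    reads: frozenset
    writes: frozenset


def conflicts(a: Tx, b: Tx) -> bool:
    """Two transactions conflict if either one writes state the other reads or writes."""
    return bool(a.writes & (b.reads | b.writes)) or bool(b.writes & a.reads)


def schedule(txs: list) -> list:
    """Greedy, order-preserving batching: each tx goes into the first batch after
    the last batch that contains a conflicting earlier tx."""
    batches = []
    for tx in txs:
        level = 0
        for i, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                level = i + 1
        if level == len(batches):
            batches.append([])
        batches[level].append(tx)
    return batches


if __name__ == "__main__":
    ETH = "oracle:ETH"
    txs = [
        Tx("t1", frozenset({ETH}), frozenset({"pos:alice"})),
        Tx("t2", frozenset({ETH}), frozenset({"pos:bob"})),
        Tx("t3", frozenset(), frozenset({ETH})),  # oracle price update
        Tx("t4", frozenset({ETH}), frozenset({"pos:alice"})),
    ]
    for n, batch in enumerate(schedule(txs)):
        print(f"batch {n}: {[tx.tx_id for tx in batch]}")
```

In this example the two position updates share only a read of the oracle, so they land in the same batch; the oracle update must wait for both, and Alice's second update must wait for the oracle update, giving three batches.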

This approach assumes the network is under continuous stress. Automated agents scan for price discrepancies around the clock, and the protocol must be resilient enough to absorb the resulting bursts without experiencing state bloat or excessive latency. The design choices made here, whether to prioritize absolute decentralization or raw speed, define the protocol’s position within the broader market.

Evolution

The progression of Blockchain Performance Optimization reflects a shift from primitive, slow-settlement architectures to sophisticated, high-speed execution engines.

Early protocols were limited by the base layer’s throughput, often resulting in stale pricing and high slippage for options traders. As the market matured, the focus transitioned to layer-two rollups and app-specific chains, which provide dedicated resources for financial activity. Sometimes I think we are just trying to rebuild the entire history of high-frequency trading infrastructure, but this time with public keys instead of private wires.

The evolution of these systems mirrors the transition from manual, floor-based trading to the electronic, algorithm-driven markets of the late twentieth century, albeit at an accelerated pace.

| Era | Focus | Primary Constraint |
|---|---|---|
| Foundational | Security | Throughput |
| Intermediate | Scalability | Latency |
| Advanced | Capital Efficiency | State Management |

Evolution in decentralized finance is characterized by the systematic migration of computational load toward specialized layers that preserve security while maximizing transactional velocity.

This journey has been defined by the trade-offs between different consensus mechanisms. Early models relied on proof-of-work, whose probabilistic finality required waiting for several block confirmations before a settlement could be trusted, making it inherently too slow for derivatives. The move to proof-of-stake and subsequent sharding or modular architectures has allowed for the throughput required to sustain complex derivative markets.
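The cost of probabilistic finality can be quantified with the attacker catch-up calculation from Section 11 of the Bitcoin whitepaper: a settlement is treated as safe only after z further blocks, where z is chosen so that an attacker holding a share q of hash power has a negligible chance of rewriting it. The sketch ports that calculation to Python; the 0.1% threshold and 10-minute block interval in the usage line are illustrative choices, not parameters from this article.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever overtakes a chain
    that is z blocks ahead (port of the calculation in the Bitcoin whitepaper)."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker eventually catches up with certainty
    lam = z * (q / p)  # expected attacker progress while the honest chain mines z blocks
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam)
        for i in range(1, k + 1):
            poisson *= lam / i  # Poisson probability that the attacker has mined k blocks
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


def confirmations_needed(q: float, threshold: float) -> int:
    """Smallest z for which the attacker's success probability drops below threshold."""
    z = 0
    while attacker_success_probability(q, z) >= threshold:
        z += 1
    return z


if __name__ == "__main__":
    z = confirmations_needed(q=0.1, threshold=0.001)
    block_time_s = 600  # illustrative 10-minute block interval
    print(f"{z} confirmations, about {z * block_time_s / 60:.0f} minutes before settlement is treated as final")
```

With q = 0.1 and a 0.1% threshold this yields five confirmations, roughly 50 minutes at 10-minute blocks, far too long for a liquidation engine that must react to intraday price moves.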

The industry is currently moving toward purpose-built chains that prioritize low-latency state updates over general-purpose smart contract flexibility.

Horizon

The future of Blockchain Performance Optimization lies in the development of hardware-accelerated consensus and execution. We are moving toward a state where specialized cryptographic hardware, such as FPGAs or ASICs, will be used to generate and verify zero-knowledge proofs in real time. This would allow derivative protocols to operate with the speed of centralized exchanges while retaining the transparency and censorship resistance of a decentralized ledger.

Strategic Directions

Future advancements will likely focus on:

- Asynchronous Messaging between disparate chains to allow for cross-protocol collateralization and liquidity aggregation.

- Predictive State Pre-fetching, which allows nodes to prepare for upcoming transactions before they arrive in the mempool.

- Formal Verification of high-performance code to ensure that optimization does not introduce new attack vectors.
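Predictive pre-fetching can be sketched with a deliberately simple, hypothetical heuristic: state touched most often in recent blocks (oracle prices, popular margin accounts) is loaded into memory before the next block's transactions arrive, so their reads hit the cache instead of slow storage. The `predict_hot_keys` function, the `StateCache` class, and the frequency heuristic are all assumptions for illustration; a dict stands in for on-disk state.

```python
from collections import Counter


def predict_hot_keys(recent_blocks, top_n):
    """Hypothetical heuristic: keys touched most often in recent blocks are likely to be touched next."""
    counts = Counter(key for block in recent_blocks for tx_keys in block for key in tx_keys)
    return [key for key, _ in counts.most_common(top_n)]


class StateCache:
    """In-memory cache in front of a slow backing store (a dict stands in for disk)."""

    def __init__(self, backing):
        self.backing = backing
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def prefetch(self, keys):
        # Warm the cache ahead of execution; prefetches count as neither hits nor misses.
        for key in keys:
            self.cache[key] = self.backing[key]

    def read(self, key):
        if key in self.cache:
            self.hits += 1
        else:
            self.misses += 1
            self.cache[key] = self.backing[key]  # slow path: fetch from the backing store
        return self.cache[key]


if __name__ == "__main__":
    disk = {"oracle:ETH": 3000, "pos:alice": 5, "pos:bob": 2, "pos:carol": 7}
    history = [[["oracle:ETH", "pos:alice"], ["oracle:ETH", "pos:bob"]]]  # one past block
    cache = StateCache(disk)
    cache.prefetch(predict_hot_keys(history, top_n=1))
    for tx_keys in [["oracle:ETH", "pos:carol"]]:  # the next block's transactions
        for key in tx_keys:
            cache.read(key)
    print("hits:", cache.hits, "misses:", cache.misses)
```

The oracle key, read by every transaction in the previous block, is predicted and served from memory, while Carol's previously untouched position still misses; a wrong prediction only wastes a load, which is why pre-fetching is a comparatively low-risk optimization.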

The ultimate goal is the creation of a global, permissionless derivative clearinghouse that functions with the efficiency of a unified, high-speed network. The ability to manage systemic risk in real time, across thousands of distinct financial products, will be the final test of these optimized systems. The question remains whether the complexity required to achieve this performance will itself become the next systemic vulnerability. What is the ultimate limit of state-based computation speed when subjected to the strict requirements of verifiable, decentralized financial finality?