Essence

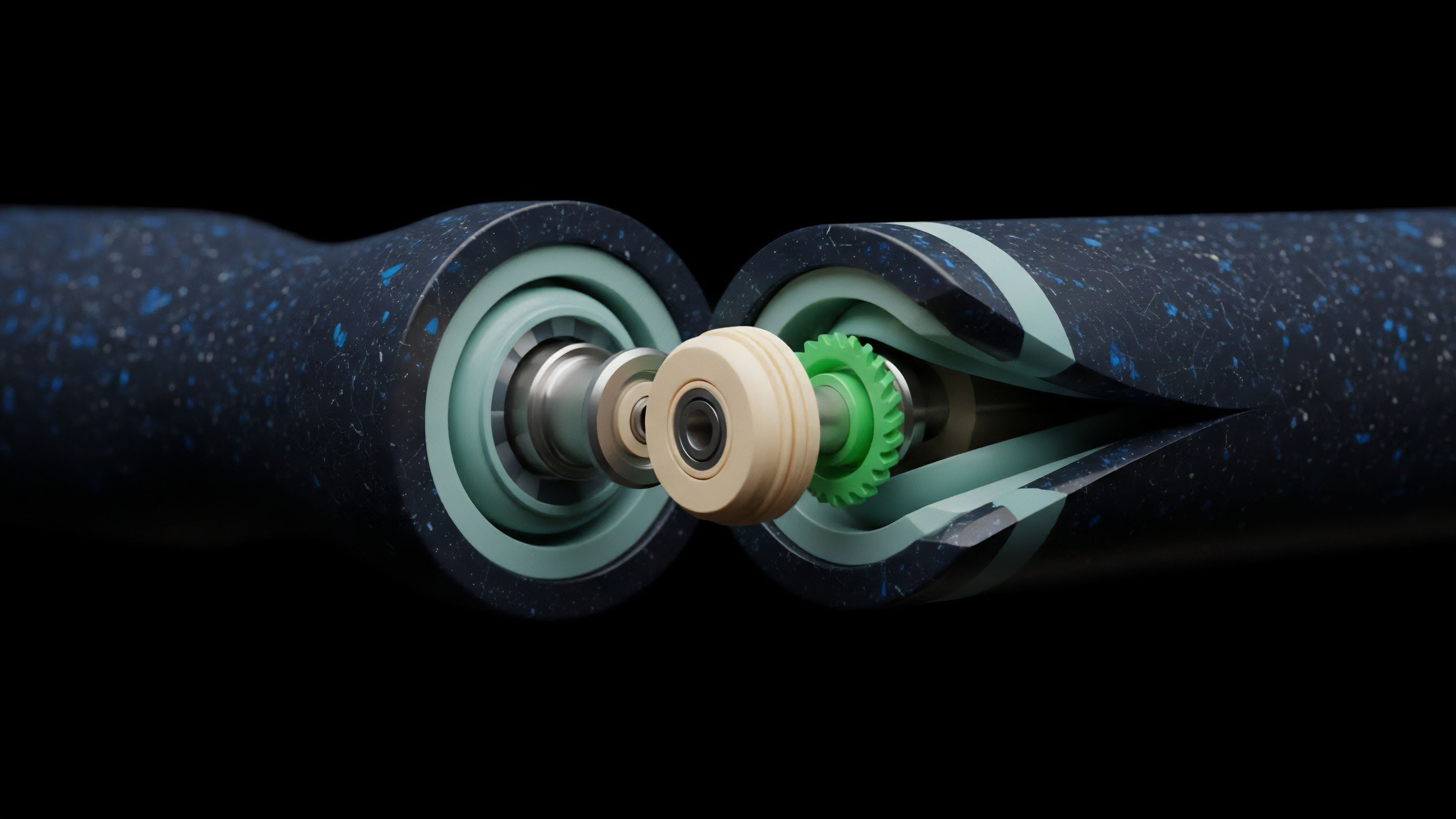

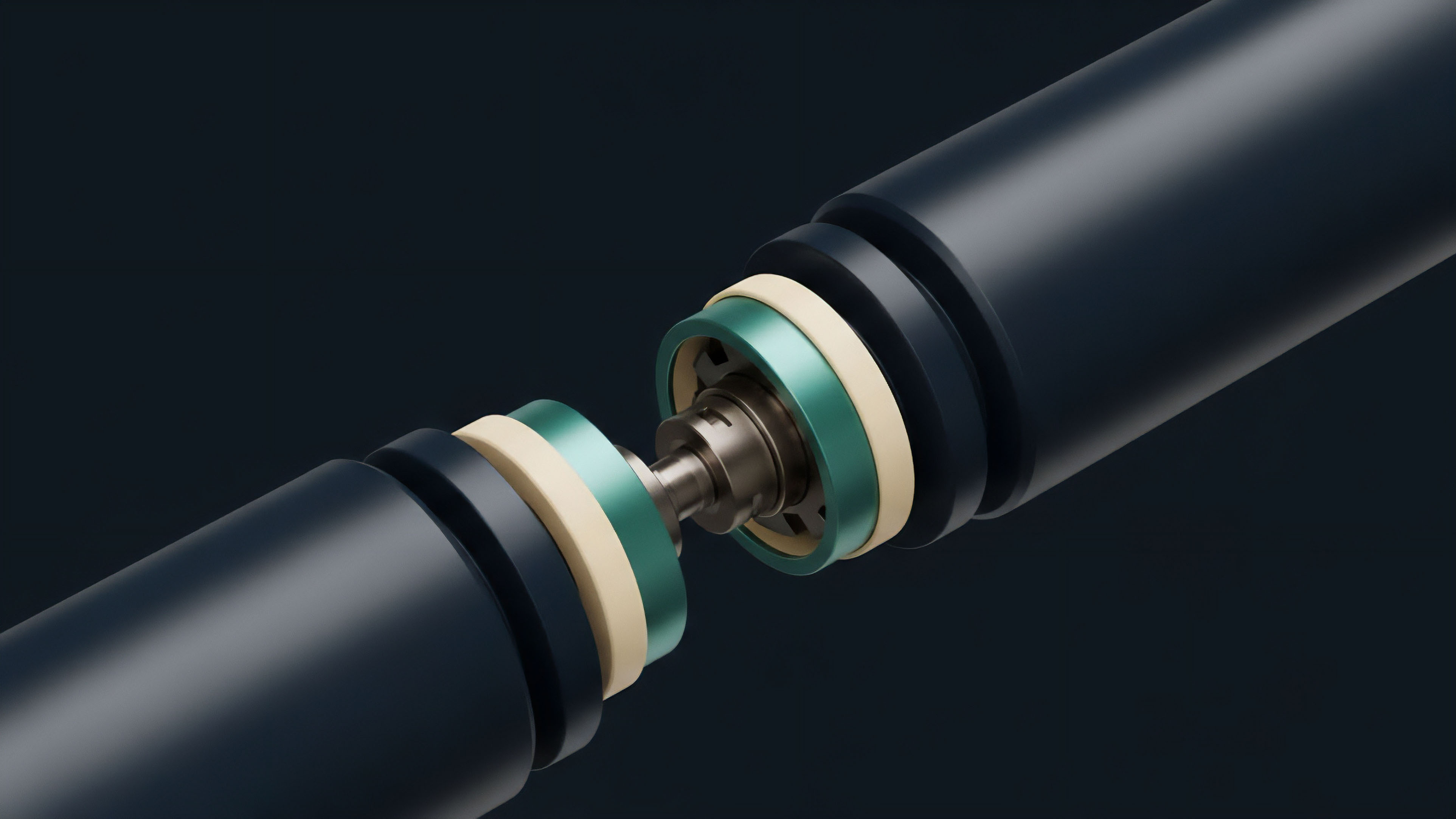

The formal verification of derivative protocol state machines represents the apex of security assurance in decentralized finance ⎊ it is the shift from empirical testing to mathematical certainty. This R&D domain treats the entire lifecycle of a derivative contract ⎊ from minting a position to a final settlement ⎊ as a finite state machine, a computational model where every valid operation is a defined transition between defined states. The critical insight here is that the security of a crypto options protocol rests entirely on the integrity of its state transitions ⎊ specifically, the logic governing collateral checks, margin requirements, and the most adversarial function, liquidation.

Formal Verification is the mathematical proof of a protocol’s adherence to its security specifications, eliminating entire classes of logic errors before deployment.

A system architect views the liquidation engine not as a simple function but as the most critical state transition rule. If this rule can be violated, even under extreme market conditions or manipulated inputs, the entire system is subject to catastrophic failure and capital loss. Formal Verification (FV) requires the protocol’s intended behavior to be written in a precise, unambiguous mathematical language ⎊ a specification ⎊ before the code itself is written or audited.

This specification then serves as the ground truth against which the actual smart contract code is rigorously proven to conform. This process guarantees that certain critical properties, known as invariants, always hold true, regardless of the sequence of user actions or external inputs. An invariant might be: “Total collateral must always exceed total liabilities,” or “A user’s position can only be liquidated if their margin ratio is below the minimum threshold.”
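Written out, the two example invariants and the usual inductive proof obligation look roughly as follows. The symbols ($C$ for total collateral, $D$ for total liabilities, $m_u$ for a user's margin ratio, $m_{\min}$ for the minimum threshold, $\mathit{Init}$ and $\mathit{Next}$ for the initial-state and transition predicates) are notation introduced here for illustration, not taken from any particular protocol. The solvency bound is written non-strictly so that an empty initial state satisfies it; a protocol that demands strict over-collateralization would use $>$.

```latex
\begin{align*}
\textbf{Solvency:}\quad
  & \forall s \in \mathit{Reach}:\; C(s) \ge D(s) \\
\textbf{Liquidation safety:}\quad
  & \forall s \in \mathit{Reach},\ \forall u:\;
    \mathit{liquidate}(u)\ \text{enabled in } s \;\Rightarrow\; m_u(s) < m_{\min} \\
\textbf{Proof obligation:}\quad
  & \mathit{Init}(s) \Rightarrow \mathit{Inv}(s),
    \qquad
    \mathit{Inv}(s) \land \mathit{Next}(s, s') \Rightarrow \mathit{Inv}(s')
\end{align*}
```

If both halves of the proof obligation hold, $\mathit{Inv}$ holds in every reachable state by induction on the number of transitions taken.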

Defining the State Machine

The state machine for a decentralized options vault is complex, defined by variables that include:

- System State Variables: The collective set of all user positions, outstanding debt, and total collateral held within the protocol.

- Transition Functions: The specific, permissioned operations that alter the state, such as depositCollateral, openPosition, closePosition, and initiateLiquidation.

- Invariants: The set of mathematical properties that must remain true across every possible transition, ensuring systemic solvency and fairness.

This architectural rigor moves the assurance conversation beyond finding individual bugs ⎊ a reactive approach ⎊ to proactively proving the absence of entire classes of systemic vulnerabilities.
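The three ingredients above (state variables, transition functions, invariants) can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any protocol's actual design: the `VaultState` layout, the 150% `MIN_MARGIN_BPS` threshold, and the snake_case function names are all hypothetical stand-ins for the operations named in the text.

```python
from dataclasses import dataclass, field

# Hypothetical parameter: 150% minimum collateral-to-debt ratio, in basis points.
MIN_MARGIN_BPS = 15_000


@dataclass
class VaultState:
    """System state: per-user collateral and debt, in integer base units."""
    collateral: dict = field(default_factory=dict)
    debt: dict = field(default_factory=dict)


def margin_ok(collateral: int, debt: int) -> bool:
    """True when collateral / debt >= MIN_MARGIN_BPS / 10_000 (integer-only comparison)."""
    return collateral * 10_000 >= debt * MIN_MARGIN_BPS


def invariant(s: VaultState) -> bool:
    """Solvency invariant: no negative balances, and total collateral covers total debt."""
    balances = list(s.collateral.values()) + list(s.debt.values())
    no_negatives = all(v >= 0 for v in balances)
    return no_negatives and sum(s.collateral.values()) >= sum(s.debt.values())


def deposit_collateral(s: VaultState, user: str, amount: int) -> VaultState:
    """Transition: add collateral. Returns a new state; rejects non-positive amounts."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    new = VaultState(dict(s.collateral), dict(s.debt))
    new.collateral[user] = new.collateral.get(user, 0) + amount
    return new


def open_position(s: VaultState, user: str, debt_amount: int) -> VaultState:
    """Transition: take on debt, guarded so the user's margin stays at or above the minimum."""
    if debt_amount <= 0:
        raise ValueError("debt amount must be positive")
    new_debt = s.debt.get(user, 0) + debt_amount
    if not margin_ok(s.collateral.get(user, 0), new_debt):
        raise ValueError("insufficient margin")
    new = VaultState(dict(s.collateral), dict(s.debt))
    new.debt[user] = new_debt
    return new


def can_liquidate(s: VaultState, user: str) -> bool:
    """Guard for the liquidation transition: enabled only below the minimum margin."""
    return not margin_ok(s.collateral.get(user, 0), s.debt.get(user, 0))


# Usage: each transition returns a fresh state, and the invariant is checked after it.
state = deposit_collateral(VaultState(), "alice", 300)
state = open_position(state, "alice", 200)  # 300 / 200 sits exactly at the 150% threshold
assert invariant(state)
```

Returning a new state from every transition, instead of mutating in place, keeps the "before" and "after" states available side by side, which is the shape a prover reasons about.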

Origin

The genesis of formal verification as a core R&D imperative for decentralized finance is rooted in decades of systems engineering failures where human intuition proved inadequate for managing complex concurrency. This methodology did not begin in the financial sector; its origins lie in environments where failure carries a literal cost of life or catastrophic infrastructure loss. The aerospace and semiconductor industries ⎊ where hardware flaws are immutable once silicon is etched ⎊ were the first to adopt Formal Methods.

From Hardware to Programmable Money

The shift began with proving the correctness of microprocessors, operating system kernels, and mission-critical software for aviation. For instance, the verification of the seL4 microkernel to ensure its security properties was a landmark achievement, demonstrating that a complete, general-purpose operating system kernel could be mathematically proven correct against its specification. The transition to DeFi was a natural, albeit urgent, evolution.

When Ethereum introduced the concept of programmable money ⎊ smart contracts that hold and manage billions in capital without legal recourse ⎊ the stakes became identical to those in aerospace. A bug is not just a software error; it is an economic exploit. The early, catastrophic failures of DeFi protocols, particularly those involving faulty accounting logic in lending and options platforms, cemented the necessity for a higher standard than conventional unit testing.

This realization was the cold, hard impetus for R&D groups to adapt the rigorous tools of formal computer science ⎊ TLA+, Coq, and Isabelle/HOL ⎊ to the unique constraints of the Ethereum Virtual Machine (EVM). It became clear that the financial systems being built required the same level of assurance as the systems controlling aircraft or nuclear reactors.

Theory

The theoretical underpinnings of Formal Verification are drawn from discrete mathematics and logic, specifically Model Checking and Theorem Proving. The Rigorous Quantitative Analyst sees this as the only sane way to model an adversarial environment where millions of agents interact with a single, shared state.

The entire theory is built on the concept of exhaustively exploring the protocol’s state space.

Invariants and State Space Exploration

The core theoretical challenge is the state space explosion ⎊ the number of possible states a complex protocol can be in is astronomically large, making exhaustive simulation of every concrete state infeasible. Formal methods address this by defining invariants ⎊ properties that must hold true in every reachable state ⎊ and then using automated provers to show that every possible state transition preserves them, which covers all reachable states without enumerating each one.

- Specification Language: The protocol’s intended behavior is written in a formal specification language (e.g. TLA+ or an SMT-LIB dialect). This is the “What.”

- Implementation Code: The actual Solidity or Rust code is then written. This is the “How.”

- Proof Generation: A tool is used to generate a mathematical proof that the implementation code satisfies the formal specification, demonstrating that the invariants cannot be violated.
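To make state space exploration concrete, here is a minimal explicit-state model checker in Python. It is a sketch of the idea behind tools such as TLC (the TLA+ model checker), not a substitute for them: the two-variable state `(collateral, debt)`, the bound `CAP`, and the four transitions are assumptions chosen to keep the model tiny. The `guarded_withdraw` flag switches a deliberate bug on and off.

```python
from collections import deque

CAP = 4  # hypothetical bound that keeps the state space finite


def invariant(state):
    """Solvency: collateral must cover debt."""
    collateral, debt = state
    return collateral >= debt


def transitions(state, guarded_withdraw=True):
    """Yield (label, next_state) for every transition enabled in `state`."""
    collateral, debt = state
    if collateral < CAP:
        yield "deposit", (collateral + 1, debt)
    if debt < CAP and collateral >= debt + 1:
        yield "borrow", (collateral, debt + 1)
    if debt > 0:
        yield "repay", (collateral, debt - 1)
    # The guard below is what a correct implementation needs; dropping it is the bug.
    if collateral > 0 and (not guarded_withdraw or collateral - 1 >= debt):
        yield "withdraw", (collateral - 1, debt)


def check(initial, guarded_withdraw=True):
    """Breadth-first search over all reachable states.

    Returns None if the invariant holds in every reachable state, otherwise the
    shortest sequence of transition labels that leads to a violating state.
    """
    parent = {initial: None}  # state -> (previous state, label), None for the root
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while parent[state] is not None:
                state, label = parent[state]
                trace.append(label)
            return list(reversed(trace))
        for label, nxt in transitions(state, guarded_withdraw):
            if nxt not in parent:
                parent[nxt] = (state, label)
                queue.append(nxt)
    return None


print(check((0, 0), guarded_withdraw=True))   # None: invariant holds everywhere
print(check((0, 0), guarded_withdraw=False))  # ['deposit', 'borrow', 'withdraw']
```

The counterexample trace is the practical payoff: instead of a failing assertion somewhere in a test run, the checker hands back the shortest sequence of user actions that breaks solvency. The bound `CAP` is also where the state space explosion bites, since every added variable or raised bound multiplies the number of states to visit.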

The systemic risk of a decentralized options protocol is a direct function of the unverified complexity within its state transition logic.

This is where the pricing model becomes truly elegant ⎊ and dangerous if ignored. A derivative’s payoff can be path-dependent, meaning its value relies on the sequence of events and not only on the final price. A flawed state machine can create an unpriced, path-dependent systemic vulnerability, allowing an attacker to manipulate the state to a point where a liquidation fails, triggering a cascade.

Our inability to respect the skew in the protocol’s operational risk is the critical flaw in our current models.

Comparative Verification Frameworks

The choice of framework dictates the scope and cost of the R&D effort.

| Methodology | Primary Focus | Assurance Level | Computational Cost |
|---|---|---|---|
| Model Checking (e.g. TLA+) | Liveness and Safety Properties of State Transitions | High | Moderate (Automated) |
| Theorem Proving (e.g. Coq) | Full Functional Correctness of Complex Logic | Highest (Human-Assisted) | Very High (Manual Effort) |
| Fuzz Testing (Conventional) | Finding Bugs in Specific Execution Paths | Low to Moderate | Low (Automated) |

Approach

The practical application of Formal Verification to live DeFi derivatives protocols demands a pragmatic, modular approach, focusing the immense R&D resources only on the most system-critical components. Full verification of a protocol the size of a major options exchange is computationally and economically prohibitive; therefore, the current approach is one of strategic triage.

Targeted Component Verification

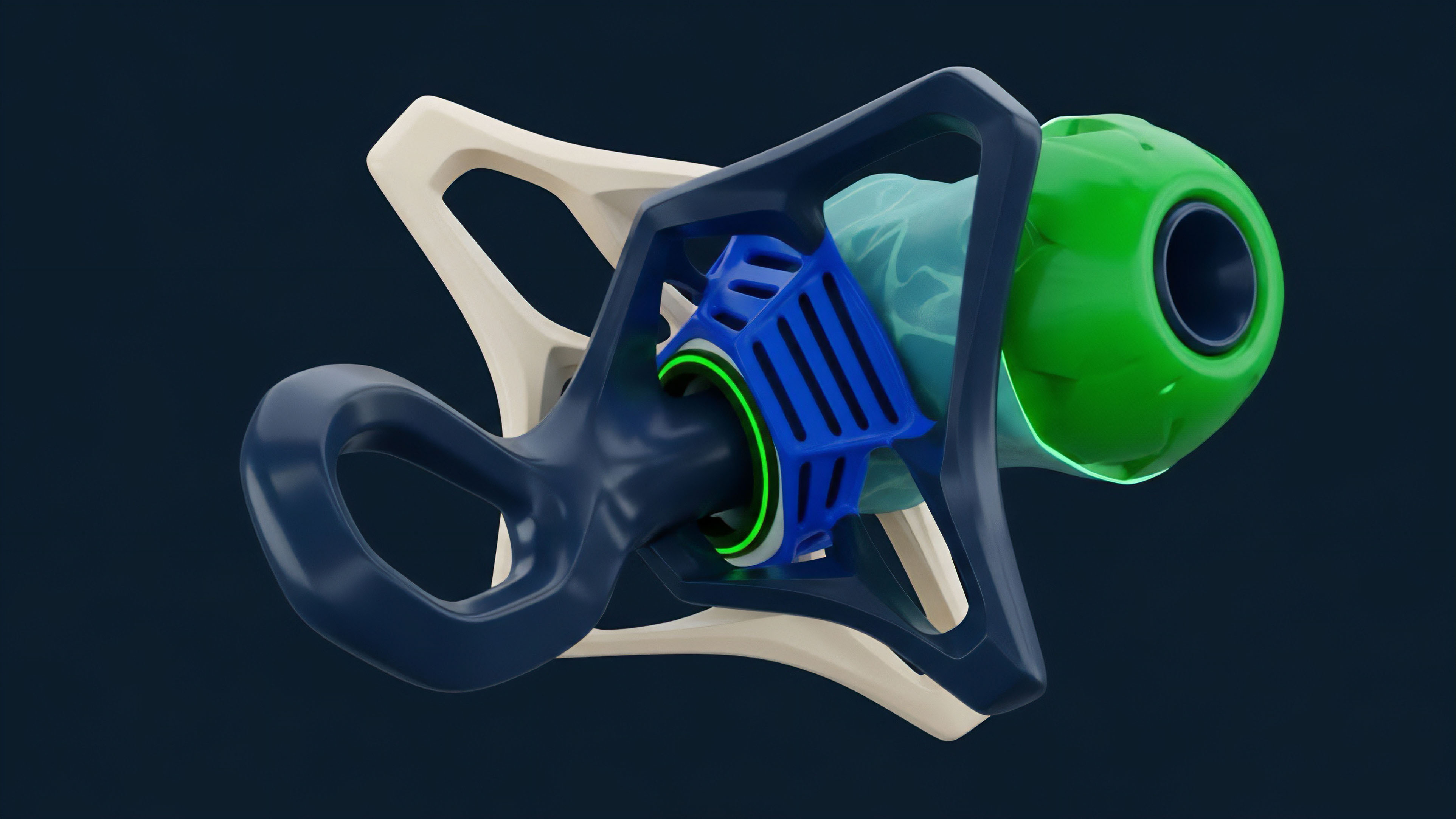

The Derivative Systems Architect isolates the components that hold the highest economic leverage and the greatest potential for cascading failure. These are the components where a single incorrect state transition can lead to insolvency.

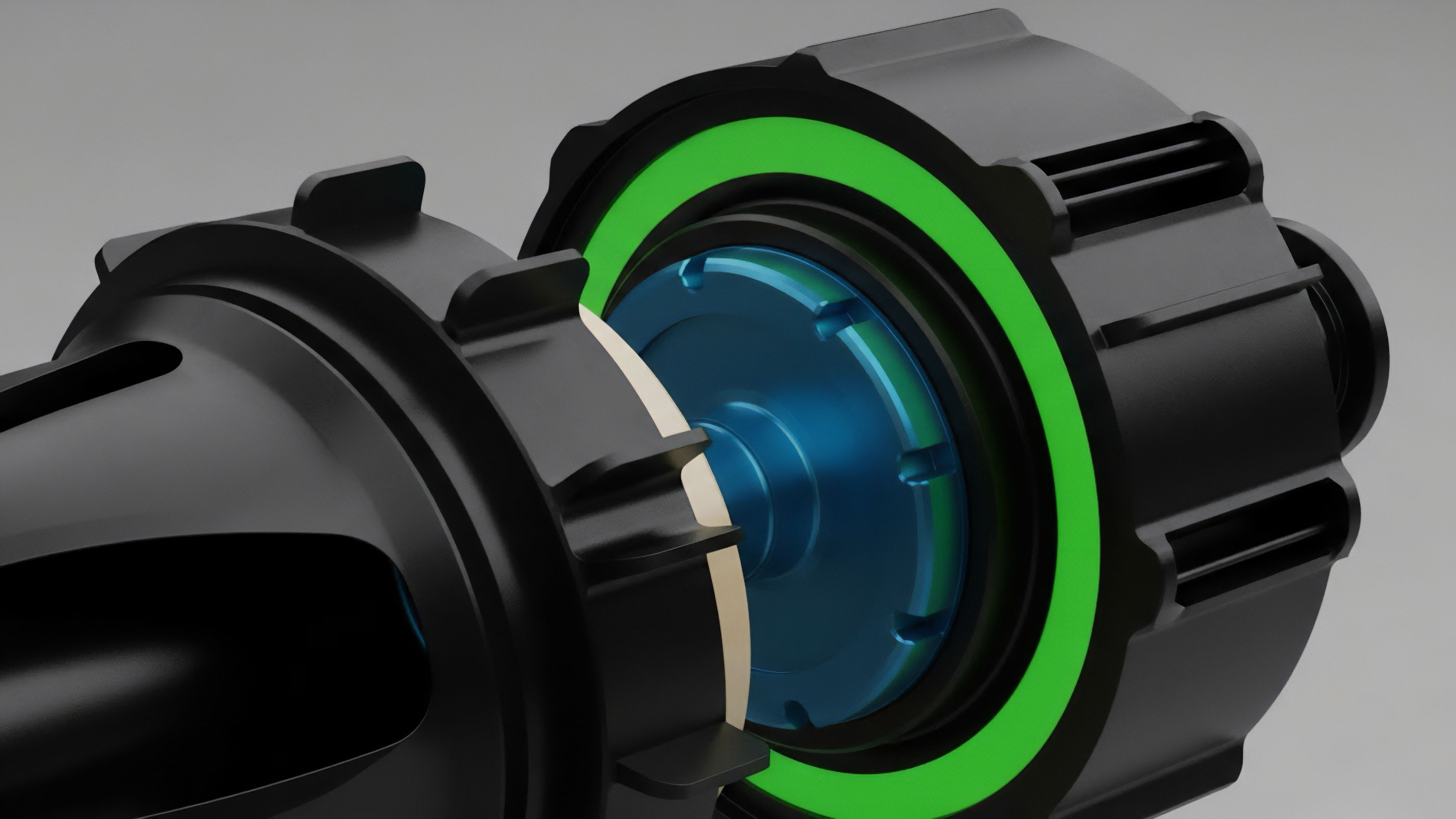

- Liquidation Engines: This is the paramount target. Verification proves that the engine cannot execute a faulty liquidation ⎊ either liquidating a solvent user or failing to liquidate an insolvent one ⎊ and that the engine’s internal accounting for collateral and debt is mathematically sound.

- Collateral Accounting Logic: Proving the invariants that govern how collateral is valued, deposited, and withdrawn, ensuring that no double-spending or under-collateralization can occur at the protocol level.

- Pricing and Oracle Integration: Verification of the mathematical logic that takes an external price feed and translates it into an internal, risk-adjusted valuation, ensuring the protocol cannot be exploited by minor price feed deviations.

- Governance and Upgrade Mechanisms: Formal proof that the process for changing system parameters ⎊ such as margin requirements or fees ⎊ adheres to predefined safety constraints and cannot be used to arbitrarily drain funds.

This selective verification is often executed using specialized domain-specific languages (DSLs) that abstract the low-level EVM code into a verifiable intermediate representation. This process requires a unique blend of cryptographers, formal methods experts, and financial engineers ⎊ a rare and costly R&D team. The result is a set of verifiable security properties that act as a shield against the most sophisticated economic exploits.
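For the liquidation engine, the two failure modes named above (liquidating a solvent user, failing to liquidate an insolvent one) reduce to one statement: the implementation's liquidation guard must agree with the specification on every input. The Python sketch below checks that agreement exhaustively over a small bounded domain. It is bounded checking, not a proof; a real effort would hand the same property to an SMT solver or proof assistant to cover unbounded inputs. The threshold, the function names, and the divide-before-multiply bug are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product

MIN_MARGIN_BPS = 15_000  # hypothetical 150% minimum margin, in basis points


def spec_liquidatable(collateral, debt, price):
    """Specification: exact rational arithmetic, no rounding anywhere."""
    return debt > 0 and Fraction(collateral * price, debt) < Fraction(MIN_MARGIN_BPS, 10_000)


def impl_liquidatable(collateral, debt, price):
    """Implementation: integer-only cross-multiplication, as on-chain code would do."""
    return debt > 0 and collateral * price * 10_000 < debt * MIN_MARGIN_BPS


def buggy_liquidatable(collateral, debt, price):
    """Deliberately wrong: divides before multiplying, so the ratio is truncated early."""
    return debt > 0 and collateral // debt * price * 10_000 < MIN_MARGIN_BPS


def counterexamples(impl, bound=20):
    """Every (collateral, debt, price) in [0, bound]^3 where impl disagrees with the spec."""
    return [
        (c, d, p)
        for c, d, p in product(range(bound + 1), repeat=3)
        if impl(c, d, p) != spec_liquidatable(c, d, p)
    ]


print(counterexamples(impl_liquidatable))       # [] : agrees with the spec on the whole domain
print((3, 2, 1) in counterexamples(buggy_liquidatable))  # True
```

The input `(3, 2, 1)` is a user sitting exactly at 150%: solvent by the specification, yet the truncating version computes `3 // 2 = 1` and liquidates them. Rounding errors of this kind are easy to miss in review and trivial for an exhaustive or symbolic check to surface.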

A formal proof of a liquidation engine’s solvency invariant is the only acceptable risk mitigation for a decentralized derivatives platform.

Verification Cost and Time Trade-Off

The time and capital investment for high-assurance verification is significant, often measured in months and seven figures, contrasting sharply with the weeks required for a traditional audit. This economic reality creates a significant barrier to entry, but it also establishes a clear quality signal: those protocols that commit to formal methods are signaling a non-negotiable dedication to systemic stability over speed of deployment. The market should ⎊ and eventually will ⎊ price this security assurance into the protocol’s systemic trust premium.

Evolution

The R&D trajectory for Formal Verification in DeFi has shifted from an all-or-nothing, post-hoc analysis to a continuous, integrated pipeline ⎊ a necessary evolution driven by the velocity of decentralized market development.

Early attempts focused on proving the entire smart contract system correct, which was brittle and impractical for frequently updated protocols. The current state is defined by modularity and the integration of runtime checks.

Modular Proofs and Runtime Verification

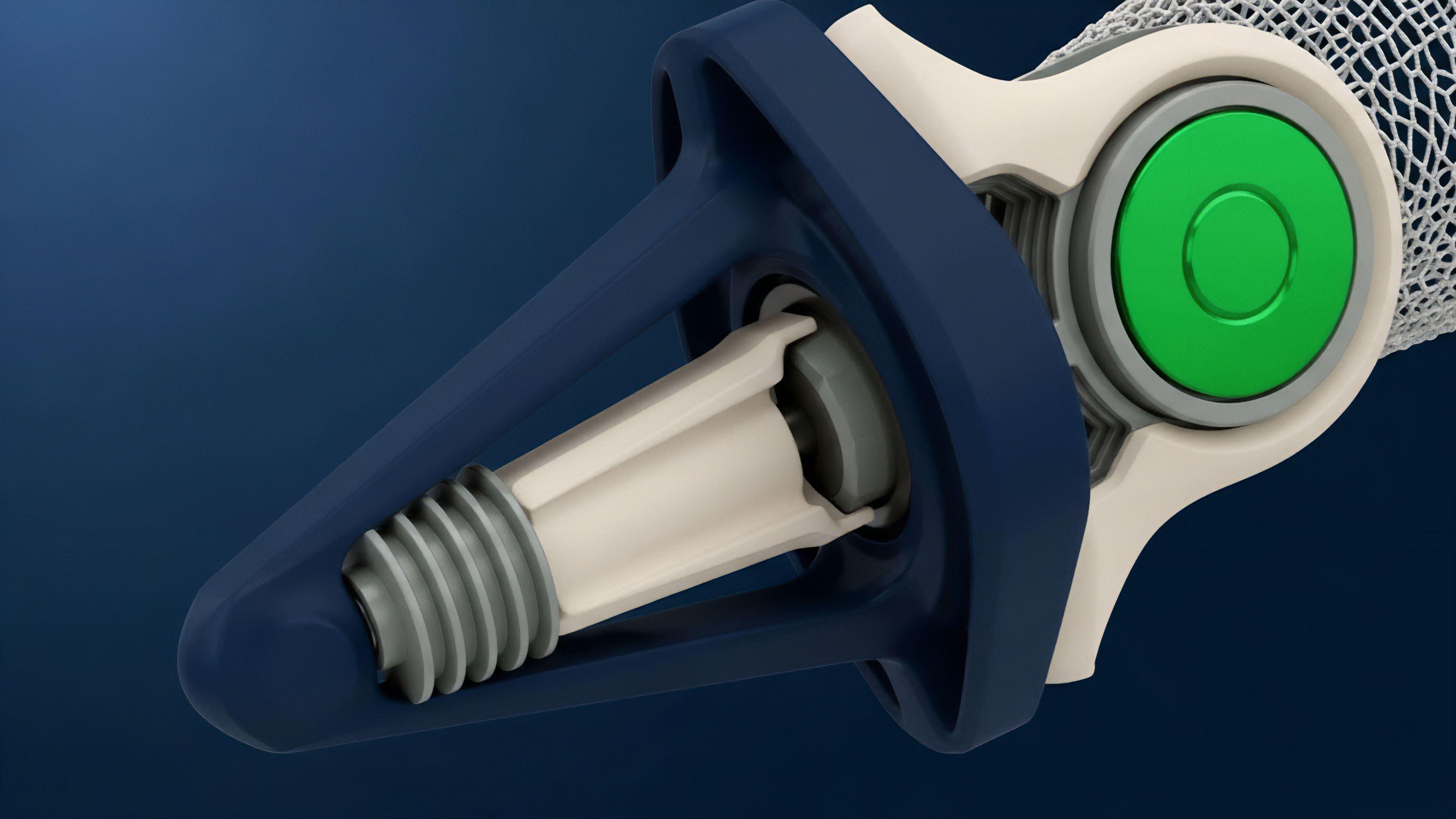

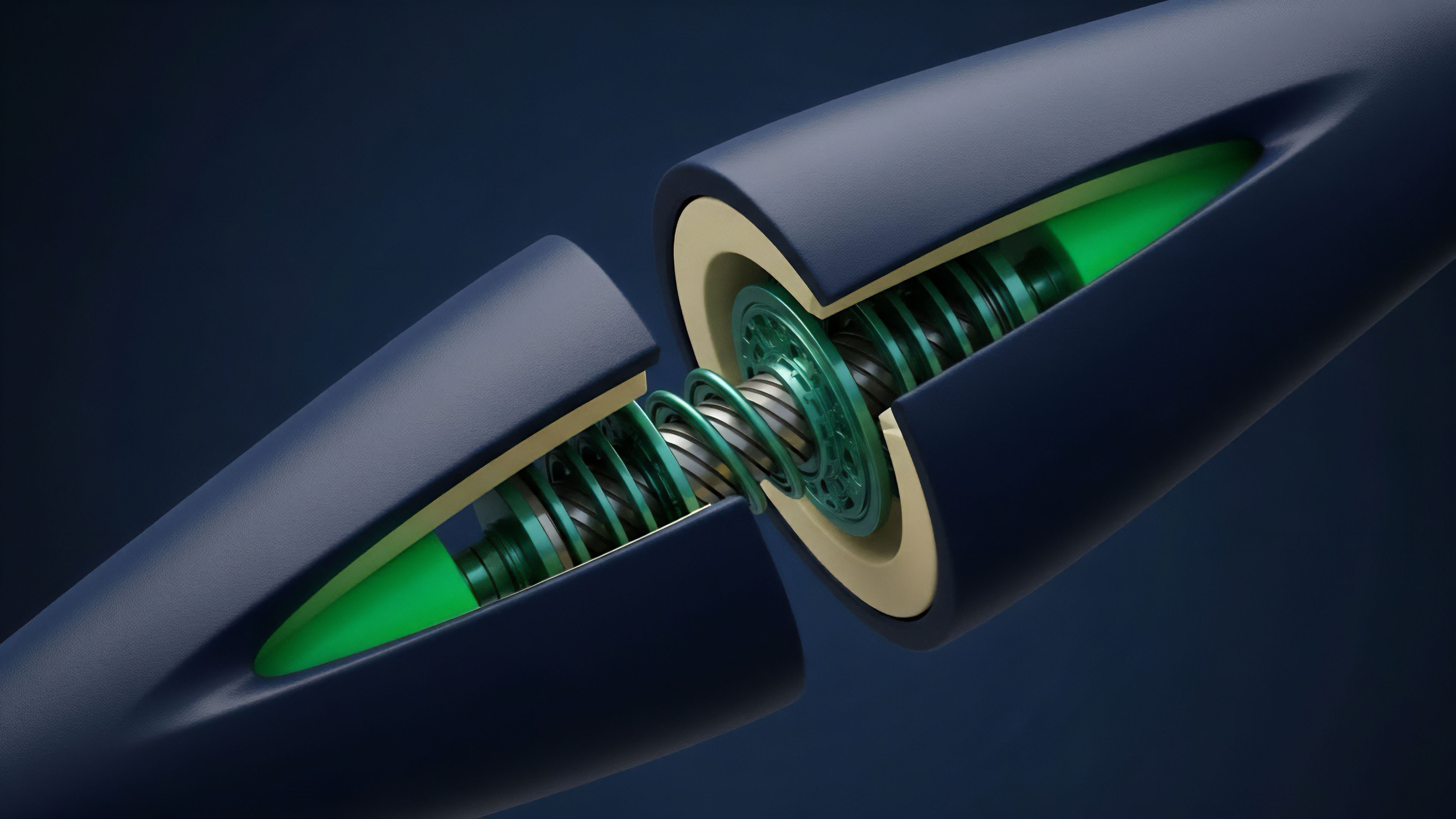

The industry is moving toward Modular Verification, where complex systems are broken down into smaller, mathematically manageable components. Each component’s formal specification includes assumptions about its environment and guarantees about its output. This allows for rapid iteration and partial re-verification when a small piece of code changes.
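The assumption/guarantee structure can be sketched directly. In the Python below, each component carries its own assumption and guarantee, and composition only requires checking that one component's outputs satisfy the next component's assumption. The `Component` type, the price bounds, and the two example components are hypothetical, and the checks run over a bounded test domain where a prover would reason symbolically.

```python
from dataclasses import dataclass
from typing import Callable

PRICE_FLOOR, PRICE_CAP = 1, 1_000  # hypothetical sanity bounds on the internal price
COLLATERAL_UNITS = 10              # hypothetical fixed position size


@dataclass(frozen=True)
class Component:
    name: str
    assumes: Callable[[int], bool]          # what the component requires of its input
    run: Callable[[int], int]               # the implementation
    guarantees: Callable[[int, int], bool]  # relation promised between input and output


oracle_adapter = Component(
    name="oracle_adapter",
    assumes=lambda raw: raw >= 0,
    run=lambda raw: max(PRICE_FLOOR, min(PRICE_CAP, raw)),  # clamp the raw feed
    guarantees=lambda raw, out: PRICE_FLOOR <= out <= PRICE_CAP,
)

collateral_value = Component(
    name="collateral_value",
    assumes=lambda price: PRICE_FLOOR <= price <= PRICE_CAP,
    run=lambda price: COLLATERAL_UNITS * price,
    guarantees=lambda price, value: (
        COLLATERAL_UNITS * PRICE_FLOOR <= value <= COLLATERAL_UNITS * PRICE_CAP
    ),
)


def verify_component(component, domain):
    """Local check: every input meeting the assumption yields an output meeting the guarantee."""
    return all(
        component.guarantees(x, component.run(x))
        for x in domain
        if component.assumes(x)
    )


def verify_composition(first, second, domain):
    """Interface check: outputs of `first` always satisfy the assumption of `second`."""
    return all(second.assumes(first.run(x)) for x in domain if first.assumes(x))


domain = range(0, 5_000)
assert verify_component(oracle_adapter, domain)
assert verify_component(collateral_value, domain)
assert verify_composition(oracle_adapter, collateral_value, domain)
```

If `collateral_value` is later rewritten, only its local check and the interface check need to be re-run; the result for `oracle_adapter` stands as long as its own code and contract are untouched.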

The true leap, though, is Runtime Verification (RV). RV is an R&D discipline that takes the formally verified security properties ⎊ the invariants ⎊ and compiles them into lightweight, on-chain monitors. In normal operation these monitors do not interrupt the protocol; they observe every state transition in real time and intervene only when an invariant is at risk.

If a transition is about to violate a critical invariant (e.g. if a transaction would cause total liabilities to exceed total collateral), the monitor can execute a pre-programmed, verified safety action, such as pausing the vulnerable function or triggering an emergency shutdown.
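The control flow of such a monitor is simple enough to sketch. The Python class below is an off-chain illustration only: it computes the candidate post-state, checks the invariant before committing, and pauses itself if the check fails. The class name, the two aggregate variables, and the choice of "pause" as the safety action are assumptions made for the example; an on-chain version would also have to account for how reverts interact with the pause flag.

```python
class MonitoredVault:
    """Toy vault whose every transition passes through an invariant monitor."""

    def __init__(self):
        self.total_collateral = 0
        self.total_debt = 0
        self.paused = False

    @staticmethod
    def _invariant(collateral, debt):
        """Total liabilities must never exceed total collateral; no negative totals."""
        return collateral >= 0 and debt >= 0 and collateral >= debt

    def _apply(self, d_collateral, d_debt):
        """Commit the transition only if the post-state satisfies the invariant.

        Returns True if applied. On a would-be violation the state is left
        untouched, the vault is paused (the safety action), and False is returned.
        """
        if self.paused:
            return False
        new_collateral = self.total_collateral + d_collateral
        new_debt = self.total_debt + d_debt
        if not self._invariant(new_collateral, new_debt):
            self.paused = True
            return False
        self.total_collateral, self.total_debt = new_collateral, new_debt
        return True

    def deposit(self, amount):
        return self._apply(amount, 0)

    def borrow(self, amount):
        return self._apply(0, amount)

    def withdraw(self, amount):
        return self._apply(-amount, 0)


vault = MonitoredVault()
vault.deposit(100)
vault.borrow(60)
print(vault.withdraw(50))  # False: 50 collateral would not cover 60 debt
print(vault.paused)        # True: the monitor took its safety action
```

The monitor adds one comparison per transition, which is the sense in which it is lightweight. What makes it more than a defensive `require` is that the predicate it evaluates is the same invariant that was proven against the specification.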

| Stage of Evolution | Verification Scope | Primary Toolset | Systemic Implication |
|---|---|---|---|
| Phase 1 (Initial) | Full System (Brittle) | Coq, Isabelle/HOL | Extremely slow iteration cycles |
| Phase 2 (Current) | Modular Components & Critical Functions | TLA+, Custom DSLs | Targeted security; higher confidence in liquidation logic |
| Phase 3 (Future) | AI-Assisted Proof Generation & L1 Integration | ZKP-Proof Systems, ML-Assisted Solvers | Near-instantaneous, automated assurance |

This integration of formal methods into the continuous deployment pipeline represents a maturation of the security posture. It is a recognition that static analysis alone is insufficient. The system must possess the ability to self-monitor against its own mathematically proven failure modes.

This shift from pre-deployment proof to in-situ self-monitoring is the defining characteristic of the latest R&D cycle. The Pragmatic Market Strategist knows that systems will eventually fail; the goal is to architect a system that fails safely and verifiably.

Horizon

The future of derivative protocol security R&D lies in the full integration of zero-knowledge proofs (ZKPs) and AI-assisted proof generation, fundamentally changing the trust assumptions for financial contracts. This is the final frontier: moving from a verified system to a provably correct and private system.

Zero-Knowledge Proofs for State Correctness

Imagine a world where the correctness of a complex, off-chain liquidation run ⎊ which must process thousands of positions and market data points ⎊ can be proven on-chain without revealing the underlying data. This is the promise of ZKPs applied to derivative state machines. A protocol could use a ZK-SNARK to prove that a batch of liquidations was executed according to the formally verified, public rules, while keeping the specific user balances and positions private.

The R&D challenge is building cryptographic circuits efficient enough to handle the real-valued arithmetic inherent in options pricing and margin calculation, which must be encoded as fixed-point operations over a finite field. The ability to generate a compact proof of correct execution for a massive off-chain computation ⎊ a Verifiable State Transition ⎊ is the ultimate game-changer for scalability and privacy.
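Part of why this arithmetic is expensive: proof circuits operate on finite-field integers, so real-valued quantities are typically encoded as scaled integers, and division is not computed inside the circuit but checked. The prover supplies a quotient and remainder as witness values, and the circuit verifies them with a multiplication and a range check. The Python sketch below mimics that split for fixed-point multiplication. The 18-decimal `SCALE` and the function names are assumptions, inputs are assumed non-negative, and field-size overflow is ignored.

```python
SCALE = 10**18  # assumption: 18-decimal fixed point


def to_fixed(numerator, denominator=1):
    """Encode the rational numerator/denominator as a scaled integer (rounds down)."""
    return numerator * SCALE // denominator


def mul_witness(a, b):
    """Prover side: compute quotient and remainder of (a * b) / SCALE off-circuit."""
    return divmod(a * b, SCALE)


def mul_constraint(a, b, q, r):
    """Circuit side: accept q as the fixed-point product using only a
    multiplication, an equality, and a range check on the remainder."""
    return a * b == q * SCALE + r and 0 <= r < SCALE


ratio = to_fixed(3, 2)   # 1.5
price = to_fixed(2)      # 2.0
q, r = mul_witness(ratio, price)
assert q == to_fixed(3)  # 1.5 * 2.0 = 3.0
assert mul_constraint(ratio, price, q, r)
assert not mul_constraint(ratio, price, q + 1, r)  # a forged product is rejected
```

Every fixed-point multiplication in a pricing formula costs at least one such constraint plus a range check, and functions like exponentials or square roots need many of them, which is where the efficiency problem comes from.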

- AI-Assisted Proof Generation: The current manual effort required for theorem proving will be automated by machine learning models trained on vast corpora of formal specifications and verified code. This drastically reduces the cost and time barrier, making high-assurance verification accessible to all protocols.

- Layer 1 Formal Guarantees: Future blockchain architectures will likely bake formal verification into the Layer 1 consensus. This means the underlying chain itself will enforce properties like “no transaction can cause a negative balance,” acting as a hard, cryptographic constraint on all deployed smart contracts.

- Trustless Audit Markets: A decentralized market for formal verification will emerge, where automated tools compete to prove the correctness of a protocol, with the resulting proof being the auditable asset.

This convergence of Formal Verification with ZK-tech transforms the financial architecture from a trust-minimized environment to a trust-eliminated one. We are architecting a financial operating system where solvency is not an assumption based on a successful audit but a mathematical certainty enforced by cryptography. The resulting system will exhibit unprecedented resilience, allowing for leverage and complexity that would be reckless under current, empirically-tested security models. The only systemic risk remaining will be the underlying cryptographic primitives themselves ⎊ a far simpler and more stable risk profile to manage.
