Essence

Blockchain Forensic Investigations represent the systematic process of reconstructing transaction history and attributing addresses to real-world participants within distributed ledgers. This discipline serves as the primary mechanism for establishing transparency in otherwise pseudonymous environments, functioning as a high-fidelity audit trail for digital assets. Analysts use on-chain data to map the flow of capital, clustering addresses to attribute disparate wallets to single entities.

The practice transforms raw, immutable data into actionable intelligence, enabling investigators to track illicit fund movements, verify asset ownership, and assess counterparty risk. By observing patterns in transaction velocity, gas consumption, and smart contract interaction, investigators detect anomalies that indicate systemic vulnerabilities or malicious activity.
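
As a rough illustration of anomaly detection on transaction velocity, the sketch below flags points in a series (for example, per-block transfer counts for a single address) that deviate sharply from a trailing window. The rolling z-score test, the `window` and `threshold` defaults, and the function name are illustrative assumptions, not a method the text prescribes.

```python
from collections import deque
from statistics import mean, pstdev


def flag_velocity_anomalies(series, window=5, threshold=3.0):
    """Return indices whose value sits more than `threshold` standard
    deviations from the mean of the preceding `window` values."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(series):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            if sigma == 0:
                # A perfectly flat window: any change at all is unusual.
                is_anomaly = value != mu
            else:
                is_anomaly = abs(value - mu) / sigma > threshold
            if is_anomaly:
                flagged.append(i)
        history.append(value)
    return flagged


# Hypothetical per-block transfer counts with one burst at index 6.
print(flag_velocity_anomalies([10, 12, 11, 9, 10, 11, 95, 10]))  # prints [6]
```

A z-score against a trailing window is only one choice; it adapts to each address's normal activity level but will miss slow, gradual changes.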

Origin

The genesis of this field lies in the fundamental architectural requirement of public blockchains for transparency. As early decentralized networks gained traction, the inherent visibility of every transaction created an unexpected byproduct: a permanent, searchable database of all financial activity.

Early adopters realized that while addresses provided pseudonymity, the lack of true anonymity allowed for sophisticated pattern matching.

- Transaction Graph Analysis emerged from the need to de-anonymize Bitcoin transactions by linking inputs and outputs through shared spend patterns.

- Entity Clustering Heuristics developed as a method to aggregate multiple addresses controlled by the same wallet software or exchange infrastructure.

- Regulatory Mandates accelerated the professionalization of these techniques, as financial institutions required compliance tools to manage risks associated with digital asset exposure.

This evolution turned the ledger from a simple record of truth into a forensic instrument, shifting the focus from mere verification to active surveillance of capital movement across the global financial system.

Theory

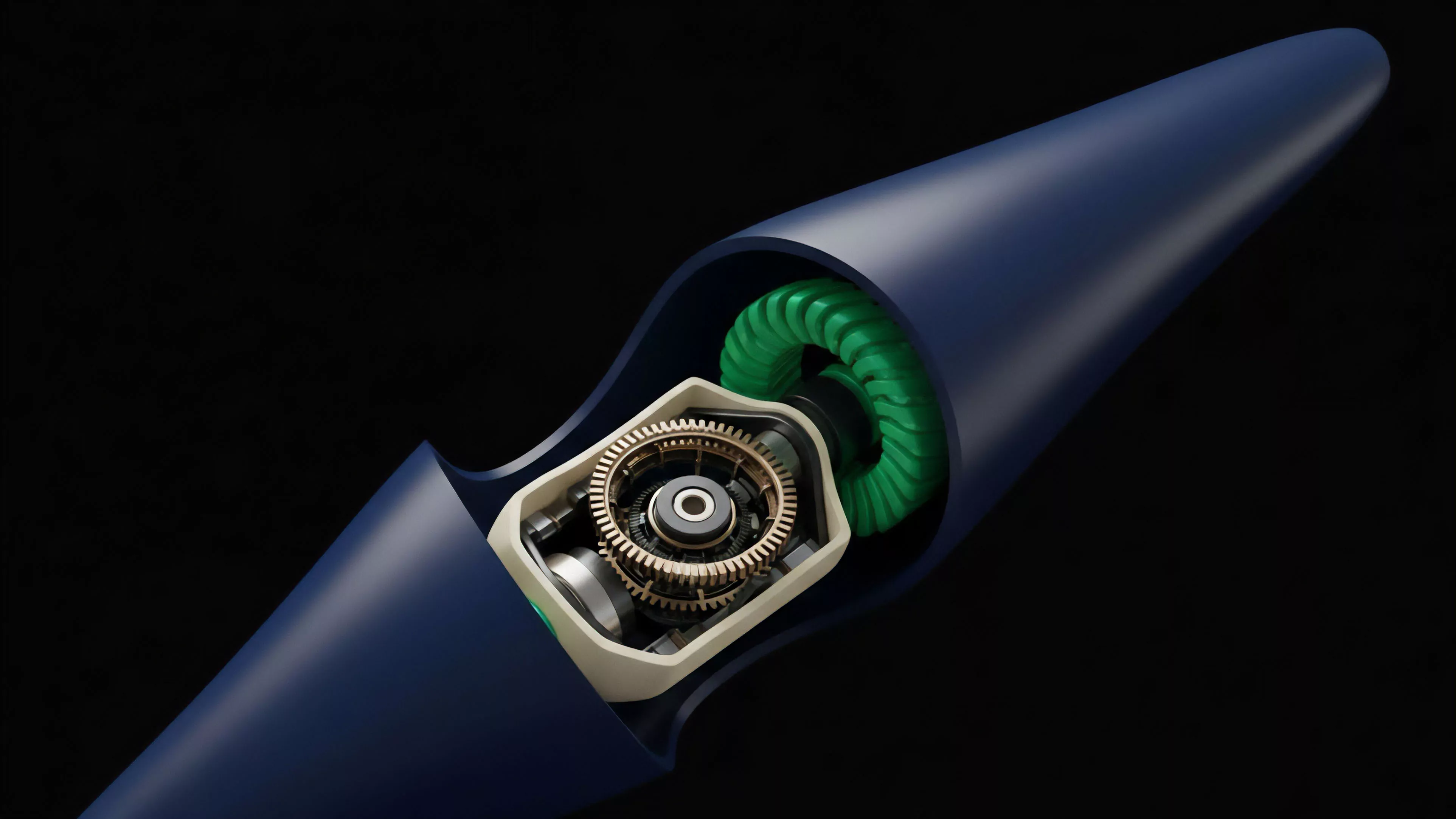

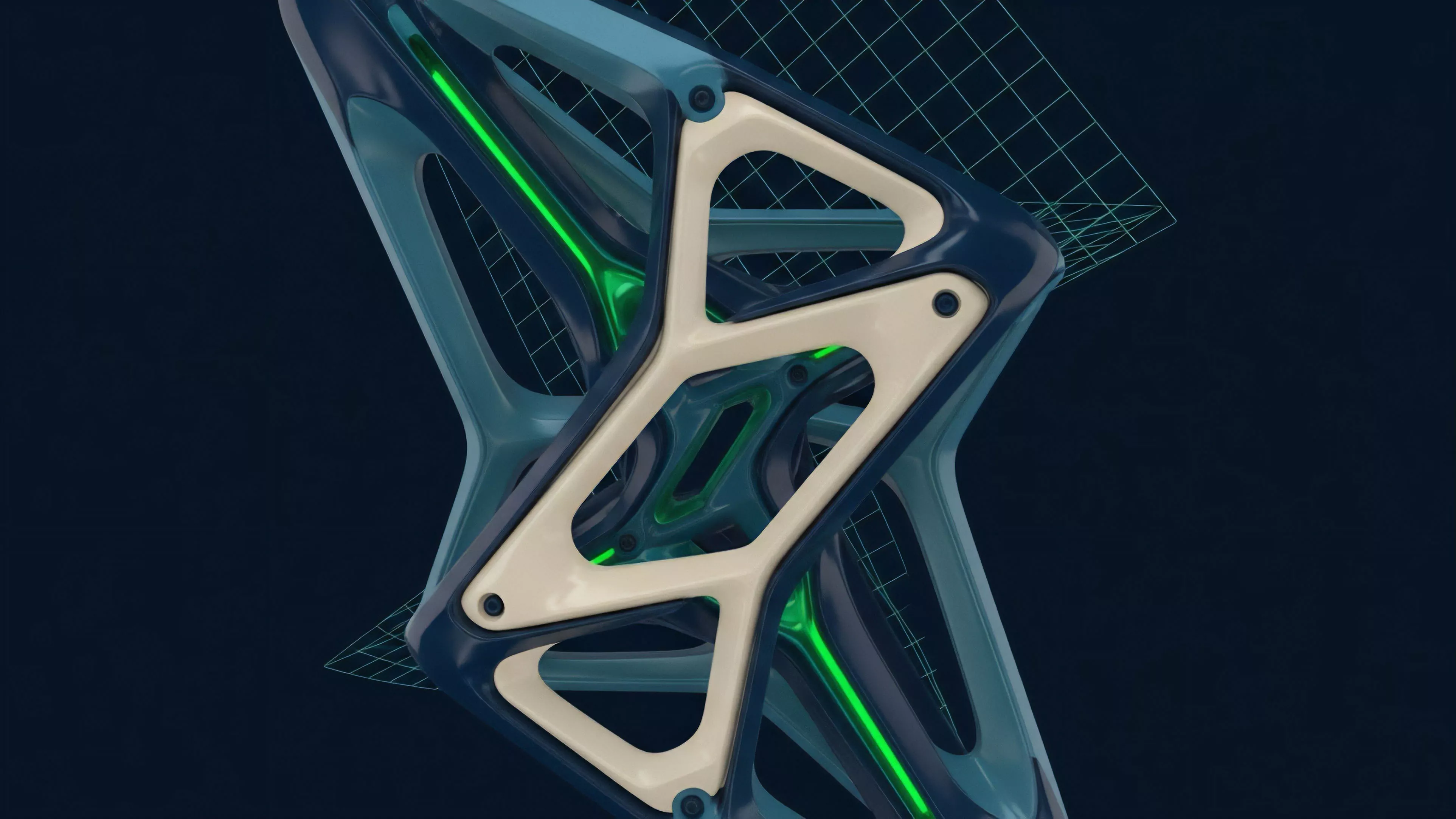

The theoretical foundation rests on the observation that blockchain protocols are deterministic state-transition machines. Every state change is recorded exactly and permanently, producing a reliable data structure for quantitative analysis. Investigators apply graph theory to model the network as a set of nodes (addresses or entities) joined by directed edges, where each edge represents a transfer of value.
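
The graph model can be sketched in a few lines. The example below is a minimal sketch assuming transfers have already been decoded into hypothetical `(sender, receiver, amount)` tuples; it builds a directed adjacency list and runs a breadth-first search to find every address that received value from a source within a hop limit. It deliberately ignores amounts and timestamps, whereas a real trace would also require each hop to occur after the previous one.

```python
from collections import defaultdict, deque


def build_graph(transfers):
    """Map each sender to a list of (receiver, amount) edges."""
    graph = defaultdict(list)
    for sender, receiver, amount in transfers:
        graph[sender].append((receiver, amount))
    return graph


def reachable_within(graph, source, max_hops):
    """Breadth-first search: return {address: hop distance} for every
    address that received value, directly or indirectly, from `source`."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for receiver, _amount in graph.get(node, []):
            if receiver not in dist:
                dist[receiver] = dist[node] + 1
                queue.append(receiver)
    return dist


# Placeholder addresses: A -> B -> C -> D, plus an unrelated inflow E -> A.
graph = build_graph([("A", "B", 5), ("B", "C", 3), ("C", "D", 1), ("E", "A", 2)])
print(reachable_within(graph, "A", max_hops=2))  # prints {'A': 0, 'B': 1, 'C': 2}
```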

Protocol Physics

The technical architecture of the underlying network dictates the forensic constraints. For instance, Bitcoin's Unspent Transaction Output (UTXO) model, in which each transaction consumes earlier outputs and creates new ones, supports different analysis patterns than account-based models such as Ethereum, where persistent balances are updated in place. Analysts must account for protocol-specific features such as:

| Feature | Forensic Implication |
|---|---|
| Privacy Layers | Obfuscation of transaction paths |
| Smart Contracts | Complex logic concealing ownership |
| Cross-Chain Bridges | Liquidity fragmentation across networks |
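
To make the UTXO/account distinction concrete, the sketch below converts both kinds of transaction into the same `(sender, receiver, value)` edges. An account-model transfer maps to one edge directly. A UTXO transaction does not record which input funded which output, so the sketch uses proportional (pro rata) allocation, which is one of several conventions in use; the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AccountTransfer:
    sender: str
    receiver: str
    value: float


@dataclass
class UtxoTx:
    inputs: list[tuple[str, float]]   # (address, value) of each spent output
    outputs: list[tuple[str, float]]  # (address, value) of each new output


def edges_from_account(tx):
    # Account model: the transfer names exactly one sender and one receiver.
    return [(tx.sender, tx.receiver, tx.value)]


def edges_from_utxo(tx):
    # UTXO model: inputs are pooled, so each output is split across the
    # inputs in proportion to their value. Any fee (inputs minus outputs)
    # is not assigned to an edge.
    total_in = sum(value for _, value in tx.inputs)
    return [
        (in_addr, out_addr, out_value * in_value / total_in)
        for in_addr, in_value in tx.inputs
        for out_addr, out_value in tx.outputs
    ]


tx = UtxoTx(inputs=[("a", 30), ("b", 10)], outputs=[("c", 20), ("d", 19)])
for edge in edges_from_utxo(tx):
    print(edge)
```

Pro rata allocation keeps value conserved per output but spreads every input across every output; alternatives such as first-in-first-out ordering produce sparser, more opinionated graphs.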

Adversarial participants often employ obfuscation techniques to break the graph. This creates a perpetual cat-and-mouse dynamic in which investigators develop new heuristics to trace funds through mixing services and decentralized tumblers. The harder problem is usually not detecting the movement but attributing it to a real-world legal identity.
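
One widely discussed obfuscation pattern is the CoinJoin-style transaction, in which several users pool inputs and receive outputs of identical value so that the mapping from inputs to outputs becomes ambiguous. The sketch below is a deliberately simple detector built on that assumption; the thresholds are hypothetical, and practical tools combine many more signals. Transactions it flags should generally be excluded from common-input clustering, because their inputs likely belong to different owners.

```python
from collections import Counter


def looks_like_coinjoin(input_addresses, output_values, min_equal_outputs=3):
    """Heuristic flag: several distinct input addresses plus at least
    `min_equal_outputs` outputs sharing one identical value."""
    if len(set(input_addresses)) < 2 or not output_values:
        return False
    # Size of the largest group of equal-valued outputs.
    _, largest_group = Counter(output_values).most_common(1)[0]
    return largest_group >= min_equal_outputs


print(looks_like_coinjoin(["a", "b", "c"], [100_000, 100_000, 100_000, 4_321]))  # prints True
print(looks_like_coinjoin(["a"], [100_000] * 5))  # prints False
```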

Approach

Current methodologies prioritize the integration of on-chain telemetry with off-chain data sources.

This involves scraping public data from block explorers and combining it with proprietary databases of labeled addresses, such as those belonging to exchanges, mixers, or known illicit actors.
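
Combining on-chain transfers with an off-chain label set can be sketched as a join on counterparty address. In the sketch below a plain dictionary stands in for the proprietary label databases described above; the labels, category names, and function name are hypothetical, and only direct (one-hop) counterparties are counted.

```python
from collections import defaultdict


def exposure_by_category(transfers, labels):
    """Sum the value each address sent to or received from its direct
    counterparties, grouped by the counterparty's label category."""
    exposure = defaultdict(lambda: defaultdict(float))
    for sender, receiver, amount in transfers:
        exposure[sender][labels.get(receiver, "unlabeled")] += amount
        exposure[receiver][labels.get(sender, "unlabeled")] += amount
    # Convert nested defaultdicts to plain dicts for readable output.
    return {addr: dict(cats) for addr, cats in exposure.items()}


labels = {"ex1": "exchange", "mx1": "mixer"}  # hypothetical label set
transfers = [("u1", "ex1", 5.0), ("mx1", "u1", 2.0)]
print(exposure_by_category(transfers, labels)["u1"])  # prints {'exchange': 5.0, 'mixer': 2.0}
```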

- Data Ingestion involves synchronizing full nodes to obtain the raw, granular state of the ledger.

- Heuristic Application uses clustering algorithms to link addresses based on shared spending control.

- Risk Scoring assigns risk scores to transactions based on their proximity (in hops) to high-risk entities or on suspicious behavioral patterns.
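
The Heuristic Application step is commonly illustrated with the common-input-ownership heuristic: addresses spent together as inputs to one UTXO transaction are assumed to share an owner. The sketch below merges such addresses with a union-find structure. It assumes inputs have already been parsed per transaction and that CoinJoin-like transactions were filtered out beforehand, since they violate the heuristic's premise; the function and class names are illustrative.

```python
class UnionFind:
    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        # Path halving keeps the trees shallow.
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def union(self, a, b):
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def cluster_by_common_inputs(tx_inputs):
    """tx_inputs: one list of input addresses per transaction.
    Returns a list of address sets, one per inferred entity."""
    uf = UnionFind()
    for inputs in tx_inputs:
        for addr in inputs:
            uf.find(addr)  # register single-input addresses as well
        for addr in inputs[1:]:
            uf.union(inputs[0], addr)
    clusters = {}
    for addr in list(uf.parent):
        clusters.setdefault(uf.find(addr), set()).add(addr)
    return list(clusters.values())


clusters = cluster_by_common_inputs([["a", "b"], ["b", "c"], ["d"], ["e", "f"]])
print(sorted(sorted(c) for c in clusters))  # prints [['a', 'b', 'c'], ['d'], ['e', 'f']]
```

Union-find makes the merge transitive: `a` and `c` never appear in the same transaction, yet they end up in one cluster because both co-spend with `b`.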

Quantitative analysts often borrow Greeks-style sensitivity analysis from options pricing to monitor systemic risk, assessing how the liquidation of large positions held by a single entity might propagate through interconnected protocols. This approach treats the entire blockchain as a single, global order book in which liquidity and risk are continuously re-evaluated.

Evolution

The field has matured from manual address tagging to automated, AI-driven surveillance systems capable of monitoring thousands of transactions per second. Initially, the focus was largely on theft recovery; today, the scope includes market manipulation detection and systemic contagion analysis.

Market participants now use forensic insights to inform institutional-grade risk management. The shift toward decentralized finance demanded a deeper understanding of smart contract interactions, because simple wallet tracking proved insufficient to monitor complex, multi-hop derivative positions. Sometimes I wonder whether the pursuit of absolute transparency contradicts the original ethos of decentralized finance; even so, the market demands these tools for survival.

The transition toward sophisticated, cross-chain forensic capabilities indicates that the industry is moving from experimental status toward institutional maturity.

Horizon

The future of the discipline involves real-time, on-chain risk mitigation integrated directly into protocol logic. Future forensic engines will likely operate as autonomous agents, monitoring for potential exploits or liquidity crises before they manifest as systemic failures.
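
Risk mitigation embedded in protocol logic can be modeled, in its simplest form, as an outflow circuit breaker. The Python class below is a hypothetical model rather than an on-chain contract: it tracks withdrawals in a sliding time window and halts further withdrawals once they would exceed a set fraction of reserves. The 20% limit and one-hour window are placeholder values.

```python
from collections import deque


class OutflowCircuitBreaker:
    """Hypothetical guard: trips when withdrawals inside a sliding time
    window would exceed `max_fraction` of the reserves given at construction."""

    def __init__(self, reserves, max_fraction=0.2, window_seconds=3600):
        self.limit = reserves * max_fraction
        self.window = window_seconds
        self.events = deque()  # (timestamp, amount) of accepted withdrawals
        self.total = 0.0
        self.tripped = False

    def record_withdrawal(self, timestamp, amount):
        """Return True if the withdrawal is allowed, False if it trips the
        breaker or the breaker has already tripped."""
        if self.tripped:
            return False
        # Drop withdrawals that have aged out of the window.
        while self.events and self.events[0][0] <= timestamp - self.window:
            _, old_amount = self.events.popleft()
            self.total -= old_amount
        if self.total + amount > self.limit:
            self.tripped = True  # halt until manually reset
            return False
        self.events.append((timestamp, amount))
        self.total += amount
        return True


breaker = OutflowCircuitBreaker(reserves=1_000)
print(breaker.record_withdrawal(0, 150))   # prints True
print(breaker.record_withdrawal(10, 40))   # prints True
print(breaker.record_withdrawal(20, 20))   # prints False
```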

As zero-knowledge proofs become more prevalent, the challenge for investigators will evolve from simple tracking to cryptographic verification of intent and compliance. This will necessitate a move toward probabilistic forensic models, where the certainty of attribution is balanced against the privacy guarantees inherent in next-generation cryptographic primitives. What remains unresolved is the tension between regulatory requirements for universal identification and the technical feasibility of maintaining privacy in a decentralized, borderless financial system.
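
A probabilistic attribution model of the kind described can be sketched with Bayes' rule in log-odds form: start from a prior probability that two addresses share an owner, then update it with a likelihood ratio for each heuristic that fires. The sketch assumes the heuristics are conditionally independent (a naive-Bayes simplification), and the prior and ratios in the example are invented for illustration.

```python
import math


def attribution_probability(prior, likelihood_ratios):
    """Posterior probability of common ownership after applying each
    heuristic's likelihood ratio to the prior odds (naive Bayes)."""
    if not 0 < prior < 1:
        raise ValueError("prior must be strictly between 0 and 1")
    log_odds = math.log(prior / (1 - prior))
    for lr in likelihood_ratios:
        if lr <= 0:
            raise ValueError("likelihood ratios must be positive")
        log_odds += math.log(lr)
    return 1 / (1 + math.exp(-log_odds))


# Hypothetical: 10% prior, one heuristic with LR 4 and another with LR 3.
print(round(attribution_probability(0.1, [4, 3]), 4))  # prints 0.5714
```

Working in log-odds keeps the update additive and numerically stable, and a ratio below 1 (evidence against common ownership) lowers the posterior in the same framework.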