Essence

Blockchain Data Validation is the mechanism that ensures the integrity of state transitions in distributed ledger environments. It functions as a cryptographic gatekeeper, confirming that every proposed state change adheres to the protocol's consensus rules. Without this validation layer, nodes could no longer agree on a single ledger state, and asset ownership and derivative settlement would become unenforceable.

Blockchain Data Validation serves as the fundamental verification architecture that enforces protocol rules to ensure the absolute integrity of decentralized state transitions.

At its functional center, this process involves the rigorous verification of cryptographic signatures, transaction sequencing, and balance availability. Participants tasked with this function, whether validators, sequencers, or relayers, operate under adversarial conditions where economic incentives are designed to align rational behavior with network security. The resulting output is not just a data point, but a verifiable proof of validity that underpins the entire trustless financial stack.
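The three checks named above (signature, sequencing, balance) can be sketched as follows. This is a minimal illustration, not any protocol's actual rule set: the `Tx` type, the `validate` function, and the account data are hypothetical, and HMAC-SHA256 stands in for the asymmetric signature schemes that real chains use.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    recipient: str
    amount: int
    nonce: int
    signature: bytes = b""

    def payload(self) -> bytes:
        # Canonical byte encoding of the fields covered by the signature.
        return f"{self.sender}|{self.recipient}|{self.amount}|{self.nonce}".encode()


def sign(tx: Tx, key: bytes) -> Tx:
    # ASSUMPTION: HMAC is a stand-in; real chains use asymmetric signatures.
    sig = hmac.new(key, tx.payload(), hashlib.sha256).digest()
    return Tx(tx.sender, tx.recipient, tx.amount, tx.nonce, sig)


def validate(tx: Tx, keys: dict, balances: dict, nonces: dict) -> str:
    key = keys.get(tx.sender)
    if key is None:
        return "unknown sender"
    # 1. Signature: the payload must have been authorized by the sender.
    expected = hmac.new(key, tx.payload(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, tx.signature):
        return "bad signature"
    # 2. Sequencing: the nonce must match the sender's next expected value.
    if tx.nonce != nonces.get(tx.sender, 0):
        return "bad nonce"
    # 3. Balance availability: the sender must be able to cover the amount.
    if tx.amount <= 0:
        return "invalid amount"
    if balances.get(tx.sender, 0) < tx.amount:
        return "insufficient balance"
    return "ok"


keys = {"alice": b"alice-secret"}
balances = {"alice": 100}
nonces = {"alice": 0}

good = sign(Tx("alice", "bob", 40, 0), keys["alice"])
out_of_order = sign(Tx("alice", "bob", 40, 5), keys["alice"])
overdraft = sign(Tx("alice", "bob", 500, 0), keys["alice"])
forged = Tx("alice", "bob", 40, 0, b"\x00" * 32)

results = [validate(t, keys, balances, nonces)
           for t in (good, out_of_order, overdraft, forged)]
print(results)
```

Running the sketch prints `['ok', 'bad nonce', 'insufficient balance', 'bad signature']`: each rejected transaction fails exactly one of the three checks.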

Origin

The historical trajectory of Blockchain Data Validation traces back to the genesis of decentralized consensus models.

Early implementations utilized basic proof-of-work, where computational energy expenditure served as the primary proxy for validation integrity. This approach prioritized censorship resistance and network security above throughput, establishing a baseline for trustless verification that subsequent protocols have refined.

- Proof of Work established the initial paradigm, where computational difficulty made it prohibitively expensive to rewrite blocks of validated transactions.

- Proof of Stake introduced capital-at-risk as the primary mechanism for ensuring validator honesty.

- Zero Knowledge Proofs shifted the focus toward mathematical verifiability without requiring full data exposure.

As market participants demanded greater capital efficiency, the focus transitioned from raw computational power to sophisticated stake-based and proof-based architectures. This evolution reflects a broader shift toward optimizing the trade-off between decentralized security and the latency requirements of high-frequency financial derivatives. The architecture has moved from monolithic verification to modular, tiered validation layers, facilitating the complex settlement requirements of contemporary decentralized options markets.

Theory

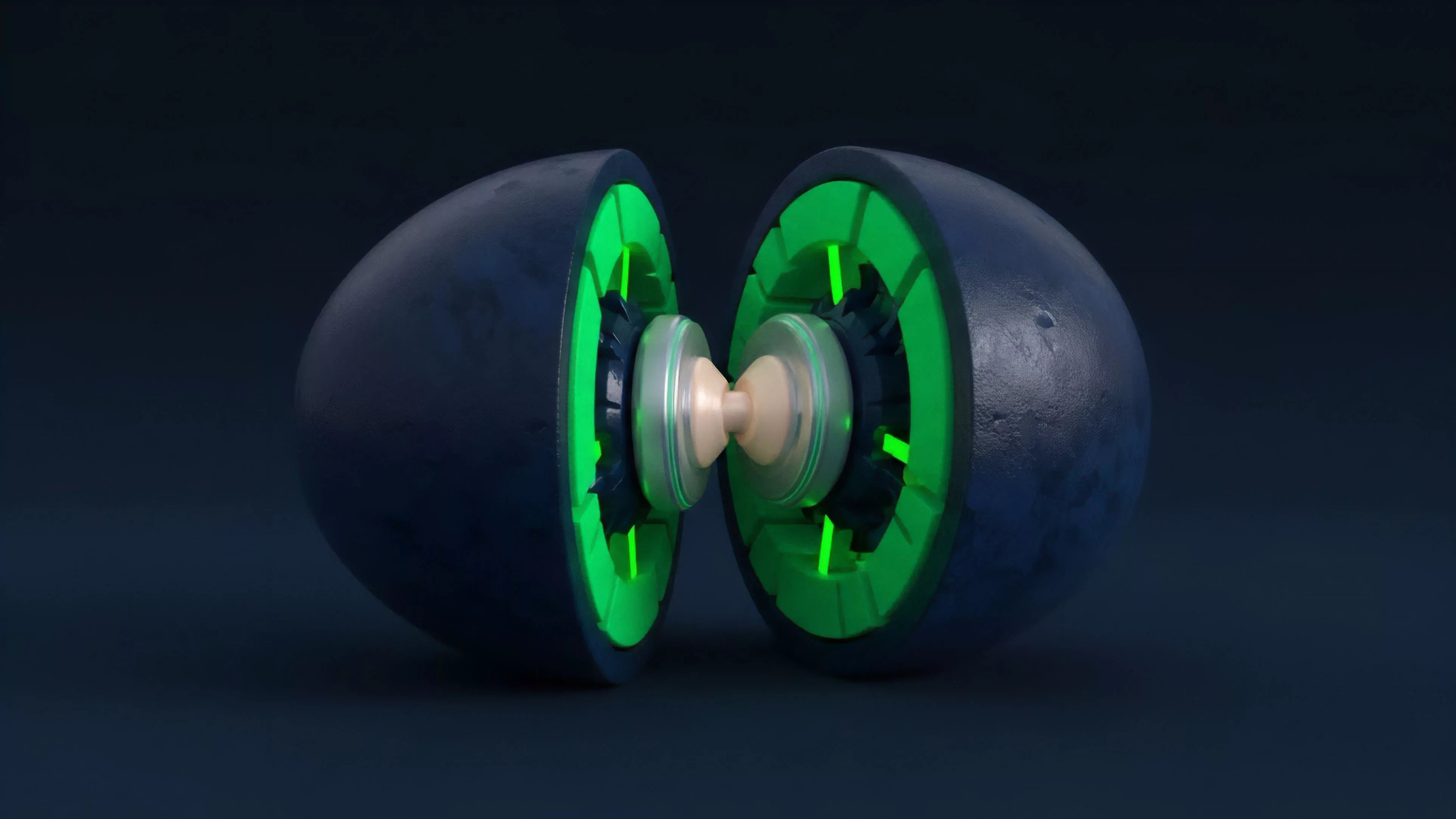

The theoretical framework governing Blockchain Data Validation relies heavily on the intersection of game theory and distributed systems engineering.

Validators must resolve the Byzantine Generals Problem, ensuring consensus despite potential malicious actors. In the context of derivatives, this validation process directly impacts margin engine accuracy and liquidation efficiency, as the state of the underlying asset must be reflected with high fidelity.

The integrity of decentralized derivative pricing depends entirely on the speed and accuracy of the underlying validation process to prevent oracle manipulation.

Mathematical modeling of this process often involves assessing the cost of corruption against the value of the network. If the cost to compromise validation exceeds the potential gain from malicious state manipulation, the system remains secure. This equilibrium is delicate, particularly in environments where liquidity fragmentation across multiple chains creates opportunities for arbitrage or coordinated attacks on the validation layer.
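The security condition described above reduces to a single inequality. The sketch below assumes a simplified stake-based model in which the attacker must control a fraction of total stake and loses a slashed portion of it. The function names, parameters, and figures are hypothetical, and real protocols differ in thresholds and slashing rules.

```python
def cost_of_corruption(total_stake: float, attack_fraction: float,
                       slash_rate: float) -> float:
    """Capital the attacker expects to lose: the stake needed to control
    `attack_fraction` of the validator set, times the share that is slashed."""
    return total_stake * attack_fraction * slash_rate


def is_economically_secure(total_stake: float, attack_fraction: float,
                           slash_rate: float, extractable_value: float) -> bool:
    """Secure when corrupting validation costs more than it can earn."""
    cost = cost_of_corruption(total_stake, attack_fraction, slash_rate)
    return cost > extractable_value


# Hypothetical figures, in millions of a quote currency.
small_target = is_economically_secure(1_000.0, 0.5, 1.0, extractable_value=300.0)
large_target = is_economically_secure(1_000.0, 0.5, 1.0, extractable_value=800.0)
print(small_target, large_target)
```

With these numbers the cost of corruption is 500, so the network is secure while 300 is extractable but not once 800 is. This illustrates why growth in the value secured by a validation layer has to be matched by growth in the capital at risk.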

| Validation Mechanism | Latency Profile | Security Basis |
| --- | --- | --- |
| Optimistic Rollups | High | Fraud Proofs |
| ZK Rollups | Low | Validity Proofs |
| Validator Sets | Moderate | Economic Stake |

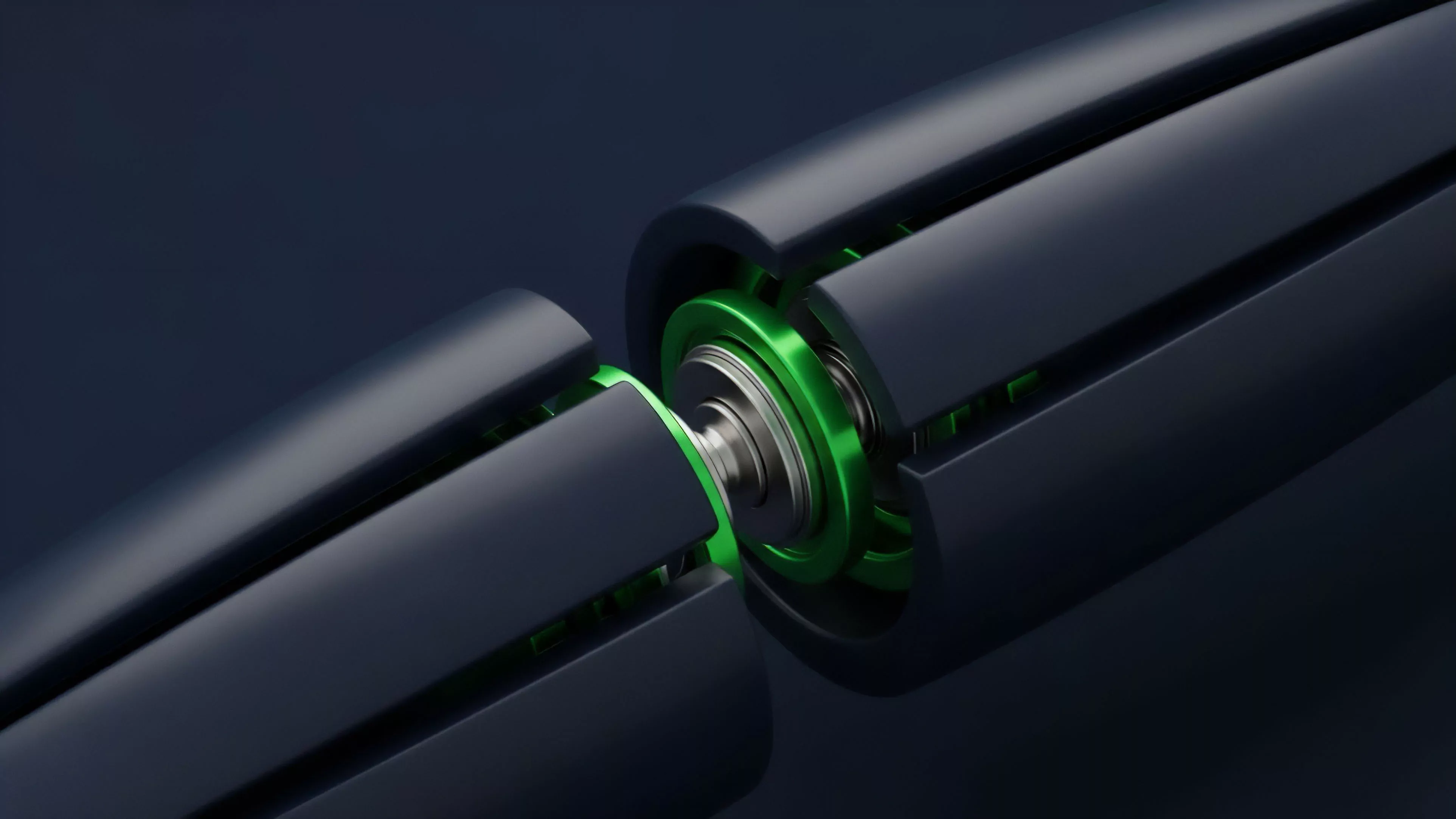

Internally, validation operates as a state machine in which every transaction is treated as a function call modifying the global ledger. At the network level, the propagation delay of validation messages becomes the primary constraint on system performance. This reality forces architects to choose between synchronous settlement, which offers the strongest security guarantees but limits throughput, and asynchronous settlement, which improves performance while introducing systemic risks related to finality.
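The state-machine view can be made concrete with a pure transition function. The sketch below is illustrative only: `apply_tx`, `state_root`, and the account data are hypothetical, and the hash of a JSON encoding stands in for the Merkle-style commitments that production ledgers use.

```python
import hashlib
import json
from functools import reduce


def apply_tx(state: dict, tx: tuple) -> dict:
    """Pure transition function: returns a new state, never mutates the old one."""
    sender, recipient, amount = tx
    if amount <= 0 or state.get(sender, 0) < amount:
        return state  # invalid transition: state is left unchanged
    new_state = dict(state)
    new_state[sender] = new_state[sender] - amount
    new_state[recipient] = new_state.get(recipient, 0) + amount
    return new_state


def state_root(state: dict) -> str:
    # ASSUMPTION: a hash of the sorted JSON encoding stands in for a real
    # state commitment such as a Merkle root.
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def replay(genesis: dict, txs: list) -> dict:
    """Fold the ordered transactions over the genesis state."""
    return reduce(apply_tx, txs, genesis)


genesis = {"alice": 50, "bob": 0}
txs = [("alice", "bob", 50), ("bob", "carol", 30)]

replica_a = replay(genesis, txs)
replica_b = replay(genesis, txs)
reordered = replay(genesis, list(reversed(txs)))

print(state_root(replica_a) == state_root(replica_b))   # same order, same root
print(state_root(replica_a) == state_root(reordered))   # different order, different root
```

Two replicas that apply the same transactions in the same order arrive at the same commitment, while the reordered run does not, because bob's transfer is rejected when it is processed before he has been funded. This is why agreement on ordering has to precede agreement on state.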

Approach

Contemporary implementations of Blockchain Data Validation prioritize modularity and interoperability.

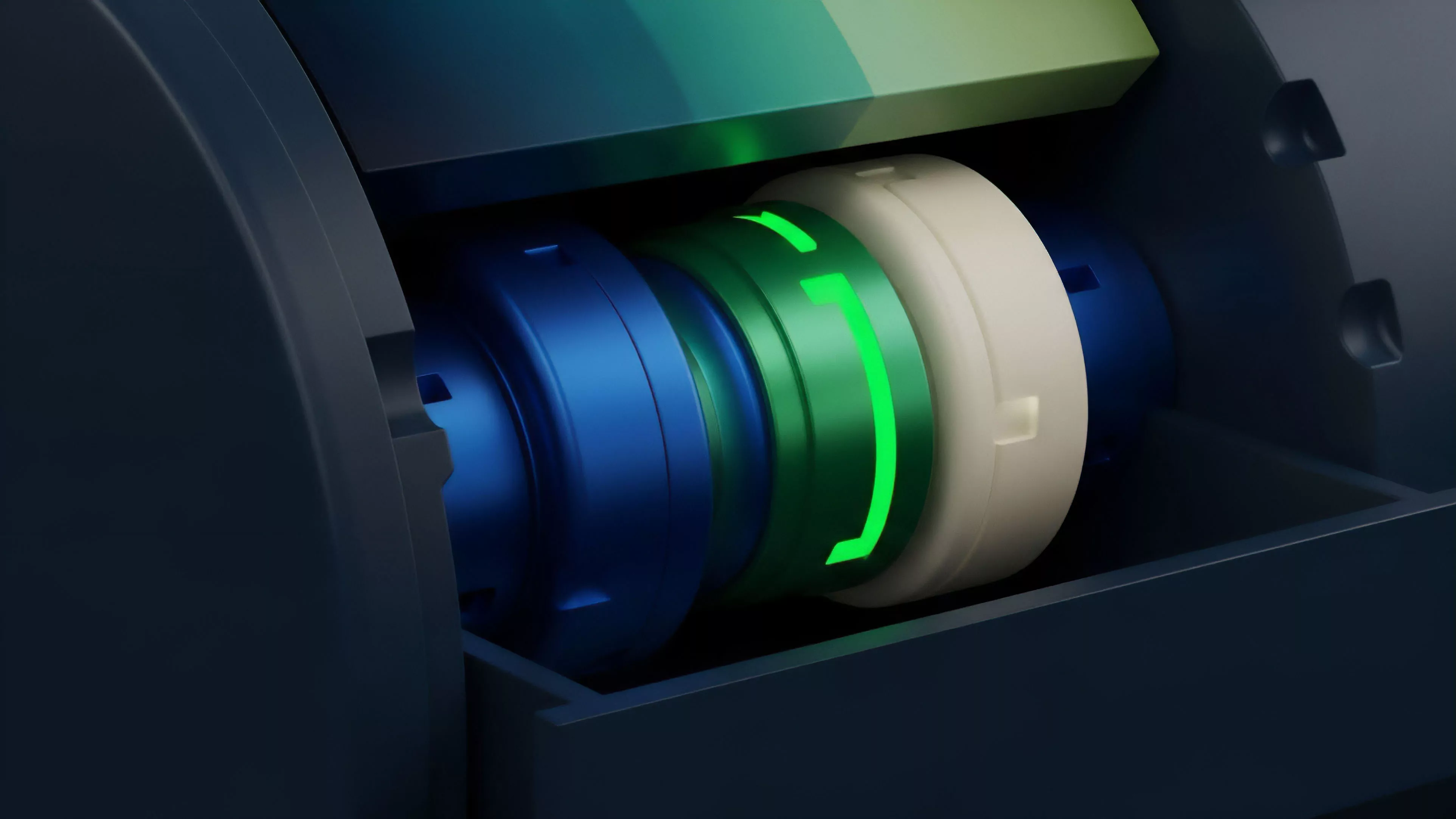

Current architectures utilize specialized execution environments where validation is decoupled from consensus, allowing for localized high-performance computation. This separation enables protocols to scale transaction throughput without sacrificing the foundational security guarantees of the underlying settlement layer.

- Sequencing involves the ordering of transactions before they are submitted for final validation.

- Verification checks the mathematical correctness of state transitions against current ledger parameters.

- Settlement updates the global state to reflect the validated changes permanently.
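The three stages above can be sketched as separate functions, which mirrors the way modular designs assign them to different components. All names here (`Transfer`, `Ledger`, `sequence`, `verify`, `settle`) are hypothetical, and first-come-first-served ordering is an assumption; real sequencers may order by fee or other policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    arrival: int      # hypothetical arrival time at the sequencer
    sender: str
    recipient: str
    amount: int


@dataclass
class Ledger:
    balances: dict
    height: int = 0


def sequence(pending: list) -> list:
    """Sequencing: fix a total order (assumed here: first come, first served)."""
    return sorted(pending, key=lambda t: t.arrival)


def verify(balances: dict, ordered: list) -> tuple:
    """Verification: check each transition against a working copy of the state."""
    working = dict(balances)
    accepted = []
    for t in ordered:
        if t.amount > 0 and working.get(t.sender, 0) >= t.amount:
            working[t.sender] -= t.amount
            working[t.recipient] = working.get(t.recipient, 0) + t.amount
            accepted.append(t)
    return accepted, working


def settle(ledger: Ledger, new_balances: dict) -> None:
    """Settlement: commit the verified state and advance the block height."""
    ledger.balances = new_balances
    ledger.height += 1


ledger = Ledger({"alice": 10, "bob": 0})
pending = [
    Transfer(3, "bob", "carol", 8),
    Transfer(1, "alice", "bob", 10),
    Transfer(2, "alice", "carol", 5),
]

ordered = sequence(pending)
accepted, new_balances = verify(ledger.balances, ordered)
settle(ledger, new_balances)
print(ledger)
```

Because verification works on a copy, nothing reaches the ledger until `settle` runs. In this run the second transfer is dropped, since alice has already spent her balance by the time it is checked.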

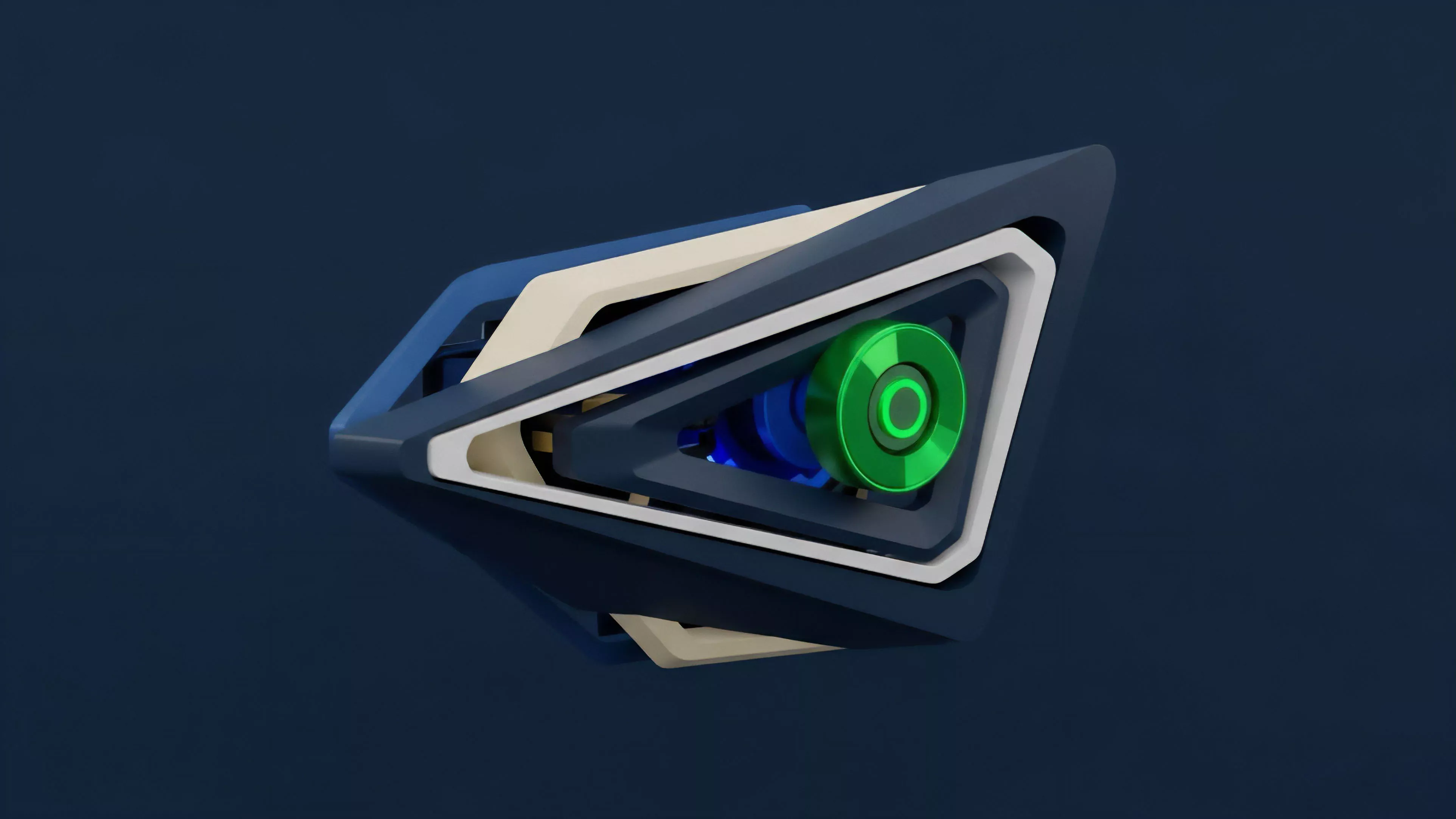

Modular validation architectures enable high-throughput derivative trading by decoupling execution speed from global consensus finality.

Market makers and derivative platforms currently leverage these validation layers to maintain accurate margin calculations and risk monitoring. The reliance on off-chain computation, later anchored by on-chain validity proofs, represents the state of the art in balancing the requirements of professional-grade trading with the constraints of decentralized infrastructure. This architecture is designed so that, even during periods of extreme volatility, the validation of liquidation events occurs with the necessary speed to protect protocol solvency.
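As a rough illustration of why margin monitoring depends on validated state, the sketch below checks a position against a maintenance threshold using a mark price. It assumes a simple linear long position rather than an option, and the `Position` type, the 5% maintenance ratio, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    collateral: float   # posted margin, in quote currency
    size: float         # units of the underlying held long
    entry_price: float


def margin_ratio(pos: Position, mark_price: float) -> float:
    """Equity (collateral plus unrealized PnL) divided by current notional."""
    equity = pos.collateral + pos.size * (mark_price - pos.entry_price)
    notional = pos.size * mark_price
    return equity / notional


def is_liquidatable(pos: Position, mark_price: float,
                    maintenance: float = 0.05) -> bool:
    # ASSUMPTION: mark_price comes from already-validated state; a stale or
    # manipulated price would make this check meaningless.
    return margin_ratio(pos, mark_price) < maintenance


pos = Position(collateral=200.0, size=1.0, entry_price=2000.0)
print(is_liquidatable(pos, 2000.0))   # ratio is 200 / 2000
print(is_liquidatable(pos, 1850.0))   # ratio is 50 / 1850
```

The arithmetic is trivial, so the check is only as reliable as its price input, which is why the speed and correctness of the validation layer bear directly on protocol solvency.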

Evolution

The path toward current Blockchain Data Validation has been defined by the constant pressure to reduce latency and increase throughput.

Initially, validation was a slow, global process that hindered the development of complex financial instruments. The introduction of sharding and layer-two scaling solutions shifted the burden of validation to more efficient, specialized sub-networks. The move toward validity-based systems represents a significant departure from earlier models.

By utilizing succinct cryptographic proofs, protocols can now verify the validity of an entire block of transactions in milliseconds, even though generating the proof itself takes considerably longer. Fast verification is a requirement for the sophisticated order flow of decentralized options markets. This shift also changes how risk must be assessed, as the security of a derivative contract now depends on the mathematical correctness of the proof system rather than the reputation of a centralized intermediary. Some of the most significant technical breakthroughs have come from adjacent areas of the industry, such as the application of game theory to distributed oracle networks.

This constant iteration ensures that the validation layer remains resilient against both external market shocks and internal malicious activity. The focus has moved from simple transaction counting to the validation of complex, programmable financial logic that defines the future of decentralized derivatives.

Horizon

Future developments in Blockchain Data Validation will likely center on the total abstraction of validation complexity from the end-user. As protocols mature, the validation layer will become an invisible utility, providing near-instant finality for complex multi-asset derivatives.

This will allow for the integration of traditional financial products into decentralized venues with minimal friction.

| Development Area | Expected Impact |
| --- | --- |
| Hardware Acceleration | Reduced validation latency |
| Cross-Chain Validation | Unified liquidity pools |
| Recursive Proofs | Scalable global state |

The convergence of cryptographic security and high-speed execution will eventually render the distinction between centralized and decentralized validation negligible. This evolution points toward a future where financial settlement is universally verifiable, automated, and resistant to human interference. The ultimate objective remains the creation of a robust financial operating system where the validation of data is the primary, immutable foundation for all value exchange.