Essence

Blockchain Data Mining is the systematic extraction, indexing, and interpretation of raw ledger state transitions to derive actionable market intelligence. The practice turns the immutable, transparent record of decentralized protocols into a structured information layer. By parsing blocks, transaction inputs, and contract interactions, participants reconstruct the underlying activity of decentralized exchanges and lending markets.

Blockchain Data Mining transforms raw immutable ledger data into structured intelligence for market participants.

This analytical process identifies liquidity concentrations, whale movements, and counterparty exposure that are difficult to see in standard block explorers, which present individual transactions rather than aggregated positions. Its significance lies in quantifying risk in near real time, so that participants can adjust positions before systemic instability manifests. It is also a primary means of auditing protocol health and verifying the actual distribution of risk across decentralized financial venues.

Origin

The genesis of Blockchain Data Mining resides in the early requirement for public node operators to index transactions for rapid retrieval.

As decentralized finance protocols matured, the necessity for sophisticated query engines surpassed simple block height tracking. Developers began constructing specialized indexing services to map complex smart contract events, allowing for the observation of granular interactions within automated market makers and collateralized debt positions.

- Transaction Graph Analysis enabled the tracing of capital flow across fragmented liquidity pools.

- Event Log Indexing made it possible to monitor state changes triggered by smart contract functions whose internal effects are otherwise hard to observe.

- State Trie Reconstruction allowed analysts to snapshot protocol solvency at any historical block height.
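As a rough illustration of the first technique, transaction graph analysis can be sketched as a directed graph of transfers searched breadth-first. The addresses, amounts, and function names below are hypothetical, and the sketch deliberately ignores transaction ordering and amounts, which a real tracing pipeline would need to respect.

```python
from collections import defaultdict, deque

# Hypothetical decoded transfers: (sender, receiver, amount).
transfers = [
    ("wallet_a", "pool_1", 100.0),
    ("pool_1", "wallet_b", 60.0),
    ("wallet_b", "pool_2", 55.0),
    ("wallet_c", "pool_2", 10.0),
]


def build_graph(transfers):
    """Map each sender to the list of (receiver, amount) edges it funded."""
    graph = defaultdict(list)
    for sender, receiver, amount in transfers:
        graph[sender].append((receiver, amount))
    return graph


def reachable_from(graph, source):
    """Breadth-first search for every address downstream of `source`."""
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        # .get avoids inserting empty entries into the defaultdict.
        for receiver, _amount in graph.get(node, []):
            if receiver not in seen:
                seen.add(receiver)
                queue.append(receiver)
    seen.discard(source)
    return seen


graph = build_graph(transfers)
print(sorted(reachable_from(graph, "wallet_a")))
# prints ['pool_1', 'pool_2', 'wallet_b']
```

The result is only an upper bound on where funds from `wallet_a` could have gone, since reachability alone does not prove that a specific unit of capital moved along the path.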

This evolution was driven by the adversarial nature of decentralized markets, where participants needed to monitor liquidations and flash loan activity to protect their capital. The transition from basic data parsing to advanced market surveillance represents a fundamental shift in how decentralized systems are evaluated and traded.

Theory

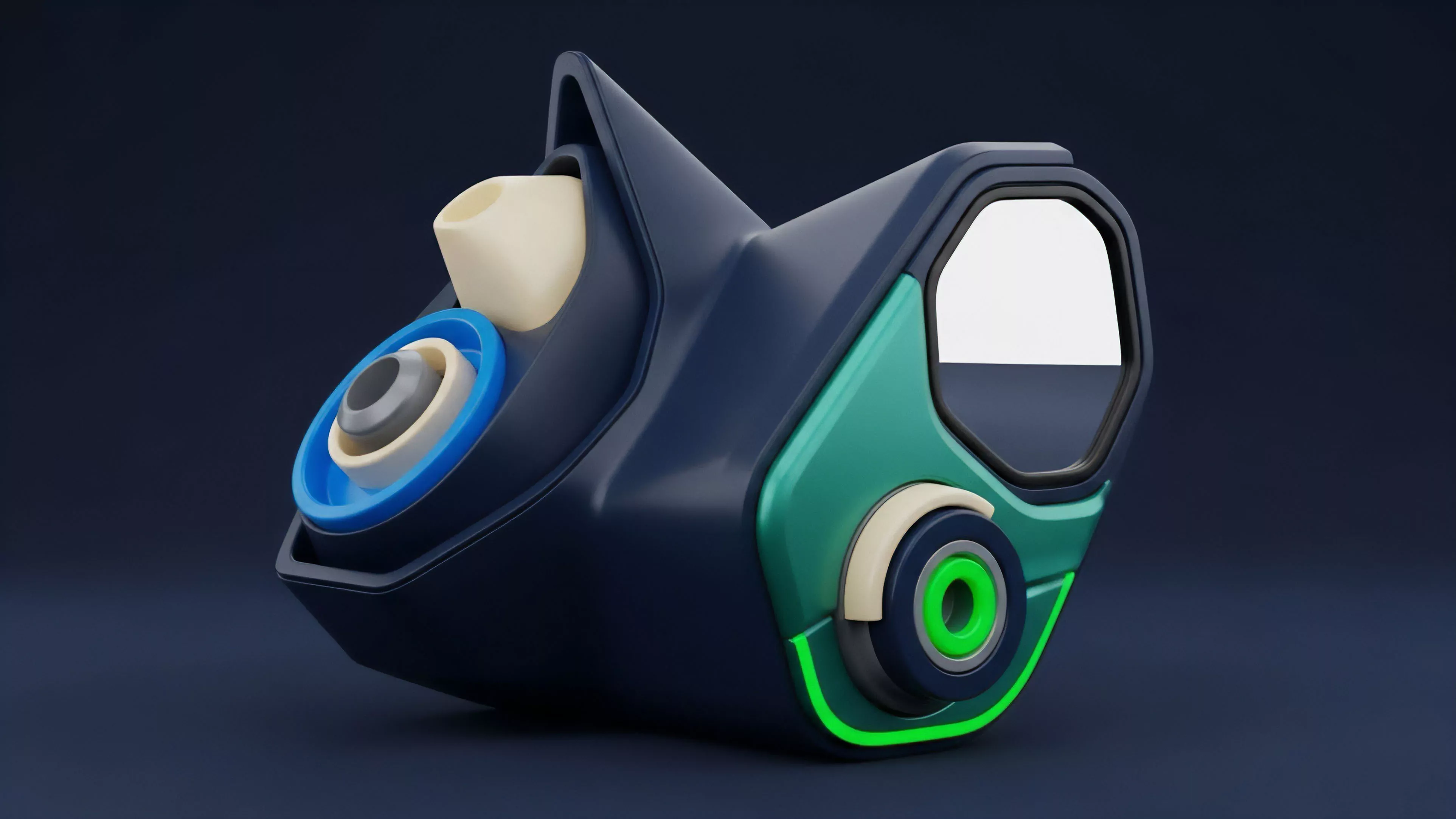

The theoretical framework of Blockchain Data Mining rests on the principle of protocol physics, where every state change is recorded and verifiable. Participants model market microstructure by aggregating these state changes into coherent time-series data.

This allows for the calculation of volatility skew, liquidity depth, and order flow toxicity, which are essential components for pricing derivative instruments.

Protocol physics dictates that all market activity remains recorded, allowing for precise reconstruction of trade flow.

Quantitative models utilize this data to calculate the Greeks (Delta, Gamma, Vega, and Theta) within decentralized options markets. By analyzing the frequency and size of contract interactions, analysts determine the probability of liquidation events or shifts in market sentiment. The strategic interaction between participants creates a game-theoretic environment where data asymmetry provides a distinct edge in executing arbitrage or hedging strategies.
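The text does not say which pricing model is used, so the following is a minimal sketch that assumes the standard Black-Scholes model for a European call with no dividends. The function names and sample inputs are illustrative; in practice the spot price and volatility would be estimated from on-chain data.

```python
import math


def norm_pdf(x):
    """Standard normal probability density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, rate, vol, t):
    """Black-Scholes Greeks for a European call.

    `t` is time to expiry in years; vega is per 1.00 change in volatility
    and theta is per year (assumed conventions, not taken from the text).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    vega = spot * norm_pdf(d1) * sqrt_t
    theta = (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
             - rate * strike * math.exp(-rate * t) * norm_cdf(d2))
    return {"delta": delta, "gamma": gamma, "vega": vega, "theta": theta}


# Hypothetical at-the-money option: 50% annualized volatility, one year out.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.5, t=1.0)
for name, value in greeks.items():
    print(f"{name}: {value:.4f}")
```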

| Metric | Financial Significance | Risk Implication |
|---|---|---|
| Liquidity Depth | Determines execution slippage | High impact on liquidation efficiency |
| Contract Utilization | Reflects protocol demand | Indicator of systemic leverage |
| Transaction Latency | Influences arbitrage opportunity | Proxy for network congestion risk |
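To make the link between liquidity depth and execution slippage concrete, here is a small sketch that assumes a constant-product automated market maker (reserves satisfying x * y = k) with a proportional swap fee. The pool sizes, the 0.3% default fee, and the function names are assumptions for illustration only.

```python
def swap_output(reserve_in, reserve_out, amount_in, fee=0.003):
    """Tokens received from a constant-product pool for `amount_in`."""
    amount_in_after_fee = amount_in * (1.0 - fee)
    return reserve_out * amount_in_after_fee / (reserve_in + amount_in_after_fee)


def slippage(reserve_in, reserve_out, amount_in, fee=0.003):
    """Fractional shortfall of the execution price versus the pre-trade
    spot price. With a nonzero fee, the fee is included in the shortfall."""
    spot_price = reserve_out / reserve_in
    exec_price = swap_output(reserve_in, reserve_out, amount_in, fee) / amount_in
    return 1.0 - exec_price / spot_price


# Same trade size against a shallow and a deep hypothetical pool.
shallow = slippage(reserve_in=1_000.0, reserve_out=2_000.0, amount_in=10.0)
deep = slippage(reserve_in=100_000.0, reserve_out=200_000.0, amount_in=10.0)
print(f"shallow pool: {shallow:.4%}, deep pool: {deep:.4%}")
```

With the fee set to zero the shortfall reduces to `amount_in / (reserve_in + amount_in)`, which shows directly why deeper reserves mean less slippage for the same trade.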

Approach

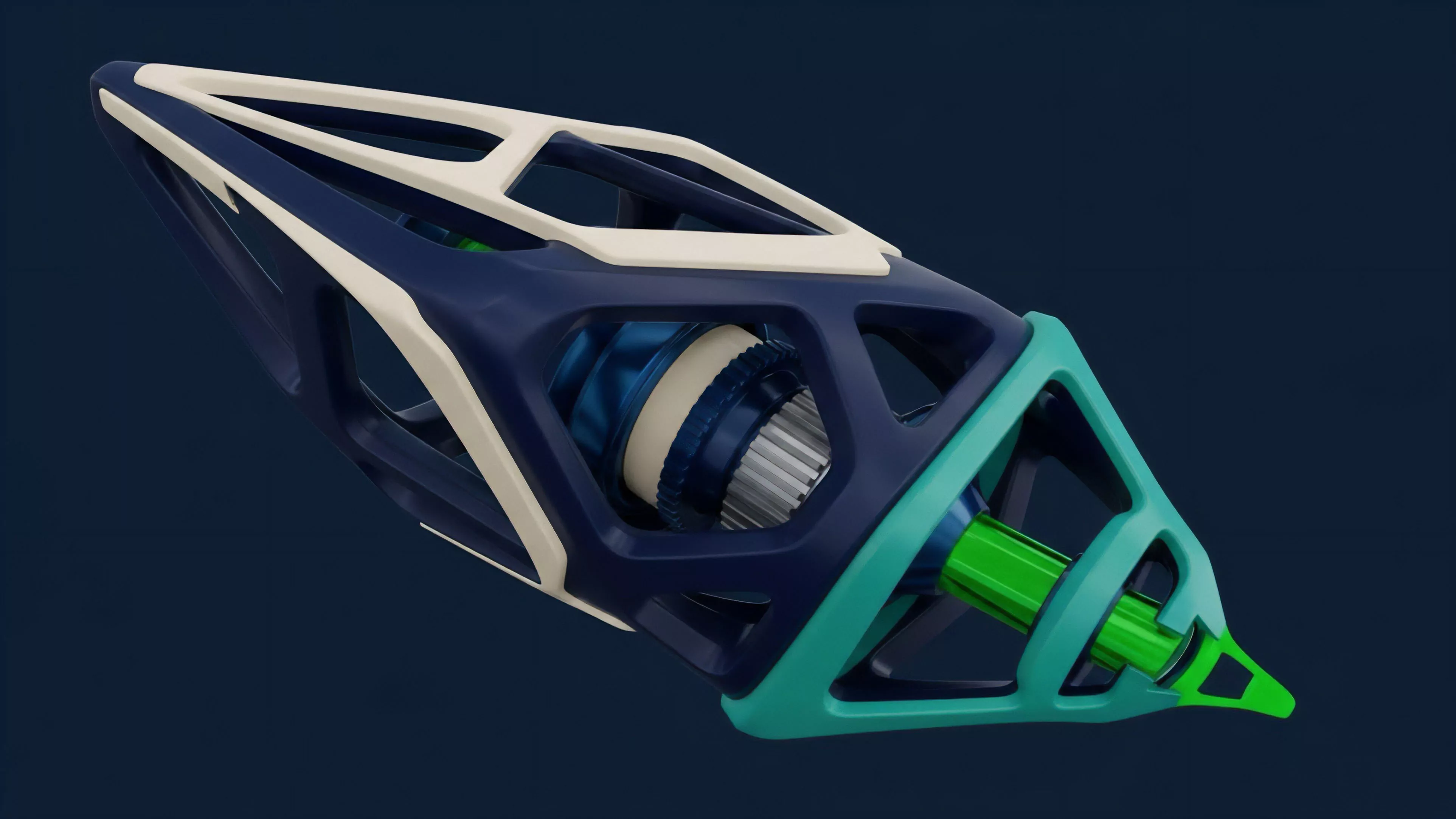

Current practitioners employ high-performance indexing engines to ingest raw node data, transforming it into optimized relational or graph databases. This allows for complex SQL or Cypher queries that reveal patterns in asset allocation and leverage. Analysts focus on identifying Liquidation Thresholds and Collateralization Ratios, which are the fundamental drivers of volatility in decentralized derivative markets.
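A minimal sketch of the two quantities named above follows. It assumes a single-collateral position and defines the liquidation threshold as a minimum collateralization ratio; real protocols define these terms differently, and the `Position` fields, the 1.5 threshold, and the prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_amount: float  # units of the collateral asset
    debt_value: float         # outstanding debt, in the quote currency


def collateralization_ratio(position, collateral_price):
    """Collateral value divided by debt value."""
    if position.debt_value == 0:
        return float("inf")  # no debt means the position cannot be liquidated
    return position.collateral_amount * collateral_price / position.debt_value


def is_liquidatable(position, collateral_price, liquidation_threshold=1.5):
    """True when the ratio has fallen below the assumed minimum ratio."""
    return collateralization_ratio(position, collateral_price) < liquidation_threshold


def liquidation_price(position, liquidation_threshold=1.5):
    """Collateral price at which the ratio equals the threshold."""
    return liquidation_threshold * position.debt_value / position.collateral_amount


position = Position(collateral_amount=10.0, debt_value=10_000.0)
print(collateralization_ratio(position, collateral_price=2_000.0))  # prints 2.0
print(liquidation_price(position))                                  # prints 1500.0
print(is_liquidatable(position, collateral_price=1_400.0))          # prints True
```

Running `liquidation_price` across every indexed position gives a rough map of the price levels where forced selling would cluster.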

Optimized indexing engines convert raw node output into actionable datasets for risk management.

The methodology involves constructing proprietary indicators that correlate on-chain activity with market price action. By filtering out noise from automated bot activity, analysts gain a clearer view of genuine market participant positioning. This rigor helps ground derivative strategies in verifiable data rather than speculation or sentiment-driven heuristics.

Evolution

The discipline has transitioned from basic block scanning to the deployment of decentralized oracle networks and modular data layers.

Early methods relied on centralized indexing, which introduced counterparty risk and latency issues. Current systems utilize distributed, verifiable compute nodes to ensure that the data processed is both accurate and censorship-resistant.

- Modular Indexing allows for faster synchronization with high-throughput chains.

- Zero-Knowledge Proofs now enable the verification of data integrity without exposing private transaction details.

- Predictive Analytics layers utilize historical state data to forecast potential liquidity crunches during periods of high volatility.

This trajectory reflects the broader push toward decentralizing the infrastructure layer itself. As protocols become more complex, the requirement for real-time, high-fidelity data feeds becomes the defining factor in competitive market participation. The focus is shifting toward predictive modeling that anticipates systemic failure points before they are triggered by automated liquidation agents.

Horizon

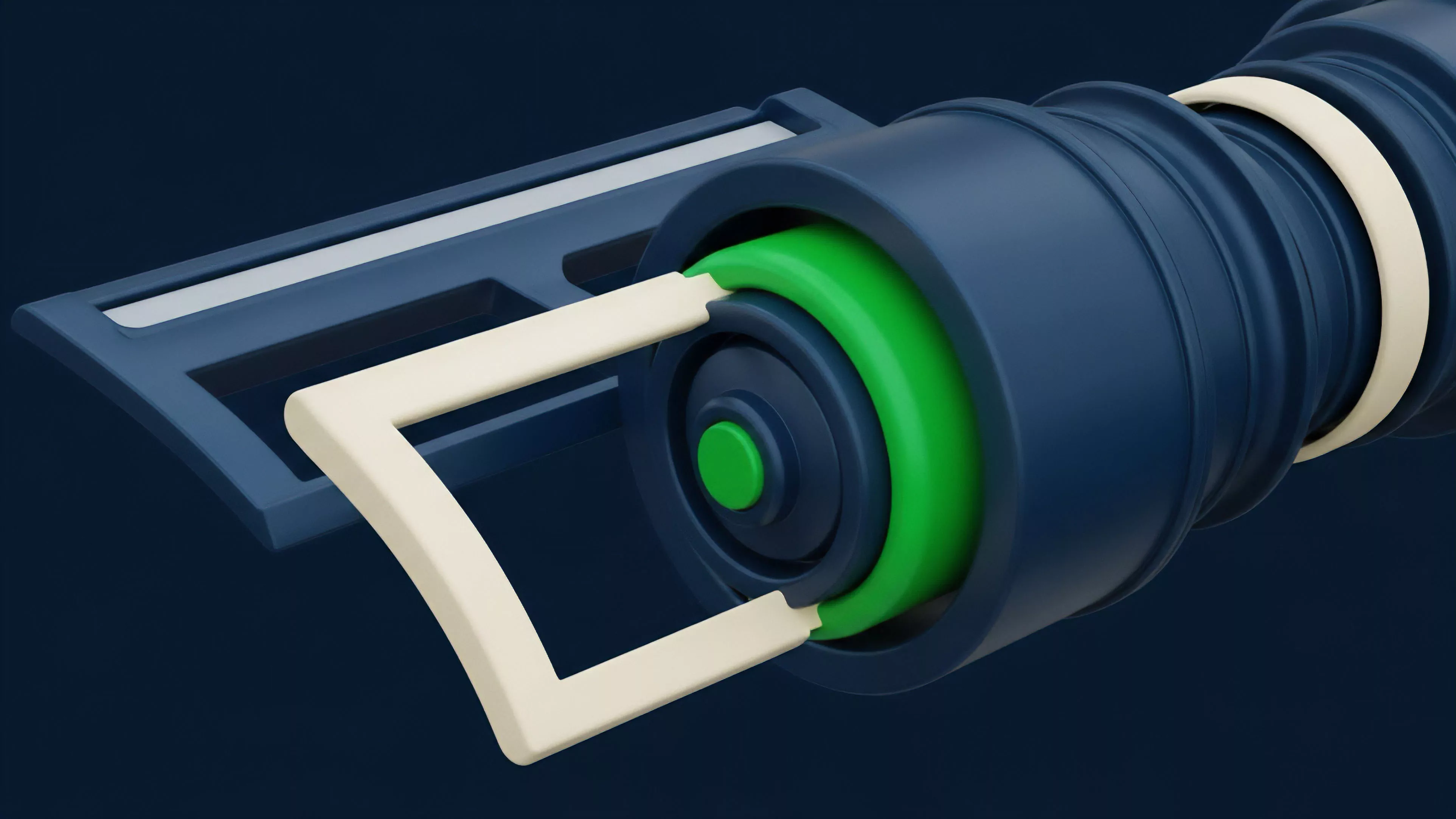

The future of Blockchain Data Mining points toward the integration of autonomous agents that execute hedging strategies based on real-time on-chain state updates.

These agents are expected to react to state updates within milliseconds, using predictive models to manage collateral risk dynamically. This represents the next phase of decentralized market efficiency, where liquidity is managed by code rather than manual intervention.

Autonomous agents will eventually replace manual risk management through real-time state analysis.

The convergence of Artificial Intelligence and Blockchain Data Mining will enable the automated detection of anomalous market behavior and potential exploit vectors. This will necessitate more robust protocol design, as the game-theoretic environment becomes increasingly automated and high-speed. The ability to process and act upon these data streams will determine the winners in the next cycle of decentralized financial innovation.

| Future Development | Systemic Impact |
|---|---|
| Autonomous Hedging | Reduced market impact of liquidations |
| Real-time Risk Oracles | Increased precision in margin requirements |
| Decentralized Data Verification | Elimination of reliance on centralized APIs |

The critical limitation remains the inherent latency between state confirmation and data processing, which continues to pose a challenge for ultra-high-frequency strategies. How will the next generation of consensus mechanisms solve the conflict between network throughput and the speed required for automated risk mitigation?