Essence

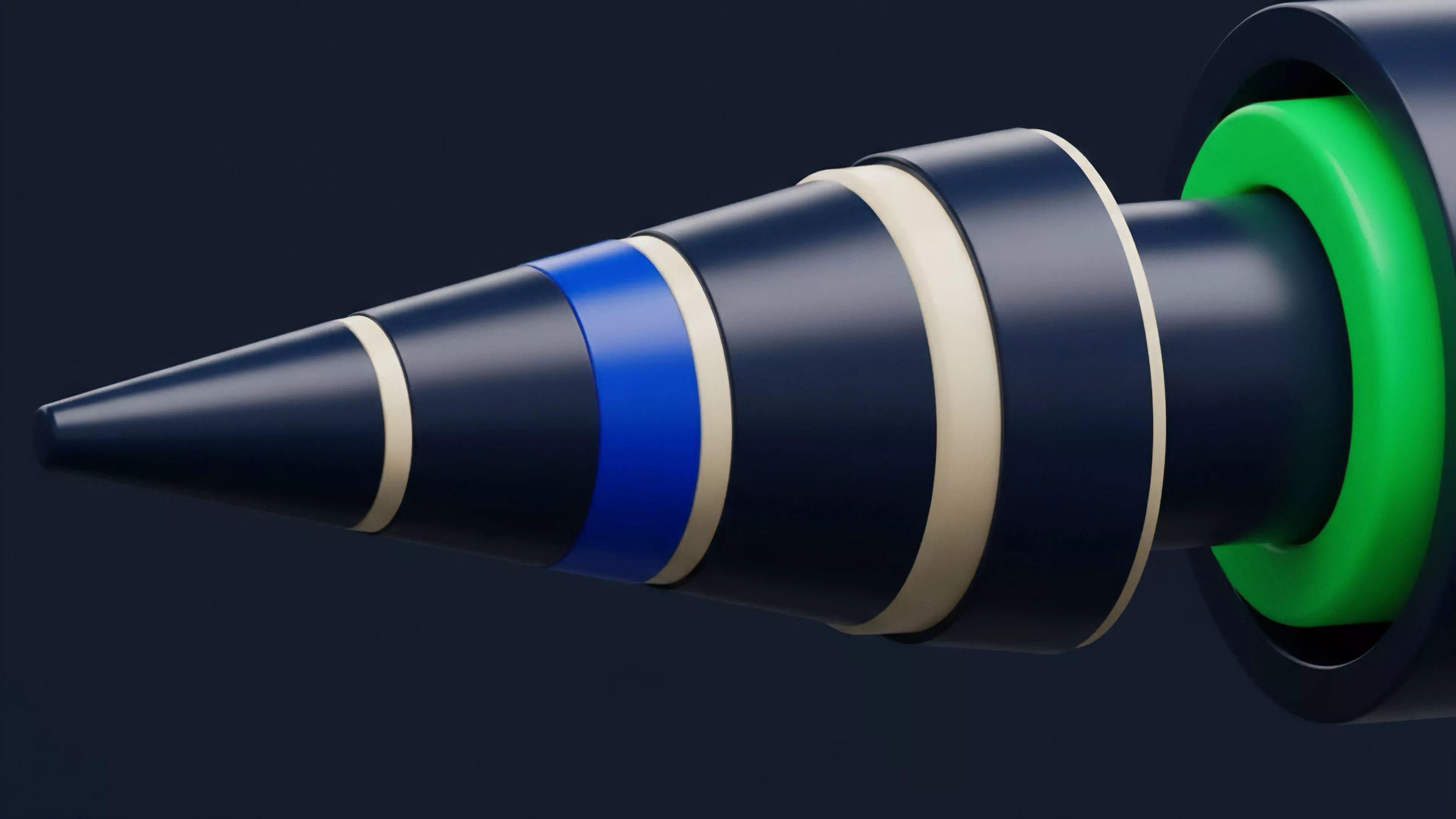

Blockchain Data Integration is the technical architecture that ingests, normalizes, and contextualizes distributed-ledger data for use in traditional financial systems. It connects low-level, encoded on-chain state transitions to the input requirements of quantitative risk engines. By transforming raw, event-driven blockchain logs into structured time-series datasets, it allows standardized derivative pricing models to be applied to decentralized assets.

Blockchain Data Integration functions as the essential translation layer between raw decentralized ledger states and the structured inputs required for institutional derivative valuation.
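Here is a minimal sketch of the log-to-time-series step. It assumes events have already been decoded into `(timestamp, price)` pairs. `SwapEvent` and `to_time_series` are hypothetical names, and last-observation-carried-forward is only one of several reasonable resampling rules.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class SwapEvent:
    """Hypothetical decoded event: block timestamp (seconds) and execution price."""
    timestamp: int
    price: float


def to_time_series(events: List[SwapEvent], start: int, end: int,
                   interval: int) -> List[Tuple[int, Optional[float]]]:
    """Resample irregular, block-driven events onto a regular time grid.

    Each grid point takes the price of the latest event at or before it
    (last observation carried forward). Grid points before the first event
    get None because no state has been observed yet.
    """
    ordered = sorted(events, key=lambda e: e.timestamp)
    series: List[Tuple[int, Optional[float]]] = []
    i = 0
    last_price: Optional[float] = None
    for t in range(start, end + 1, interval):
        # Consume every event that happened at or before this grid point.
        while i < len(ordered) and ordered[i].timestamp <= t:
            last_price = ordered[i].price
            i += 1
        series.append((t, last_price))
    return series


events = [SwapEvent(12, 100.0), SwapEvent(25, 101.5), SwapEvent(26, 101.0)]
print(to_time_series(events, 0, 30, 10))
# [(0, None), (10, None), (20, 100.0), (30, 101.0)]
```

Carrying the last value forward keeps the series free of gaps. The trade-off is that it can hide stale data, so a pipeline would likely also record how old each observation is.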

The systemic relevance of this integration lies in its ability to reduce the informational asymmetry of permissionless networks. Ledger data is public, but turning it into usable signals is costly, and that cost favors participants with dedicated infrastructure. Without standardized data pipelines, market participants operate with fragmented views of liquidity, collateralization, and counterparty risk. This integration provides a unified view of asset velocity and protocol health, forming the basis for professional-grade risk management in decentralized finance.

Origin

The need for robust Blockchain Data Integration emerged from the limitations of early decentralized exchange models, which lacked the low latency and data fidelity required for sophisticated trading.

Initial attempts at indexing relied on centralized, fragile scrapers that failed under the load of high-frequency on-chain activity. The evolution of dedicated indexing protocols and specialized node infrastructure addressed these structural vulnerabilities.

- Subgraphs provided an early standardized method for developers to define data schemas and query blockchain events, typically through GraphQL.

- Event Listeners evolved from simple polling mechanisms into architectures capable of capturing fine-grained smart contract state changes in real time.

- Data Oracles established the bridge between off-chain pricing benchmarks and on-chain settlement, necessitating rigorous verification mechanisms to prevent data manipulation.
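The basic polling pattern that early event listeners relied on can be sketched as follows. `get_head` and `get_logs` are hypothetical callables. On an EVM chain they might wrap the `eth_blockNumber` and `eth_getLogs` JSON-RPC methods. The confirmation depth is an assumed parameter, not a recommended value.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical simplified log record: (block_number, payload).
Log = Tuple[int, str]


def poll_once(get_head: Callable[[], int],
              get_logs: Callable[[int, int], Iterable[Log]],
              next_block: int,
              confirmations: int) -> Tuple[List[Log], int]:
    """One polling step that only reads blocks at least `confirmations` deep.

    Waiting for confirmations trades latency for protection against short
    chain reorganizations. Returns the fetched logs and the block number to
    start from on the next poll.
    """
    safe_head = get_head() - confirmations
    if safe_head < next_block:
        return [], next_block  # nothing new is deep enough yet
    logs = list(get_logs(next_block, safe_head))  # inclusive block range
    return logs, safe_head + 1


# In-memory stand-in for a node: logs keyed by block number.
fake_chain = {100: "a", 101: "b", 103: "c", 105: "d"}


def fake_get_logs(lo: int, hi: int) -> List[Log]:
    return [(b, p) for b, p in sorted(fake_chain.items()) if lo <= b <= hi]


logs, cursor = poll_once(lambda: 105, fake_get_logs, next_block=100, confirmations=2)
print(logs, cursor)  # [(100, 'a'), (101, 'b'), (103, 'c')] 104
```

Block 105 is skipped on this poll because it is not yet two blocks deep. The next call, starting from `cursor`, picks it up once the head advances.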

These developments shifted the focus from simple transaction history tracking to the reconstruction of complex protocol states. Market participants recognized that accurate price discovery in decentralized options requires more than just trade execution data; it demands a granular understanding of underlying liquidity pools, open interest distribution, and collateralization ratios across disparate protocols.
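Reconstructing protocol state, as opposed to tracking trades, means replaying events in order. Below is a minimal sketch. It assumes a hypothetical lending vault that emits deposit, withdraw, borrow, and repay events, with amounts already converted to one unit of account. A real pipeline would need to apply prices and token decimals first.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical event shape: (kind, account, amount), amounts in one unit of account.
Event = Tuple[str, str, float]


def replay_positions(events: Iterable[Event]) -> Tuple[Dict[str, float], Dict[str, float]]:
    """Fold a chronological event stream into per-account collateral and debt."""
    collateral: Dict[str, float] = defaultdict(float)
    debt: Dict[str, float] = defaultdict(float)
    for kind, account, amount in events:
        if kind == "deposit":
            collateral[account] += amount
        elif kind == "withdraw":
            collateral[account] -= amount
        elif kind == "borrow":
            debt[account] += amount
        elif kind == "repay":
            debt[account] -= amount
        else:
            raise ValueError(f"unknown event kind: {kind}")
    return dict(collateral), dict(debt)


def collateral_ratio(collateral_value: float, debt_value: float) -> float:
    """Collateral value divided by debt value; infinite when there is no debt."""
    return float("inf") if debt_value <= 0 else collateral_value / debt_value


stream = [("deposit", "alice", 150.0), ("borrow", "alice", 100.0), ("repay", "alice", 20.0)]
collateral, debt = replay_positions(stream)
print(collateral_ratio(collateral["alice"], debt["alice"]))  # 1.875
```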

Theory

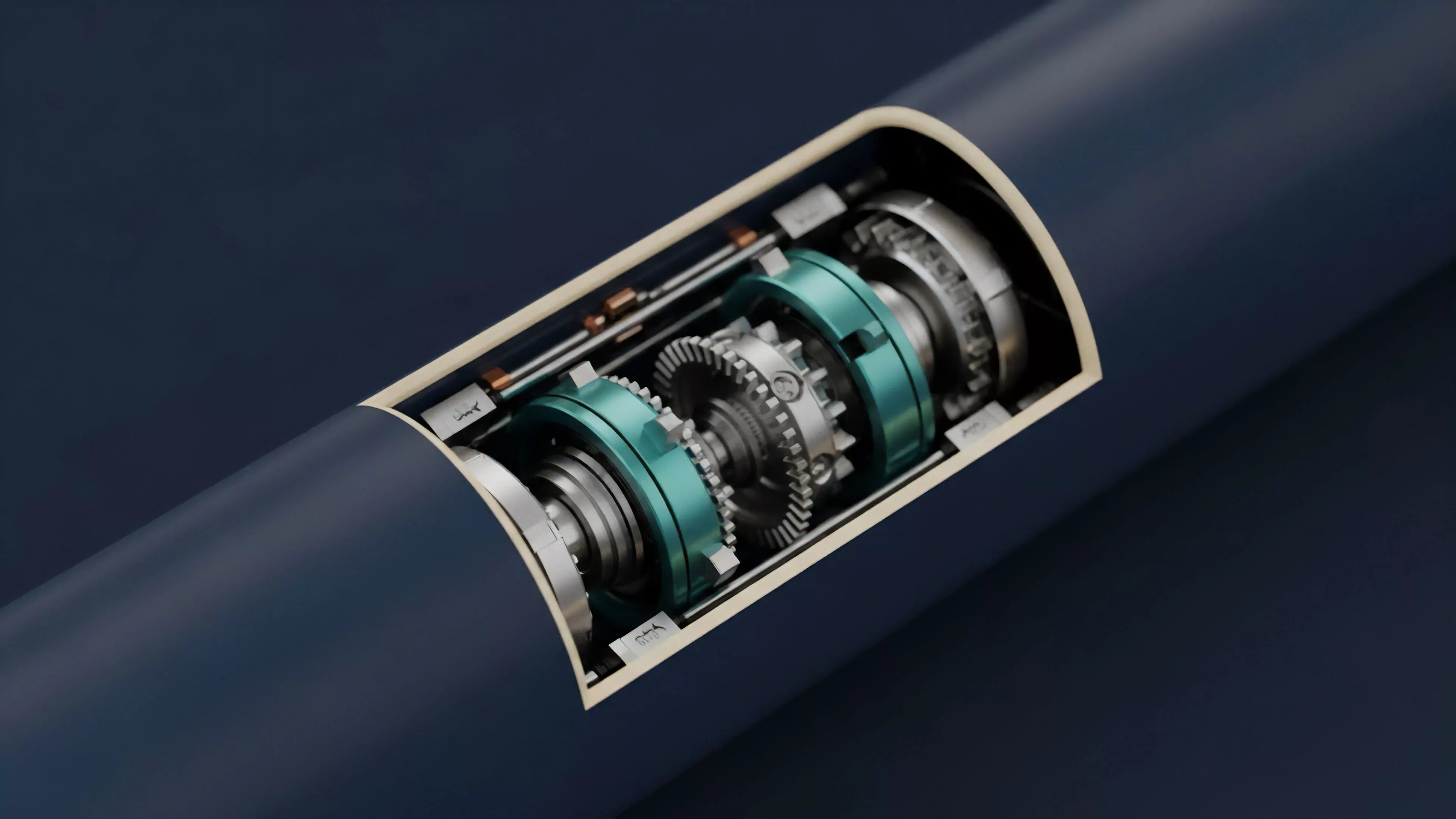

The theoretical framework governing Blockchain Data Integration rests upon the synchronization of off-chain quantitative models with on-chain state transitions. Effective integration requires a rigorous mapping of smart contract events to standard financial primitives.

This ensures that the inputs for models like Black-Scholes or local volatility surfaces remain consistent with the actual state of the decentralized protocol.
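To illustrate how model inputs can be kept consistent with on-chain state, the sketch below prices a European call with Black-Scholes, but only if the on-chain spot observation is recent enough. The function names and the `max_age_s` threshold are assumptions for illustration. Black-Scholes appears here only because the text names it.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t_years: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call on a non-dividend-paying asset."""
    sigma_sqrt_t = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / sigma_sqrt_t
    d2 = d1 - sigma_sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def price_from_onchain(spot: float, spot_timestamp: int, now: int, max_age_s: int,
                       strike: float, t_years: float, rate: float, vol: float) -> float:
    """Price the call only if the spot read from chain is no older than max_age_s."""
    age = now - spot_timestamp
    if age > max_age_s:
        raise ValueError(f"stale spot: {age}s old, limit is {max_age_s}s")
    return bs_call(spot, strike, t_years, rate, vol)


print(round(price_from_onchain(100.0, 1_000, 1_030, 60,
                               strike=100.0, t_years=1.0, rate=0.05, vol=0.2), 4))  # 10.4506
```

Raising on stale input, rather than silently pricing, lets a margin engine choose its own fallback.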

| Dimension | Integration Challenge | Systemic Impact |
|---|---|---|
| Latency | Propagation delays between block finality and data availability | Increased risk of stale pricing in automated margin engines |
| Normalization | Inconsistent event logs across heterogeneous smart contract standards | Difficulty in cross-protocol risk aggregation |
| Authenticity | Potential for data corruption or manipulation by node operators | Compromised collateral valuation and liquidation thresholds |
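The normalization challenge can be made concrete with a small sketch. It assumes two hypothetical protocols that report the same quantity under different field names. It also assumes tokens store integer base units with a `decimals` value, as ERC-20 tokens typically do. The field map is illustrative and not drawn from real contracts.

```python
from decimal import Decimal
from typing import Dict, Mapping

# Hypothetical per-protocol field names for the same concept (trade size in base units).
AMOUNT_FIELD: Dict[str, str] = {"protocol_a": "amountIn", "protocol_b": "qty"}


def normalize_amount(protocol: str, event: Mapping[str, int], decimals: int) -> Decimal:
    """Map a protocol-specific field to one canonical, decimals-adjusted amount.

    Dividing integer base units by 10**decimals gives human-scale units;
    Decimal avoids binary floating-point rounding on large integers.
    """
    raw = int(event[AMOUNT_FIELD[protocol]])
    return Decimal(raw).scaleb(-decimals)


print(normalize_amount("protocol_a", {"amountIn": 1_500_000}, 6))  # 1.500000
print(normalize_amount("protocol_b", {"qty": 2 * 10**18}, 18))     # 2.000000000000000000
```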

Many quantitative finance models assume continuous, liquid markets. Blockchain Data Integration attempts to approximate this continuity through data sampling and interpolation techniques. The challenge is to reconcile discrete, block-based updates with the continuous-time volatility processes used to model the underlying crypto assets.

Any failure to accurately capture these state changes introduces model risk, often manifesting as slippage or improper hedging during high-volatility events.
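Discrete block updates can be reconciled with a continuous-time volatility model by estimating realized variance per unit of elapsed time, not per observation. The sketch below assumes a driftless, constant-volatility process and ignores microstructure noise. It is an illustration, not a production estimator.

```python
import math
from typing import List, Tuple

SECONDS_PER_YEAR = 365 * 24 * 3600  # crypto markets trade around the clock


def realized_vol(observations: List[Tuple[int, float]]) -> float:
    """Annualized realized volatility from irregularly spaced (timestamp, price) pairs.

    Sums squared log returns and divides by total elapsed time, so gaps of
    different lengths between blocks are accounted for. Assumes one
    observation per timestamp (e.g. the last price in each block) in
    strictly increasing order.
    """
    total_sq = 0.0
    total_years = 0.0
    for (t0, p0), (t1, p1) in zip(observations, observations[1:]):
        if t1 <= t0:
            raise ValueError("timestamps must be strictly increasing")
        total_sq += math.log(p1 / p0) ** 2
        total_years += (t1 - t0) / SECONDS_PER_YEAR
    if total_years == 0:
        raise ValueError("need at least two observations")
    return math.sqrt(total_sq / total_years)
```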

The accuracy of derivative pricing in decentralized markets depends heavily on the fidelity and temporal resolution of the integrated blockchain data streams.

This domain also intersects with game theory, where participants may attempt to manipulate protocol state visibility to trigger favorable liquidations. A secure integration architecture must therefore account for adversarial data submission, ensuring that the information utilized for derivative settlement remains resilient against such manipulation.
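One common defense against adversarial submissions is to aggregate several independent reports with a robust statistic. This sketch uses the median together with a minimum quorum. The reporter names and quorum value are hypothetical, and a production oracle design would typically add further safeguards such as signed reports.

```python
import statistics
from typing import Mapping


def aggregate_reports(reports: Mapping[str, float], quorum: int) -> float:
    """Combine price reports from independent reporters into one settlement value.

    If fewer than half of the reporters are adversarial, the median stays
    within the range of the honest reports, so a single manipulated value
    cannot push it to an arbitrary level.
    """
    if len(reports) < quorum:
        raise ValueError(f"only {len(reports)} reports, need at least {quorum}")
    return statistics.median(list(reports.values()))


reports = {"node_a": 100.0, "node_b": 101.0, "node_c": 99.5, "node_d": 1e9}
print(aggregate_reports(reports, quorum=3))  # 100.5
```

A mean over the same reports would be dominated by the manipulated value. The median merely shifts between the two middle honest prices.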

Approach

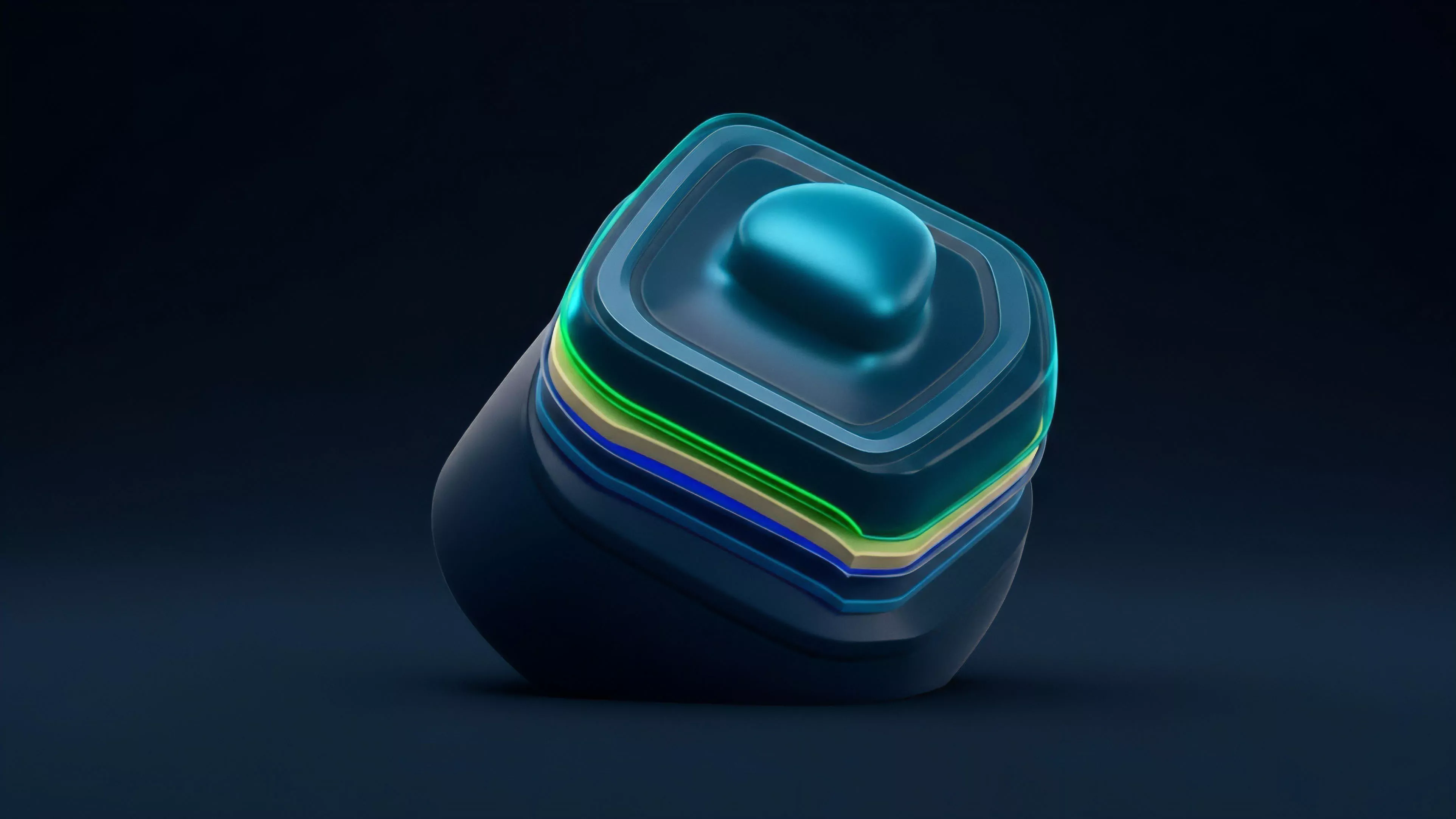

Current methodologies for Blockchain Data Integration prioritize high-throughput, low-latency pipelines that feed directly into algorithmic trading desks and risk management systems. Modern practitioners utilize distributed node clusters to ensure redundancy and data integrity, moving away from single-point-of-failure architectures.

- Streaming Data Architectures utilize messaging queues to process event logs as they are emitted, minimizing the time between transaction finality and data availability.

- Schema Mapping involves the rigorous definition of custom data structures that translate raw hexadecimal event data into human-readable, machine-analyzable formats.

- Verification Protocols employ cryptographic proofs to ensure the data delivered to the derivative engine matches the state recorded on the underlying ledger.
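Schema mapping on an EVM chain can be illustrated with the ERC-20 `Transfer(address,address,uint256)` event. Its indexed parameters arrive as 32-byte words in the log's topics, and its non-indexed value arrives in the `data` field. The sketch below decodes only this fixed layout by hand. `decode_transfer` is a hypothetical helper, and dynamic types would need a full ABI decoder.

```python
from typing import List, NamedTuple

# topic0 of Transfer(address,address,uint256): the keccak-256 hash of the event signature.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


class Transfer(NamedTuple):
    sender: str
    recipient: str
    value: int  # raw base units, before decimals scaling


def word_to_address(word: str) -> str:
    """An ABI-encoded address occupies the low 20 bytes (40 hex chars) of a 32-byte word."""
    return "0x" + word[-40:].lower()


def decode_transfer(topics: List[str], data: str) -> Transfer:
    """Decode an ERC-20 Transfer log given hex strings for its topics and data."""
    # ERC-721 shares this topic0 but indexes tokenId as a fourth topic, so check the count.
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        raise ValueError("not an ERC-20 Transfer log")
    value = int(data[2:66], 16)  # the single non-indexed uint256 word
    return Transfer(word_to_address(topics[1]), word_to_address(topics[2]), value)


log_topics = [TRANSFER_TOPIC, "0x" + "0" * 24 + "11" * 20, "0x" + "0" * 24 + "22" * 20]
log_data = "0x" + format(1_500_000, "064x")
print(decode_transfer(log_topics, log_data).value)  # 1500000
```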

Market makers and hedge funds now treat Blockchain Data Integration as a proprietary advantage. The ability to parse mempool activity and anticipate state changes before they are finalized allows for superior positioning in decentralized options markets. This shift necessitates deep expertise in both smart contract architecture and distributed systems engineering, creating a barrier to entry that favors firms capable of maintaining high-performance data infrastructure.

Evolution

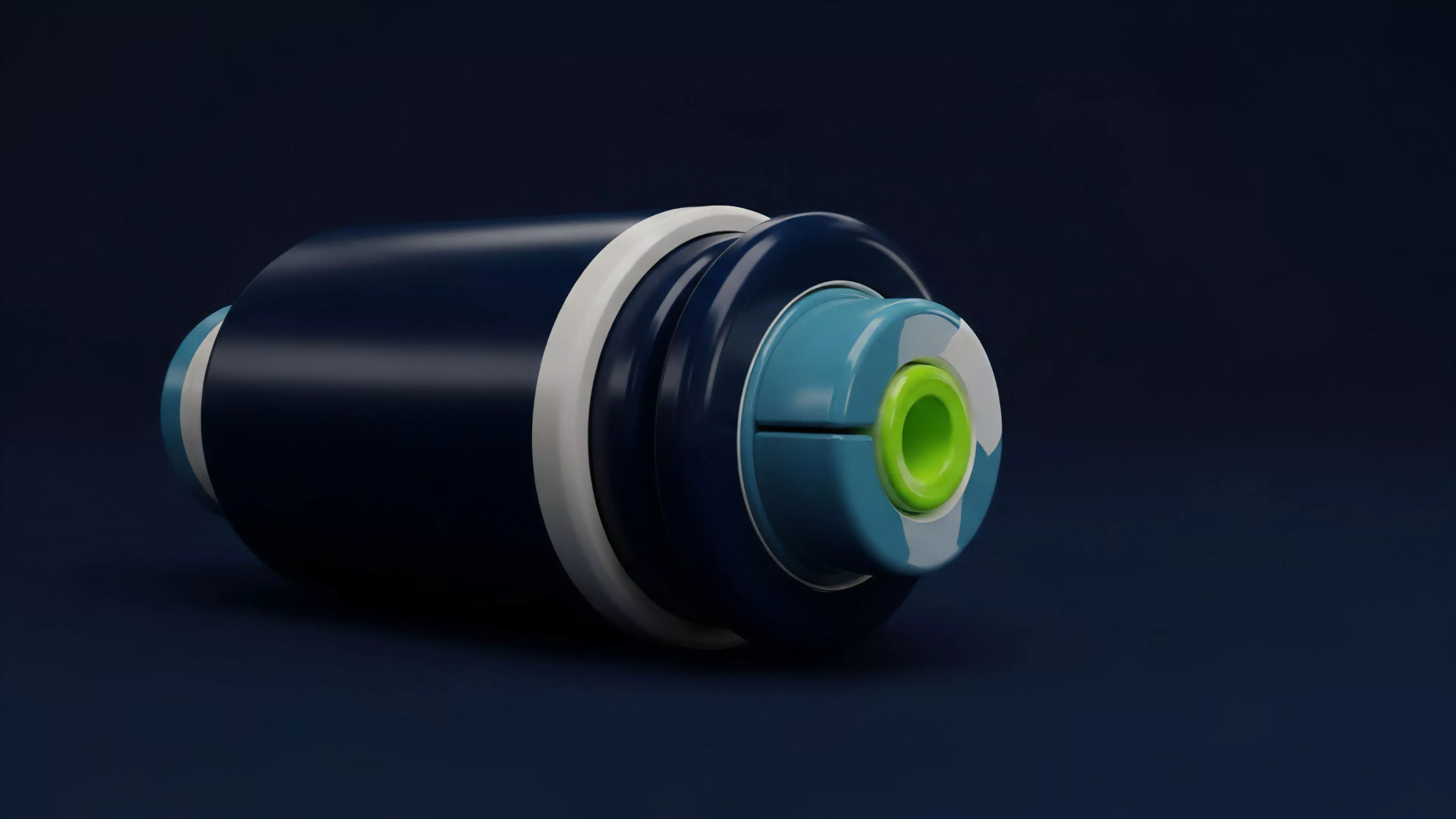

The trajectory of Blockchain Data Integration has progressed from rudimentary, centralized block explorers to decentralized, incentivized data networks.

Early systems focused on providing basic transparency, while current infrastructure is engineered for the high-performance demands of professional derivative trading.

Evolution in this sector has shifted from providing passive visibility to enabling active, high-frequency financial decision-making within decentralized protocols.

The industry has moved toward modular architectures, where data indexing is decoupled from protocol execution. This separation allows for specialized data services to emerge, providing highly optimized streams for specific derivative types, such as perpetual futures or exotic options. The rise of zero-knowledge proofs has also introduced a new dimension, where data can be integrated and verified without revealing the underlying private state, potentially solving the tension between transparency and user privacy.

- Era of Explorers: Focused on human-readable transaction history and basic wallet balances.

- Era of Subgraphs: Introduced programmable indexing and structured queries for decentralized applications.

- Era of Real-time Streams: Prioritizes low-latency, event-driven data ingestion for institutional-grade financial applications.

One might consider how this progression mirrors the historical development of market data feeds in traditional equities, where the transition from floor-based reporting to electronic ticker plants fundamentally altered the speed and efficiency of price discovery. The current landscape is witnessing a similar acceleration, where the speed of data ingestion dictates the viability of complex derivative strategies.

Horizon

Future developments in Blockchain Data Integration will center on the standardization of cross-chain data interoperability. As liquidity becomes increasingly fragmented across heterogeneous networks, the ability to synthesize data from multiple chains into a single, cohesive risk model will determine the next generation of derivative market leaders.
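Below is a minimal sketch of cross-chain synthesis. It assumes each chain's open interest has already been indexed and converted to a common unit. `ChainSnapshot`, the chain names, and the figures are hypothetical. The design point is that finality and indexing lag differ by chain, so a combined view should carry the time of its oldest snapshot.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ChainSnapshot:
    """Hypothetical per-chain view of open interest, keyed by asset."""
    chain: str
    as_of: int                       # unix timestamp of the block the snapshot reflects
    open_interest: Dict[str, float]  # asset -> notional in a common unit


def merge_snapshots(snapshots: List[ChainSnapshot]) -> Tuple[Dict[str, float], int]:
    """Sum open interest per asset across chains.

    A merged view is only as fresh as its stalest input, so the combined
    as-of time is the minimum across chains rather than the maximum.
    """
    if not snapshots:
        raise ValueError("no snapshots to merge")
    total: Dict[str, float] = {}
    for snap in snapshots:
        for asset, notional in snap.open_interest.items():
            total[asset] = total.get(asset, 0.0) + notional
    return total, min(s.as_of for s in snapshots)


merged, as_of = merge_snapshots([
    ChainSnapshot("chain_a", 1_000, {"ETH": 5.0, "BTC": 1.0}),
    ChainSnapshot("chain_b", 990, {"ETH": 2.5}),
])
print(merged, as_of)  # {'ETH': 7.5, 'BTC': 1.0} 990
```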

| Future Trend | Technical Driver | Strategic Goal |
|---|---|---|
| Cross-Chain Aggregation | Interoperability protocols and shared security models | Unified liquidity and risk management across ecosystems |
| Privacy-Preserving Feeds | Zero-knowledge proofs and secure multi-party computation | Institutional participation without exposing proprietary strategies |
| Autonomous Data Oracles | AI-driven validation of on-chain state transitions | Self-correcting data integrity and reduced latency |

The ultimate goal is the creation of a decentralized, trustless data layer that functions with the reliability of centralized exchanges but maintains the permissionless nature of blockchain networks. Success in this domain will define the capacity for decentralized markets to absorb the scale and complexity of traditional financial derivatives, effectively closing the gap between on-chain potential and real-world utility.