Essence

Block Validation functions as the definitive mechanism for ensuring the integrity and state-transition accuracy of a decentralized ledger. It represents the point where cryptographic proofs, consensus rules, and economic incentives converge to finalize transaction ordering. Without this rigorous verification, the deterministic nature of blockchain-based financial systems dissolves, rendering the settlement of any associated derivative or spot position impossible.

Block validation serves as the foundational consensus process that guarantees the state-transition integrity of a decentralized financial network.

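The process involves nodes checking incoming transactions against the protocol’s specific rules, including signature verification, balance availability, and script execution. A transaction that passes these checks enters the mempool, where it waits for inclusion in a proposed block. Nodes then validate the proposed block as a whole before appending it to the canonical chain. On chains with probabilistic finality, confidence in settlement grows with each subsequent block, while chains with explicit finality rules mark a block as irreversible once enough validators attest to it. Either way, this is the finality that complex financial instruments require to function without centralized clearinghouses.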
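The admission checks described above can be illustrated with a minimal sketch. It is not any particular protocol's implementation: the `Transfer` type, the `toy_sign` helper, and the `admit` function are hypothetical names, and an HMAC over the transaction fields stands in for a real digital signature scheme. Script execution is omitted for brevity.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    sender: str
    recipient: str
    amount: int
    signature: str  # hex digest; a stand-in for a real digital signature


def toy_sign(sender: str, recipient: str, amount: int, key: bytes) -> str:
    """Toy signature (assumption): HMAC-SHA256 over the transaction fields."""
    message = f"{sender}|{recipient}|{amount}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def validate_transfer(tx: Transfer, balances: dict, keys: dict) -> tuple:
    """Return (ok, reason) after the signature and balance checks."""
    key = keys.get(tx.sender)
    if key is None:
        return False, "unknown sender"
    expected = toy_sign(tx.sender, tx.recipient, tx.amount, key)
    if not hmac.compare_digest(expected, tx.signature):
        return False, "bad signature"
    if tx.amount <= 0:
        return False, "non-positive amount"
    if balances.get(tx.sender, 0) < tx.amount:
        return False, "insufficient balance"
    return True, "ok"


def admit(tx: Transfer, mempool: list, balances: dict, keys: dict) -> bool:
    """Append the transaction to the mempool only if every check passes."""
    ok, _reason = validate_transfer(tx, balances, keys)
    if ok:
        mempool.append(tx)
    return ok
```

A real node would also track pending spends and replay protection; the sketch only shows the ordering of checks before mempool admission.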

Origin

The concept emerged directly from the requirement to solve the double-spending problem in peer-to-peer networks without a trusted third party.

Satoshi Nakamoto codified this by linking block creation to proof-of-work, where the computational cost serves as a proxy for trust. Early systems prioritized simple transaction ordering, but as the technology matured, the focus shifted toward the security of the validation logic itself.

- Proof of Work: Initially required nodes to expend energy to propose valid blocks, establishing the first robust security model for decentralized validation.

- Proof of Stake: Evolved to replace energy-intensive computation with economic collateral, aligning the incentives of validators directly with the health of the network.

- Smart Contract Execution: Introduced the requirement for validators to process complex logic rather than simple value transfers, fundamentally changing the risk profile of block production.

This evolution reflects a transition from securing simple ledger entries to maintaining the security of programmable money. The shift towards more sophisticated consensus algorithms demonstrates an ongoing attempt to optimize for both decentralization and throughput.
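To make the proof-of-work idea from the list above concrete, here is a toy sketch. It assumes a simplified target (a hex digest that starts with a given number of zeros) and a single SHA-256 pass; production chains use different encodings and target rules, and all function names here are illustrative.

```python
import hashlib


def block_hash(prev_hash: str, payload: str, nonce: int) -> str:
    """Hash the block fields together with the candidate nonce."""
    data = f"{prev_hash}|{payload}|{nonce}".encode()
    return hashlib.sha256(data).hexdigest()


def meets_target(digest: str, difficulty: int) -> bool:
    """Toy target: the hex digest must start with `difficulty` zeros."""
    return digest.startswith("0" * difficulty)


def mine(prev_hash: str, payload: str, difficulty: int) -> int:
    """Search nonces in order until the hash meets the target (the costly step)."""
    nonce = 0
    while not meets_target(block_hash(prev_hash, payload, nonce), difficulty):
        nonce += 1
    return nonce


def verify(prev_hash: str, payload: str, nonce: int, difficulty: int) -> bool:
    """Verification needs one hash, so any node can check the work cheaply."""
    return meets_target(block_hash(prev_hash, payload, nonce), difficulty)
```

The asymmetry is the point: `mine` may need many attempts, while `verify` needs exactly one hash.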

Theory

The mechanical operation of Block Validation rests on the intersection of game theory and distributed systems. Validators operate in an adversarial environment where they seek to maximize profit while adhering to protocol constraints.

When these incentives align, the system achieves both liveness (valid transactions are eventually included) and safety (honest nodes never finalize conflicting blocks).

| Component | Functional Impact |
| --- | --- |
| Mempool Priority | Influences transaction inclusion speed and gas pricing dynamics. |
| Consensus Rules | Dictate the validity of block headers and transaction data. |
| Slashing Conditions | Penalize malicious validation behavior to ensure system integrity. |

The robustness of a decentralized network depends on the alignment between validator economic incentives and the strict enforcement of consensus rules.

The mathematical models underpinning this process involve Byzantine Fault Tolerance, which keeps the system operational even when a subset of nodes acts maliciously; classical BFT protocols tolerate fewer than one third of participants being faulty. Validators must process transactions, update the global state, and broadcast the result to peers. This requires low-latency execution and significant bandwidth, because slow block propagation raises the chance of temporary forks and the double-spend attempts they invite.
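The fault-tolerance bound can be worked through in a few lines. This sketch assumes the classical setting of n equally weighted validators, where tolerating f Byzantine nodes requires n >= 3f + 1; the function names are illustrative, and stake-weighted systems apply the same idea to voting power rather than node counts.

```python
def max_faulty(n: int) -> int:
    """Largest f such that n >= 3f + 1."""
    if n < 1:
        raise ValueError("need at least one validator")
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest vote count such that any two quorums share an honest node.

    Two quorums of size q overlap in at least 2q - n validators; requiring
    that overlap to exceed f gives q = floor((n + f) / 2) + 1.
    """
    return (n + max_faulty(n)) // 2 + 1


def has_quorum(votes: int, n: int) -> bool:
    """True when the collected votes are enough to commit a block."""
    return votes >= quorum_size(n)
```

For n = 4 this gives f = 1 and a quorum of 3, the familiar 2f + 1 threshold.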

Sometimes I think of this as a game of high-stakes coordination, where the cost of failure is the total loss of confidence in the underlying asset. The blockchain trilemma captures the resulting constraint: architects must make difficult trade-offs between scalability, security, and decentralization.

Approach

Modern systems utilize advanced techniques to streamline the validation process while maintaining high security standards. This includes the use of zero-knowledge proofs to verify computation without re-executing it, and parallel execution engines to handle high transaction volumes.

- Optimistic Rollups: Assume posted state transitions are valid by default and re-validate them only if challenged during a dispute window, relying on fraud proofs to maintain security.

- Zero Knowledge Proofs: Allow validators to confirm the correctness of state transitions without requiring the full execution of every underlying transaction.

- Parallel Execution: Enables multiple transaction sequences to be validated simultaneously, significantly increasing the capacity of the network.
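As an illustration of the parallel execution item above, the following sketch groups transactions into batches whose members touch disjoint accounts, so each batch could in principle be validated concurrently. It assumes access sets are declared up front, which not every chain requires; `Tx` and `schedule_batches` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_id: str
    touches: frozenset  # accounts read or written (assumed known in advance)


def schedule_batches(txs: list) -> list:
    """Place each transaction in the first batch after its last conflict.

    Transactions inside one batch touch disjoint accounts, and conflicting
    transactions keep their original relative order across batches.
    """
    batches = []
    for tx in txs:
        target = 0
        for index, batch in enumerate(batches):
            if any(tx.touches & other.touches for other in batch):
                target = index + 1
        if target == len(batches):
            batches.append([])
        batches[target].append(tx)
    return batches
```

Batches still run one after another; the gain comes from validating the transactions inside each batch at the same time.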

The current landscape prioritizes capital efficiency, leading to the rise of liquid staking and sophisticated MEV extraction techniques. Validators now act as strategic agents in a market, optimizing their inclusion strategies to capture maximum value, which in turn impacts the latency and cost of executing options and other derivatives on-chain.
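The simplest form of an inclusion strategy is greedy fee-priority selection, sketched below. This is only a baseline under assumed names (`PendingTx`, `build_block`) and a single gas limit; actual MEV strategies also reorder, bundle, and insert transactions, which this sketch does not attempt to model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    gas: int
    fee_per_gas: int


def build_block(mempool: list, gas_limit: int) -> list:
    """Greedily pick the highest fee-per-gas transactions that still fit."""
    chosen = []
    used = 0
    for tx in sorted(mempool, key=lambda t: t.fee_per_gas, reverse=True):
        if used + tx.gas <= gas_limit:
            chosen.append(tx)
            used += tx.gas
    return chosen
```

Under this rule a low-fee transaction can wait indefinitely while blocks are full, which is one way inclusion strategy feeds into the latency and cost of on-chain derivatives.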

Evolution

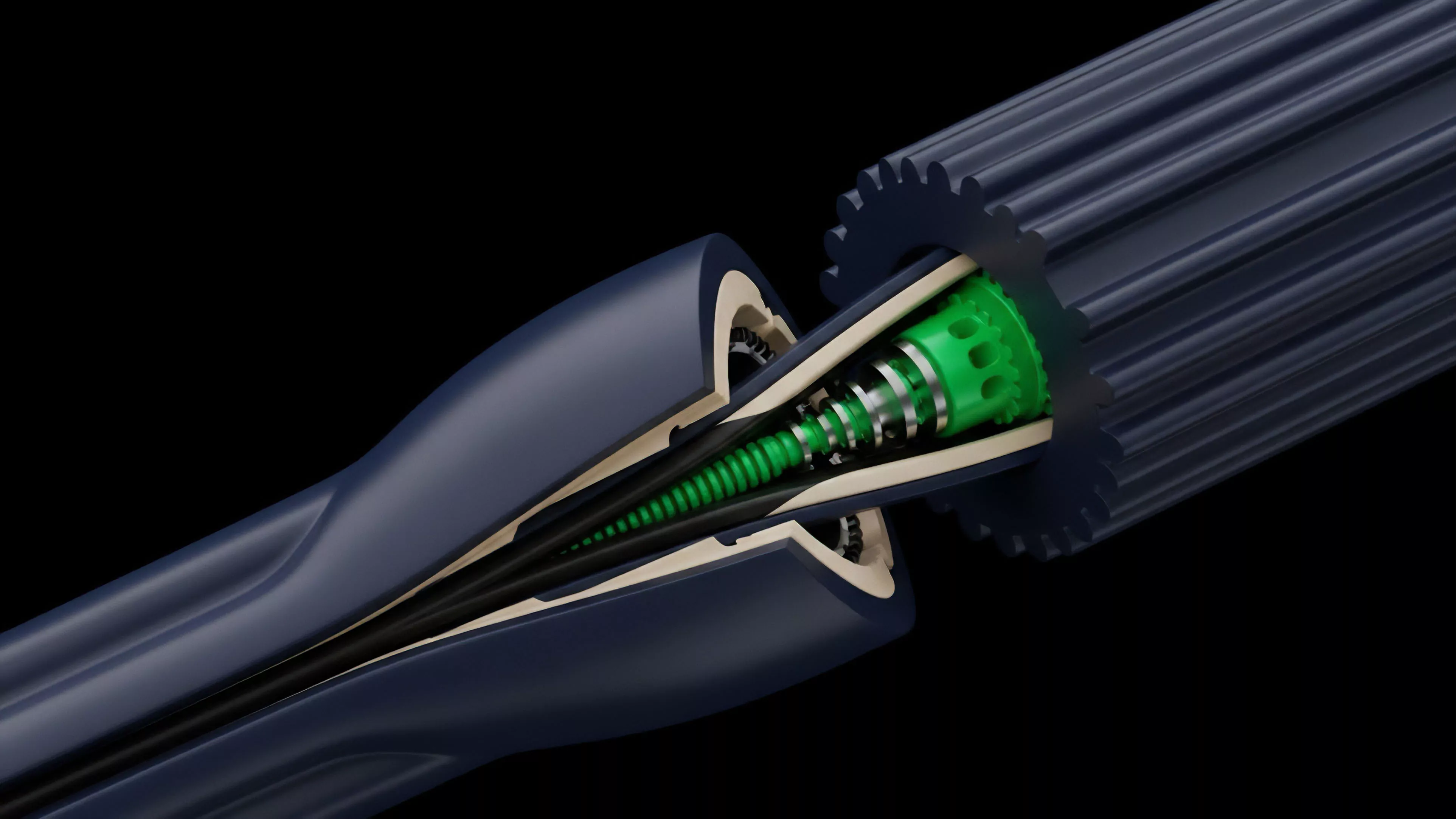

The transition from monolithic to modular architectures marks the most significant change in how blocks are validated today. Separating execution from data availability allows for specialized layers to handle distinct parts of the validation pipeline, reducing the load on any single node.

| Architectural Model | Validation Strategy |
| --- | --- |
| Monolithic | Single layer handles execution, consensus, and data availability. |
| Modular | Validation tasks are distributed across specialized protocol layers. |

This shift forces participants to rethink their risk models. When validation is spread across different protocols, the failure of one layer can have cascading effects on the others. The increasing complexity of these interactions suggests that future security models must account for multi-layer systemic risks rather than focusing solely on the base chain.

Horizon

The future of Block Validation points toward extreme optimization through hardware-accelerated consensus and decentralized sequencers.

We will likely see a shift where validation is no longer a human-managed activity but one performed by highly specialized automated agents operating on custom silicon.

Future validation architectures will prioritize hardware-level efficiency and decentralized sequencing to achieve sub-second finality.

This evolution will enable the creation of decentralized derivatives that can match the speed and complexity of traditional finance. However, this also introduces new risks, as the technical barrier to entry for validators increases, potentially leading to greater centralization among those with access to the most efficient infrastructure. The next stage of development will center on maintaining censorship resistance while scaling to meet global financial demands.