Essence

Automated Data Validation functions as the systemic verification layer within decentralized derivative protocols, ensuring that price feeds, collateral valuations, and contract state transitions align with verified market realities. This mechanism eliminates manual oversight in high-frequency environments, acting as a gatekeeper that prevents corrupted or stale information from triggering unintended liquidations or erroneous settlement outcomes.

Automated data validation serves as the deterministic arbiter of truth in decentralized financial environments by reconciling off-chain market signals with on-chain execution logic.

The architecture relies on cryptographic proofs and consensus-driven oracle networks to maintain the integrity of financial instruments. By automating the scrutiny of incoming data, protocols protect liquidity providers and traders from systemic exploits that target latency gaps or information asymmetry between fragmented exchange venues.

Origin

The requirement for Automated Data Validation arose from the limitations of early decentralized exchanges that relied on single-source price feeds, which proved highly susceptible to manipulation and flash-loan attacks. Initial implementations sought to replicate traditional finance standards of auditing, yet the speed of blockchain settlement necessitated a shift toward programmatic, real-time verification.

- Oracle Decentralization: Early attempts to distribute data sources created the need for validation logic to filter outliers and malicious actors.

- Smart Contract Constraints: The deterministic nature of execution required inputs to be sanitized before affecting margin engines.

- Market Efficiency: Traders demanded sub-second settlement times, rendering human-in-the-loop validation obsolete for competitive derivatives.

Theory

At the mechanical level, Automated Data Validation combines statistical thresholding with multi-signature consensus among data providers to confirm the validity of external data points. The process aims to minimize the probability that bad data enters the system, because any corrupted input directly degrades the accuracy of the Greeks and other risk sensitivity parameters.

Quantitative Frameworks

The model incorporates volatility-adjusted filters that reject any price update deviating from the moving average by more than a defined number of standard deviations. This protects the protocol from temporary market anomalies that could force unnecessary liquidations. The system architecture follows specific logic gates:

| Parameter | Validation Logic |
| --- | --- |
| Price Feed Integrity | Cross-reference across minimum three independent nodes |
| Liquidation Thresholds | Dynamic adjustment based on real-time volatility |
| Latency Check | Rejection of data older than one block interval |
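
As a rough illustration of how these gates might fit together, the following Python sketch applies the latency check, the minimum-source requirement, and the standard-deviation filter in sequence. The names `Report` and `validate_update`, the default of three standard deviations, and the use of a median as the candidate price are all assumptions made for this example, not details of any particular protocol.

```python
from dataclasses import dataclass
from statistics import mean, median, stdev


@dataclass
class Report:
    source: str   # hypothetical node identifier
    price: float  # reported price
    block: int    # block height at which the report was produced


def validate_update(reports, history, current_block,
                    max_sigma=3.0, min_sources=3, max_age_blocks=1):
    """Return a validated price, or None if any gate rejects the update."""
    # Latency check: discard reports older than the allowed block interval.
    fresh = [r for r in reports if current_block - r.block <= max_age_blocks]

    # Price feed integrity: require enough independent sources.
    if len({r.source for r in fresh}) < min_sources:
        return None

    # Assumption: the candidate price is the median of the fresh reports.
    candidate = median(r.price for r in fresh)

    # Volatility-adjusted filter: compare against the moving average of
    # recent accepted prices. Skipped when there is too little history or
    # zero measured volatility (an assumption made to keep the sketch short).
    if len(history) >= 2:
        avg, sd = mean(history), stdev(history)
        if sd > 0 and abs(candidate - avg) > max_sigma * sd:
            return None

    return candidate
```

With a history centred on 100 and a sample standard deviation of about 1.4, three fresh reports near 100.5 would pass, while three reports near 120 would be rejected by the volatility filter. A production system would also need to decide what happens after a rejection, such as holding the last accepted price.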

Rigorous automated validation minimizes the impact of localized price spikes on systemic stability by enforcing strict consensus requirements on incoming market data.

The mathematical foundation involves calculating the weighted average of validated inputs, often applying Bayesian inference to update the reliability score of individual data providers over time. This creates a self-correcting loop where nodes providing consistently accurate data gain higher influence in the validation process, while malicious or faulty nodes are algorithmically penalized.
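
One simple way to picture this loop is a Beta-Bernoulli model, in which each provider carries a count of accurate and inaccurate reports and its reliability is the posterior mean. The sketch below is a minimal illustration under that assumption; the names `Provider`, `weighted_price`, and `settle_round`, the uniform prior, and the 1% tolerance are hypothetical choices, and judging accuracy against the weighted average itself is a simplification.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    alpha: float = 1.0  # pseudo-count of accurate reports (uniform prior)
    beta: float = 1.0   # pseudo-count of inaccurate reports

    @property
    def reliability(self) -> float:
        # Posterior mean of a Beta(alpha, beta) distribution.
        return self.alpha / (self.alpha + self.beta)

    def update(self, accurate: bool) -> None:
        # Bayesian update for a Bernoulli observation.
        if accurate:
            self.alpha += 1
        else:
            self.beta += 1


def weighted_price(reports, providers):
    """Reliability-weighted average of the reported prices."""
    total = sum(providers[name].reliability for name in reports)
    return sum(providers[name].reliability * price
               for name, price in reports.items()) / total


def settle_round(reports, providers, tolerance=0.01):
    """Compute the weighted price, then score each provider against it."""
    price = weighted_price(reports, providers)
    for name, reported in reports.items():
        providers[name].update(abs(reported - price) / price <= tolerance)
    return price
```

If three providers report prices close to 100 and a fourth repeatedly reports 103, the fourth provider's reliability falls each round, so the weighted price drifts toward the cluster formed by the other three.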

Approach

Modern protocols deploy Automated Data Validation through modular middleware that separates data ingestion from execution. This ensures that the validation logic remains upgradeable without requiring a full redeployment of the core derivatives contract. By utilizing zero-knowledge proofs, some systems now verify the origin of data without exposing sensitive trade flow information.

- Pre-Execution Filtering: Data points are checked against predefined boundaries before reaching the matching engine.

- Consensus Aggregation: Multiple decentralized sources are reconciled using a median-based or reputation-weighted algorithm.

- Post-Settlement Audit: Continuous monitoring of settlement prices against spot market benchmarks to identify drift.

This approach transforms the protocol into a self-defending system. When a data source attempts to inject an anomalous value, the validation engine detects the discrepancy against the broader market cluster and suspends the feed, preventing the contagion of incorrect pricing throughout the derivative chain.
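
The detect-and-suspend behavior described above can be sketched with a median-based aggregator. This is an illustrative example only: the function name `aggregate`, the 2% deviation limit, and the policy of suspending a feed after a single anomalous value are assumptions, not a description of a specific protocol.

```python
from statistics import median


def aggregate(reports, max_deviation=0.02, suspended=None):
    """Return (price, suspended) after filtering anomalous feeds.

    reports maps a source name to its reported price. Feeds whose value
    deviates from the cluster median by more than max_deviation (as a
    fraction) are added to the suspended set and excluded from the result.
    """
    suspended = set() if suspended is None else suspended
    active = {s: p for s, p in reports.items() if s not in suspended}
    if not active:
        raise ValueError("no active feeds")

    # The median of all active feeds stands in for the broader market cluster.
    mid = median(active.values())
    for source, price in active.items():
        if abs(price - mid) / mid > max_deviation:
            suspended.add(source)

    trusted = [p for s, p in active.items() if s not in suspended]
    if not trusted:
        raise ValueError("all feeds deviate from the cluster median")
    return median(trusted), suspended
```

Passing the returned `suspended` set back into the next call keeps an anomalous source excluded on later rounds. A median is used here because a single extreme value cannot move it far, which is one reason median-based aggregation is a common choice for this step.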

Evolution

The transition from centralized, static data checks to dynamic, decentralized validation has redefined the operational boundaries of crypto derivatives. Early iterations struggled with the trade-off between speed and security, often resulting in high latency or frequent protocol pauses during periods of extreme market stress.

The evolution of validation mechanisms demonstrates a shift toward high-throughput cryptographic verification that prioritizes system uptime during volatility.

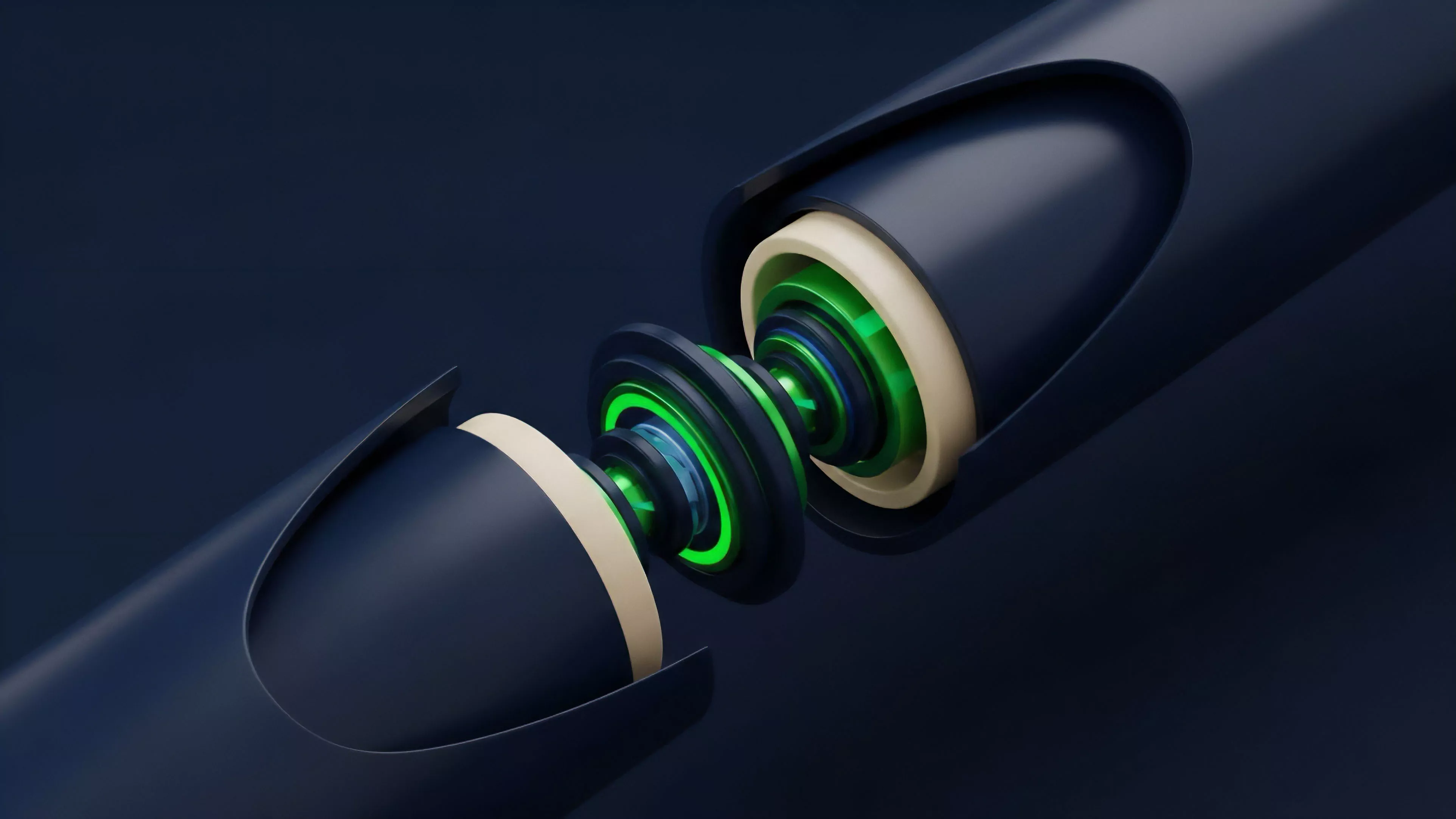

Current developments focus on integrating cross-chain validation, allowing derivatives protocols to source data from multiple networks while maintaining a unified security standard. The move toward modular, plug-and-play validation modules enables teams to swap out verification logic as new cryptographic primitives or faster consensus mechanisms appear. The complexity of these multi-layer validation paths can introduce hidden failure points, much like a high-performance engine that becomes impossible to service without specialized tooling, which is driving a move toward simplified, auditable verification standards.

Horizon

Future iterations of Automated Data Validation will likely integrate machine learning models that flag probable data manipulation attempts before they affect settlement. By analyzing historical order flow patterns, these predictive filters could strengthen the resilience of derivative markets against sophisticated adversarial strategies. A further step is the wider adoption of hardware-based secure enclaves for data processing, which would harden the validation layer against software-level exploits.

| Development Phase | Primary Focus |
| --- | --- |
| Predictive Filtering | AI-driven anomaly detection |
| Hardware Integration | TEE-based secure data processing |
| Autonomous Governance | Dynamic parameter adjustment via DAO |