Essence

Automated Capital Allocation functions as the algorithmic orchestration of liquidity deployment across decentralized derivative venues. This mechanism replaces discretionary portfolio management with programmable logic, executing trades based on predefined risk parameters, volatility surface analysis, and yield optimization targets. By removing human latency from the rebalancing process, these systems maintain constant alignment with target delta, gamma, and vega exposures in high-frequency environments.

Automated capital allocation serves as the algorithmic bridge between static collateral and dynamic market participation in decentralized derivative markets.

The primary objective involves maximizing capital efficiency while mitigating liquidation risks. These protocols utilize on-chain data feeds and off-chain computational offloading to calculate optimal asset distribution, ensuring that collateral remains productive without compromising solvency. The architecture relies on deterministic execution, where smart contracts trigger rebalancing events based on realized volatility thresholds or predictive signal inputs.
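A realized-volatility trigger of the kind described above might look like the following minimal sketch. The function names, the close-to-close estimator, and the 365-period annualization are illustrative assumptions, not details taken from any specific protocol.

```python
import math
from typing import Sequence


def realized_volatility(prices: Sequence[float], periods_per_year: int = 365) -> float:
    """Annualized realized volatility from close-to-close log returns.

    Assumes one price per period (daily by default); hypothetical helper.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices to estimate volatility")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    # Sample variance of the log returns.
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance * periods_per_year)


def should_rebalance(prices: Sequence[float], vol_threshold: float) -> bool:
    """Deterministic trigger: fire only when realized volatility exceeds the threshold."""
    return realized_volatility(prices) > vol_threshold
```

Because the trigger depends only on its inputs, the same price history always yields the same decision, which is the property a smart contract needs in order to enforce it.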

Origin

The genesis of Automated Capital Allocation resides in the evolution of automated market makers and the subsequent demand for sophisticated risk management tools within decentralized finance.

Early liquidity provision models could not manage the non-linear risks inherent in options, which led to capital inefficiency and recurring impermanent loss. Developers recognized the need for a secondary layer that could dynamically adjust collateral ratios and hedge positions without requiring constant manual intervention.

Early DeFi iterations prioritized liquidity depth, whereas modern architectures focus on the precise, automated management of risk-adjusted capital deployment.

This development path mirrors the shift in traditional finance from manual floor trading to algorithmic execution platforms. As derivative protocols matured, the complexity of managing Greeks across multiple expiration dates and strike prices necessitated the integration of programmatic capital management. These early systems drew inspiration from institutional vault structures, adapting them for permissionless environments where code dictates settlement and risk enforcement.

Theory

The theoretical framework governing Automated Capital Allocation rests upon the continuous optimization of a portfolio’s risk-reward profile within a bounded constraint set.

Mathematically, this involves solving a multi-variable optimization problem where the objective function maximizes returns while maintaining the portfolio within specific Value at Risk limits. The system must account for transaction costs, gas volatility, and the latency of oracle updates, which often act as the primary constraints on rebalancing frequency.
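One way to write this problem down is shown here as a sketch. All symbols are assumptions introduced for illustration: $w$ is the target allocation vector, $w_0$ the current allocation, $\mu$ the vector of expected returns, $c(w, w_0)$ the cost of moving from $w_0$ to $w$ (fees plus gas), $\mathcal{W}$ the set of feasible allocations, and $L$ the Value at Risk limit at confidence level $\alpha$.

$$
\begin{aligned}
\max_{w \in \mathcal{W}} \quad & \mu^{\top} w - c(w, w_0) \\
\text{subject to} \quad & \mathrm{VaR}_{\alpha}(w) \le L
\end{aligned}
$$

Oracle latency does not appear in the objective itself. It limits how often $\mu$ and $\mathrm{VaR}_{\alpha}$ can be re-estimated, and therefore how often the problem can usefully be re-solved.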

Risk Sensitivity Modeling

The core engine utilizes real-time monitoring of Greeks to determine the necessity of a rebalancing event. When the portfolio’s aggregate delta deviates from the target threshold, the automated agent initiates a trade to restore the desired exposure. This requires a robust pricing model, such as Black-Scholes or binomial trees, adjusted for the specific volatility regimes of digital assets.
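The delta check can be sketched as follows, assuming a plain Black-Scholes call delta and a symmetric tolerance band around the target. The function names, the band width, and the zero default interest rate are hypothetical choices, and a production engine would substitute a pricing model calibrated to digital-asset volatility regimes.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float,
                  t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes delta of a European call (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return norm_cdf(d1)


def hedge_trade(option_qty: float, option_delta: float, hedge_qty: float,
                target_delta: float = 0.0, band: float = 0.05) -> float:
    """Units of the underlying to trade (positive = buy, negative = sell).

    Returns 0.0 while aggregate delta stays within `band` of the target.
    """
    portfolio_delta = option_qty * option_delta + hedge_qty
    deviation = portfolio_delta - target_delta
    if abs(deviation) <= band:
        return 0.0
    return -deviation


# Usage: 10 long calls hedged with a short position of 5 units.
delta = bs_call_delta(spot=2000.0, strike=2000.0, vol=0.8, t_years=0.25)
trade = hedge_trade(option_qty=10.0, option_delta=delta, hedge_qty=-5.0)
```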

| Metric | Function in Capital Allocation |
| --- | --- |
| Delta | Determines directional hedge requirements |
| Gamma | Adjusts rebalancing frequency based on convexity |
| Vega | Dictates volatility exposure management |
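To make the three metrics concrete, the sketch below aggregates Black-Scholes delta, gamma, and vega across a set of option positions. The `OptionPosition` type and its fields are hypothetical, and the closed-form Greeks assume European options with no dividends.

```python
import math
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass
class OptionPosition:
    quantity: float   # signed: positive = long, negative = short
    spot: float
    strike: float
    vol: float        # annualized implied volatility
    t_years: float    # time to expiry in years
    is_call: bool = True


def _pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def position_greeks(p: OptionPosition, rate: float = 0.0) -> Tuple[float, float, float]:
    """Per-unit (delta, gamma, vega) under Black-Scholes."""
    sqrt_t = math.sqrt(p.t_years)
    d1 = (math.log(p.spot / p.strike) + (rate + 0.5 * p.vol ** 2) * p.t_years) / (
        p.vol * sqrt_t
    )
    delta = _cdf(d1) if p.is_call else _cdf(d1) - 1.0
    gamma = _pdf(d1) / (p.spot * p.vol * sqrt_t)  # identical for calls and puts
    vega = p.spot * _pdf(d1) * sqrt_t             # per 1.00 change in volatility
    return delta, gamma, vega


def portfolio_greeks(positions: Iterable[OptionPosition]) -> Dict[str, float]:
    """Quantity-weighted sum of Greeks across all positions."""
    totals = {"delta": 0.0, "gamma": 0.0, "vega": 0.0}
    for p in positions:
        delta, gamma, vega = position_greeks(p)
        totals["delta"] += p.quantity * delta
        totals["gamma"] += p.quantity * gamma
        totals["vega"] += p.quantity * vega
    return totals
```

A long straddle built from these positions has a small net delta but positive gamma and vega, which is why it calls for little directional hedging yet still carries volatility exposure.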

Game Theoretic Constraints

Participants in these markets operate within an adversarial environment where Liquidation Thresholds act as the ultimate arbiter of failure. The allocation logic must anticipate predatory behavior, such as front-running or sandwich attacks, by obfuscating rebalancing signals or utilizing decentralized sequencers. The systemic integrity of the protocol depends on the ability of the allocation engine to maintain solvency during periods of extreme market dislocation.
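A solvency check against a liquidation threshold can be sketched as a simple stress test. The model below assumes volatile collateral, debt denominated in a stable unit, and a single threshold factor applied to collateral value. These conventions and the function names are illustrative assumptions, not the rules of any particular protocol.

```python
def survives_shock(collateral_units: float, price: float, debt: float,
                   liquidation_threshold: float, shock: float) -> bool:
    """True if the position stays above its liquidation threshold after a price shock.

    `shock` is a fractional price move, e.g. -0.4 for a 40% drop.
    The position is treated as healthy while
    shocked collateral value * liquidation_threshold >= debt.
    """
    shocked_value = collateral_units * price * (1.0 + shock)
    return shocked_value * liquidation_threshold >= debt


def max_safe_debt(collateral_units: float, price: float,
                  liquidation_threshold: float, shock: float) -> float:
    """Largest debt that would still survive the given shock."""
    return collateral_units * price * (1.0 + shock) * liquidation_threshold
```

An allocation engine could call `max_safe_debt` with its worst-case shock assumption before deploying capital, so that the position is sized for the dislocation rather than for current prices.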

Approach

Current implementation strategies combine smart contract security with off-chain computation to achieve high-fidelity execution.

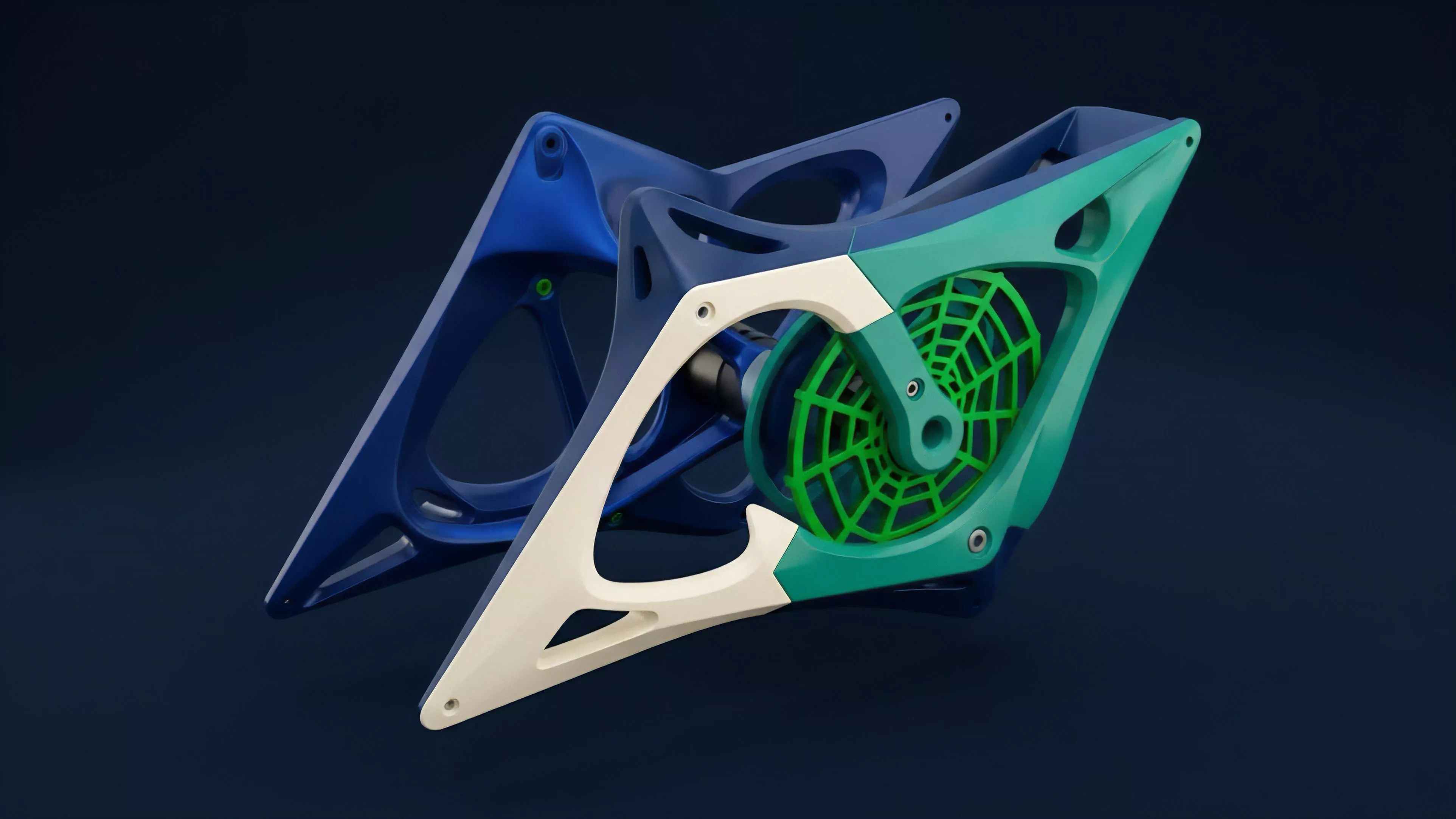

Most protocols utilize a hybrid architecture where the heavy computational load of option pricing and portfolio optimization occurs off-chain, with the resulting execution instructions validated and settled on-chain. This separation ensures that the protocol remains performant without sacrificing the trustless nature of decentralized settlement.
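The split between off-chain computation and on-chain validation can be illustrated with a small simulation. Everything here is a hypothetical stand-in: `propose_off_chain` represents the expensive solver, and `validate_on_chain` represents the cheap, deterministic checks a contract might run before settling. Real contracts would typically be written in a contract language rather than Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RebalanceInstruction:
    trade_size: float       # signed units of the underlying
    oracle_price: float     # price the off-chain solver used
    oracle_timestamp: int   # seconds since epoch


@dataclass(frozen=True)
class RiskLimits:
    max_trade_size: float
    max_abs_delta: float
    max_oracle_age_s: int


def propose_off_chain(current_delta: float, oracle_price: float,
                      oracle_timestamp: int) -> RebalanceInstruction:
    """Stand-in for the heavy pricing and optimization step.

    This sketch simply proposes the trade that flattens delta.
    """
    return RebalanceInstruction(-current_delta, oracle_price, oracle_timestamp)


def validate_on_chain(instr: RebalanceInstruction, current_delta: float,
                      limits: RiskLimits, now: int) -> bool:
    """Deterministic checks that do not trust the off-chain solver."""
    if now - instr.oracle_timestamp > limits.max_oracle_age_s:
        return False  # stale price input
    if abs(instr.trade_size) > limits.max_trade_size:
        return False  # trade exceeds the size limit
    # Recompute the resulting exposure from on-chain state.
    delta_after = current_delta + instr.trade_size
    return abs(delta_after) <= limits.max_abs_delta
```

The validator recomputes the post-trade delta from its own state instead of accepting a value supplied by the solver, which keeps the trust assumption on the on-chain side.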

- Collateral Optimization strategies prioritize the movement of assets between yield-bearing protocols and derivative margin accounts to maximize returns.

- Dynamic Hedging protocols continuously adjust derivative positions to maintain a delta-neutral stance regardless of underlying price action.

- Liquidity Provisioning agents use predictive models to adjust strike price ranges based on expected volatility expansion.
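As an illustration of the first strategy, a collateral split might reserve a buffered margin amount and route the remainder to a yield-bearing venue. The 1.5x buffer and the function name are assumptions chosen for the sketch.

```python
from typing import Tuple


def split_collateral(total: float, required_margin: float,
                     buffer_multiple: float = 1.5) -> Tuple[float, float]:
    """Return (margin_allocation, yield_allocation).

    Margin is funded first, with a safety buffer on top of the requirement.
    Only the surplus is sent to yield.
    """
    margin = min(total, required_margin * buffer_multiple)
    return margin, total - margin
```

A larger buffer lowers liquidation risk at the cost of idle capital, which is the trade-off between solvency and productivity that these strategies have to tune.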

Modern allocation strategies emphasize the synchronization of off-chain quantitative modeling with on-chain cryptographic enforcement.

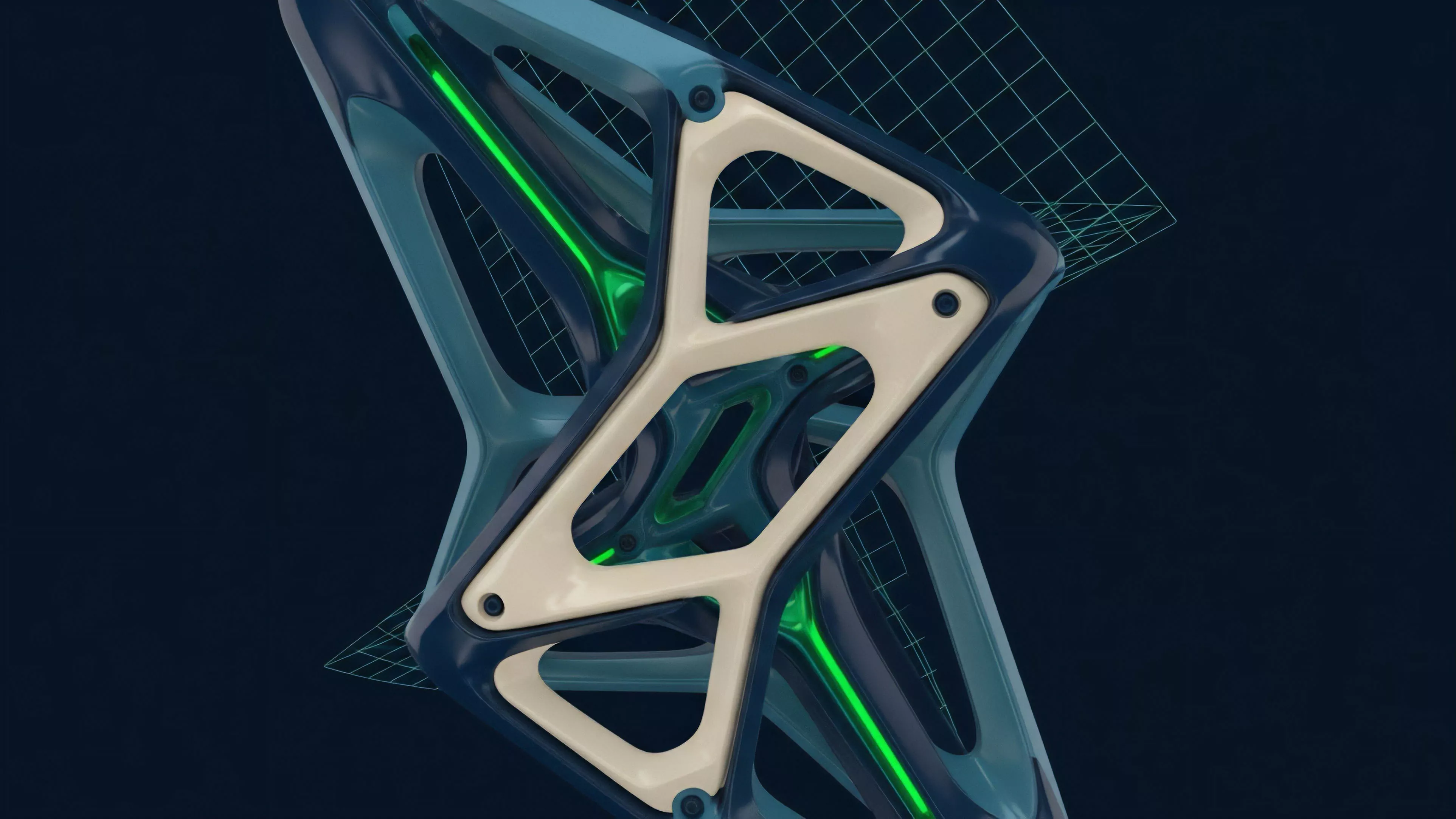

One might consider the protocol as a living organism that must balance the need for aggressive capital growth against the requirement for structural survival. This perspective shifts the focus from simple yield farming to the rigorous management of systemic risk, acknowledging that the most efficient allocation is the one that survives the next major volatility event.

Evolution

The trajectory of Automated Capital Allocation has shifted from rudimentary, rule-based rebalancing to sophisticated, AI-driven adaptive systems. Early iterations relied on simple, static thresholds that frequently resulted in over-trading during periods of high noise.

Modern systems now incorporate machine learning models to distinguish between transient price fluctuations and structural market shifts, allowing for more precise and cost-effective rebalancing.

Systemic Interconnection

The current state reflects a move toward cross-protocol integration, where capital moves seamlessly across different decentralized venues to capture arbitrage opportunities. This interconnectedness increases the speed of market discovery but also introduces significant contagion risks. If one major protocol experiences a technical failure, the automated nature of these systems may cause a rapid, multi-protocol liquidation cascade, as agents react to price signals in unison.
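The cascade dynamic can be shown with a toy simulation. It assumes each forced liquidation pushes the price down by a fixed fraction, which is a deliberate simplification, and the function and parameter names are hypothetical.

```python
from typing import Iterable, Tuple


def simulate_cascade(price: float, liquidation_prices: Iterable[float],
                     impact_per_liquidation: float) -> Tuple[int, float]:
    """Count liquidations triggered in sequence after an initial price move.

    Each liquidation multiplies the price by (1 - impact_per_liquidation),
    which may push the next position through its own liquidation price.
    Returns (number_liquidated, final_price).
    """
    levels = sorted(liquidation_prices, reverse=True)  # highest trigger first
    liquidated = 0
    while liquidated < len(levels) and price <= levels[liquidated]:
        liquidated += 1
        price *= 1.0 - impact_per_liquidation
    return liquidated, price


# With no price impact only one position fails. With 3% impact, three do.
isolated, _ = simulate_cascade(95.0, [96.0, 93.0, 90.0, 70.0], 0.0)
cascaded, _ = simulate_cascade(95.0, [96.0, 93.0, 90.0, 70.0], 0.03)
```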

| Evolutionary Stage | Primary Characteristic |
| --- | --- |
| Static | Fixed threshold rebalancing |
| Adaptive | Volatility-based threshold adjustment |
| Predictive | AI-driven signal-based deployment |
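The difference between the static and adaptive stages can be sketched with a rebalancing band that widens as volatility rises. The linear scaling rule and the cap below are illustrative assumptions, not a documented standard.

```python
def static_band(base_band: float) -> float:
    """Static stage: the tolerance never changes."""
    return base_band


def adaptive_band(base_band: float, current_vol: float, reference_vol: float,
                  max_multiple: float = 3.0) -> float:
    """Adaptive stage: widen the band in proportion to current volatility.

    The band never shrinks below `base_band` and never exceeds
    `base_band * max_multiple`.
    """
    scale = current_vol / reference_vol
    scale = min(max(scale, 1.0), max_multiple)
    return base_band * scale
```

A wider band in noisy periods means fewer trades are triggered by transient moves, which addresses the over-trading seen in fixed-threshold designs.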

In practice, these systems operate under a constant struggle against the limits of current blockchain throughput. Every increase in the sophistication of the allocation model runs into transaction costs and latency, forcing designers to prioritize simplicity over theoretical perfection.
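One simple way to express this constraint is a cost gate that allows a rebalance only when the risk it removes outweighs what it costs to execute. The heuristic below, which compares a one-sigma daily swing on the unhedged deviation against gas plus fees, is an assumption made for illustration rather than an established rule.

```python
def worth_rebalancing(deviation: float, price: float, daily_vol: float,
                      gas_cost: float, fee_rate: float) -> bool:
    """Heuristic gate: trade only if expected risk exceeds execution cost.

    deviation: units of underlying exposure away from target
    daily_vol: daily volatility as a fraction (0.04 = 4%)
    gas_cost:  fixed cost per transaction, in the same currency as price
    fee_rate:  proportional trading fee (0.0005 = 5 basis points)
    """
    notional = abs(deviation) * price
    expected_swing = notional * daily_vol        # one-sigma daily P&L if left unhedged
    trade_cost = gas_cost + notional * fee_rate  # fixed plus proportional cost
    return expected_swing > trade_cost
```

Because gas is a fixed cost, small deviations rarely justify a transaction, so the gate pushes the system toward fewer and larger rebalances.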

Horizon

The future of Automated Capital Allocation points toward the total abstraction of risk management for the end user. We anticipate the emergence of autonomous, protocol-native agents that manage entire portfolios across fragmented liquidity pools without human input.

These systems will likely utilize zero-knowledge proofs to demonstrate compliance with risk mandates while keeping proprietary strategies private.

- Cross-Chain Orchestration will enable capital to move across disparate blockchain architectures to exploit global yield differentials.

- Autonomous Risk Engines will self-correct based on historical failure data, creating a self-healing financial infrastructure.

- Institutional Integration will demand higher standards of transparency and auditability, pushing protocols toward standardized reporting interfaces.

The path forward depends on how purely autonomous agents and regulated institutional frameworks, which currently diverge, are reconciled. We must address the inherent paradox in which the pursuit of total automation concentrates risk in code bugs and oracle manipulation, which then act as single points of failure. The ultimate goal is a resilient, decentralized financial system where capital allocation occurs as an emergent property of the market rather than a central decision. How can decentralized protocols reconcile the necessity for rapid, automated response times with the requirement for human-in-the-loop oversight to prevent systemic collapse during unforeseen black swan events?