Essence

Attribute Verification functions as the foundational mechanism for validating specific characteristics of an underlying asset or derivative contract within decentralized environments. It ensures that data points, such as strike price, expiration date, or collateral quality, align with predefined protocol constraints before execution or settlement occurs. By formalizing these checks, the system establishes trust without requiring intermediaries to manually confirm asset status.

Attribute Verification serves as the automated gatekeeper that guarantees the integrity of derivative contract parameters before market execution.

This process relies on cryptographic proofs to confirm that an asset possesses the required properties to meet margin requirements or settlement obligations. Without this check, decentralized derivatives face systemic risks from invalid inputs or manipulated data, which could lead to cascading liquidations or incorrect payout calculations.

Origin

The necessity for Attribute Verification arose from the limitations of early decentralized exchange models, which lacked robust mechanisms to handle complex financial instruments. Initially, simple spot trades required minimal validation.

As protocols introduced options and perpetual swaps, the requirement to verify specific contract attributes, such as the delta-neutral status of a vault or the eligibility of a collateral token, became critical. Early systems relied on centralized oracles, which created single points of failure. The evolution toward decentralized, proof-based verification was a direct response to the vulnerability of these oracle-dependent architectures.

Developers identified that verifying the state of an asset, rather than merely its price, provided a more resilient foundation for complex financial products.

Theory

The theoretical framework for Attribute Verification rests on the intersection of formal verification and game theory. It treats contract parameters as inputs that must satisfy a set of Boolean conditions defined by the protocol. If any attribute fails to match the expected state, the smart contract rejects the transaction, protecting the protocol from invalid or malicious inputs.

| Parameter Type | Verification Method | Systemic Impact |
| --- | --- | --- |
| Collateral Quality | On-chain proof of reserves | Mitigates insolvency risk |
| Contract Expiration | Timestamp validation | Ensures timely settlement |
| Strike Price | Cryptographic oracle signature | Prevents invalid exercise |
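The Boolean-condition model described above can be sketched in a few lines of Python. This is a minimal illustration, not any protocol's actual implementation: the `ContractAttributes` fields, the eligible-collateral set, and the minimum collateral ratio are all hypothetical, and plain comparisons stand in for the proof-of-reserves and oracle-signature checks listed in the table.

```python
from dataclasses import dataclass
from typing import List, Optional
import time


@dataclass(frozen=True)
class ContractAttributes:
    """Hypothetical attribute set for a single option contract."""
    strike_price: float      # quoted in the settlement currency
    expiration_ts: int       # Unix timestamp in seconds
    collateral_token: str    # symbol of the posted collateral
    collateral_ratio: float  # posted collateral / required margin


# Illustrative constraints (assumed values; real ones are protocol-specific).
ELIGIBLE_COLLATERAL = {"USDC", "WETH"}
MIN_COLLATERAL_RATIO = 1.2


def failed_checks(attrs: ContractAttributes, now: int) -> List[str]:
    """Return the name of every Boolean condition that does not hold."""
    checks = {
        "strike_positive": attrs.strike_price > 0,
        "not_expired": attrs.expiration_ts > now,
        "collateral_eligible": attrs.collateral_token in ELIGIBLE_COLLATERAL,
        "collateral_sufficient": attrs.collateral_ratio >= MIN_COLLATERAL_RATIO,
    }
    return [name for name, ok in checks.items() if not ok]


def verify_or_reject(attrs: ContractAttributes, now: Optional[int] = None) -> None:
    """Raise ValueError if any condition fails, mirroring a reverted transaction."""
    now = int(time.time()) if now is None else now
    failures = failed_checks(attrs, now)
    if failures:
        raise ValueError("attribute verification failed: " + ", ".join(failures))
```

Returning the list of failed conditions, rather than a single true or false, makes it easier to see which attribute caused a rejection. A caller would invoke `verify_or_reject(attrs)` before passing the contract on to execution or settlement.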

The robustness of a decentralized derivative protocol is defined by the speed and accuracy with which it verifies the attributes of its underlying assets.

This structure ensures that market participants interact with valid, executable contracts. In adversarial environments, this verification prevents actors from submitting malicious data intended to exploit the margin engine. The system operates on the principle that verification must be continuous, as asset states change rapidly within volatile crypto markets.

Approach

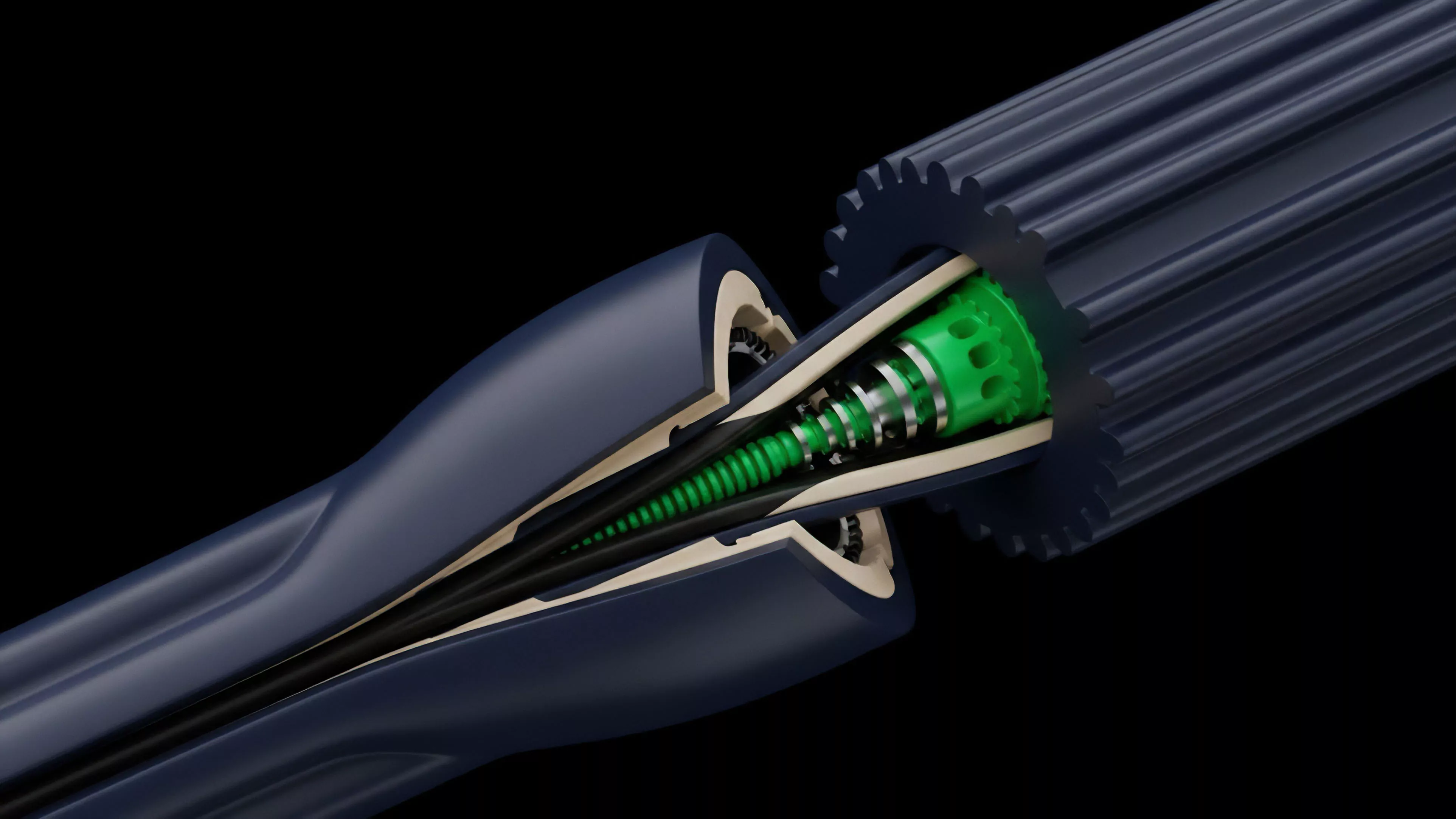

Current implementations of Attribute Verification utilize advanced cryptographic techniques such as zero-knowledge proofs to validate data without exposing underlying sensitive information.

This allows protocols to confirm that a wallet holds sufficient collateral for a specific option strategy while maintaining privacy.

- Merkle Proofs allow protocols to verify the inclusion of specific asset attributes within a larger, authenticated dataset.

- Multi-signature Schemes require multiple validators to attest to the validity of contract parameters before they are accepted by the settlement engine.

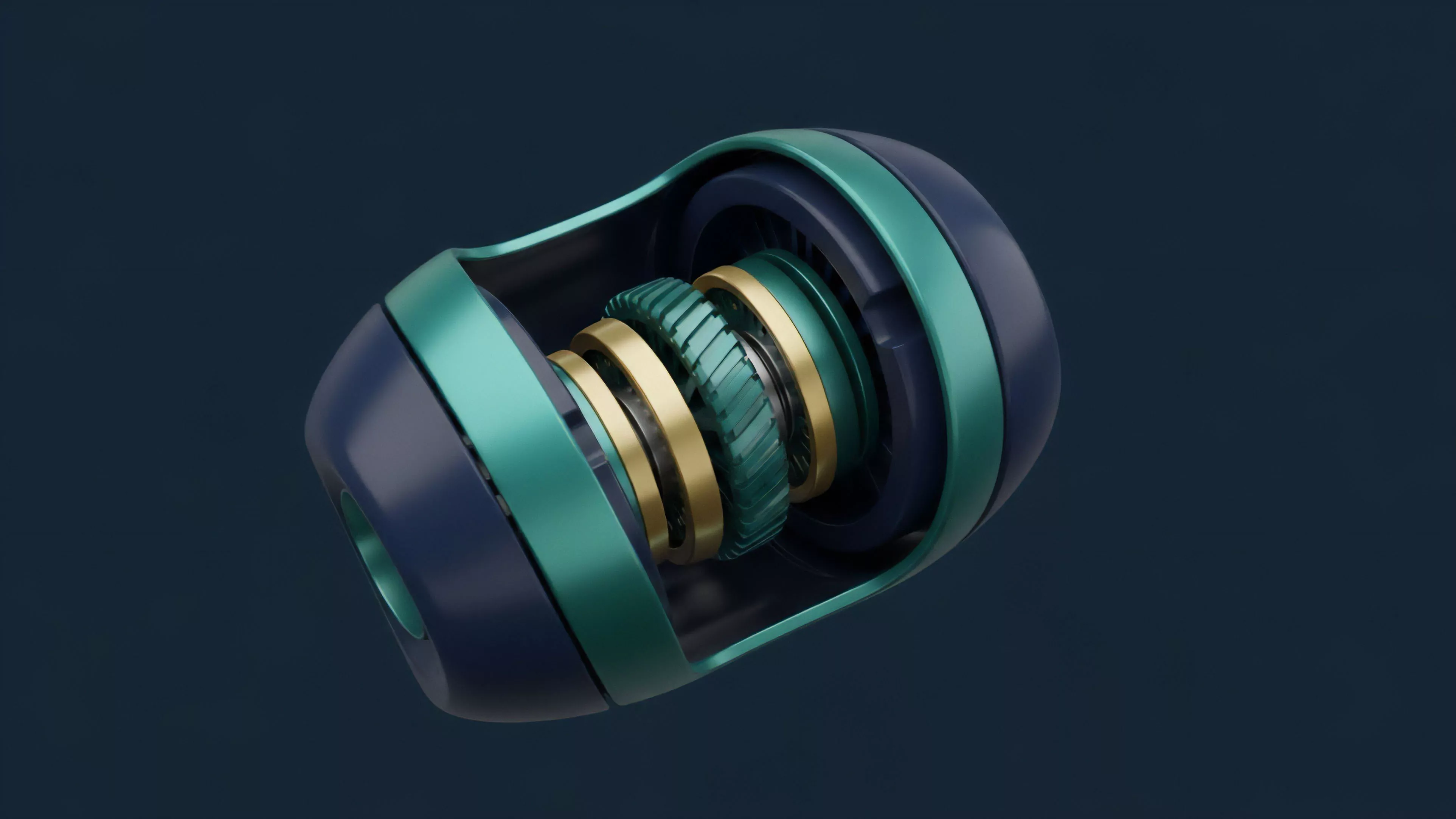

- State Commitment mechanisms lock the attributes of a derivative contract at the time of creation, preventing post-trade modification.
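Two of the mechanisms in the list above, state commitment and Merkle inclusion proofs, combine naturally, and a small Python sketch may make the idea concrete. Everything here is an assumption for illustration: the attribute dictionaries, the JSON encoding, the choice of SHA-256, and the function names. The leaf and node prefixes follow the domain-separation convention of RFC 6962, while duplicating the last node on odd layers is a simplification that production designs often avoid.

```python
import hashlib
import json
from typing import Dict, List, Tuple

Proof = List[Tuple[bytes, bool]]  # (sibling hash, sibling_is_left)


def commit(attributes: Dict) -> bytes:
    """Commit to attributes: hash a canonical JSON encoding of them."""
    encoded = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(b"\x00" + encoded.encode()).digest()


def _parent(left: bytes, right: bytes) -> bytes:
    """Hash two child nodes into their parent node."""
    return hashlib.sha256(b"\x01" + left + right).digest()


def _padded(layer: List[bytes]) -> List[bytes]:
    """Duplicate the last node when a layer has an odd number of nodes."""
    return layer + [layer[-1]] if len(layer) % 2 else layer


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Build every tree layer from the leaves up to the single root."""
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        layer = _padded(levels[-1])
        levels.append(
            [_parent(layer[i], layer[i + 1]) for i in range(0, len(layer), 2)]
        )
    return levels


def make_proof(levels: List[List[bytes]], index: int) -> Proof:
    """Collect the sibling hashes on the path from leaf `index` to the root."""
    proof = []
    for layer in levels[:-1]:
        layer = _padded(layer)
        sibling = index ^ 1
        proof.append((layer[sibling], sibling < index))
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: Proof, root: bytes) -> bool:
    """Recompute the root from a leaf and its proof, then compare."""
    node = leaf
    for sibling, sibling_is_left in proof:
        node = _parent(sibling, node) if sibling_is_left else _parent(node, sibling)
    return node == root
```

In this sketch a protocol would publish only the root. A participant who later presents a contract's attributes together with its proof can be checked against that root, and any post-trade change to the attributes alters the leaf hash, so the check fails.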

Market makers use these verification steps to manage their exposure efficiently. By automating the confirmation of collateral and contract status, liquidity providers reduce the capital drag associated with manual oversight. The goal is sub-second validation latency, so that checks keep pace with high-frequency trading.

Evolution

The trajectory of Attribute Verification has moved from manual, centralized validation to fully autonomous, protocol-native checks.

Early iterations struggled with latency and scalability, often bottlenecking trading activity. Today, the integration of Layer 2 solutions and specialized execution environments has enabled these checks to occur at speeds comparable to centralized venues.

Decentralized finance has evolved from trust-based parameter setting to a model where mathematical proof dictates the validity of every derivative trade.

The shift reflects a broader maturation of the decentralized financial stack. As protocols handle larger volumes and more complex instruments, the tolerance for verification failure has vanished. Modern architectures now treat Attribute Verification as a core component of the consensus layer, ensuring that no trade is finalized until its attributes are cryptographically proven.

Horizon

Future developments in Attribute Verification will focus on cross-chain interoperability and the integration of off-chain data sources.

As derivatives move across disparate blockchain networks, verifying attributes consistently will require standardized communication protocols. This will lead to a unified standard for asset metadata, enabling seamless interaction between protocols that previously operated in isolation.

- Universal Asset Standards will provide a common language for verifying attributes across different blockchain ecosystems.

- AI-Driven Verification agents will monitor real-time market data to dynamically adjust the stringency of attribute checks based on volatility.

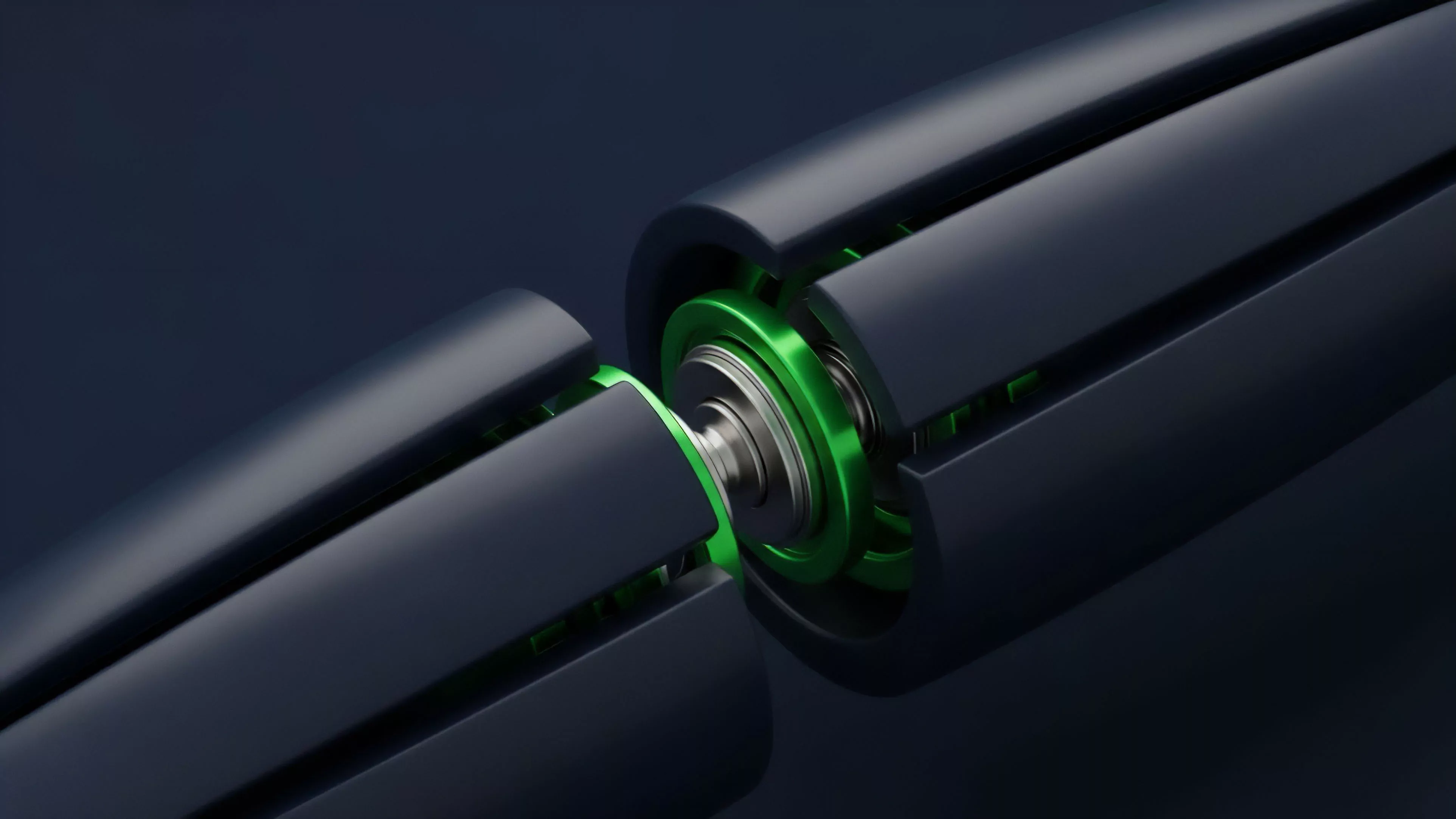

- Hardware-Level Validation will leverage trusted execution environments to verify sensitive attributes directly at the hardware layer.
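The idea of adjusting check stringency to volatility, mentioned in the list above, can be illustrated with a deliberately simple stand-in. The sketch below uses a fixed rule rather than an AI agent: it scales a required collateral ratio with the realized volatility of a recent price window. The constants, the function names, and the linear scaling rule are all assumptions chosen for illustration.

```python
import math
import statistics
from typing import Sequence

# Assumed parameters; a real protocol would set these through governance.
BASE_RATIO = 1.2    # collateral ratio required in calm markets
MAX_RATIO = 2.0     # upper bound on the required ratio
SENSITIVITY = 10.0  # how strongly the ratio reacts to volatility


def realized_volatility(prices: Sequence[float]) -> float:
    """Sample standard deviation of log returns over the price window."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    # stdev needs at least two returns; treat shorter windows as calm.
    return statistics.stdev(returns) if len(returns) >= 2 else 0.0


def required_collateral_ratio(prices: Sequence[float]) -> float:
    """Raise the required ratio linearly with volatility, capped at MAX_RATIO."""
    vol = realized_volatility(prices)
    return min(MAX_RATIO, BASE_RATIO * (1.0 + SENSITIVITY * vol))
```

A flat price window leaves the requirement at `BASE_RATIO`, while a choppy window pushes it toward `MAX_RATIO`. A learned model could replace the linear rule without changing how the result feeds into the attribute check.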

The ultimate objective is to create a frictionless financial environment where verification is invisible, instantaneous, and mathematically certain. This shift will allow for the proliferation of highly customized derivative products that are currently hindered by the overhead of existing validation processes.