Essence

Automated Market Maker (AMM) efficiency metrics quantify the relationship between liquidity provision and trade execution quality. These frameworks evaluate how effectively a protocol converts deposited assets into price discovery, minimizing slippage for traders while maximizing fee capture for liquidity providers. The core objective is to reduce the capital required to support a given volume of trading activity.

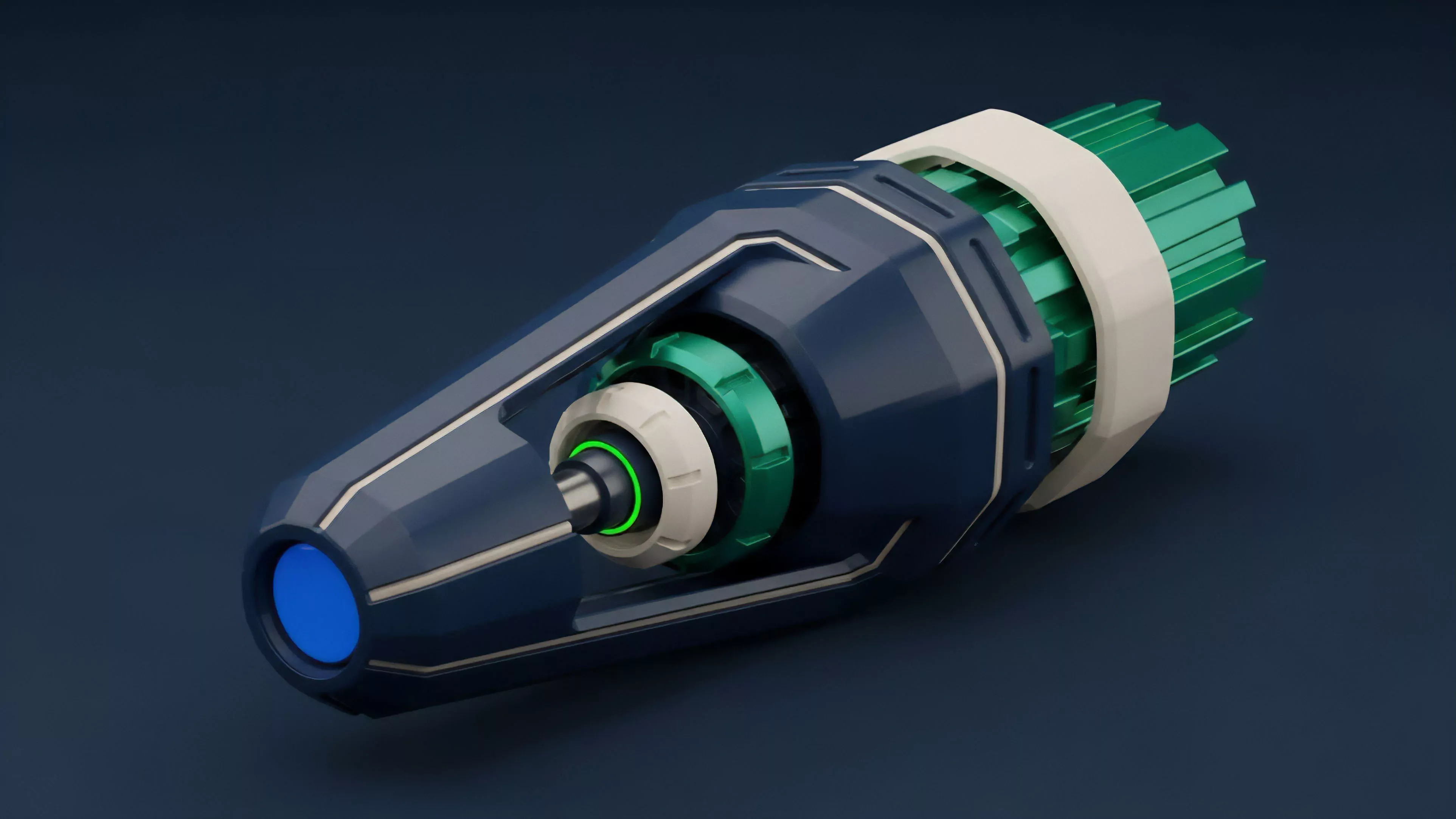

AMM efficiency metrics measure the conversion of stagnant liquidity into active trade execution quality.

Financial participants prioritize these metrics to assess the viability of liquidity pools, as higher efficiency directly correlates with lower transaction costs and increased protocol competitiveness. The metrics distinguish between theoretical depth and realized liquidity, identifying instances where capital is underutilized or misallocated within the automated price curve.

Origin

The genesis of these metrics traces back to the limitations inherent in early constant product market makers. Initial designs prioritized simplicity and censorship resistance over capital allocation optimization.

As decentralized finance matured, the need to manage impermanent loss and reduce price impact forced developers to derive standardized methods for measuring protocol performance.

- Capital Utilization Ratio emerged as the primary indicator for assessing how much of a pool is actively participating in trade execution versus remaining idle.

- Price Impact Analysis evolved from basic slippage observations into rigorous models calculating the cost of execution against the total liquidity depth.

- Fee Generation Velocity was adopted to track the return on capital, contrasting earned yield against the volatility risk borne by liquidity providers.

These tools were created to bridge the gap between abstract mathematical models and the realities of adversarial market environments where arbitrageurs constantly exploit pricing inefficiencies.

Theory

The mathematical structure of these metrics relies on the interaction between the bonding curve and the order flow. Efficient protocols minimize the deviation of the realized price from the oracle price. This deviation is often analyzed through the local convexity of the pricing function: the more sharply the curve bends around the current price, the more a trade of a given size moves the price.
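The deviation described above can be illustrated with a minimal sketch, assuming a constant product pool (x * y = k) and using the pool's own pre-trade spot price as a stand-in for the oracle price. The function name, the parameter names, and the default 0.3% fee are illustrative assumptions, not taken from any specific protocol.

```python
def quote_constant_product(reserve_in, reserve_out, amount_in, fee=0.003):
    """Quote a swap against a hypothetical x * y = k pool.

    Returns (amount_out, execution_price, shortfall), where shortfall is the
    fractional gap between the pre-trade spot price and the realized price.
    With fee > 0 the shortfall includes the fee as well as curve slippage.
    """
    if min(reserve_in, reserve_out, amount_in) <= 0:
        raise ValueError("reserves and trade size must be positive")
    if not 0 <= fee < 1:
        raise ValueError("fee must be in [0, 1)")

    # Pre-trade marginal price: output tokens per input token.
    spot_price = reserve_out / reserve_in

    # The fee is taken from the input before it reaches the curve (assumption).
    effective_in = amount_in * (1 - fee)

    # Constant product invariant: (x + dx) * (y - dy) = x * y.
    amount_out = reserve_out * effective_in / (reserve_in + effective_in)

    execution_price = amount_out / amount_in
    shortfall = 1 - execution_price / spot_price
    return amount_out, execution_price, shortfall


if __name__ == "__main__":
    for depth in (1_000, 10_000, 100_000):
        _, _, gap = quote_constant_product(depth, depth, 100, fee=0.0)
        print(f"pool depth {depth:>7}: shortfall {gap:.4%}")
```

Running the loop with a fixed order size against progressively deeper pools shows the shortfall shrinking as depth grows, which is the relationship the Slippage Coefficient row in the table summarizes.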

| Metric | Mathematical Focus | Systemic Utility |
| --- | --- | --- |
| Slippage Coefficient | Order size relative to liquidity | Execution quality assessment |
| Pool Concentration | Liquidity density near spot price | Capital efficiency optimization |
| Arbitrage Latency | Response time to price updates | Protocol safety and synchronization |

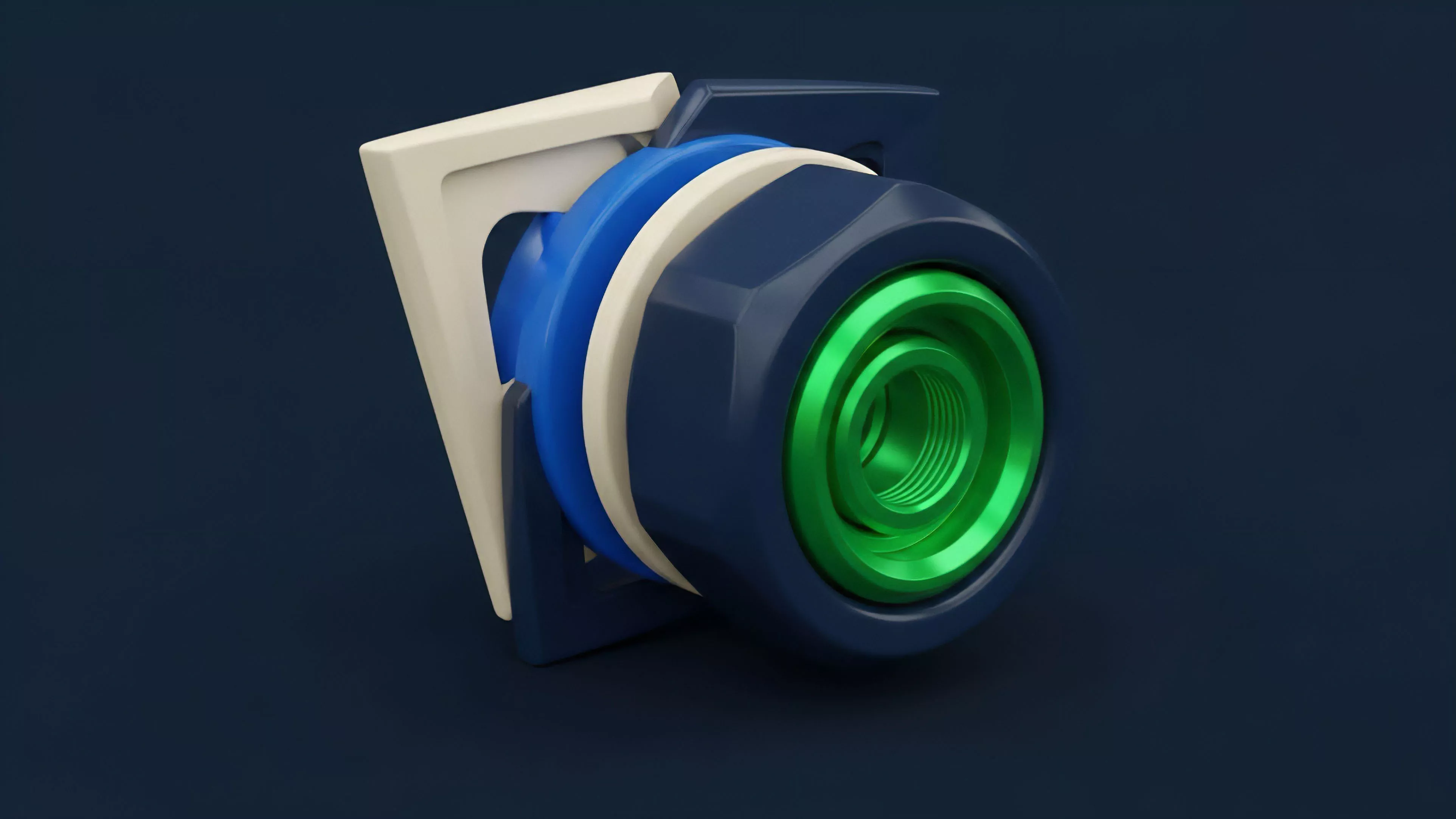

Efficient protocols align liquidity density with expected trade volume to minimize execution variance.

The theory posits that liquidity is a function of time and price. A pool that holds large reserves far from the current market price provides little utility, because those reserves are rarely touched by trades and therefore earn few fees. Consequently, sophisticated metrics now prioritize the concentration of liquidity within relevant price ranges to maximize the yield per unit of capital.
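To make the concentration argument concrete, the sketch below compares the capital needed to provide the same depth over a bounded price range versus the full range. It assumes the reserve formulas used by Uniswap v3-style concentrated liquidity (reserves x = L(1/√P − 1/√P_b) and y = L(√P − √P_a) for liquidity L in the range [P_a, P_b]); the source does not name a protocol, so treat this model and the function name as assumptions.

```python
from math import sqrt


def capital_efficiency(price, lower, upper):
    """Capital multiplier of a range position versus a full-range position.

    Both positions are assumed to supply the same liquidity L at `price`.
    The result is (capital needed full-range) / (capital needed in range),
    with capital valued in the quote token at the current price.
    """
    if not 0 < lower < price < upper:
        raise ValueError("require 0 < lower < price < upper")

    sp, sa, sb = sqrt(price), sqrt(lower), sqrt(upper)

    # Value per unit of liquidity for the range position:
    #   x * P + y = (1/sp - 1/sb) * P + (sp - sa) = 2*sp - P/sb - sa
    range_value = 2 * sp - price / sb - sa

    # Full range is the limit lower -> 0, upper -> infinity.
    full_range_value = 2 * sp

    return full_range_value / range_value


if __name__ == "__main__":
    for width in (0.5, 0.81, 0.99):
        eff = capital_efficiency(1.0, width, 1.0 / width)
        print(f"range [{width:.2f}, {1 / width:.2f}]: {eff:.1f}x")
```

For a range centered geometrically on the current price, this expression reduces to 1 / (1 − (P_a / P_b)^(1/4)), so narrowing the range raises the multiplier. The trade-off is that the position stops earning fees once the price leaves the range.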

Approach

Current strategies involve real-time monitoring of on-chain state transitions to compute efficiency in high-frequency environments.

Analysts now use granular event logs to reconstruct an order-book-style view of pool depth, identifying how liquidity is drained or added in response to market volatility.

- Concentrated Liquidity Monitoring requires tracking the active tick ranges where the majority of trades occur.

- Volatility-Adjusted Yield accounts for the impermanent loss incurred during periods of high price swings, providing a more accurate net return.

- Execution Path Analysis involves tracing how large trades move through multiple liquidity tiers to determine the true cost of liquidity.

These methods move beyond static snapshots, favoring dynamic tracking that adapts to shifting market conditions. This requires constant calibration of risk parameters, as the efficiency of a pool is intrinsically linked to the volatility of the underlying assets.
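A volatility-adjusted yield of the kind listed above can be sketched as follows, assuming a 50/50 constant product position, for which impermanent loss relative to simply holding the assets is 2√r / (1 + r) − 1 when the price ratio changes by a factor r. The additive combination of fees and impermanent loss, and the function names, are simplifying assumptions for illustration.

```python
from math import sqrt


def impermanent_loss(price_ratio):
    """Fractional loss versus holding for a 50/50 constant product position.

    `price_ratio` is the end price divided by the entry price.
    The result is zero when the price is unchanged and negative otherwise.
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return 2 * sqrt(price_ratio) / (1 + price_ratio) - 1


def net_lp_return(fee_return, price_ratio):
    """Fee income net of impermanent loss, both measured against holding.

    `fee_return` is the fees earned over the period as a fraction of the
    value of the equivalent buy-and-hold portfolio (an assumption made here
    so the two terms can simply be added).
    """
    return fee_return + impermanent_loss(price_ratio)


if __name__ == "__main__":
    for ratio in (1.0, 1.25, 2.0, 4.0):
        il = impermanent_loss(ratio)
        net = net_lp_return(0.05, ratio)
        print(f"price x{ratio:<4}: IL {il:+.2%}, net with 5% fees {net:+.2%}")
```

In this model a 5% fee return is fully offset once the price has roughly doubled, which is why headline fee yield alone overstates provider returns in volatile pairs.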

Evolution

The trajectory of these metrics shifted from passive observation to active management. Early iterations focused on simple volume metrics, whereas contemporary systems incorporate complex risk-adjusted performance indicators.

This transition reflects the increasing sophistication of liquidity providers who demand transparent data to justify their capital allocation.

Liquidity management has evolved from passive provision toward high-precision, range-based capital deployment.

The market has moved away from uniform liquidity distribution toward segmented, specialized pools. This evolution necessitated the development of metrics that can isolate the performance of specific liquidity tranches, enabling a more nuanced understanding of how protocol architecture influences risk and reward. The integration of off-chain data feeds with on-chain execution has further refined these metrics, allowing for predictive modeling of liquidity needs.

Horizon

Future developments will center on the automated recalibration of liquidity based on predictive volatility modeling.

As protocols gain the ability to adjust their own bonding curves in response to external data, efficiency metrics will become embedded directly into the consensus layer.

- Predictive Liquidity Allocation will use machine learning to anticipate order flow and adjust pool concentration before trades arrive.

- Cross-Protocol Efficiency Benchmarking will allow participants to compare liquidity performance across different blockchain architectures and standards.

- Automated Risk Hedging will link efficiency metrics to derivative products, allowing providers to offset impermanent loss in real time.

This trajectory suggests a future where liquidity is managed by autonomous agents that optimize for efficiency without human intervention. The ultimate objective remains the creation of a seamless, high-velocity financial system where capital is always deployed at the point of highest utility.