Essence

Algorithmic Trading Latency is the time elapsed between the generation of a trading signal and the execution of the corresponding order on a decentralized or centralized exchange. It comprises message propagation, network consensus, and matching engine processing. In digital asset derivatives, this delay acts as a silent tax on capital: deviations of microseconds on centralized venues, or of a single block on-chain, can determine the profitability of arbitrage, market making, and directional hedging strategies.
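One rough way to put a number on this "silent tax" is to ask how far the price typically moves while an order is in flight. The sketch below assumes returns follow a driftless Brownian motion, so volatility scales with the square root of time. The function name `latency_drift_bps`, the 80% annualized volatility, and the sample latencies are illustrative assumptions, not figures from any particular venue.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600

def latency_drift_bps(annual_vol: float, latency_s: float) -> float:
    """One-standard-deviation price move, in basis points, over the latency window.

    Assumes driftless Brownian returns, so volatility scales with sqrt(time).
    """
    return annual_vol * math.sqrt(latency_s / SECONDS_PER_YEAR) * 1e4

# Hypothetical latencies: 1 ms (co-located), 0.5 s (off-chain book), 12 s (one block)
for latency in (0.001, 0.5, 12.0):
    print(f"{latency:>7.3f} s -> {latency_drift_bps(0.80, latency):.3f} bps")
```

Under these assumptions a 12-second delay corresponds to a typical move of roughly 5 basis points, which is large relative to the spreads many market-making strategies target.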

Algorithmic trading latency functions as the fundamental temporal constraint determining the efficacy of automated execution within decentralized markets.

The systemic relevance of this metric extends beyond individual PnL calculations. It dictates the efficiency of price discovery mechanisms. When latency is non-uniform across participants, it creates an asymmetric informational advantage, enabling predatory behaviors such as front-running or sandwich attacks.

Understanding this concept requires shifting focus from theoretical model pricing to the physical reality of data transmission across distributed networks.

Origin

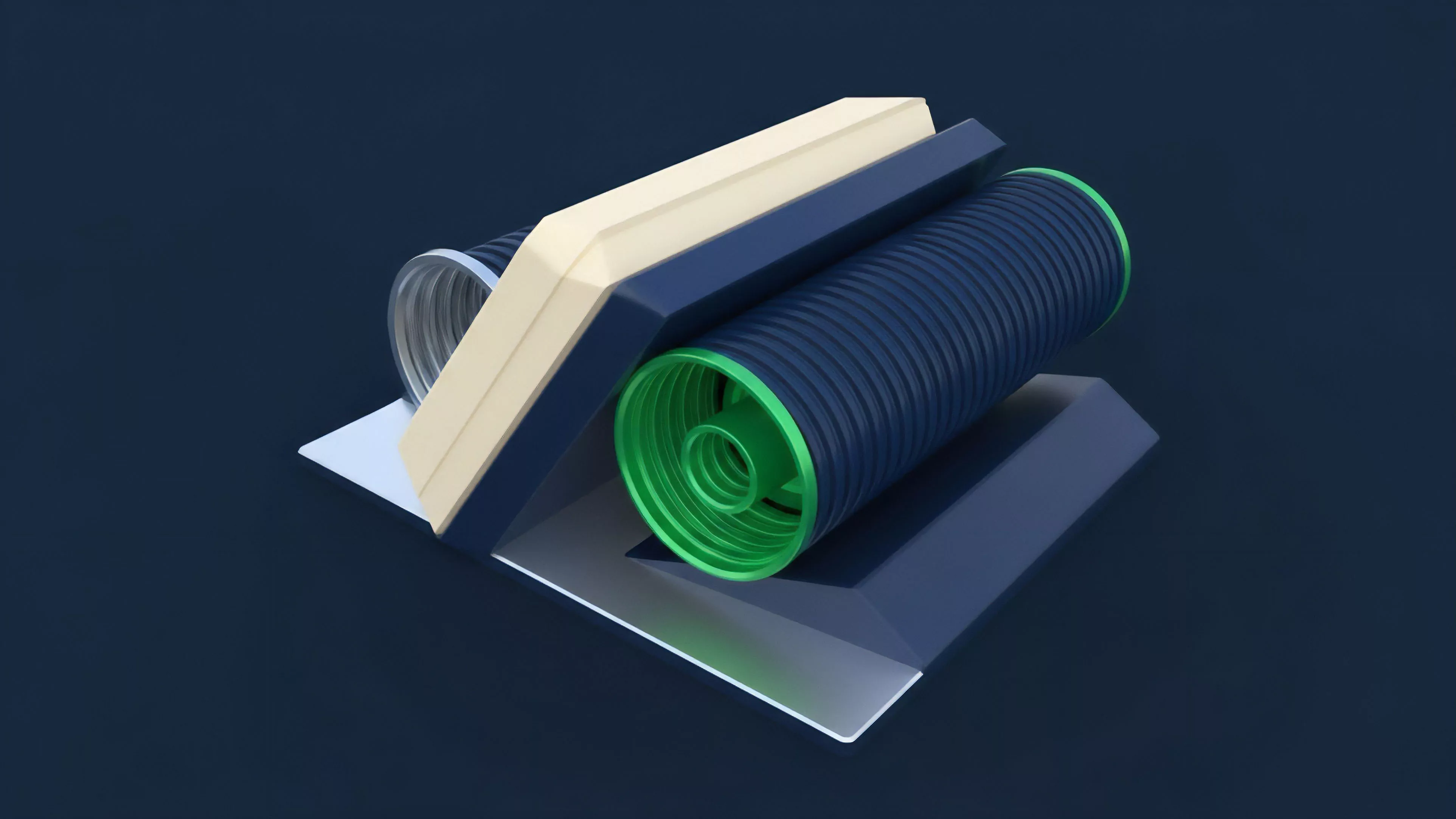

The genesis of Algorithmic Trading Latency in digital assets mirrors the evolution of high-frequency trading in traditional equity markets but introduces unique cryptographic bottlenecks. Early decentralized exchanges relied on simple order books that lacked sophisticated matching engines, resulting in high overhead for every transaction. As derivatives protocols gained traction, the necessity for sub-second settlement led to the development of off-chain order books and on-chain settlement layers.

- Protocol Physics: The shift from monolithic blockchains to modular architectures highlights the trade-offs between security and execution speed.

- Consensus Mechanisms: The transition from Proof of Work to Proof of Stake replaced probabilistic block intervals with fixed slot times, making the timing of order confirmation more predictable.

- Liquidity Fragmentation: The proliferation of cross-chain bridges created additional layers of delay, as state synchronization across heterogeneous environments became a primary hurdle.

These architectural choices reflect a broader attempt to reconcile the trustless nature of distributed ledgers with the performance requirements of professional financial instruments.
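The effect of the consensus mechanism on waiting time can be illustrated with a simplified model. Assume a transaction arrives at a random moment and is included in the very next block, and let $T$ be the mean block interval. If blocks arrive as a Poisson process with rate $\lambda = 1/T$ (a common approximation for Proof of Work), the wait $W$ is memoryless; if blocks arrive in fixed slots of length $T$, the arrival time $u$ is uniform within the slot:

$$
\mathbb{E}[W_{\text{Poisson}}] = \frac{1}{\lambda} = T,
\qquad
\mathbb{E}[W_{\text{slot}}] = \frac{1}{T}\int_0^T (T - u)\,du = \frac{T}{2}
$$

$$
\operatorname{Var}[W_{\text{Poisson}}] = T^2,
\qquad
\operatorname{Var}[W_{\text{slot}}] = \frac{T^2}{12}
$$

Under these assumptions, fixed slots halve the expected wait and cut its variance by a factor of twelve. The model ignores congestion, missed slots, and finality delays, so it should be read as an intuition aid rather than a description of any specific chain.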

Theory

The mathematical modeling of Algorithmic Trading Latency treats total latency as a cost function, often represented as the sum of network transit time, validation delay, and matching engine processing time. Quantitatively, this is expressed through stochastic modeling of block arrival times and network congestion. Participants must account for these variables when hedging the Greeks of their option portfolios, because execution slippage during high-volatility events compounds the time decay of the option premium.

Quantifying latency involves modeling the stochastic intersection of network propagation delays and protocol-specific consensus confirmation intervals.
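The additive model described above can be sketched as a small Monte Carlo simulation. Every distribution and parameter here is an assumption chosen for illustration: lognormal network transit, a uniform wait for the next fixed slot, and an exponential matching-engine delay. The function name `simulate_total_latency` is hypothetical.

```python
import random
import statistics

def simulate_total_latency(n: int, slot_s: float = 2.0, seed: int = 7) -> list[float]:
    """Draw n samples of total latency (seconds) as transit + consensus + matching."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        transit = rng.lognormvariate(-3.0, 0.5)  # median about 0.05 s (assumed)
        consensus = rng.uniform(0.0, slot_s)     # wait until the next fixed slot
        matching = rng.expovariate(1 / 0.002)    # mean 2 ms (assumed)
        samples.append(transit + consensus + matching)
    return samples

samples = simulate_total_latency(50_000)
cuts = statistics.quantiles(samples, n=100)  # 99 percentile cut points
print(f"mean={statistics.fmean(samples):.3f}s "
      f"median={cuts[49]:.3f}s p99={cuts[98]:.3f}s")
```

With these parameters the consensus wait dominates the total, and tail percentiles matter more than the mean for a strategy that must be filled before the price moves. Swapping in measured distributions for a specific venue would be the natural next step.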

Adversarial agents within these markets exploit these delays through strategic order placement. By analyzing the mempool, automated bots identify pending transactions and submit orders with higher gas fees to preempt the original trade. In game-theoretic terms, this turns Algorithmic Trading Latency into a competitive resource: the ability to minimize transmission time correlates directly with the capture of economic rent.
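The preemption mechanism can be illustrated with a toy model in which a block is ordered purely by gas price, with arrival time used only to break ties. Real block builders apply more elaborate policies, so the `PendingTx` type and the `order_by_fee` function are hypothetical simplifications.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PendingTx:
    sender: str
    gas_price_gwei: float
    arrival_ms: int  # time the transaction was first seen in the mempool

def order_by_fee(mempool: list[PendingTx]) -> list[PendingTx]:
    """Highest gas price first; earlier arrival only breaks ties."""
    return sorted(mempool, key=lambda tx: (-tx.gas_price_gwei, tx.arrival_ms))

victim = PendingTx("trader", 30.0, arrival_ms=0)
bot = PendingTx("bot", 30.1, arrival_ms=150)  # sees the victim, then outbids it

block = order_by_fee([victim, bot])
print([tx.sender for tx in block])  # ['bot', 'trader']
```

Although the bot's transaction arrives 150 ms later, a marginally higher fee places it first. In this model the bot only needs to observe and respond before the block is built, which is why propagation speed becomes the contested resource.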

| Latency Component | Impact Factor |
| --- | --- |
| Network Transit | High |
| Consensus Finality | Extreme |
| Matching Engine | Moderate |

Approach

Current strategies for mitigating Algorithmic Trading Latency center on the deployment of sophisticated infrastructure, including co-location near validator nodes and the use of private mempools. Professional market makers utilize specialized hardware and custom networking stacks to ensure their orders reach the sequencer or matching engine ahead of retail flow. This pursuit of speed necessitates a deep understanding of the underlying protocol architecture, including the specific gossip protocols used for transaction propagation.

- Private Mempools: These venues allow traders to bypass public transaction broadcasting, reducing the exposure to front-running bots.

- Sequence Optimization: Advanced protocols now utilize centralized sequencers to order transactions before submitting them to the base layer, effectively standardizing latency for participants.

- Batch Processing: Aggregating multiple orders into a single transaction minimizes the per-order impact of network congestion and gas fee volatility.
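The batching point in the list above comes down to amortizing a fixed per-transaction overhead across many orders. The sketch below uses Ethereum's intrinsic cost of 21,000 gas per transaction as the fixed part; the 60,000 gas per order and the function name `gas_per_order` are assumptions for illustration, since actual costs depend on the contract.

```python
BASE_TX_GAS = 21_000  # Ethereum's intrinsic gas cost per transaction

def gas_per_order(orders_in_batch: int, per_order_gas: int = 60_000) -> float:
    """Average gas per order when a batch shares one transaction's fixed overhead."""
    if orders_in_batch < 1:
        raise ValueError("a batch needs at least one order")
    return BASE_TX_GAS / orders_in_batch + per_order_gas

for size in (1, 10, 100):
    print(f"batch of {size:>3}: {gas_per_order(size):,.0f} gas per order")
```

The saving flattens quickly as the batch grows, and larger batches take longer to fill, so batching trades a lower per-order cost for additional queuing delay.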

This landscape is not static; it is a constant arms race between those who optimize for raw speed and those who design protocols to equalize execution access.

Evolution

The trajectory of Algorithmic Trading Latency has moved from simple, unoptimized broadcast models to highly engineered, low-latency infrastructure. Initial designs treated all transactions as equal, leading to congestion and unpredictable settlement times. Today, the industry prioritizes the separation of execution and settlement.

By offloading the order matching process to high-performance off-chain environments, protocols achieve throughput levels comparable to centralized exchanges.

Evolution in market structure shifts the burden of latency management from the individual trader to the protocol architecture itself.

The pursuit of lower latency can obscure the risk of centralization, since only well-capitalized entities can afford the infrastructure needed to compete. This creates a feedback loop in which the most performant protocols attract the most liquidity, which in turn demands even more robust infrastructure to handle the increased transaction load.

Horizon

The future of Algorithmic Trading Latency lies in the maturation of zero-knowledge proofs and hardware-accelerated cryptographic verification. These technologies promise to allow for near-instantaneous verification of complex derivative trades without sacrificing the decentralization of the settlement layer.

As these tools become standard, the focus will shift from minimizing transmission time to optimizing for capital efficiency and risk management under stress.

| Emerging Tech | Latency Benefit |
| --- | --- |
| ZK-Rollups | Scalable Execution |
| FPGA Accelerators | Hardware-Level Speed |
| Proposer-Builder Separation | Fair Order Flow |

Ultimately, the goal is a financial system where latency is a transparent, predictable variable rather than a source of hidden rent. The ability to model and manage these temporal risks will define the next generation of successful market participants and protocol architects.