Essence

Algorithmic trading failures within crypto derivatives represent systemic malfunctions where automated execution logic diverges from intended market outcomes. These failures manifest when programmed strategies encounter unforeseen liquidity constraints, protocol latency, or unexpected price action that triggers unintended order execution. Such events often lead to cascading liquidations, as the automated nature of these systems removes human judgment from risk management during periods of extreme volatility.

Automated trading failures occur when execution logic breaks down under extreme market stress, leading to unintended and often catastrophic financial consequences.

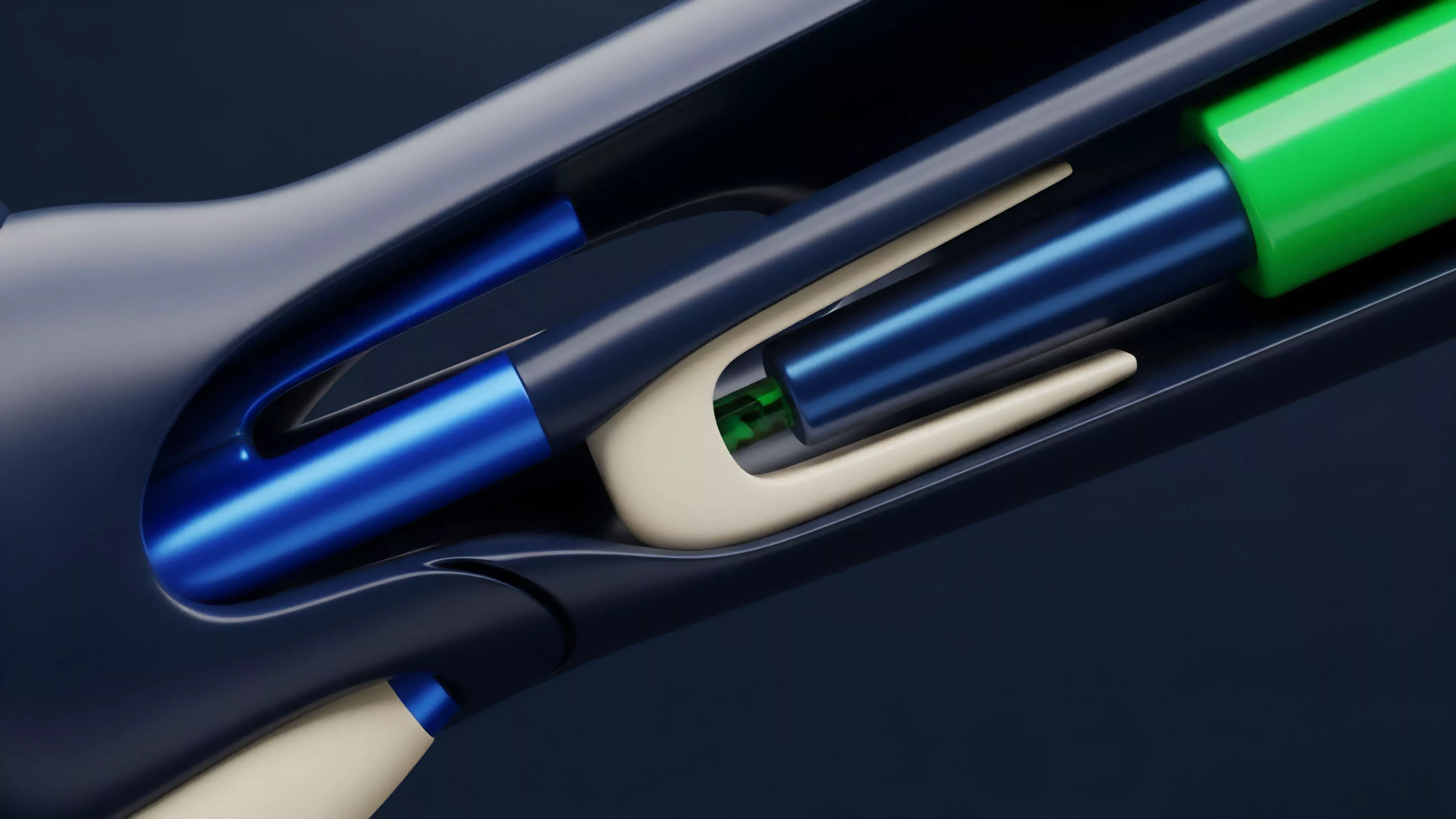

The core mechanism of these failures involves a breakdown in the feedback loop between market data ingestion and order execution. When a system relies on stale pricing data or fails to account for the depth of an order book, it initiates trades that exacerbate price slippage. This creates a self-reinforcing cycle where the algorithm’s own actions drive prices further away from the expected equilibrium, eventually triggering liquidation engines and causing widespread collateral damage across the protocol.
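The two preconditions named above, stale pricing data and ignored order book depth, can be checked before an order is sent. The following is a minimal sketch, not a production guard: the `Quote` fields, the function name `should_send_sell`, and both thresholds are hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    price: float      # last observed price
    timestamp: float  # time of observation, in seconds
    bid_depth: float  # size resting on the bid side near the best price


def should_send_sell(quote: Quote, order_size: float, now: float,
                     max_age_s: float = 2.0,
                     max_depth_fraction: float = 0.1) -> bool:
    """Return True only if the quote is fresh and the order is small
    relative to visible depth. Thresholds are illustrative assumptions."""
    if now - quote.timestamp > max_age_s:
        return False  # stale data: do not trade on an outdated price
    if quote.bid_depth <= 0:
        return False  # no visible liquidity to sell into
    if order_size > max_depth_fraction * quote.bid_depth:
        return False  # order would consume too much of the book
    return True
```

Refusing to trade is the conservative default here: a skipped order costs an opportunity, while an order placed against a stale or thin book feeds the self-reinforcing cycle described above.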

Origin

The genesis of these failures lies in the rapid transition from manual order management to high-frequency, protocol-native execution within decentralized finance.

Early market makers utilized rudimentary scripts to maintain tight spreads, but these tools lacked the sophistication to handle the idiosyncratic risks inherent in blockchain settlement. As decentralized derivatives grew, developers imported traditional quantitative finance models without adapting them to the realities of non-deterministic block times and transparent, front-runnable mempools.

- Latency sensitivity in order matching engines leads to execution delays during periods of high network congestion.

- Liquidity fragmentation across decentralized exchanges prevents algorithms from effectively hedging positions in real time.

- Oracle manipulation allows external actors to feed false pricing data to automated strategies, forcing unintended liquidations.

These architectural limitations were initially overlooked in the rush to capture yield and provide liquidity. The assumption that market efficiency would mirror traditional finance proved faulty, as the absence of centralized circuit breakers meant that errors propagated at the speed of consensus. The resulting landscape is one where code vulnerabilities and market microstructure flaws intersect to create unique failure vectors.

Theory

Quantitative modeling of algorithmic failures requires an analysis of risk sensitivity and state-space transitions.

Under normal conditions, an algorithm operates within a defined parameter set, often assuming a Gaussian distribution of returns. In decentralized markets, returns exhibit heavy tails, so models calibrated on that assumption understate the likelihood of extreme moves. These failures are essentially state-space transitions where the system moves from a stable equilibrium to a chaotic, uncontrolled liquidation state.
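To make the gap between Gaussian and heavy-tailed assumptions concrete, the sketch below compares the probability of a large downside move under a single Gaussian with the same probability under a two-regime mixture (mostly calm, occasionally stressed). The mixture is only a simple stand-in for heavy tails, and the regime probability and volatilities are assumed values, not estimates from any market.

```python
from math import sqrt
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()


def gaussian_tail(k: float) -> float:
    """P(return < -k standard deviations) under a single Gaussian."""
    return STANDARD_NORMAL.cdf(-k)


def mixture_tail(k: float, p_stress: float = 0.05,
                 calm_sigma: float = 1.0, stress_sigma: float = 4.0) -> float:
    """Same tail probability when returns come from a calm regime most of
    the time and a stressed regime with probability p_stress.
    The threshold is expressed in units of the mixture's overall sigma."""
    total_sigma = sqrt(p_stress * stress_sigma ** 2
                       + (1 - p_stress) * calm_sigma ** 2)
    threshold = -k * total_sigma
    return (p_stress * NormalDist(0.0, stress_sigma).cdf(threshold)
            + (1 - p_stress) * NormalDist(0.0, calm_sigma).cdf(threshold))
```

With these assumed parameters, `mixture_tail(5)` is several orders of magnitude larger than `gaussian_tail(5)`, even though both distributions are measured against their own standard deviation. A risk limit sized for the Gaussian case would therefore be breached far more often than the model predicts.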

| Failure Vector | Mechanism | Systemic Impact |
| --- | --- | --- |
| Flash Crash | Rapid liquidity withdrawal | Cascading margin calls |
| Oracle Failure | Stale or manipulated price feeds | Incorrect collateral valuation |
| Feedback Loop | Algorithmic sell-pressure | Market-wide price depression |

The mathematical vulnerability stems from the reliance on deterministic execution in a non-deterministic environment. When an algorithm triggers a market sell order during low liquidity, the price impact function is non-linear. The resulting slippage consumes collateral faster than the system can rebalance, leading to insolvency.
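The non-linearity of price impact can be illustrated by walking a market sell order down the bid side of an order book. This is a sketch under stated assumptions: the function name `market_sell_slippage` and the example book are hypothetical, and real venues add fees, hidden liquidity, and latency that are ignored here.

```python
def market_sell_slippage(bids: list[tuple[float, float]], size: float) -> float:
    """Fractional slippage of a market sell versus the best bid.

    bids: (price, quantity) levels sorted from best (highest) price down.
    """
    if size <= 0:
        raise ValueError("size must be positive")
    best_bid = bids[0][0]
    remaining = size
    proceeds = 0.0
    for price, quantity in bids:
        fill = min(remaining, quantity)  # take what this level offers
        proceeds += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("order exceeds visible depth")
    average_price = proceeds / size
    return (best_bid - average_price) / best_bid


# Hypothetical thin book: liquidity falls away quickly below the best bid.
book = [(100.0, 1.0), (99.0, 1.0), (95.0, 2.0), (80.0, 4.0)]
for order_size in (2.0, 4.0, 8.0):
    print(order_size, market_sell_slippage(book, order_size))
```

On this example book, selling 2, 4, and 8 units costs roughly 0.5%, 2.75%, and 11.4% against the best bid. Each doubling of size more than doubles the slippage, which is why collateral can be consumed faster than the system rebalances when liquidity is thin.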

This is where the pricing model becomes truly elegant, and dangerous if ignored. My professional stake in this analysis comes from observing how these models consistently underestimate the correlation between liquidity withdrawal and volatility spikes during periods of stress.

Approach

Current management of algorithmic failure focuses on robust stress testing and the implementation of circuit breakers within smart contracts. Developers now incorporate more sophisticated volatility estimators that adjust position sizing based on real-time order book depth.
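One way to read "position sizing based on real-time order book depth" is to scale exposure inversely with a volatility estimate and then cap it at a fraction of visible depth. The sketch below assumes an exponentially weighted volatility estimator with a decay of 0.94 and a 10% depth cap. Both are illustrative choices, and the function names are hypothetical rather than taken from any protocol.

```python
from math import sqrt


def ewma_volatility(returns: list[float], decay: float = 0.94) -> float:
    """Exponentially weighted volatility of a return series (zero mean assumed)."""
    if not returns:
        raise ValueError("returns must not be empty")
    variance = returns[0] ** 2
    for r in returns[1:]:
        variance = decay * variance + (1 - decay) * r ** 2
    return sqrt(variance)


def position_size(equity: float, risk_fraction: float, volatility: float,
                  bid_depth: float, max_depth_fraction: float = 0.1) -> float:
    """Notional size: volatility-scaled, then capped by visible depth."""
    if volatility <= 0:
        raise ValueError("volatility must be positive")
    vol_scaled = equity * risk_fraction / volatility  # shrinks as volatility rises
    depth_cap = max_depth_fraction * bid_depth        # never dominate the book
    return min(vol_scaled, depth_cap)
```

Taking the minimum of the two limits means that whichever constraint is tighter at the moment controls the position, so a calm but illiquid market still results in a small order.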

Furthermore, protocols utilize multi-source oracle aggregators to mitigate the risk of a single point of failure in pricing data. These approaches seek to introduce latency-resistant logic that can pause execution when market conditions deviate from established safety thresholds.
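A multi-source aggregator with a pause condition might look like the following sketch. It assumes each source reports a `(price, timestamp)` pair. The function name `aggregate_price`, the quorum of three, the 30-second freshness window, and the 2% deviation band are all hypothetical parameters chosen for illustration.

```python
from statistics import median
from typing import Optional


def aggregate_price(quotes: dict[str, tuple[float, float]], now: float,
                    max_age_s: float = 30.0, min_sources: int = 3,
                    max_deviation: float = 0.02) -> Optional[float]:
    """Median of fresh, mutually consistent sources, or None to signal a pause.

    quotes maps a source name to (price, timestamp in seconds).
    """
    fresh = [price for price, ts in quotes.values()
             if now - ts <= max_age_s and price > 0]
    if len(fresh) < min_sources:
        return None  # too few live feeds: pause rather than guess
    mid = median(fresh)
    # Discard sources that sit far from the median (possible manipulation).
    agreeing = [p for p in fresh if abs(p - mid) / mid <= max_deviation]
    if len(agreeing) < min_sources:
        return None  # feeds disagree too much: pause execution
    return median(agreeing)
```

Returning `None` instead of a best guess pushes the decision to the caller, which can halt execution until the feeds recover. A single manipulated feed is filtered out, while broad disagreement among sources stops trading altogether.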

Risk management in decentralized derivatives requires moving beyond static models to embrace dynamic, liquidity-aware execution strategies.

Despite these advancements, the adversarial nature of blockchain environments means that strategies are under constant pressure from predatory agents. Sophisticated actors monitor mempools to front-run these automated strategies, forcing them into disadvantageous positions. This reality demands a shift toward defensive programming, where the protocol itself assumes that all participants, including the algorithm, operate with the intent to maximize gain at the expense of system stability.

Evolution

The trajectory of these systems has shifted from simple execution scripts to complex, autonomous agents capable of adjusting to multi-chain liquidity environments.

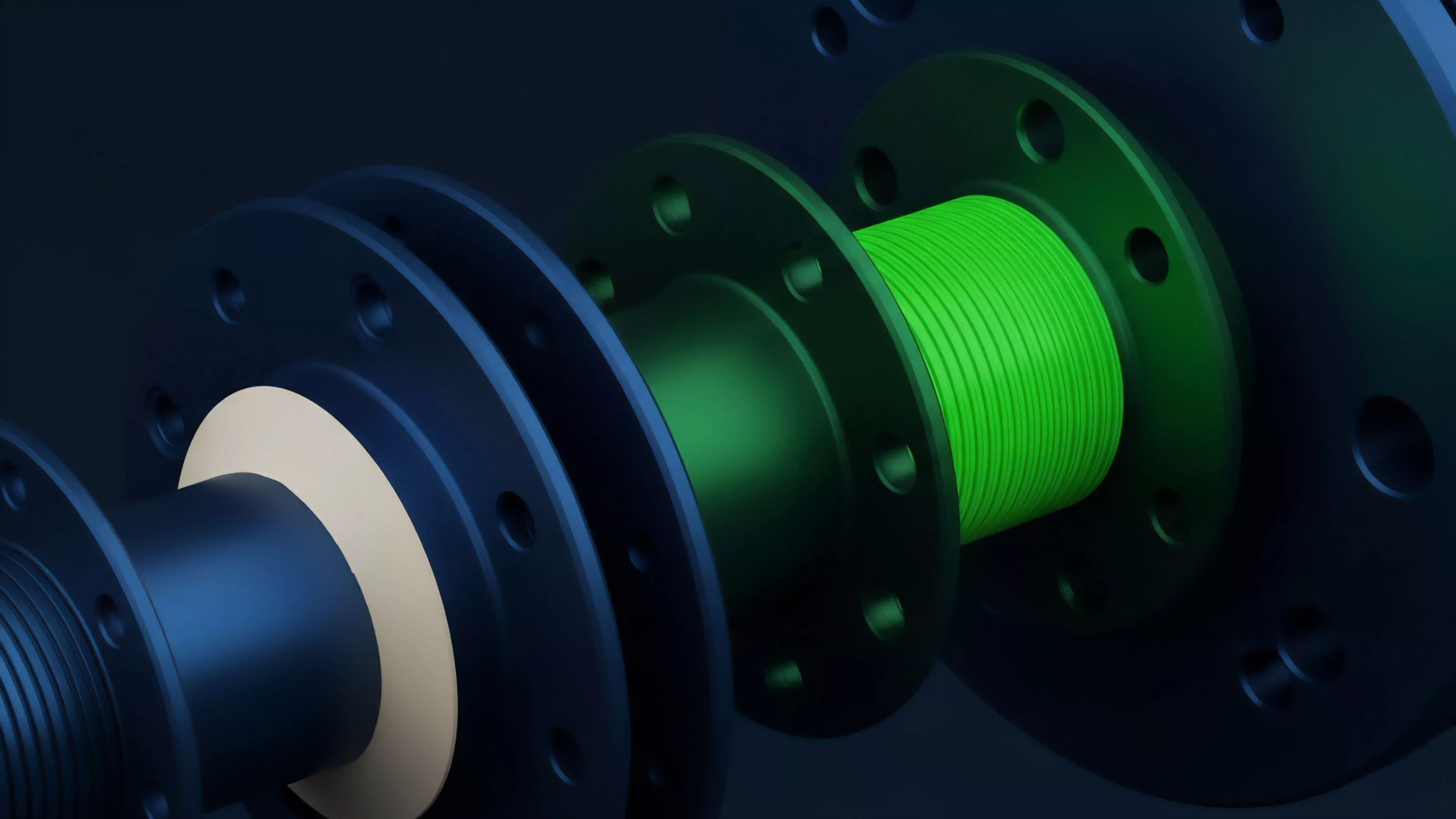

Early iterations relied on centralized APIs, which created a bottleneck and a single point of failure. Modern architectures leverage verifiable off-chain computation and data, using tools such as zero-knowledge proofs and decentralized oracle networks, so that the logic driving the trade can be checked as rigorously as the settlement layer itself. The evolution reflects a broader shift toward protocol-level risk mitigation rather than relying on individual user prudence.

By baking liquidation thresholds and volatility dampeners directly into the smart contract code, the system becomes self-healing. This change has moved the responsibility of stability from the trader to the protocol architect, ensuring that the market structure can survive even when individual algorithms fail. We are essentially building a financial machine that learns from its own malfunctions, though the cost of this learning remains high for participants caught in the crossfire.

Horizon

Future developments will likely involve the integration of predictive artificial intelligence that anticipates liquidity shifts before they manifest in price action.

These systems will not only execute trades but also simulate the impact of their own orders on the broader market microstructure before committing capital. This capability to perform internal, real-time stress testing will reduce the frequency of catastrophic failures. The next phase will see the standardization of risk parameters across disparate protocols, creating a more cohesive and resilient derivative ecosystem.

- Autonomous hedging agents will replace static risk management models to better handle tail-risk events.

- Predictive liquidity modeling will enable algorithms to avoid periods of market fragility.

- Cross-protocol settlement layers will standardize risk and collateral requirements across decentralized markets.

The ultimate goal is the creation of a market structure where algorithmic failure is a localized event rather than a systemic crisis. This will require a deeper understanding of the physics of decentralized finance, where code, incentives, and human psychology are treated as a single, interconnected system. My concern remains whether the pace of innovation will outstrip our ability to audit these increasingly autonomous financial agents.