Essence

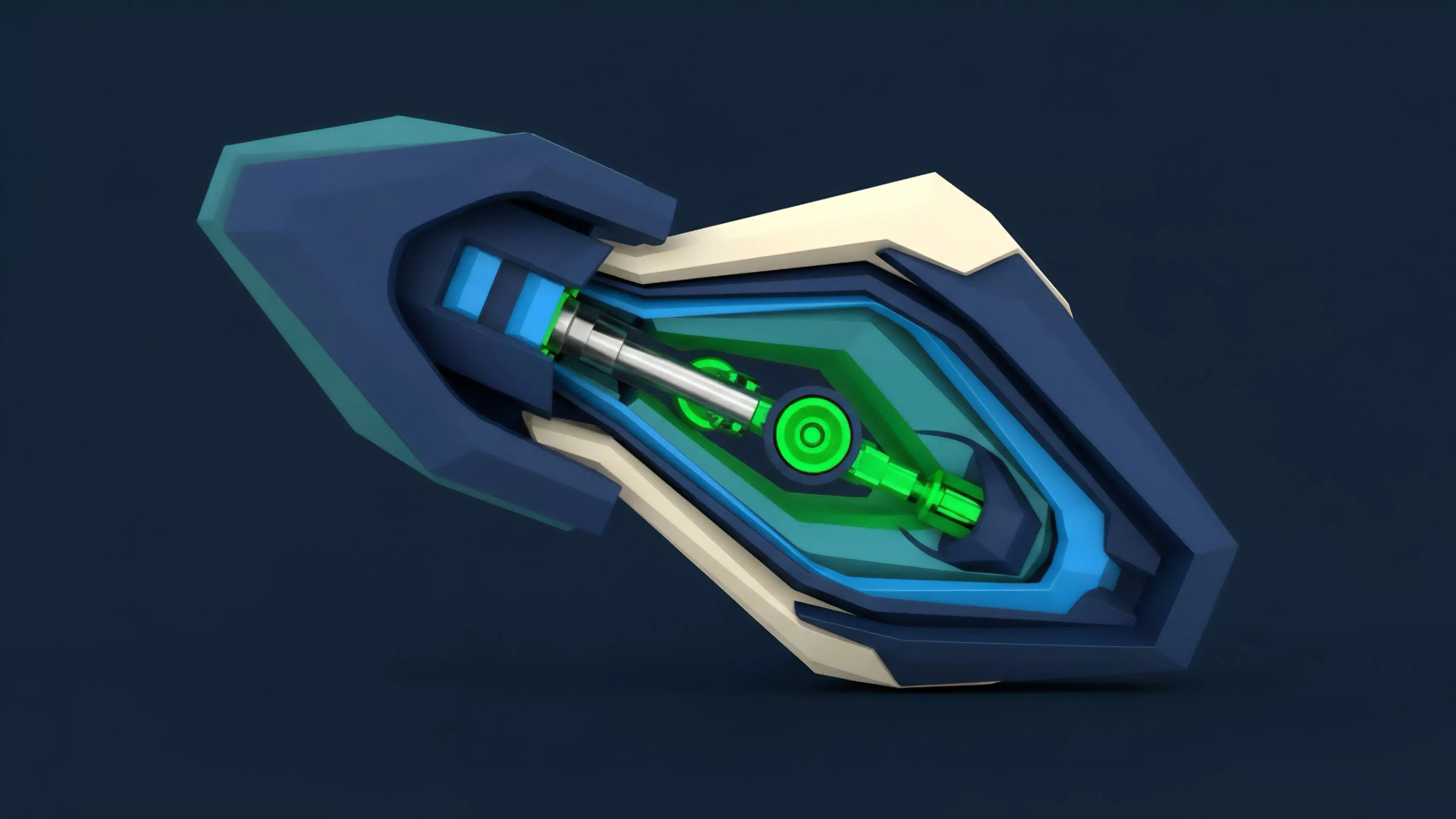

Algorithmic Performance Metrics function as the diagnostic architecture for automated trading systems, quantifying the efficacy of execution strategies within decentralized order books. These metrics distill high-frequency market data into actionable signals regarding capital efficiency, latency sensitivity, and risk-adjusted returns. By evaluating how an algorithm interacts with liquidity depth, slippage, and volatility, these indicators reveal the true capability of a strategy to generate alpha under adverse conditions.

Performance metrics quantify the interaction between automated execution logic and the underlying liquidity landscape of decentralized exchanges.

The primary utility of these metrics lies in their ability to strip away market noise, isolating the impact of specific order-routing decisions. Without precise measurement, strategy optimization remains speculative, leaving portfolios vulnerable to systemic inefficiencies inherent in automated market-making and arbitrage.

Origin

These metrics originated when traditional quantitative finance models were combined with the unique constraints of blockchain-based settlement. Early participants adapted Sharpe and Sortino ratios to assess returns, but these proved insufficient for the non-linear, around-the-clock nature of crypto markets.

The shift toward specialized metrics occurred as protocols evolved to prioritize Order Flow Toxicity and Liquidity Fragmentation, demanding a granular view of how trade execution impacts price discovery.

- Transaction Latency measures the duration between order submission and finality, highlighting bottlenecks in consensus mechanisms.

- Slippage Quantification tracks the difference between the expected execution price and the actual realized price, which widens during volatile periods.

- Fill Rate Analysis determines the percentage of limit orders executed relative to total order placement, reflecting market depth.
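
The three measurements above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Order` record and its field names are hypothetical, timestamps are assumed to be in seconds, and fill rate is assumed to be quantity-weighted (a count-based definition is equally plausible).

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Order:
    """Hypothetical order record; the field names are illustrative assumptions."""
    side: str                             # "buy" or "sell"
    quantity: float                       # size requested at submission
    expected_price: float                 # price quoted when the order was sent
    filled_quantity: float = 0.0          # size actually executed
    fill_price: Optional[float] = None    # average realized price, None if unfilled
    submitted_at: float = 0.0             # submission time, seconds
    finalized_at: Optional[float] = None  # finality time, None if never finalized


def slippage_bps(order: Order) -> Optional[float]:
    """Signed slippage in basis points; positive means worse than expected."""
    if order.fill_price is None:
        return None
    direction = 1.0 if order.side == "buy" else -1.0
    diff = order.fill_price - order.expected_price
    return direction * diff / order.expected_price * 1e4


def fill_rate(orders: Sequence[Order]) -> float:
    """Filled quantity as a fraction of total quantity placed."""
    placed = sum(o.quantity for o in orders)
    if placed == 0:
        return 0.0
    return sum(o.filled_quantity for o in orders) / placed


def mean_latency_seconds(orders: Sequence[Order]) -> Optional[float]:
    """Average time from submission to finality over finalized orders."""
    latencies = [o.finalized_at - o.submitted_at
                 for o in orders if o.finalized_at is not None]
    if not latencies:
        return None
    return sum(latencies) / len(latencies)
```

Signing slippage by trade direction keeps the metric comparable across buys and sells: a buy filled above its quote and a sell filled below its quote both register as positive cost.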

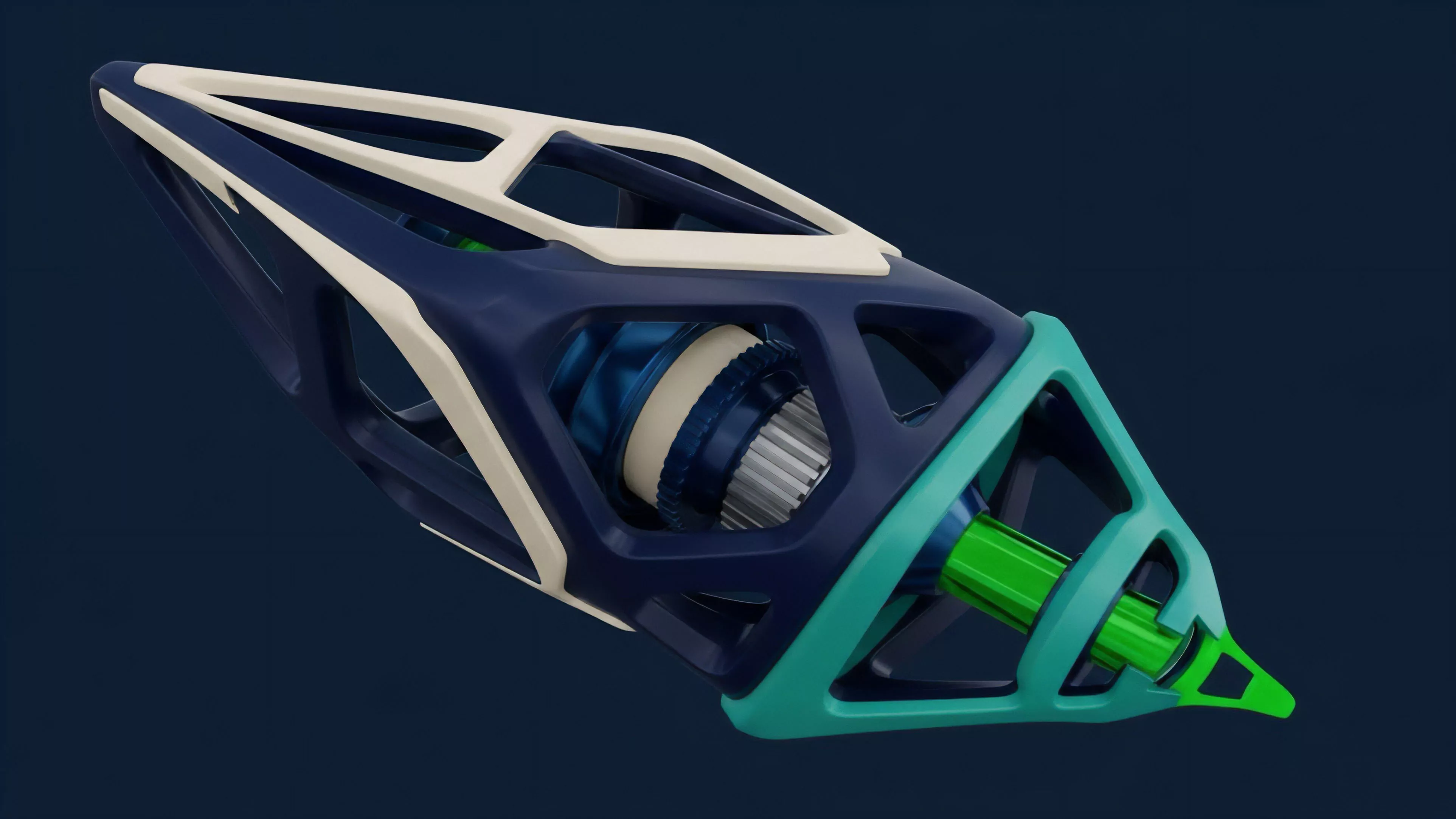

Market makers required deeper visibility into their interactions with decentralized infrastructure, forcing a departure from centralized exchange paradigms. The resulting metrics now serve as the foundation for evaluating how algorithms navigate the inherent latency of distributed ledgers.

Theory

The theoretical framework rests on the intersection of Market Microstructure and Protocol Physics. An algorithm operates within a space defined by specific consensus rules, where transaction ordering and block time directly influence the success of a strategy.

Analysts use the Greeks, specifically delta, gamma, and theta, to model how option-based strategies respond to moves in the underlying asset's price and to the passage of time.
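
As a concrete illustration of these sensitivities, the sketch below computes delta, gamma, and theta for a European call. It assumes the Black-Scholes model with no dividends, which the text does not specify; the function name and parameter names are illustrative, and theta is expressed per year.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, years: float,
                rate: float, vol: float) -> dict:
    """Black-Scholes delta, gamma, and theta (per year) of a European call."""
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    delta = norm_cdf(d1)                           # sensitivity to spot price
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)   # sensitivity of delta to spot
    theta = (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
             - rate * strike * math.exp(-rate * years) * norm_cdf(d2))
    return {"delta": delta, "gamma": gamma, "theta": theta}
```

For an at-the-money call with `spot=100`, `strike=100`, `years=1`, `rate=0.05`, and `vol=0.2`, this gives a delta of roughly 0.64 and a theta of roughly -6.41 per year. On-chain option protocols may price with different models, so these values are a baseline rather than a protocol-specific result.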

Risk management relies on the precise calibration of execution parameters against observed volatility and protocol-specific transaction constraints.

The adversarial nature of decentralized markets mandates that performance metrics account for potential front-running and MEV extraction. Strategy designers must weigh the cost of gas against the speed of execution, creating a multi-dimensional optimization problem.
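
One simplified way to frame the gas-versus-speed tradeoff is to pick the fee tier that minimizes gas paid plus the expected cost of adverse price drift while waiting for inclusion. The sketch below is a toy model under stated assumptions: the tiers, the linear drift cost, and the name `pick_fee_tier` are all hypothetical, and it ignores MEV and failed transactions.

```python
from typing import Sequence, Tuple

# Each tier is (gas_cost, expected_wait_seconds), with gas_cost in the same
# currency as notional. The numbers used with this function are illustrative.
FeeTier = Tuple[float, float]


def pick_fee_tier(tiers: Sequence[FeeTier], notional: float,
                  drift_bps_per_sec: float) -> FeeTier:
    """Return the tier with the lowest gas cost plus expected drift cost.

    Drift cost is assumed linear in waiting time:
    notional * drift_bps_per_sec * wait / 10_000.
    """
    def total_cost(tier: FeeTier) -> float:
        gas_cost, wait = tier
        return gas_cost + notional * drift_bps_per_sec * wait / 1e4

    return min(tiers, key=total_cost)
```

With tiers `[(2.0, 60.0), (5.0, 12.0), (12.0, 2.0)]` and a drift of 0.5 bps per second, a 10,000 notional trade selects the middle tier, while a 100 notional trade selects the cheapest one, since the drift cost scales with size but the gas cost does not.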

| Metric Category | Primary Focus | Financial Implication |
| --- | --- | --- |
| Execution Efficiency | Slippage and Latency | Direct impact on realized PnL |
| Risk Sensitivity | Delta and Gamma exposure | Portfolio stability under stress |
| Liquidity Utilization | Order book depth interaction | Capital efficiency and drawdown |

The mathematical rigor applied here mirrors the complexity of traditional derivative pricing, yet it accounts for the unique settlement delays of decentralized environments. Sometimes, the most effective models ignore standard volatility assumptions, favoring real-time order flow dynamics to predict short-term price shifts. This perspective acknowledges that market reality often diverges from theoretical models during high-stress liquidity events.

Approach

Modern strategy development involves continuous backtesting against historical order flow data to refine execution logic.

Analysts employ Automated Agents to simulate various market conditions, measuring performance across diverse liquidity scenarios. This approach prioritizes Real-time Monitoring, where dashboards track metrics like Volume Weighted Average Price (VWAP) against actual execution to detect drift in strategy performance.
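
A minimal sketch of the VWAP comparison described above: it computes the market VWAP over a window as the benchmark and reports how far the strategy's own fills deviate from it, in basis points. Trades are assumed to be `(price, volume)` pairs, and the function names are illustrative.

```python
from typing import Sequence, Tuple

Trade = Tuple[float, float]  # (price, volume); an assumed representation


def vwap(trades: Sequence[Trade]) -> float:
    """Volume-weighted average price: sum(price * volume) / sum(volume)."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume == 0:
        raise ValueError("VWAP is undefined when total volume is zero")
    return sum(price * volume for price, volume in trades) / total_volume


def execution_drift_bps(market_trades: Sequence[Trade],
                        own_fills: Sequence[Trade], side: str) -> float:
    """Deviation of achieved price from market VWAP, in basis points.

    Positive values mean the strategy did worse than the benchmark:
    it paid more on a buy or received less on a sell.
    """
    benchmark = vwap(market_trades)
    achieved = vwap(own_fills)
    direction = 1.0 if side == "buy" else -1.0
    return direction * (achieved - benchmark) / benchmark * 1e4
```

A dashboard could evaluate `execution_drift_bps` per window and flag the strategy when the value stays positive beyond a chosen tolerance; the tolerance itself is a design choice this sketch leaves open.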

- Dynamic Hedging adjusts portfolio exposure based on real-time sensitivity metrics to maintain neutral positioning.

- Liquidity Provision Analysis assesses the profitability of providing capital to decentralized pools by factoring in impermanent loss.

- Gas Cost Optimization integrates network fee fluctuations directly into the algorithm’s decision-making process.
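
To make the liquidity provision item concrete, the sketch below computes impermanent loss for a two-asset, 50/50 constant-product pool, where the loss relative to simply holding depends only on the ratio of the new price to the entry price. The pool type is an assumption, since the text does not name one, and the function names are illustrative.

```python
import math


def impermanent_loss(price_ratio: float) -> float:
    """Impermanent loss for a 50/50 constant-product pool.

    price_ratio is the current price divided by the price at deposit.
    The result is the fractional shortfall versus holding the assets,
    so it is zero or negative: 2 * sqrt(r) / (1 + r) - 1.
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return 2.0 * math.sqrt(price_ratio) / (1.0 + price_ratio) - 1.0


def net_lp_return(price_ratio: float, fee_yield: float) -> float:
    """Fees earned (as a fraction of the held value) plus impermanent loss.

    A simplification that treats fee income as additive to the loss term.
    """
    return fee_yield + impermanent_loss(price_ratio)
```

A price that quadruples or falls to a quarter of its entry level yields an impermanent loss of 20% in both cases, so a position with that price move needs more than 20% in accumulated fees to outperform holding.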

This data-driven methodology helps strategies remain robust as protocol designs evolve. Decisions rest on empirical evidence rather than theoretical expectation, which makes adaptability essential in volatile environments.

Evolution

Development has moved from basic profit tracking to complex, multi-factor analysis of system health. Initially, strategies focused solely on price capture, but the rise of complex derivative protocols necessitated the inclusion of Collateral Efficiency and Liquidation Risk metrics.

This transition reflects the maturing of the sector, where capital preservation through sophisticated risk modeling is as critical as profit generation.

Sophisticated metrics now account for the interdependency between protocol security and the liquidity of underlying derivative assets.

As the industry matures, the focus has shifted toward Cross-Protocol Contagion, where the performance of an algorithm is measured not only by its own results but by its resilience to failures in external, interconnected systems. This broader view of systemic risk represents a significant advancement in how we define and manage financial stability in decentralized finance.

Horizon

Future developments will center on the integration of Artificial Intelligence for predictive execution, where algorithms learn to anticipate liquidity shifts before they manifest in the order book. We are moving toward Autonomous Risk Engines capable of rebalancing portfolios instantaneously across fragmented liquidity venues.

This trajectory points to a highly efficient, yet increasingly complex, financial landscape where the gap between automated strategy and market reality narrows significantly.

| Emerging Trend | Technological Driver | Market Impact |
| --- | --- | --- |
| Predictive Execution | Machine Learning Agents | Reduced impact of price slippage |
| Cross-Chain Arbitrage | Interoperability Protocols | Convergence of global asset pricing |
| Automated Governance | On-chain Risk Parameters | Adaptive protocol-level risk control |

The ultimate goal remains the creation of systems that survive and prosper within adversarial environments, leveraging data to maintain edge while minimizing exposure to systemic failure. The evolution of these metrics will define the winners in the next cycle of decentralized financial growth.