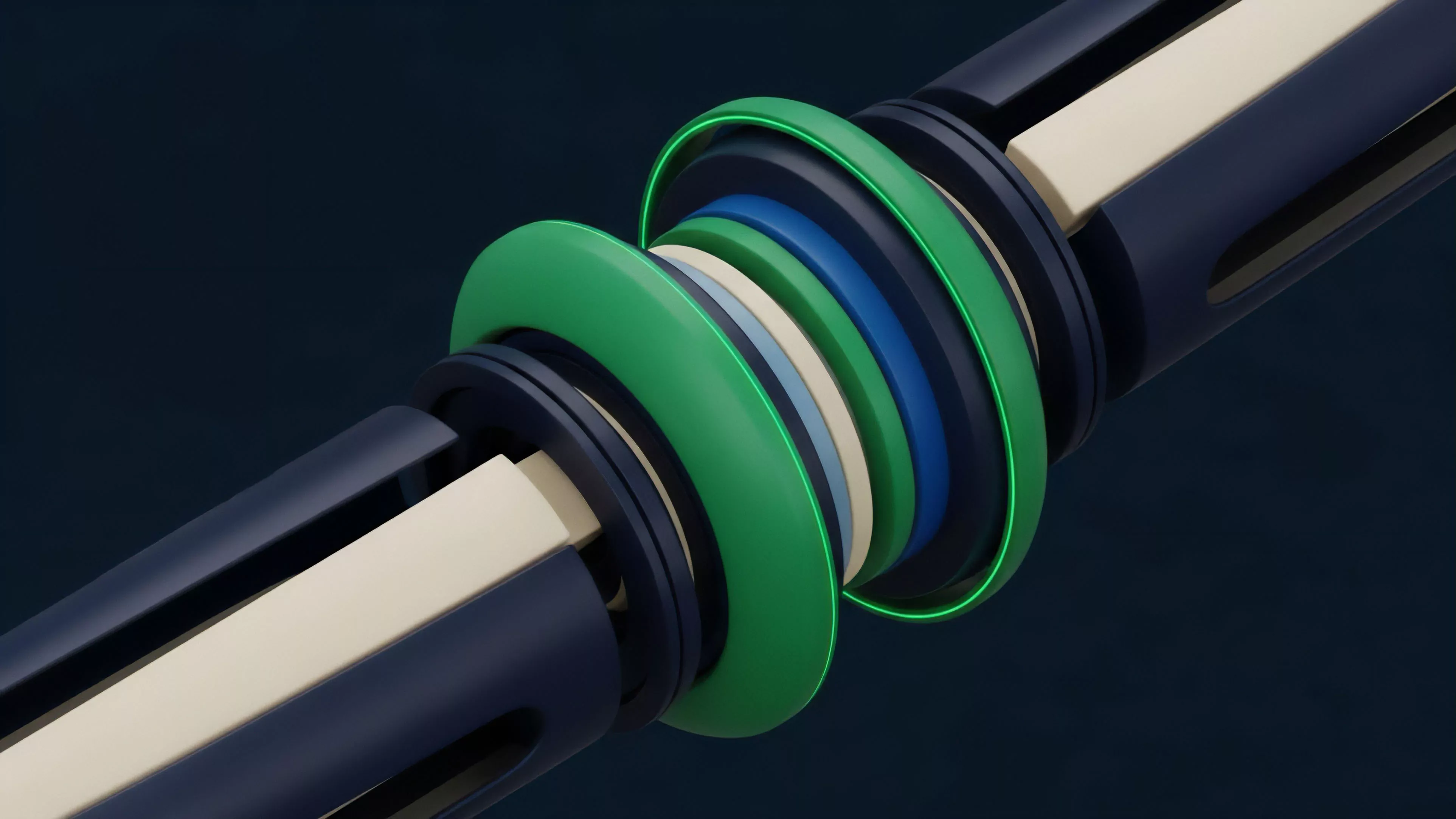

Pipeline Parallelism

Meaning ⎊ A hardware design technique that splits a task into sequential stages which operate concurrently on different data items, increasing data processing throughput.
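
The staging idea can be sketched in software, as an analogy rather than a hardware design. In this minimal sketch each stage runs in its own thread and hands results to the next stage through a queue, so stage 2 can work on one item while stage 1 is already processing the next. The names `stage`, `run_pipeline`, and `SENTINEL` are hypothetical and chosen only for illustration.

```python
import queue
import threading

SENTINEL = object()  # hypothetical end-of-stream marker


def stage(func, inbox, outbox):
    """Apply func to every item from inbox and forward the result to outbox."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # propagate shutdown to the next stage
            break
        outbox.put(func(item))


def run_pipeline(items, funcs):
    """Run items through one thread per stage; results keep input order."""
    queues = [queue.Queue() for _ in range(len(funcs) + 1)]
    workers = [
        threading.Thread(target=stage, args=(f, queues[i], queues[i + 1]))
        for i, f in enumerate(funcs)
    ]
    for worker in workers:
        worker.start()

    for item in items:
        queues[0].put(item)
    queues[0].put(SENTINEL)

    results = []
    while True:
        out = queues[-1].get()
        if out is SENTINEL:
            break
        results.append(out)

    for worker in workers:
        worker.join()
    return results
```

Calling `run_pipeline([1, 2, 3], [lambda x: x + 1, lambda x: x * 2])` passes each value through both stages in turn. In real hardware the stages would be logic blocks separated by registers, not threads.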

FPGA Trading Acceleration

Meaning ⎊ Hardware-based logic implementation that allows for ultra-fast, parallelized execution of complex trading algorithms.

Hardware Performance Standards

Meaning ⎊ Benchmarks defining the computational speed and network efficiency required for low-latency financial execution.

Latency Compensation

Meaning ⎊ Techniques used to neutralize the speed advantage gained by traders physically closer to exchange matching engines.

Engine Transparency

Meaning ⎊ Open visibility into internal logic and execution rules to ensure verifiable fairness and trust in trading systems.

Exchange Connectivity Risks

Meaning ⎊ Operational hazards related to the stability, speed, and reliability of connections between trading systems and exchanges.

Network Interface Cards

Meaning ⎊ Network Interface Cards provide the essential low-latency hardware foundation for high-frequency execution in competitive crypto derivative markets.

Low Latency Drivers

Meaning ⎊ Software drivers specifically engineered to minimize delay when communicating with hardware components.

Resource Contention

Meaning ⎊ Competition between system processes for shared hardware resources, leading to potential performance degradation.

Scheduler Tuning

Meaning ⎊ Adjusting operating system scheduling parameters to optimize performance for specific high-priority workloads.

NUMA Architecture

Meaning ⎊ A computer memory design in which access time depends on where the memory sits relative to the processor: memory local to a processor is faster to reach than memory attached to another processor.

Cache Locality

Meaning ⎊ Designing data structures and access patterns to keep frequently used data in high-speed CPU caches.

CPU Affinity

Meaning ⎊ Binding a software process to a specific processor core to improve cache performance and stability.
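
A minimal sketch of the binding step, assuming a Linux host: Python's `os.sched_setaffinity` restricts the calling process to a given set of cores. The helper name `pin_to_core` is hypothetical, and the call is not available on every platform, so the sketch checks for it first.

```python
import os


def pin_to_core(core_id):
    """Pin the calling process to one core and return the resulting affinity set."""
    if not hasattr(os, "sched_setaffinity"):
        raise NotImplementedError("sched_setaffinity is not available on this platform")

    allowed = os.sched_getaffinity(0)  # cores this process may currently use
    if core_id not in allowed:
        raise ValueError(f"core {core_id} is not in the allowed set {sorted(allowed)}")

    os.sched_setaffinity(0, {core_id})  # pid 0 means the calling process
    return os.sched_getaffinity(0)
```

Pinning alone does not stop other processes from being scheduled on the same core; that is what core isolation addresses.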

Process Scheduling

Meaning ⎊ The operating system logic that determines which tasks are executed by the CPU and in what order.

SoftIRQ Processing

Meaning ⎊ Handling deferred tasks in the kernel that were triggered by hardware events but do not require immediate response.

Hardware Interrupts

Meaning ⎊ Signals from hardware devices that force the CPU to pause current tasks to handle immediate requests.

CPU Core Isolation

Meaning ⎊ Reserving specific processor cores for critical tasks to eliminate interference from other background processes.

Interrupt Latency

Meaning ⎊ The delay between a hardware signal and the start of its processing by the operating system.

High Frequency Trading Hardware

Meaning ⎊ Advanced, specialized computing equipment built to execute trading algorithms and orders with maximum speed and efficiency.

Penny Jumping

Meaning ⎊ The act of placing an order one price increment better than an existing order to gain execution priority ahead of it.
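
The price arithmetic can be illustrated with a short sketch. It assumes the improvement is exactly one tick (the venue's minimum price increment); the function name `penny_jump_price` and the `"buy"`/`"sell"` labels are hypothetical. `Decimal` is used to avoid binary floating-point rounding on prices.

```python
from decimal import Decimal


def penny_jump_price(resting_price, tick_size, side):
    """Return the price one tick more aggressive than a resting order."""
    price = Decimal(resting_price)
    tick = Decimal(tick_size)
    if tick <= 0:
        raise ValueError("tick_size must be positive")
    if side == "buy":
        return price + tick  # bid one tick higher than the resting bid
    if side == "sell":
        return price - tick  # offer one tick lower than the resting offer
    raise ValueError("side must be 'buy' or 'sell'")
```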

Arbitrage Window Reduction

Meaning ⎊ The shrinking, as markets mature, of the time frame during which arbitrageurs can exploit price inefficiencies.

Intent-Based Trading Systems

Meaning ⎊ Intent-based trading systems automate complex execution pathways to achieve user-defined financial objectives within decentralized market architectures.

Memory Management Techniques

Meaning ⎊ Memory management techniques define the latency and scalability of decentralized derivative protocols by optimizing state and order book processing.

Privacy-Preserving Order Flow

Meaning ⎊ Architectural design ensuring trade intent and order size remain hidden from public view until final execution.

Latency Monitoring Tools

Meaning ⎊ Latency monitoring tools quantify network propagation delays to manage execution risk and optimize strategy performance in decentralized derivatives.
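
As a rough sketch of the quantification step, the code below times a call and summarizes a list of latency samples by percentile. It is not a description of any particular monitoring tool: the helper names are hypothetical, the percentile uses the simple nearest-rank method, and a real tool would time network round trips rather than a local callable.

```python
import math
import time


def time_call_ns(func):
    """Return the elapsed wall-clock time of func() in nanoseconds."""
    start = time.perf_counter_ns()
    func()
    return time.perf_counter_ns() - start


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def summarize(samples):
    """Summarize latency samples by median, tail, and worst case."""
    return {
        "p50": percentile(samples, 50),
        "p99": percentile(samples, 99),
        "max": max(samples),
    }
```

Tail percentiles such as p99 are reported alongside the median because occasional slow responses, not the typical case, tend to drive execution risk.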

Real-Time Operating Systems

Meaning ⎊ Deterministic computing environments that guarantee bounded, predictable task completion times, down to the microsecond level, for high-frequency financial execution.

Direct Memory Access Transfers

Meaning ⎊ Data transfer between a hardware device and memory without the CPU copying the data itself, enabling high-speed data ingestion and processing.

Cache Locality Optimization

Meaning ⎊ Organizing data to maximize CPU cache hits, significantly reducing latency by avoiding slow main memory access.

Shared Memory Inter-Process Communication

Meaning ⎊ A method where multiple processes share a memory region for ultra-fast, zero-copy data exchange.
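
A minimal sketch of the idea, using Python's standard `multiprocessing.shared_memory` module. For brevity both handles live in one process; in practice the reader would attach by name from a separate process. The single-float layout (`PRICE_FORMAT`) and the helper names are assumptions made for illustration, and the sketch omits the synchronization a real system would need between writer and reader.

```python
import struct
from multiprocessing import shared_memory

PRICE_FORMAT = "<d"  # hypothetical layout: one little-endian float64


def write_price(buf, price):
    """Write a price directly into the shared buffer at offset 0."""
    struct.pack_into(PRICE_FORMAT, buf, 0, price)


def read_price(buf):
    """Read the price back from the shared buffer at offset 0."""
    return struct.unpack_from(PRICE_FORMAT, buf, 0)[0]


def demo():
    """Create a segment, attach a second handle by name, and pass one value."""
    writer = shared_memory.SharedMemory(
        create=True, size=struct.calcsize(PRICE_FORMAT)
    )
    try:
        reader = shared_memory.SharedMemory(name=writer.name)  # attach by name
        try:
            write_price(writer.buf, 101.25)
            return read_price(reader.buf)
        finally:
            reader.close()
    finally:
        writer.close()
        writer.unlink()  # release the segment once no handle needs it
```

Both handles map the same memory region, so the value written through `writer` is visible through `reader` without being sent over a pipe or socket.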