Automated Risk Control

Meaning ⎊ Automated Risk Control maintains decentralized protocol solvency by programmatically enforcing collateral and liquidation standards in real-time.

Stochastic Oscillator

Meaning ⎊ A momentum indicator that compares a closing price to the high-low price range over a lookback period to identify overbought or oversold market levels.
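
As a rough illustration of the comparison described above, the %K line of the indicator can be sketched as the position of the latest close within the high-low range of a lookback window, scaled to 0-100. The function name `stochastic_k`, the default 14-bar window, and the handling of a flat range are assumptions made for this sketch, not part of the definition. Readings above about 80 and below about 20 are conventionally read as overbought and oversold.

```python
def stochastic_k(highs, lows, closes, period=14):
    """Return %K per bar; None until a full lookback window is available.

    %K = 100 * (close - lowest_low) / (highest_high - lowest_low)
    """
    if not (len(highs) == len(lows) == len(closes)):
        raise ValueError("highs, lows and closes must have equal length")
    if period < 1:
        raise ValueError("period must be at least 1")

    values = []
    for i in range(len(closes)):
        if i + 1 < period:
            values.append(None)  # not enough history yet
            continue
        window = slice(i + 1 - period, i + 1)
        highest_high = max(highs[window])
        lowest_low = min(lows[window])
        if highest_high == lowest_low:
            # Assumption: report the midpoint when the range is flat,
            # since the ratio is undefined.
            values.append(50.0)
        else:
            values.append(100.0 * (closes[i] - lowest_low) / (highest_high - lowest_low))
    return values
```

With a three-bar window, a close that sits exactly halfway between the window's lowest low and highest high gives a reading of 50.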

Vega Exposure Control

Meaning ⎊ Vega Exposure Control manages portfolio sensitivity to volatility shifts, ensuring stability and risk mitigation within decentralized derivative markets.

Slippage Control Mechanisms

Meaning ⎊ Slippage control mechanisms limit how far a trade's executed price may deviate from its intended price, given the mechanical reality of decentralized liquidity.

Capital Efficiency Problem

Meaning ⎊ The capital efficiency problem concerns optimizing how collateral is used within decentralized derivatives to maximize liquidity and market resilience.

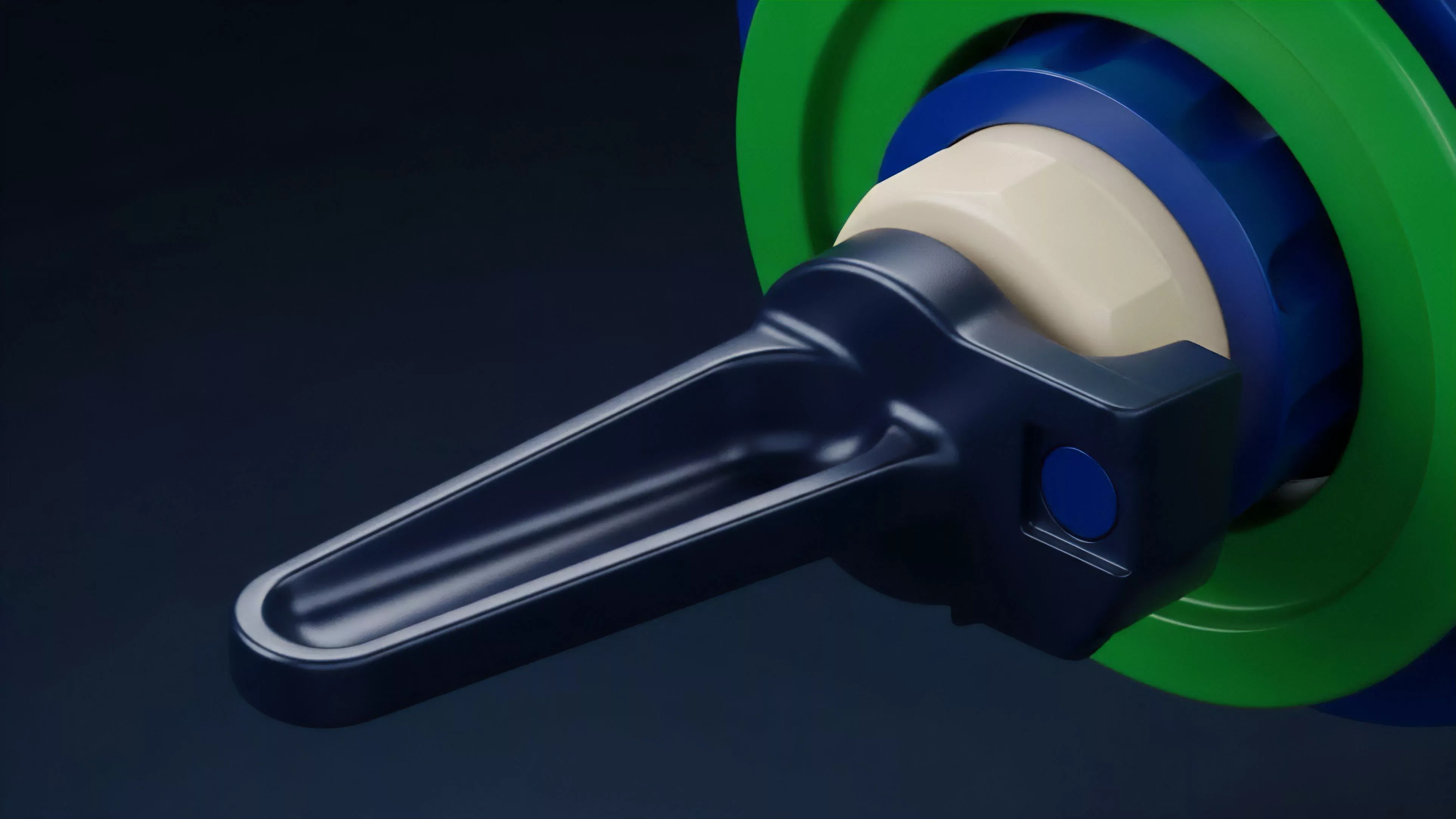

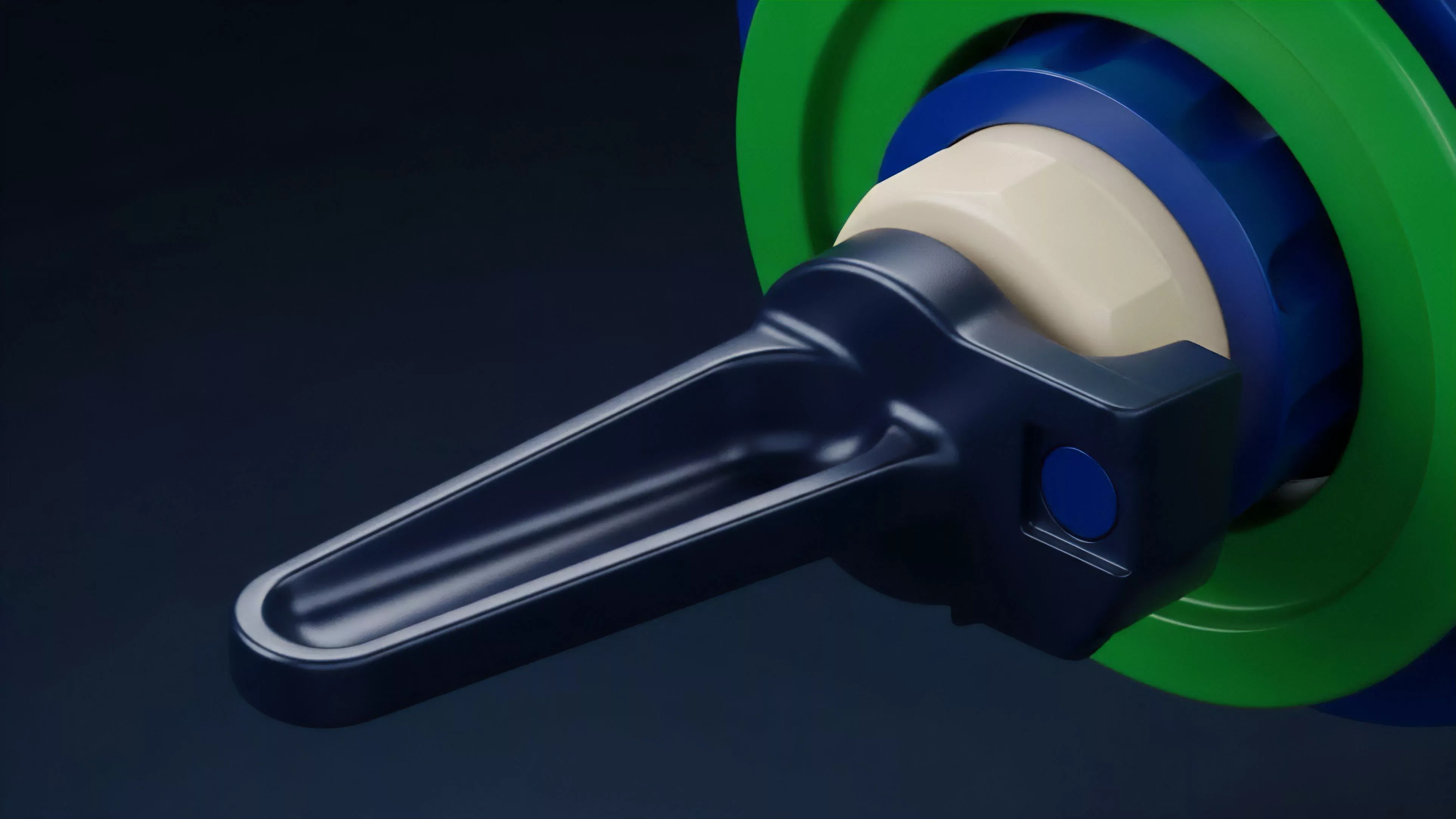

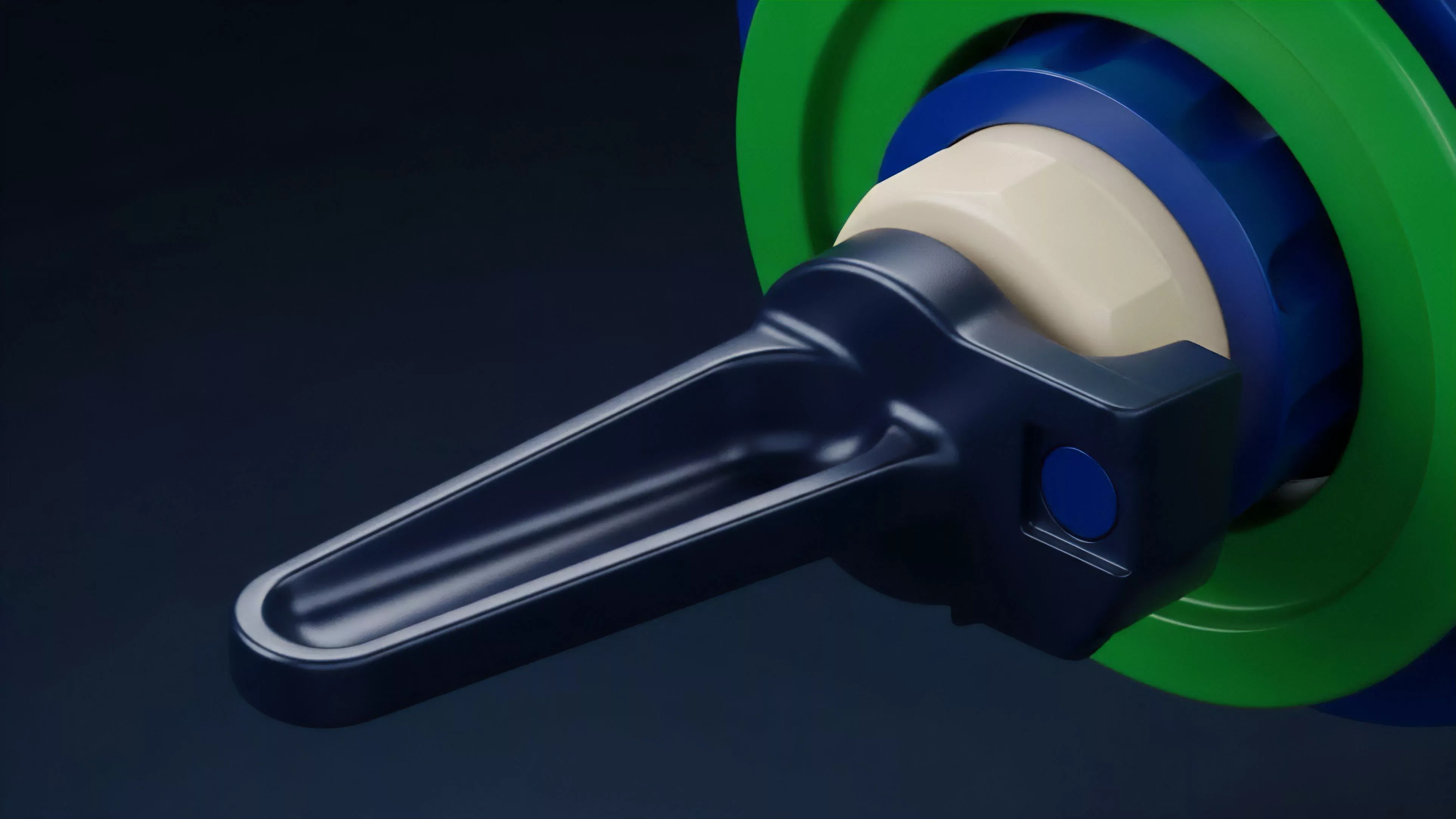

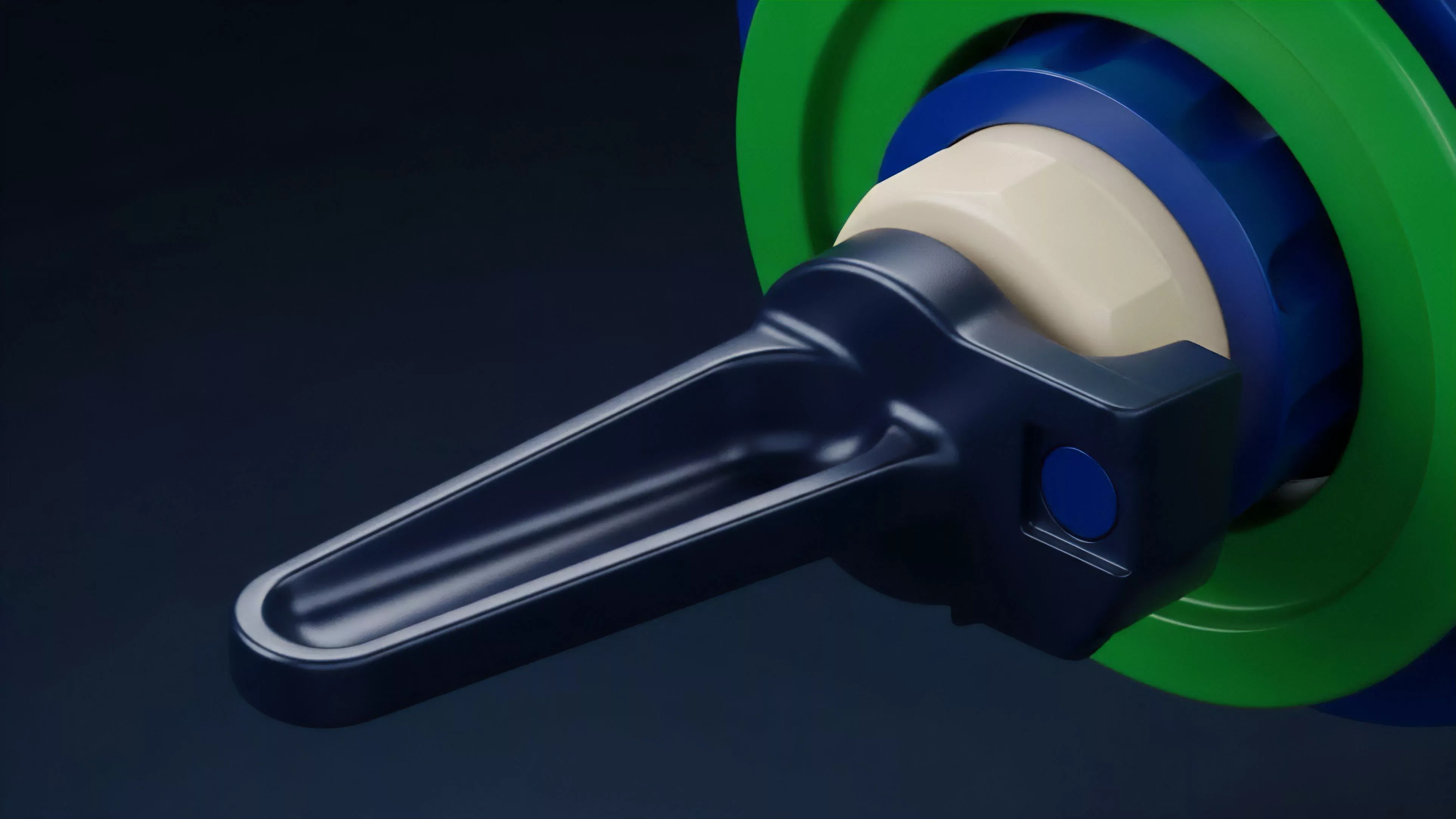

Order Book Order Flow Control System Design

Meaning ⎊ Order Book Order Flow Control System Design provides the deterministic, transparent framework required for efficient price discovery in decentralized markets.

Order Flow Control Systems

Meaning ⎊ Order Flow Control Systems govern transaction sequencing to optimize trade execution, mitigate adversarial extraction, and enhance liquidity efficiency.

Order Book Order Flow Control System Design and Implementation

Meaning ⎊ Order Book Order Flow Control manages the efficient, secure, and fair matching of derivative trades within decentralized financial environments.

Point of Control

Meaning ⎊ The single price level with the highest traded volume during a specified period, indicating the strongest market consensus.
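
A minimal sketch of how this level might be found from a list of trades: bucket volume by price level, then take the level with the largest total. The function name `point_of_control`, the `(price, volume)` tuple format, the `tick_size` bucketing, and the tie-break toward the lower price are all assumptions chosen for illustration.

```python
from collections import defaultdict


def point_of_control(trades, tick_size=1.0):
    """Return the price level with the highest total traded volume.

    trades: iterable of (price, volume) pairs for the period of interest.
    tick_size: width of each price bucket (hypothetical parameter).
    """
    volume_by_level = defaultdict(float)
    for price, volume in trades:
        # Snap each trade to its nearest price bucket.
        level = round(round(price / tick_size) * tick_size, 10)
        volume_by_level[level] += volume

    if not volume_by_level:
        raise ValueError("no trades supplied")

    # Highest volume wins; ties resolve to the lower price (an assumption).
    return max(volume_by_level.items(), key=lambda kv: (kv[1], -kv[0]))[0]
```

For example, trades of 5 and 4 units at 100, 3 units at 101, and 8 units at 102 put the Point of Control at 100, because that level accumulates 9 units in total.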

Stochastic Game Theory

Meaning ⎊ Stochastic Game Theory enables the construction of resilient decentralized financial systems by modeling interactions under persistent uncertainty.

Portfolio Control

Meaning ⎊ The active management of asset allocations and risk exposure to achieve defined financial goals within volatile markets.

Role-Based Access Control Systems

Meaning ⎊ Role-Based Access Control Systems secure decentralized protocols by restricting administrative power to granular, auditable, and predefined functions.